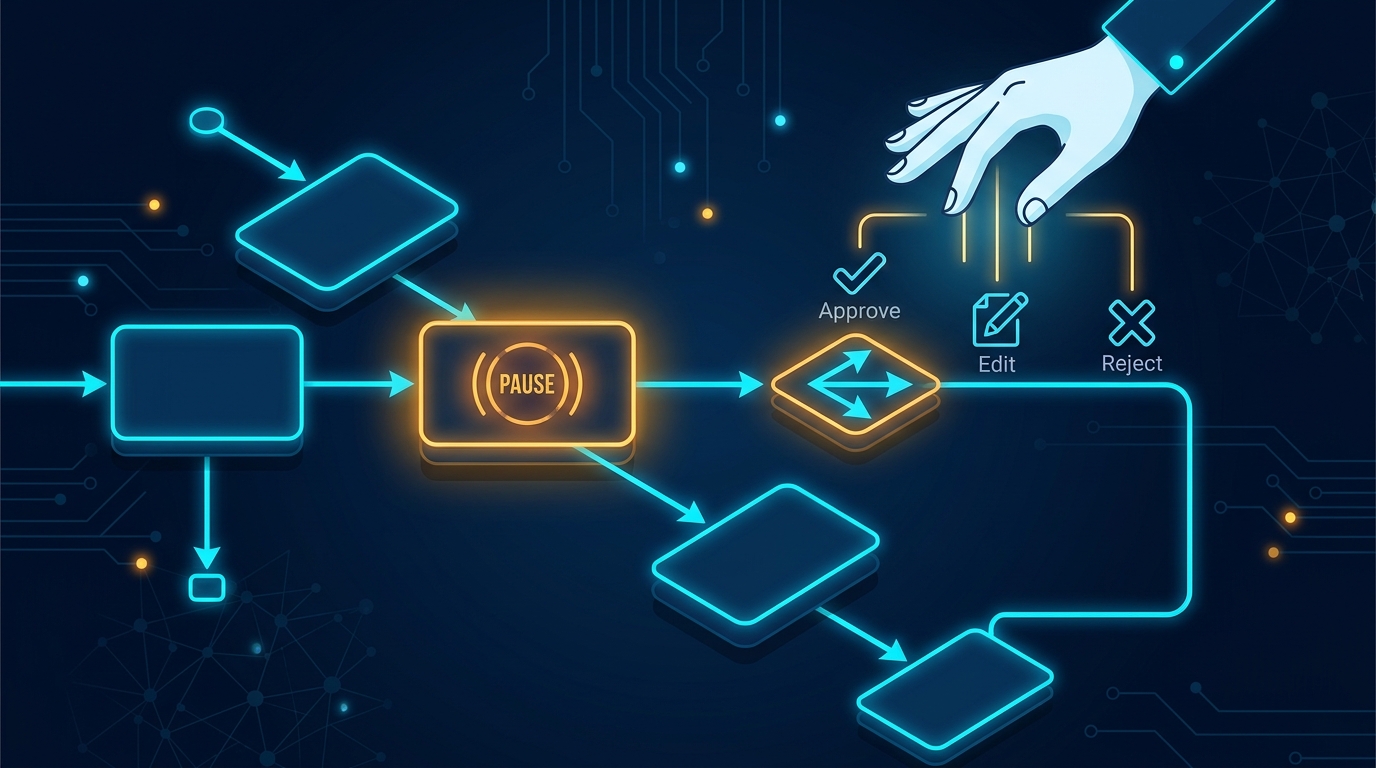

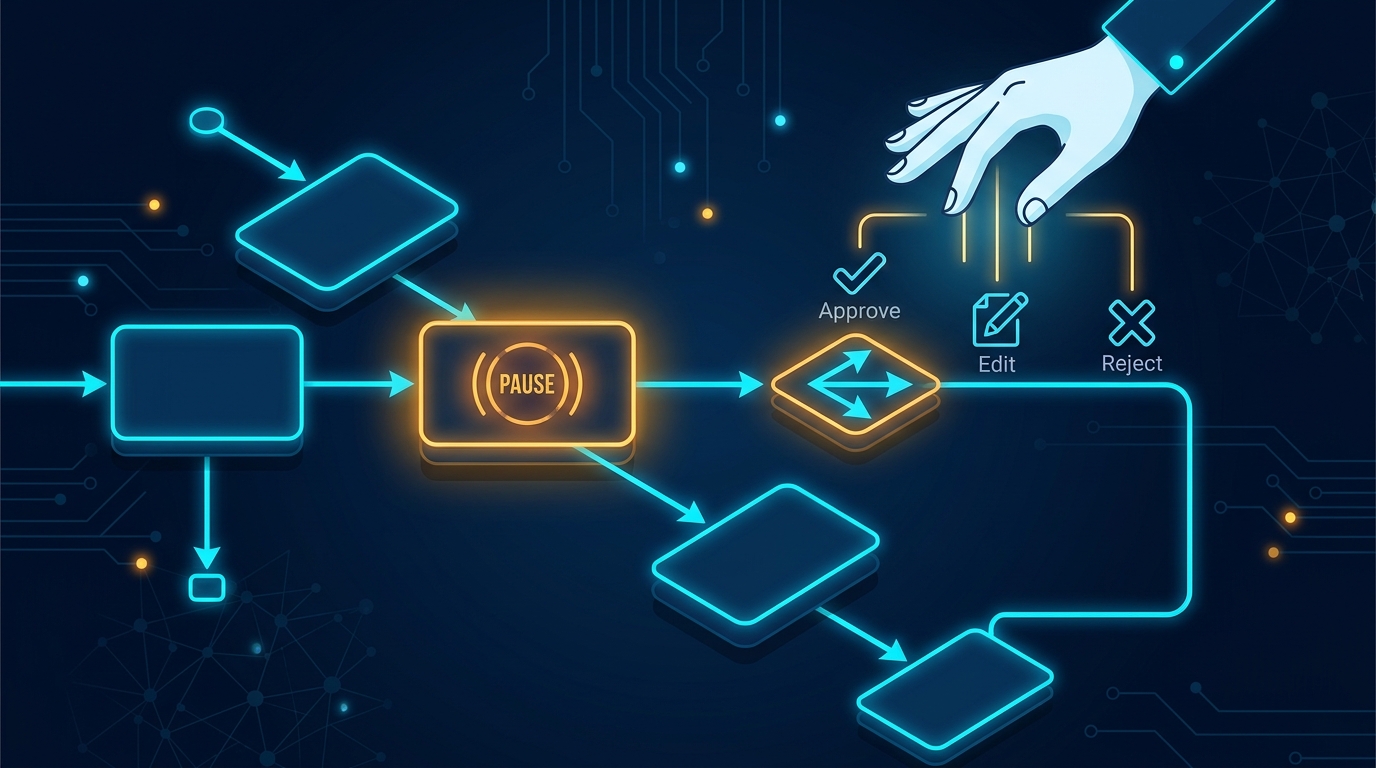

LangGraph Human-in-the-Loop: Interrupt Patterns in Python

Build approval workflows with LangGraph interrupt() and Command(). Step-by-step tutorial with approve, reject, and edit patterns.

We’ve built dozens of domain-specific agents at Turion, and retail is one of the most under-explored verticals in agent tutorials. Most LangGraph examples stop at generic chatbots or weather agents. A real retail context needs inventory lookups, order tracking, returns processing, and escalation logic — all stateful, all with real tools.

In this tutorial we’ll build a production-grade retail AI agent from scratch using LangGraph’s StateGraph. The agent will understand customer intent, query a simulated inventory system, process orders, handle returns, and know when to escalate to a human agent.

If you’re new to LangGraph, start with our first LangGraph tutorial for fundamentals. When you’re ready to ship this to users, read our production deployment guide.

Requirements: Python 3.10+, an OpenAI API key (or any LangChain-compatible chat model provider), and about 15 minutes.

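Our agent exposes four tools behind a single StateGraph:

- check_inventory: stock and price lookup by product name or SKU
- track_order: order status and estimated delivery date
- process_return: starts a return for an eligible order
- escalate_to_human: hands a complex case to a human representative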

The graph routes between these based on the LLM’s tool calls, maintaining conversation state via the messages key. A conditional edge decides whether to loop back to the agent or terminate.

mkdir retail-agent && cd retail-agent

python -m venv .venv

source .venv/bin/activate # Windows: .venv\Scripts\activate

pip install langgraph langchain-openai

Export your API key:

export OPENAI_API_KEY="sk-..."

StateGraph takes a state schema, typically a TypedDict, that describes every piece of data flowing through the graph. For retail we need the conversation messages, the current order and product context (if any), and a flag for human escalation.

from typing import Annotated, Optional, TypedDict

from langgraph.graph.message import add_messages


class RetailAgentState(TypedDict):
    messages: Annotated[list, add_messages]  # reducer: append instead of overwrite
    order_id: Optional[str]
    product_sku: Optional[str]
    requires_human: bool

The add_messages annotation tells LangGraph to append new messages to the list rather than overwrite them — this is what gives the agent conversation memory across graph cycles.
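To make the append-versus-overwrite difference concrete, here is a minimal standard-library sketch of what a reducer does. It is a simplified stand-in, not LangGraph's implementation: `append_messages` and `overwrite` are hypothetical names, and plain dicts stand in for message objects. It does mirror one real behavior of `add_messages`: an update whose ID matches an existing message replaces that message instead of being appended.

```python
from typing import Any


def append_messages(existing: list[dict[str, Any]], new: list[dict[str, Any]]) -> list[dict[str, Any]]:
    """Simplified stand-in for a message reducer: append, or replace by matching id."""
    merged = list(existing)  # never mutate the previous state in place
    index_by_id = {m["id"]: i for i, m in enumerate(merged) if m.get("id") is not None}
    for message in new:
        message_id = message.get("id")
        if message_id is not None and message_id in index_by_id:
            merged[index_by_id[message_id]] = message  # same id: update in place
        else:
            if message_id is not None:
                index_by_id[message_id] = len(merged)
            merged.append(message)
    return merged


def overwrite(existing, new):
    """What a key without a reducer does: the latest write wins."""
    return new


history = [{"id": "1", "role": "user", "content": "Where is my order?"}]
update = [{"id": "2", "role": "assistant", "content": "Let me check."}]

print(len(append_messages(history, update)))  # 2: history is preserved
print(len(overwrite(history, update)))        # 1: history is lost
```

Without the reducer, each node's return value would replace the whole messages list, and the model would only ever see the most recent message.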

The tools below represent the retail backend the agent will call. In production you’d wire them to your actual POS or ERP system. Here we simulate them with deterministic data so the code runs end-to-end.

from datetime import datetime, timedelta

from langchain_core.tools import tool


@tool
def check_inventory(product_name: str) -> str:
    """Check stock availability for a product by name or SKU."""
    inventory = {
        "wireless-headphones": {"name": "Wireless Headphones Pro", "sku": "WHP-200", "stock": 42, "price": 79.99},
        "usb-c-cable": {"name": "USB-C Charging Cable", "sku": "UCC-100", "stock": 0, "price": 12.99},
        "laptop-stand": {"name": "Adjustable Laptop Stand", "sku": "ALS-300", "stock": 15, "price": 49.99},
        "mechanical-keyboard": {"name": "Mechanical Keyboard RGB", "sku": "MKR-450", "stock": 7, "price": 129.99},
    }
    query = product_name.strip().lower()
    # Match the slug key first, then fall back to the display name or SKU,
    # so "Wireless Headphones Pro" and "WHP-200" resolve as the docstring promises.
    item = inventory.get(query.replace(" ", "-")) or next(
        (i for i in inventory.values() if query in (i["name"].lower(), i["sku"].lower())),
        None,
    )
    if item is None:
        return (
            f"No product found matching '{product_name}'. "
            "Try: wireless-headphones, usb-c-cable, laptop-stand, mechanical-keyboard."
        )
    if item["stock"] > 0:
        return (
            f"{item['name']} (SKU: {item['sku']}) - "
            f"${item['price']:.2f}, {item['stock']} units in stock."
        )
    return f"{item['name']} (SKU: {item['sku']}) - currently out of stock."

@tool
def track_order(order_id: str) -> str:
    """Look up an order status and estimated delivery date."""
    # Dates are relative to "now" so the simulated data always looks current.
    orders = {
        "ORD-78432": {"status": "shipped", "eta": (datetime.now() + timedelta(days=2)).strftime("%Y-%m-%d"), "carrier": "FedEx", "tracking": "1Z999AA10123456784"},
        "ORD-11002": {"status": "processing", "eta": (datetime.now() + timedelta(days=5)).strftime("%Y-%m-%d"), "carrier": "Pending"},
        "ORD-55601": {"status": "delivered", "delivered_date": (datetime.now() - timedelta(days=3)).strftime("%Y-%m-%d")},
    }
    order = orders.get(order_id)
    if not order:
        return f"Order {order_id} not found. Valid examples: ORD-78432, ORD-11002, ORD-55601."
    if order["status"] == "delivered":
        return f"Order {order_id} was delivered on {order['delivered_date']}."
    return (
        f"Order {order_id} - Status: {order['status']}. "
        f"Estimated delivery: {order['eta']}. "
        f"Carrier: {order.get('carrier', 'N/A')}. "
        f"Tracking: {order.get('tracking', 'N/A')}."
    )

@tool
def process_return(order_id: str, reason: str = "customer request") -> str:
    """Initiate a return for an eligible order."""
    # Simulated eligibility list; a real system would check delivery status and date.
    eligible = {"ORD-55601", "ORD-44109"}
    if order_id not in eligible:
        return (
            f"Order {order_id} is not eligible for return. "
            "Only delivered orders (e.g., ORD-55601) can be returned within 30 days."
        )
    return (
        f"Return initiated for order {order_id}. Reason: {reason}. "
        "Refund will be processed within 5-7 business days. "
        "Return label sent to customer email."
    )

@tool
def escalate_to_human(summary: str) -> str:
    """Escalate a complex customer issue to a human representative."""
    return (
        f"Case escalated. Summary: {summary}. "
        "A human agent will respond within 15 minutes during business hours."
    )


tools = [check_inventory, track_order, process_return, escalate_to_human]

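Note the import path: from langchain_core.tools import tool is the canonical way to define tools in LangChain and LangGraph as of 2025 (see the LangChain docs). The decorator builds each tool’s name, description, and argument schema from the function’s name, docstring, and type hints, so keep all three accurate.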
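The simulated process_return tool uses a hardcoded set of eligible order IDs. When wiring it to a real backend, the 30-day rule it mentions could be checked in code rather than left to the model. The following is a minimal sketch under stated assumptions: `is_return_eligible` and `RETURN_WINDOW_DAYS` are hypothetical names, and the order status and delivery date are assumed to come from your order system.

```python
from datetime import date, timedelta
from typing import Optional

RETURN_WINDOW_DAYS = 30  # assumption: taken from the policy text used in this tutorial


def is_return_eligible(status: str, delivered_on: Optional[date], today: date) -> bool:
    """Return True only for delivered orders that are still inside the return window."""
    if status != "delivered" or delivered_on is None:
        return False
    days_since_delivery = (today - delivered_on).days
    return 0 <= days_since_delivery <= RETURN_WINDOW_DAYS


today = date(2026, 1, 31)
print(is_return_eligible("delivered", today - timedelta(days=3), today))   # True
print(is_return_eligible("delivered", today - timedelta(days=45), today))  # False
print(is_return_eligible("shipped", None, today))                          # False
```

Passing `today` in as a parameter keeps the check deterministic, which makes it easy to unit-test without touching the clock.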

Now we build the graph. The agent node calls the LLM with tool bindings; the ToolNode from LangGraph executes whichever tool the model selects; and a conditional edge decides whether to continue the loop or end the conversation.

from langchain_openai import ChatOpenAI
from langgraph.graph import END, START, StateGraph
from langgraph.prebuilt import ToolNode

model = ChatOpenAI(model="gpt-4o-mini").bind_tools(tools)


def agent_node(state: RetailAgentState) -> dict:
    """Call the LLM and return its response."""
    response = model.invoke(state["messages"])
    return {"messages": [response]}


def should_continue(state: RetailAgentState) -> str:
    """After an agent step, route to tools or end."""
    last_message = state["messages"][-1]
    if getattr(last_message, "tool_calls", None):
        return "tools"
    return END


# Build the graph
workflow = StateGraph(RetailAgentState)

# Register nodes
workflow.add_node("agent", agent_node)
workflow.add_node("tools", ToolNode(tools))

# Define edges
workflow.add_edge(START, "agent")
workflow.add_conditional_edges(
    "agent",
    should_continue,
    {"tools": "tools", END: END},
)
workflow.add_edge("tools", "agent")

# Compile
app = workflow.compile()

This is the core LangGraph pattern: agent → decide → tools → agent → ... until the model produces a final answer with no tool calls. The ToolNode handles the entire tool execution lifecycle — parsing the LLM’s tool_calls, invoking the right function, and injecting the results back as ToolMessages into the conversation.
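The control flow described above can be sketched without LangGraph or an API key. This is an illustration of the loop only, not how LangGraph executes a graph: `scripted_model`, `TOOLS`, and `run_loop` are hypothetical stand-ins, and plain dicts replace message objects.

```python
from typing import Callable

# Hypothetical stand-ins: a registry of plain functions instead of @tool objects.
TOOLS: dict[str, Callable[..., str]] = {
    "track_order": lambda order_id: f"Order {order_id} shipped.",
}


def scripted_model(messages: list[dict]) -> dict:
    """Request one tool call first, then answer once a tool result is present."""
    if any(m["role"] == "tool" for m in messages):
        return {"role": "ai", "content": "Your order has shipped.", "tool_calls": []}
    call = {"name": "track_order", "args": {"order_id": "ORD-78432"}}
    return {"role": "ai", "content": "", "tool_calls": [call]}


def run_loop(messages: list[dict], max_steps: int = 5) -> list[dict]:
    """agent -> decide -> tools -> agent, until the model stops requesting tools."""
    for _ in range(max_steps):
        reply = scripted_model(messages)      # the "agent" node
        messages = messages + [reply]
        if not reply["tool_calls"]:           # conditional edge routes to END
            return messages
        for call in reply["tool_calls"]:      # the "tools" node
            result = TOOLS[call["name"]](**call["args"])
            messages = messages + [{"role": "tool", "name": call["name"], "content": result}]
    raise RuntimeError("step limit reached without a final answer")


trace = run_loop([{"role": "human", "content": "Where is my order ORD-78432?"}])
print([m["role"] for m in trace])  # ['human', 'ai', 'tool', 'ai']
```

The `max_steps` guard plays the role of a safety net against a model that never stops calling tools; LangGraph enforces its own recursion limit for the same reason.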

Read more on the LangGraph docs.

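The raw tool-routing loop works, but a retail agent needs to know when to stop answering and hand off. A routing function can only return the name of the next node; it cannot update state. So we add a small node after the tool step: if escalate_to_human just ran, it sets requires_human, the agent gets one more turn to write the hand-off message, and the caller can check the flag.

from langchain_core.messages import ToolMessage


def retail_router(state: RetailAgentState) -> str:
    """Route to the tools node while the model keeps requesting tool calls."""
    last_message = state["messages"][-1]
    if getattr(last_message, "tool_calls", None):
        return "tools"
    return END


def mark_escalation(state: RetailAgentState) -> dict:
    """Set requires_human once escalate_to_human has produced a tool result."""
    escalated = state.get("requires_human", False)
    for message in reversed(state["messages"]):
        if not isinstance(message, ToolMessage):
            break  # only inspect the tool results from the latest step
        if message.name == "escalate_to_human":
            escalated = True
    return {"requires_human": escalated}


workflow2 = StateGraph(RetailAgentState)
workflow2.add_node("agent", agent_node)
workflow2.add_node("tools", ToolNode(tools))
workflow2.add_node("mark_escalation", mark_escalation)
workflow2.add_edge(START, "agent")
workflow2.add_conditional_edges("agent", retail_router, {"tools": "tools", END: END})
workflow2.add_edge("tools", "mark_escalation")
workflow2.add_edge("mark_escalation", "agent")
app = workflow2.compile()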

Let’s test it with three realistic retail queries:

from langchain_core.messages import HumanMessage


def run_query(query: str) -> None:
    """Send one customer query through the graph and print the reply."""
    result = app.invoke({"messages": [HumanMessage(content=query)]})
    print(f"Q: {query}")
    print(f"A: {result['messages'][-1].content}")
    if result.get("requires_human"):
        print("⚠️ ESCALATED TO HUMAN")
    print("---")


run_query(
    "Do you have the Wireless Headphones Pro in stock? "
    "What's the price and how many are available?"
)
run_query("Where is my order ORD-78432?")
run_query(
    "I need to return order ORD-55601. The product doesn't match "
    "the description on the website."
)

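Expected output (the exact wording varies between runs because the model writes it, and the delivery date is always two days after the day you run the script):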

Q: Do you have the Wireless Headphones Pro in stock? What's the price and how many are available?

A: The Wireless Headphones Pro (SKU: WHP-200) is in stock — $79.99, with 42 units available.

---

Q: Where is my order ORD-78432?

A: Order ORD-78432 has been shipped via FedEx. Your estimated delivery date is

2026-04-30. Tracking number: 1Z999AA10123456784.

---

Q: I need to return order ORD-55601. The product doesn't match the description on the website.

A: I've initiated a return for order ORD-55601. The reason has been recorded as

"product doesn't match the description on the website". Your refund will be

processed within 5-7 business days. We've sent a return label to your email.

---

The agent chains tool calls automatically. For the stock query, it invokes check_inventory, reads the result, and formats a natural-language answer, all within a single app.invoke() call. The routing logic itself needs no manual prompt engineering.
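To see that chain rather than only the final answer, it can help to print a one-line summary of every message in the result. The helper below is a hypothetical sketch (`summarize_trace` is not part of LangGraph). It relies only on the `type`, `content`, and `tool_calls` attributes that LangChain message objects expose, and the demo uses stand-in objects so it runs without an API key.

```python
from types import SimpleNamespace


def summarize_trace(messages) -> list[str]:
    """Describe each message: its type, plus requested tools or a content preview."""
    lines = []
    for message in messages:
        kind = getattr(message, "type", "unknown")
        tool_calls = getattr(message, "tool_calls", None) or []
        if tool_calls:
            names = ", ".join(call["name"] for call in tool_calls)
            lines.append(f"{kind}: requests {names}")
        else:
            preview = str(getattr(message, "content", ""))[:40]
            lines.append(f"{kind}: {preview}")
    return lines


# Stand-in objects shaped like a typical run: question, tool request, result, answer.
demo = [
    SimpleNamespace(type="human", content="Do you have the laptop stand?"),
    SimpleNamespace(type="ai", content="", tool_calls=[{"name": "check_inventory", "args": {}}]),
    SimpleNamespace(type="tool", content="Adjustable Laptop Stand (SKU: ALS-300)"),
    SimpleNamespace(type="ai", content="Yes, 15 units are in stock.", tool_calls=[]),
]
for line in summarize_trace(demo):
    print(line)
```

With the real graph, the same helper would be called as `summarize_trace(result["messages"])` on the value returned by `app.invoke`.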

Production retail agents need a system prompt that sets tone, scope, and business rules. Let’s wire one in:

from langchain_core.messages import HumanMessage, SystemMessage

SYSTEM_PROMPT = """You are a helpful retail support agent for TechGear Online.

Rules:
- Always check inventory before recommending products.
- If a product is out of stock, suggest the closest in-stock alternative.
- For order questions, use the order ID from context if available.
- If the order ID cannot be found after a lookup attempt, ask the customer to verify it.
- For returns, confirm the order is delivered within the 30-day window before processing.
- If the issue is complex, involves a complaint, or requires policy exceptions, escalate to a human.

Be concise and friendly. Use product names exactly as listed in the inventory."""


def run_with_system(query: str) -> None:
    """Run one query with the system prompt placed first in the message list."""
    messages = [SystemMessage(content=SYSTEM_PROMPT), HumanMessage(content=query)]
    result = app.invoke({"messages": messages})
    print(f"Q: {query}")
    print(f"A: {result['messages'][-1].content}")


run_with_system(
    "I want a USB-C cable but the one I saw is out of stock. "
    "What else do you have?"
)
run_with_system(
    "I'm furious about a billing issue with order ORD-11002. "
    "I was charged twice!"
)

Expected behavior for the escalation query:

Q: I'm furious about a billing issue with order ORD-11002. I was charged twice!

A: I understand your frustration. Let me look into this... [checks order]

I see the order is still processing. A duplicate charge on an unfulfilled order

needs manual review from our billing team. I'm escalating this right now. A

human agent will reach out within 15 minutes during business hours.

Production agents need to remember conversations across turns. LangGraph ships with a MemorySaver checkpointer that handles this out of the box, but it keeps checkpoints in process memory, so they are lost when the process restarts. For production use, swap it for SqliteSaver or a Postgres-backed saver.

from langchain_core.messages import HumanMessage
from langgraph.checkpoint.memory import MemorySaver

checkpointer = MemorySaver()
app_with_memory = workflow2.compile(checkpointer=checkpointer)

# Every call that shares this thread_id continues the same conversation.
config = {"configurable": {"thread_id": "customer-42"}}

# First turn
result1 = app_with_memory.invoke(
    {"messages": [HumanMessage(content="Hi, I'd like to check order ORD-78432")]},
    config=config,
)
print("Turn 1:", result1["messages"][-1].content)

# Second turn: the model now sees the earlier messages from this thread.
result2 = app_with_memory.invoke(
    {"messages": [HumanMessage(content="When will it arrive?")]},
    config=config,
)
print("Turn 2:", result2["messages"][-1].content)

The thread_id ties multiple invocations to the same conversation thread. This is the same pattern you’d use for a multi-turn chat interface or a phone-based voice agent.
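As a mental model for what the thread_id buys you, here is a toy standard-library sketch of thread-keyed persistence. It is not LangGraph's checkpointer API: `InMemoryThreads` is a hypothetical class that stores plain strings, whereas a real checkpointer saves full graph state at each step.

```python
from collections import defaultdict


class InMemoryThreads:
    """Toy checkpoint store: one message list per thread_id (not LangGraph's API)."""

    def __init__(self) -> None:
        self._threads: dict[str, list[str]] = defaultdict(list)

    def invoke(self, message: str, thread_id: str) -> list[str]:
        """Append the new message to the thread and return a copy of its history."""
        self._threads[thread_id].append(message)
        return list(self._threads[thread_id])


store = InMemoryThreads()
store.invoke("Hi, I'd like to check order ORD-78432", thread_id="customer-42")
same_thread = store.invoke("When will it arrive?", thread_id="customer-42")
other_thread = store.invoke("When will it arrive?", thread_id="customer-99")

print(len(same_thread))   # 2: the follow-up sees the earlier turn
print(len(other_thread))  # 1: a new thread_id starts with no history
```

The practical consequence is the same in the real system: reuse one thread_id per customer conversation, and never share one across customers.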

We’ve covered the full lifecycle: state definition, tool creation, graph wiring, conditional routing, system prompts, and conversation persistence. But production retail agents need more:

- Structured output: use with_structured_output to parse tool results into Pydantic models for downstream processing.

The key insight we’ve learned from shipping agents in retail: the graph structure matters more than the model. A good routing graph with GPT-4o-mini will outperform GPT-4o with no graph. Spend your time on clear tool definitions, clean edges, and solid fallback logic. Your customers will notice the result, not the model name.
