Perplexity AI in 2026: Pro, Deep Research, Comet & API

Pro plan, Deep Research, Comet browser, real-time search, API. Everything Perplexity ships in 2026, plus how it compares to ChatGPT and Gemini.

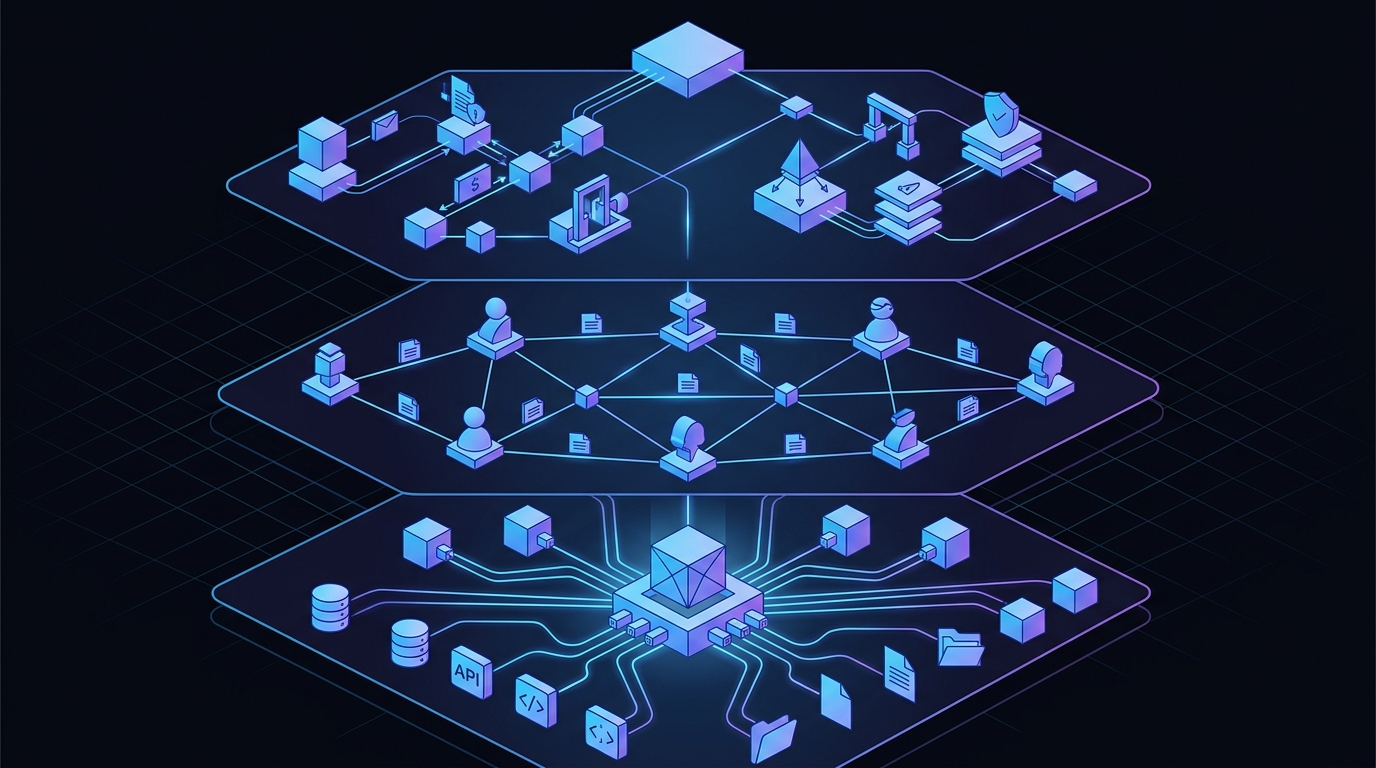

Perplexity launched as an “answer engine” — a cleaner, sourced alternative to Google that synthesized the web into a single paragraph with citations. That was 2023 thinking. In 2026, Perplexity has quietly rebuilt itself into something closer to a research operating system: deep research agents accessible via API, an agentic browser that automates multi-step workflows, and an enterprise stack that rivals dedicated knowledge platforms.

We’ve been shipping production agents for two years now, and the pattern is clear. The bottleneck isn’t model quality or retrieval speed anymore. It’s the orchestration layer between a question and a decision-ready answer. Perplexity is attacking exactly that problem, and doing it across three surfaces simultaneously: the Sonar API, the Comet browser, and the Pro/Max subscription tiers.

This isn’t a product roundup. It’s a look at how Perplexity’s research stack works under the hood, where it outperforms building your own pipeline, and where it still falls short — so you can decide whether to integrate it or roll your own.

Perplexity’s Deep Research (now available via the Sonar Deep Research API) transforms a multi-hour research session into a structured, cited report in 2–4 minutes. The architecture is worth dissecting because it encodes patterns that every engineering team building research tools should understand.

The Sonar Deep Research model (API docs) operates on a 128K token context window and runs a multi-step loop:

This loop isn’t just a chain — it’s a research planner that reasons about its own information needs. On the Humanity’s Last Exam benchmark, Deep Research scored 21.1%, outperforming standalone models like Gemini Thinking and o1. The margin comes from iteration, not model size.
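The individual steps of the loop are not reproduced here, so the following is only a generic sketch of the "iterate until no open questions remain" pattern, not Perplexity's implementation. All names (`ResearchState`, `research_loop`, `search`, `plan_followups`) and the stopping rule are hypothetical, and the search and planner functions are toy stand-ins for real retrieval and model calls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ResearchState:
    question: str
    open_questions: list[str]
    findings: dict[str, str] = field(default_factory=dict)


def research_loop(
    question: str,
    search: Callable[[str], str],
    plan_followups: Callable[[ResearchState], list[str]],
    max_rounds: int = 3,
) -> ResearchState:
    """Answer open questions, then ask the planner what is still missing."""
    state = ResearchState(question=question, open_questions=[question])
    for _ in range(max_rounds):
        if not state.open_questions:
            break  # the planner found no remaining gaps
        pending, state.open_questions = state.open_questions, []
        for q in pending:
            if q not in state.findings:
                state.findings[q] = search(q)
        # The planner inspects what has been learned and proposes follow-ups.
        state.open_questions = [
            q for q in plan_followups(state) if q not in state.findings
        ]
    return state


# Toy stand-ins (assumptions): a real system would call a search index
# and a language model here.
def toy_search(query: str) -> str:
    return f"notes on {query}"


def toy_planner(state: ResearchState) -> list[str]:
    # Ask one follow-up per round until three findings are collected.
    if len(state.findings) < 3:
        return [f"{state.question} (follow-up {len(state.findings)})"]
    return []


state = research_loop("vLLM vs SGLang", toy_search, toy_planner, max_rounds=5)
```

The point of the sketch is the control flow: each round's results feed the planner, so the number of searches is decided at run time rather than fixed up front.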

The Sonar Deep Research API ships at $2/M input tokens and $8/M output tokens (source). Here’s what that means in practice:

| Query Type | Approx. Input Tokens | Approx. Output Tokens | Approx. Cost |
|---|---|---|---|
| Standard research query | ~4,000 | ~3,000 | ~$0.032 |
| Complex multi-domain topic | ~12,000 | ~8,000 | ~$0.088 |
| Enterprise-grade deep dive | ~20,000 | ~15,000 | ~$0.160 |

At these rates, a team running 500 research queries per month spends roughly $16 to $80 on token costs depending on query size (500 × $0.032 up to 500 × $0.160), comparable to a single seat on many competitor tools. The real question isn’t price; it’s the quality of the synthesis layer, and that’s where most self-built pipelines fail.
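The per-query figures in the table follow directly from the two token rates. As a minimal sketch, the arithmetic looks like this; the function and constant names are ours, and the calculation covers token charges only, assuming no other per-request fees apply.

```python
# Sonar Deep Research token rates quoted above, in USD per million tokens.
INPUT_USD_PER_M = 2.00
OUTPUT_USD_PER_M = 8.00


def query_cost(input_tokens: int, output_tokens: int) -> float:
    """Token cost in USD for a single query."""
    return (
        input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    ) / 1_000_000


def monthly_cost(queries: int, input_tokens: int, output_tokens: int) -> float:
    """Token cost in USD for a month of identically sized queries."""
    return queries * query_cost(input_tokens, output_tokens)


# Reproduce the three rows of the table.
for label, tokens_in, tokens_out in [
    ("standard", 4_000, 3_000),
    ("complex", 12_000, 8_000),
    ("deep dive", 20_000, 15_000),
]:
    print(f"{label}: ${query_cost(tokens_in, tokens_out):.3f}")
```

Swapping in your own observed token counts gives a quick budget estimate before committing to a monthly volume.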

Comet Browser is Perplexity’s most ambitious bet: a Chromium-based browser where the AI isn’t a sidebar feature; it’s the control plane (official announcement).

Most “AI browsers” add a chat panel and call it a day. Comet does something structurally different:

The 23% productivity improvement Perplexity claims is hard to measure independently, but the structural innovation is clear: the browser is no longer a passive viewing surface. It’s an active research agent.

The API is where Perplexity separates itself from ChatGPT-style completions. Every Sonar model returns structured citations alongside the response content, and every query hits the live web by default.

| Model | Best For | Cost (per M tokens) |
|---|---|---|
| Sonar | Fast factual queries | $1.00 in / $1.00 out |
| Sonar Pro | Deeper analysis, file uploads | $3.00 in / $3.00 out |
| Sonar Reasoning | Multi-step reasoning | $5–15 in / $15–25 out |
| Sonar Deep Research | Full research reports | $2.00 in / $8.00 out |

Pro subscribers receive $5 in monthly API credits, which covers ~100 standard queries or ~10–15 deep research calls. For teams integrating Perplexity into agent workflows, the API is the real product.

Perplexity’s API is compatible with the OpenAI Chat Completions interface, so an application built on the openai SDK needs only a different API key and base URL rather than a rewrite:

```python
import os

from openai import OpenAI

# Point the standard OpenAI client at Perplexity's endpoint.
client = OpenAI(
    api_key=os.environ["PERPLEXITY_API_KEY"],
    base_url="https://api.perplexity.ai",
)

response = client.chat.completions.create(
    model="sonar-deep-research",
    messages=[
        {
            "role": "system",
            "content": "You are a technical analyst. Structure findings with clear sections and cite all sources.",
        },
        {
            "role": "user",
            "content": "Compare vLLM and SGLang for high-throughput LLM serving with benchmarks.",
        },
    ],
    max_tokens=4096,
)

print(response.choices[0].message.content)

# `citations` is a Perplexity-specific extra field, not part of the OpenAI
# response schema, so read it defensively.
print("Sources:", getattr(response, "citations", []))
```

The critical difference from standard completions APIs is the response.citations field: a list of source URLs backing the generated text. For our team, this replaces hours of manual fact-checking in agent pipelines that produce research summaries.
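In an agent pipeline it helps to check that every citation marker in the text actually maps to a returned URL before the summary is passed downstream. The sketch below assumes the response text uses bracketed 1-based markers such as `[1]` that index into the citations list; that convention, the helper names, and the sample data are our assumptions, not a documented contract.

```python
import re

MARKER = re.compile(r"\[(\d+)\]")


def cited_indices(content: str) -> list[int]:
    """Distinct citation numbers that appear in the text, in ascending order."""
    return sorted({int(n) for n in MARKER.findall(content)})


def unresolved_markers(content: str, citations: list[str]) -> list[int]:
    """Citation numbers that have no matching URL in the citations list."""
    return [n for n in cited_indices(content) if not 1 <= n <= len(citations)]


def render_with_sources(content: str, citations: list[str]) -> str:
    """Append a numbered source list covering the markers used in the text."""
    lines = [content.rstrip(), "", "Sources:"]
    for n in cited_indices(content):
        if 1 <= n <= len(citations):
            lines.append(f"[{n}] {citations[n - 1]}")
        else:
            lines.append(f"[{n}] (no matching source returned)")
    return "\n".join(lines)


# Illustrative data only; a real call would pass the message content and
# the citations list from the API response.
sample_text = "Claim A [1]. Claim B [2][3]."
sample_citations = ["https://example.com/a", "https://example.com/b"]

print(render_with_sources(sample_text, sample_citations))
print("Unresolved:", unresolved_markers(sample_text, sample_citations))
```

A non-empty result from `unresolved_markers` is a cheap signal to route the summary to a human reviewer instead of trusting it automatically.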

Perplexity’s pricing structure has matured from a simple free/pro split into four distinct tiers (full pricing):

Pro at $20/month — The sweet spot for individual power users. Unlimited searches, 20 daily deep research queries, access to Claude 4 and GPT-5, file uploads, and $5 API credit. If you’re a developer or researcher using Perplexity daily, this pays for itself in one saved manual research session.

Max at $200/month — For users who need unlimited deep research, Comet browser access, o3-pro reasoning models, and unrestricted Labs usage. The 10× price jump is justified only for heavy researchers or teams doing daily competitive intelligence. See our full breakdown in Perplexity AI: The Complete Guide to AI-Powered Search.

Enterprise at $40–$325/seat/month — Adds team management, audit logs, internal knowledge search, SSO/SAML, and dedicated support. The internal knowledge search is the killer feature here: query your company’s wiki, docs, and files alongside the open web with the same inference pipeline. For teams where research is a core business function, the ROI is measurable in hours saved per employee per week.

The trend is unambiguous: search is becoming agentic, and agentic tools are consuming search as infrastructure.

For teams building research-heavy agents — competitive intelligence pipelines, due-diligence workflows, academic literature reviews — Perplexity’s API offers a compelling off-the-shelf research layer. You get:

Where Perplexity doesn’t yet compete well:

Perplexity’s search infrastructure runs on Vespa.ai — an open-source platform that handles indexing, ranking, and serving of billions of documents. Combined with their Sonar model family (built on Meta’s Llama architecture and post-trained for reasoning), Perplexity has built a vertically integrated research stack that most teams cannot replicate without significant engineering investment.

Our recommendation for teams evaluating Perplexity as infrastructure:

Perplexity isn’t selling a better Google. It’s selling a programmable research layer — and for teams that run on information, that’s the infrastructure that matters.

Further reading: For more on building with AI tools, see our AI Infrastructure Stack: 2026 Edition and our Complete Guide to AI Agent Frameworks 2026.
