The AI Infrastructure Stack: 2026 Edition

A refreshed view of the production AI stack at the start of 2026 — what changed since 2024, what's consolidating, and where the next round of innovation is landing.

Two years after the first version of our stack explainer, the shape of AI infrastructure has consolidated and the open questions have changed. 2024 asked: “How do we run LLMs in production?” 2026 asks: “How do we run agent fleets in production, efficiently, across mixed GPU generations, with spend governance, across sovereign regions?”

This is the refreshed stack view. What’s stable, what’s new, and what the next shift looks like.

The Six Layers, Revised

The layer model has survived:

- Compute — GPUs, TPUs, custom silicon

- Serving — inference engines

- Orchestration — agent frameworks, tool use, memory

- Data — vectors, features, retrieval

- Observability — tracing, evals, cost

- Gateway — the entry point to AI services

What’s changed is mostly inside each layer. Let’s walk through them.

Layer 1 — Compute: Three-Generation Fleets

Where 2024 fleets were H100-dominant, 2026 fleets typically run three GPU generations side by side:

- A100 — still cheap, still serving 7–13B models and legacy workloads

- H100 — workhorse for 70B inference and older training

- B200 / B300 — frontier training, large-model inference, hot paths

The old approach of “one GPU type, many workloads” has given way to workload-matched placement. Platform teams route training to B200, bulk inference to H100, and development and small-model serving to A100/L40S.
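A minimal sketch of workload-matched placement, assuming the size cutoffs implied by the list above (7–13B models on A100/L40S, 70B-class on H100, larger models and training on B200). The function name, workload kinds, and thresholds are illustrative assumptions rather than any scheduler’s API; in practice the same rules are usually expressed as Kubernetes node selectors or scheduler queues.

```python
def place(kind: str, params_b: float = 0.0) -> tuple[str, ...]:
    """Return eligible GPU pools for a workload (hypothetical policy).

    kind: "training", "inference", or "dev"; params_b: model size in billions.
    """
    if kind == "training":
        return ("B200",)              # frontier training on the newest generation
    if kind == "dev" or (kind == "inference" and params_b <= 13):
        return ("A100", "L40S")       # dev work and small models on cheaper cards
    if kind == "inference" and params_b <= 70:
        return ("H100",)              # 70B-class bulk inference
    if kind == "inference":
        return ("B200",)              # large-model inference and hot paths
    raise ValueError(f"unknown workload kind: {kind!r}")

print(place("inference", 8))    # ('A100', 'L40S')
print(place("inference", 70))   # ('H100',)
print(place("training"))        # ('B200',)
```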

AMD MI300X / MI325X is meaningfully represented in 2026 fleets: ~25% of new deployments we see include AMD GPUs, up from near-zero in 2024. ROCm’s software support is now on par with CUDA for most workloads. See MI300X vs H100.

Custom silicon (Groq, Cerebras, AWS Trainium/Inferentia, TPUs) holds a steady 15–20% share of inference traffic, concentrated among hosted-API providers. Few teams build on it directly.

Pricing

The neocloud-versus-hyperscaler price gap narrowed but didn’t close. Hyperscalers cut H100 on-demand prices by 40%, yet neoclouds still undercut them by 25–40%. B200 pricing follows the same pattern, about a year behind.

Reserved GPU pricing for 1-year commits in early 2026, per GPU-hour:

- A100 80GB: $0.70–$1.10/hr

- H100 80GB: $1.40–$2.20/hr

- B200 192GB: $3.20–$4.80/hr

- MI300X 192GB: $1.80–$2.80/hr
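To turn the hourly rates above into a budget figure, multiply by GPU count and hours. A sketch, assuming an 8-GPU node reserved for a full 8,760-hour year and ignoring networking, storage, and egress:

```python
# Reserved 1-year rates from the list above, in USD per GPU-hour: (low, high).
RATES = {
    "A100 80GB": (0.70, 1.10),
    "H100 80GB": (1.40, 2.20),
    "B200 192GB": (3.20, 4.80),
    "MI300X 192GB": (1.80, 2.80),
}
HOURS_PER_YEAR = 24 * 365  # 8,760; assumes every hour of the commit is billed

def annual_commit(gpu: str, gpus: int = 8) -> tuple[float, float]:
    """Return the (low, high) annual cost in USD for `gpus` reserved GPUs of one type."""
    low, high = RATES[gpu]
    return (low * gpus * HOURS_PER_YEAR, high * gpus * HOURS_PER_YEAR)

for gpu in RATES:
    low, high = annual_commit(gpu)
    print(f"{gpu:>13}: ${low:,.0f} to ${high:,.0f} per 8-GPU node-year")
```

At these rates an 8×H100 node comes to roughly $98k to $154k per year, and an 8×B200 node roughly $224k to $336k.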

Layer 2 — Serving: Consolidation Around vLLM + Specialized Runtimes

The LLM inference-server landscape has consolidated:

- vLLM continues to dominate open-source, ~60% share in our consulting book

- SGLang carved a real niche for agent-heavy workloads, ~15% share, particularly strong for structured outputs

- TGI remains a solid choice with ~10% share, loved by HF-ecosystem teams

- TensorRT-LLM holds the throughput ceiling for orgs that invest in it, ~10% share concentrated in very large shops

- Others (LMDeploy, LightLLM, MLC) are niche

The big 2026 shift: disaggregated inference — separating prefill and decode onto different node pools — is now standard practice for large-scale deployments. vLLM, SGLang, and TRT-LLM all support it. Throughput wins of 30–50% on long-prompt workloads are real. See Disaggregated Inference.
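A toy, in-process sketch of the disaggregated data flow: a prefill stage builds each request’s KV cache and hands it off, and a separately sized decode pool batches token generation. The `Request` fields, the `kv://` handle, and the batch size are hypothetical; vLLM, SGLang, and TRT-LLM move real KV caches between node pools over fast interconnects and have their own configuration, none of which this models.

```python
from collections import deque
from dataclasses import dataclass
from typing import Optional

@dataclass
class Request:
    rid: int
    prompt_tokens: int
    max_new_tokens: int              # must be >= 1
    kv_handle: Optional[str] = None  # stand-in for the KV cache built during prefill
    generated: int = 0

def prefill(req: Request) -> Request:
    # Prefill: one compute-bound pass over the whole prompt that produces the KV cache.
    req.kv_handle = f"kv://prefill-pool/{req.rid}"  # hypothetical transfer handle
    return req

def decode_step(batch: list[Request]) -> None:
    # Decode: memory-bandwidth-bound, one new token per active request per step.
    for req in batch:
        req.generated += 1

def serve(requests: list[Request], decode_batch_size: int = 4) -> list[Request]:
    prefill_queue = deque(requests)
    handoff: deque = deque()  # prefilled requests waiting for a decode slot
    active: list[Request] = []
    finished: list[Request] = []
    while prefill_queue or handoff or active:
        # Prefill pool works through new prompts independently of decoding.
        if prefill_queue:
            handoff.append(prefill(prefill_queue.popleft()))
        # Decode pool admits handed-off requests up to its own batch size.
        while handoff and len(active) < decode_batch_size:
            active.append(handoff.popleft())
        decode_step(active)
        finished.extend(r for r in active if r.generated >= r.max_new_tokens)
        active = [r for r in active if r.generated < r.max_new_tokens]
    return finished

reqs = [Request(i, prompt_tokens=2_000 * (i + 1), max_new_tokens=3 + i) for i in range(6)]
done = serve(reqs)
print([r.rid for r in done])  # [0, 1, 2, 3, 4, 5]
```

The point of the split is that each pool can be sized and scheduled for its own bottleneck: prefill is compute-bound, decode is memory-bandwidth-bound.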

FP4 is the default precision for B200 inference, FP8 for H100, and INT4 (AWQ/GPTQ) for A100. Quantization is no longer optional.
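Precision matters largely because of weight memory. A back-of-the-envelope sketch, counting weights only (KV cache, activations, and quantization-scale overhead are excluded, so real footprints run somewhat larger):

```python
# Bytes per parameter for model weights only.
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5, "INT4": 0.5}

# Default precision per GPU generation, as described above.
DEFAULT_PRECISION = {"B200": "FP4", "H100": "FP8", "A100": "INT4"}

def weight_gb(params_billion: float, precision: str) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes): params x bytes/param."""
    return params_billion * BYTES_PER_PARAM[precision]

for gpu, precision in DEFAULT_PRECISION.items():
    print(f"70B on {gpu} at {precision}: ~{weight_gb(70, precision):.0f} GB of weights")
print(f"70B at FP16 for comparison: ~{weight_gb(70, 'FP16'):.0f} GB")
```

At FP8, a 70B model’s weights (~70 GB) fit on a single 80 GB H100 with a little room left for KV cache; at FP16 the same weights need two cards.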

Layer 3 — Orchestration: The Agent Era

This is where the biggest 2024→2026 shift lives.

In 2024, orchestration meant “a LangChain or LangGraph pipeline with some tool calls.” In 2026, agent frameworks became a proper category with enterprise-grade options:

- LangGraph and LangGraph Cloud are mature and serve as the reference for most production agents

- CrewAI has strong enterprise adoption, especially for structured multi-agent workflows

- AutoGen 2 (Microsoft) ships with real tooling for AzureML deployments

- AWS Bedrock Agents and Google Agent Builder are managed alternatives

- Anthropic’s Agent SDK landed as a first-class option for Claude-native agents

The Model Context Protocol (MCP) won as the tool-calling standard: nearly every framework supports it, and tool registries are now shared across agents. See our Multi-Agent Orchestration Infrastructure guide.
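For readers new to MCP: a tool invocation is a JSON-RPC 2.0 request with method `tools/call` whose params carry the tool `name` and its `arguments`, and the result comes back as a list of content items. The sketch below builds and answers one such request. The registry, the `@tool` decorator, and `get_order_status` are hypothetical stand-ins, not the official MCP SDK; a real server also handles initialization, `tools/list`, and a transport.

```python
import json
from typing import Any, Callable

# Hypothetical shared tool registry that several agents could import.
TOOLS: dict[str, Callable[..., str]] = {}

def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function in the shared registry under its own name."""
    TOOLS[fn.__name__] = fn
    return fn

@tool
def get_order_status(order_id: str) -> str:
    return f"order {order_id}: shipped"  # stub; a real tool would query a backend

def handle(raw: str) -> str:
    """Answer one JSON-RPC 2.0 'tools/call' request with a JSON response string."""
    req = json.loads(raw)
    params = req.get("params", {})
    resp: dict[str, Any] = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        resp["error"] = {"code": -32601, "message": "Method not found"}  # JSON-RPC code
    elif params.get("name") not in TOOLS:
        resp["error"] = {"code": -32602, "message": "Unknown tool"}      # invalid params
    else:
        text = TOOLS[params["name"]](**params.get("arguments", {}))
        resp["result"] = {"content": [{"type": "text", "text": text}], "isError": False}
    return json.dumps(resp)

request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/call",
                      "params": {"name": "get_order_status",
                                 "arguments": {"order_id": "A-42"}}})
print(handle(request))
```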

Agent infrastructure is now a distinct category from inference serving. Different scaling, different observability, different resource shapes. See Agent Infrastructure: What’s Different.

Layer 4 — Data: Vector DB Consolidation; Context Stack Expansion

Vector DBs consolidated:

- Pinecone remains the managed default

- Qdrant won the open-source self-host battle

- Weaviate carved a niche in hybrid-search-heavy workloads

- Milvus holds the very-large-scale segment

- pgvector + pgvectorscale continues to grow share, especially among Postgres-native teams

What’s new: the context stack — the set of systems managing an agent’s memory, history, and retrieval — expanded beyond vector DBs alone.

Production agents now routinely run:

- Vector DB for semantic search

- Full-text search for lexical (Postgres FTS, Elastic, Meilisearch)

- A graph store for entity relationships (Neo4j, Memgraph, Dgraph)

- A key-value store for hot facts (Redis, DynamoDB)

- An event log for historical traversal (Kafka, EventStore)

This stack has a name now: “context engineering.” See Context Engineering: Storage, Retrieval, and the New Memory Stack.
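Running vector and lexical search side by side raises the question of how to merge their result lists. One common technique, and our choice here since the stack above doesn’t prescribe one, is reciprocal rank fusion: each document scores the sum of 1/(k + rank) across lists, with k = 60 as the usual default. The doc IDs are made up.

```python
from collections import defaultdict

def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists of doc IDs; each appearance adds 1 / (k + rank)."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID so output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))

vector_hits = ["doc7", "doc2", "doc9"]   # e.g. semantic search results
lexical_hits = ["doc2", "doc4", "doc7"]  # e.g. Postgres FTS results
print(reciprocal_rank_fusion([vector_hits, lexical_hits]))
# ['doc2', 'doc7', 'doc4', 'doc9']
```

Documents found by both retrievers (doc2, doc7) rise to the top without needing the two systems’ raw scores to be on comparable scales.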

Layer 5 — Observability: Langfuse and OTel Won

The two-year pattern is clear: Langfuse and OTel-native backends beat siloed proprietary SDKs.

Most production deployments we see now use:

- OTel for tracing (GenAI semantic conventions are stable)

- Langfuse (managed or self-hosted) for LLM-specific UI

- A general APM (Datadog, Honeycomb, Grafana) for everything else

Evals are finally first-class. Every serious team has a regression-testing harness gatekeeping prompt and model changes. See Model Evals in Production.
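A minimal sketch of the check such a harness enforces in CI, assuming each eval run reduces to pass/total counts and that a drop of more than one percentage point blocks the change. The threshold and the numbers are hypothetical; real harnesses also inspect per-case regressions and run-to-run noise.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class EvalRun:
    passed: int
    total: int

    @property
    def pass_rate(self) -> float:
        return self.passed / self.total

def gate(baseline: EvalRun, candidate: EvalRun, max_drop: float = 0.01) -> bool:
    """Allow a prompt/model change only if its pass rate drops by at most max_drop."""
    return candidate.pass_rate >= baseline.pass_rate - max_drop

baseline = EvalRun(passed=462, total=500)   # 92.4% with the current prompt/model
candidate = EvalRun(passed=455, total=500)  # 91.0% with the proposed change
print("PASS" if gate(baseline, candidate) else "FAIL: regression beyond threshold")
```

In CI, a False result would fail the job (for example by exiting non-zero) and block the merge.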

Cost attribution is standard. “Who spent what on which model” is a report finance can pull any day of the month.
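Behind that report is simple arithmetic over gateway usage logs: tokens times per-token price, grouped by team and model. A sketch with a hypothetical log schema, model names, and prices (not any vendor’s list prices):

```python
from collections import defaultdict

# Hypothetical prices in USD per million tokens: (input, output).
PRICES = {"model-large": (3.00, 15.00), "model-small": (0.15, 0.60)}

# One record per request, roughly what a gateway might log (hypothetical schema).
usage = [
    {"team": "search", "model": "model-large", "in_tok": 1_200_000, "out_tok": 300_000},
    {"team": "search", "model": "model-small", "in_tok": 4_000_000, "out_tok": 1_000_000},
    {"team": "support", "model": "model-large", "in_tok": 500_000, "out_tok": 100_000},
]

def spend_by(records: list[dict], keys: tuple[str, ...] = ("team", "model")) -> dict[tuple, float]:
    """Sum USD spend per group, where the group is the record's values for `keys`."""
    totals: dict[tuple, float] = defaultdict(float)
    for r in records:
        p_in, p_out = PRICES[r["model"]]
        cost = r["in_tok"] / 1e6 * p_in + r["out_tok"] / 1e6 * p_out
        totals[tuple(r[k] for k in keys)] += cost
    return dict(totals)

for group, usd in sorted(spend_by(usage).items()):
    print(group, f"${usd:.2f}")
```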

Layer 6 — Gateway: LiteLLM and Portkey Stable; Guardrails Integrated

LiteLLM (open source) and Portkey (managed) remain the dominant gateway options. Kong AI Gateway is strong for Kong shops.

2026 additions:

- Guardrails (PII redaction, injection detection, output filtering) are now integrated into the gateway layer, not a separate product

- Semantic caching is standard

- Cost-aware routing (route to cheapest model that meets quality bar) is increasingly default
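Cost-aware routing reduces to “filter by quality, then minimize price.” A sketch assuming each model carries a quality score from your own eval suite and a blended per-million-token price; the catalog entries are hypothetical, and LiteLLM and Portkey expose their own routing configuration rather than this function.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ModelOption:
    name: str
    usd_per_mtok: float  # blended price, hypothetical
    quality: float       # score from your own eval suite, 0 to 1

CATALOG = [
    ModelOption("small", 0.30, 0.78),
    ModelOption("medium", 1.50, 0.86),
    ModelOption("large", 6.00, 0.93),
]

def route(quality_bar: float, catalog: list[ModelOption] = CATALOG) -> ModelOption:
    """Pick the cheapest model whose eval score meets the quality bar."""
    eligible = [m for m in catalog if m.quality >= quality_bar]
    if not eligible:
        raise LookupError(f"no model meets quality bar {quality_bar}")
    return min(eligible, key=lambda m: m.usd_per_mtok)

print(route(0.70).name)  # small
print(route(0.80).name)  # medium
print(route(0.90).name)  # large
```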

The gateway is still the single most valuable piece of infrastructure to add early.
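Semantic caching, one of the gateway features listed above, differs from exact-match caching: it returns a stored response when a new prompt is similar enough to a cached one. A minimal sketch; the bag-of-words `embed` function is a toy stand-in for a real embedding model, and the 0.8 similarity threshold is an assumption you would tune against false hits.

```python
import math
import re
from collections import Counter
from typing import Optional

def embed(text: str) -> Counter:
    """Toy bag-of-words 'embedding'; a real gateway would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, threshold: float = 0.8):
        self.threshold = threshold
        self.entries: list[tuple[Counter, str]] = []

    def get(self, prompt: str) -> Optional[str]:
        """Return the response cached for the most similar prompt, if similar enough."""
        q = embed(prompt)
        best_score, best_resp = 0.0, None
        for vec, resp in self.entries:
            score = cosine(q, vec)
            if score > best_score:
                best_score, best_resp = score, resp
        return best_resp if best_score >= self.threshold else None

    def put(self, prompt: str, response: str) -> None:
        self.entries.append((embed(prompt), response))

cache = SemanticCache()
cache.put("What is your refund policy?", "Refunds are accepted within 30 days.")
print(cache.get("what is your refund policy"))   # hit despite different casing/punctuation
print(cache.get("How do I reset my password?"))  # None: no similar cached prompt
```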

What’s New In 2026

Things that didn’t exist (or barely existed) in 2024:

1. Sovereign AI infrastructure

Regional requirements drove real investment in sovereign inference stacks. European, Indian, Gulf-region, and Japanese providers operate full AI stacks inside their respective jurisdictions. EU AI Act compliance drove a lot of this. See Running Sovereign AI.

2. Edge inference

Small models (3B, 7B) running on consumer GPUs and even phones are now production-viable. Llama 4 Tiny, Qwen 3 Edge, and Phi-5 are designed for this. On-device inference use cases (privacy, latency, offline) are real. See Inference at the Edge.

3. Agent orchestration platforms

As noted above, agent infrastructure is now its own category. Platforms like LangGraph Cloud, Bedrock Agents, and Turion Agents ship managed agent orchestration.

4. Context engineering

Memory patterns that used to be ad hoc are now named, productized, and discussed. Splitting memory into short-term (KV cache, context window), working (active context), and long-term (vector + graph stores) is now a standard decomposition.

5. AI platform teams

“AI platform engineer” is a real role. The playbook is settling. See Building an AI Platform Team.

What Got Harder

1. Multi-region agent consistency. Running an agent fleet across 5 regions with consistent behavior is harder than stateless inference: context stores and tool registries have to stay in sync, and model providers vary by region.

2. Compliance breadth. EU AI Act, India DPDP, US state regulations, and sector-specific rules (HIPAA, FINRA). Legal teams need to stay current, and engineering pays the cost of translating requirements into controls.

3. Rapid model deprecation. OpenAI, Anthropic, and Google deprecate models aggressively. Your app needs to tolerate model churn without user-visible regressions.
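One defensive pattern is a preference-ordered fallback chain, so a retired model degrades to its successor instead of failing outright. A sketch; `ModelUnavailable`, the model names, and the injected `call` function are hypothetical, since each provider signals deprecation in its own way.

```python
from typing import Callable, Sequence

class ModelUnavailable(Exception):
    """Hypothetical error a client raises when a model is deprecated or retired."""

def complete_with_fallback(prompt: str, models: Sequence[str],
                           call: Callable[[str, str], str]) -> tuple[str, str]:
    """Try models in preference order; return (model_used, output)."""
    errors: list[str] = []
    for model in models:
        try:
            return model, call(model, prompt)
        except ModelUnavailable as exc:
            errors.append(f"{model}: {exc}")  # record the failure, try the next model
    raise RuntimeError("no model in the chain is available: " + "; ".join(errors))

# Fake client for illustration: the first model has been retired.
RETIRED = {"vendor-model-2024"}

def fake_call(model: str, prompt: str) -> str:
    if model in RETIRED:
        raise ModelUnavailable("model has been deprecated")
    return f"[{model}] {prompt}"

used, output = complete_with_fallback(
    "Summarize this ticket.", ["vendor-model-2024", "vendor-model-2026"], fake_call)
print(used)  # vendor-model-2026
```

A fallback only prevents hard failures; to avoid user-visible regressions, each successor model should pass your eval suite before it joins the chain.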

4. Agent debugging. Long trajectories, tool-call loops, emergent behaviors. Observability is better but the underlying problem is genuinely hard.

5. Cost scaling in multi-agent systems. An agent that calls another agent that calls a third creates token amplification that’s easy to miss until the bill arrives.
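A rough way to see the amplification: total each agent’s own tokens plus, for every sub-agent it calls, that sub-agent’s subtree and the output tokens the caller reads back. The tree shape and token counts below are hypothetical, and the model is deliberately simplified (it ignores context being re-sent across multiple turns, which makes real amplification worse).

```python
from dataclasses import dataclass, field

@dataclass
class AgentCall:
    own_tokens: int         # prompt + completion tokens this agent consumes itself
    output_tokens: int = 0  # tokens of its result that the caller reads back
    children: list["AgentCall"] = field(default_factory=list)

def total_tokens(call: AgentCall) -> int:
    """Tokens billed for this call plus everything it triggers below it."""
    return call.own_tokens + sum(
        total_tokens(child) + child.output_tokens for child in call.children
    )

def leaf() -> AgentCall:
    return AgentCall(own_tokens=3_000, output_tokens=300)

def middle() -> AgentCall:
    return AgentCall(own_tokens=4_000, output_tokens=500, children=[leaf(), leaf()])

# One orchestrator -> 3 sub-agents -> 2 sub-sub-agents each.
root = AgentCall(own_tokens=2_000, children=[middle() for _ in range(3)])
total = total_tokens(root)
print(f"{total:,} tokens, {total / root.own_tokens:.2f}x the root agent alone")
# 35,300 tokens, 17.65x the root agent alone
```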

Patterns That Will Be Standard by 2027

Early predictions:

- Multi-modal by default. Text, vision, audio in the same inference pipeline. Stack has to handle it uniformly.

- Fine-tuning as a service. Managed LoRA pipelines integrated with inference endpoints. Customers tune without running training infra.

- Per-user adapters at scale. Millions of tiny LoRAs, dynamically loaded at serve time, personalizing per user.

- Probabilistic SLOs. Targets like “98% of user tasks succeed within 3 tool calls” replace latency SLOs.

- Compute-efficient reasoning. Test-time compute patterns (search, chain-of-thought, verification) become first-class.

Recommendations For 2026 Planning

If you’re starting fresh: Use hosted APIs + LiteLLM gateway + pgvector + OTel + Langfuse. Deploy with Claude, GPT-4o-class, or Llama-3.3-70B. Add fine-tuning only when baseline quality gaps are measurable.

If you’re mid-growth: It’s time to invest in platform engineering (AI FinOps, eval regression testing, a multi-cloud gateway, security reviews). These investments compound.

If you’re at scale: B200 for new commits. Invest in disaggregated serving, multi-LoRA per tenant, context engineering, and agent orchestration infrastructure.

Across all sizes: Treat AI infrastructure as software. Evals, CI, canaries, rollbacks. The teams that do this ship 10x faster.

Further Reading

- The AI Infrastructure Stack Explained (2024)

- The State of AI Infrastructure 2025

- Building an AI Platform Team: Roles, Tools, and Rituals

- Agent Infrastructure: What’s Different from LLM Serving

Planning your 2026 AI infrastructure? Let’s talk — we help shops from pre-launch to global scale.

Related Posts

State of AI Infrastructure 2026: Mid-Year Reality Check

A mid-2026 ground-truth report: B200 reality, SGLang's $400M spinout, agent infra going mainstream, and the three patterns dominating production.

The State of AI Infrastructure 2025

A ground-truth report on where AI infrastructure stands at the start of 2025 — GPU availability, inference pricing, the neocloud wars, and the architecture patterns winning in production.

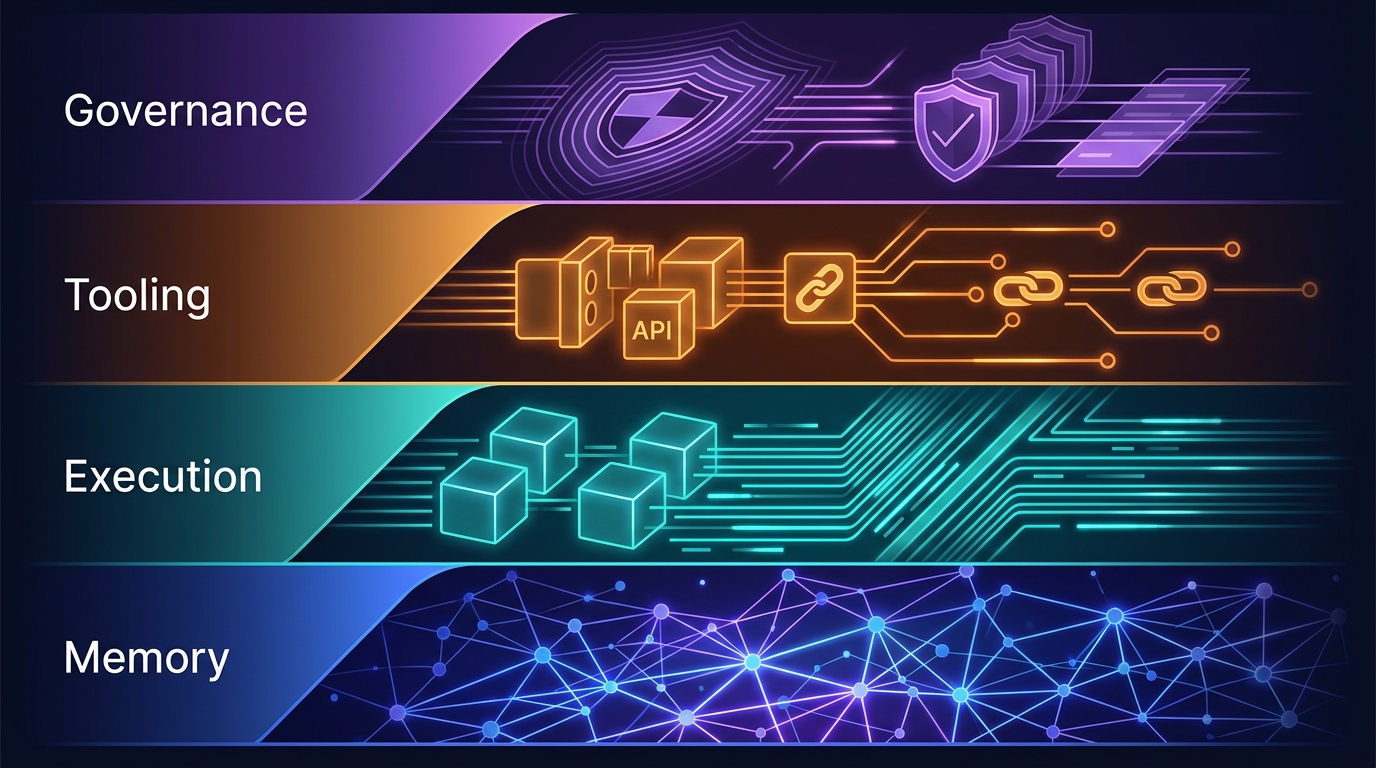

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.