Model Evals in Production: Regression Testing Prompts

If you ship prompt changes without regression tests, you're flying blind. A practical guide to building eval pipelines that catch quality regressions before users do.

Traditional software has unit tests. Prompt-based software has evals. Without them, you ship a prompt change on Tuesday, and by Friday you’ve silently broken the JSON output format for 3% of your users. Nobody knows until someone files a bug with a screenshot.

This post covers building production-quality eval infrastructure: what to test, how to automate, where to run it in your pipeline, and the anti-patterns that make evals performative rather than useful.

Why Evals Are Non-Negotiable

Three properties of LLM-based systems make evals essential:

- Changes cascade unpredictably. A 20-token tweak to the system prompt can subtly change output format, refusal rate, or structured-output compliance, in ways unit tests don’t catch.

- Models update. When OpenAI ships gpt-4o-2025-05, your prompts may behave differently overnight. Without evals, you find out through user complaints.

- Prompts are code. They should be versioned, tested, and rolled out like code. The absence of automated tests is just technical debt.

Teams that invest in evals early ship 3–5x faster on prompt/agent improvements. The teams that don’t stall around month 6 of serious AI development, unable to iterate without breaking things.

The Eval Spectrum

“Eval” means many things. The useful ones:

1. Correctness evals

Does the output solve the task? Examples:

- Classification accuracy (label matches expected)

- Code generation passes tests

- SQL query returns correct result

- Extraction accuracy against ground-truth fields

These are the closest to traditional unit tests and the most reliable.
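
A minimal sketch of a correctness eval for a classification task, assuming each case records the expected and the actual label. The `normalize` and `accuracy` helpers and the case format are illustrative and not taken from any particular framework.

```python
def normalize(label: str) -> str:
    """Lowercase and trim so 'Refund ' and 'refund' count as the same label."""
    return label.strip().lower()


def accuracy(cases: list[dict]) -> float:
    """Fraction of cases whose predicted label matches the expected one.

    Each case is assumed to look like {"expected": str, "actual": str}.
    """
    if not cases:
        raise ValueError("eval set is empty")
    hits = sum(normalize(c["expected"]) == normalize(c["actual"]) for c in cases)
    return hits / len(cases)


cases = [
    {"expected": "refund", "actual": "Refund "},
    {"expected": "billing", "actual": "shipping"},
    {"expected": "shipping", "actual": "shipping"},
    {"expected": "billing", "actual": "billing"},
]
print(accuracy(cases))  # 0.75
```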

2. Quality evals

Is the output good? Subjective properties:

- Helpfulness / relevance

- Tone / style

- Coherence / structure

Measured via human rating or LLM-as-judge (more below).

3. Format/contract evals

Does the output meet structural requirements?

- Valid JSON

- Schema compliance

- Required fields present

- Length within bounds

These are the easiest to automate and the highest-leverage early wins.
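
As a rough illustration of a format/contract eval, the sketch below checks JSON validity, required fields, field types, and length using only the standard library. The `check_contract` helper, the `REQUIRED` schema, and the 2000-character limit are assumptions made for the example; a real project might reach for a full schema validator instead.

```python
import json


def check_contract(raw: str, required: dict[str, type], max_chars: int = 2000) -> list[str]:
    """Return a list of contract violations; an empty list means the output passes."""
    if len(raw) > max_chars:
        return [f"output too long: {len(raw)} > {max_chars} chars"]
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    errors = []
    for field, expected_type in required.items():
        if field not in data:
            errors.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            errors.append(f"wrong type for {field}: expected {expected_type.__name__}")
    return errors


REQUIRED = {"intent": str, "confidence": float}  # hypothetical schema

print(check_contract('{"intent": "refund", "confidence": 0.92}', REQUIRED))  # []
print(check_contract('{"intent": "refund"}', REQUIRED))  # ['missing field: confidence']
print(check_contract("Sure! Here is the JSON: {...}", REQUIRED))  # one "invalid JSON" error
```

Returning a list of violations rather than a boolean makes CI failures easier to read, because the report says which part of the contract broke.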

4. Safety evals

Does the output avoid known failure modes?

- No PII leakage

- No hallucinated citations

- No refusal when a response is warranted

- No response when a refusal is warranted

- No toxic content

5. Retrieval evals (for RAG)

Is retrieval finding the right content?

- Recall@K

- NDCG

- Answer correctness given retrieved context

See Hybrid Search in Production.
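
For reference, the two ranking metrics above can be computed in a few lines. This is a sketch under stated assumptions: document ids are plain strings, relevance labels come from your own ground truth, and NDCG uses linear gains (some implementations use an exponential gain instead). The function names are illustrative.

```python
import math


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Share of relevant documents that appear in the top-k retrieved results."""
    if not relevant:
        raise ValueError("relevant set is empty")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def ndcg_at_k(retrieved: list[str], gains: dict[str, float], k: int) -> float:
    """NDCG@k with graded relevance; documents missing from `gains` count as 0."""
    def dcg(values: list[float]) -> float:
        # Rank 0 is discounted by log2(2) = 1, rank 1 by log2(3), and so on.
        return sum(g / math.log2(rank + 2) for rank, g in enumerate(values))

    actual = dcg([gains.get(doc, 0.0) for doc in retrieved[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0


retrieved = ["d3", "d1", "d7", "d2"]
print(recall_at_k(retrieved, {"d1", "d2", "d9"}, k=3))  # 1 of 3 relevant docs is in the top 3
print(ndcg_at_k(retrieved, {"d1": 3.0, "d2": 1.0}, k=4))
```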

6. Production evals

How does the system perform on real traffic over time?

- User feedback rates (thumbs up/down)

- Fallback frequency

- Task completion rate

- User retention

Building An Eval Dataset

The single biggest predictor of eval value is dataset quality. A 1,000-example eval set drawn from real production traffic will usually beat a 10,000-example synthetic set.

Where data comes from

- Real production traffic, sampled and labeled

- User feedback, where “good” / “bad” is provided

- Hand-curated examples, designed to test edge cases

- Synthetic generation, using LLMs to create queries

- Adversarial cases targeting known failure modes

Start with 50 hand-curated examples covering your most important cases. Expand to 500–1000 by sampling from production logs. Treat the eval set like code: versioned, reviewed, updated when the product evolves.
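
One way to treat the eval set like code is to keep it as a JSONL file in the repository and validate it on load. The sketch below assumes a hypothetical record shape (`id`, `input`, `expected`, `source`, optional `tags`); adapt the fields to whatever your product needs.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalCase:
    id: str
    input: str
    expected: str
    source: str  # e.g. "curated", "production", "adversarial"
    tags: tuple[str, ...] = ()


def load_cases(jsonl_text: str) -> list[EvalCase]:
    """Parse one JSON object per line, rejecting duplicate ids."""
    cases, seen = [], set()
    for line_no, line in enumerate(jsonl_text.splitlines(), start=1):
        if not line.strip():
            continue  # tolerate blank lines between records
        row = json.loads(line)
        if row["id"] in seen:
            raise ValueError(f"duplicate id {row['id']!r} on line {line_no}")
        seen.add(row["id"])
        cases.append(EvalCase(row["id"], row["input"], row["expected"],
                              row["source"], tuple(row.get("tags", []))))
    return cases


SAMPLE = """\
{"id": "refund-001", "input": "I want my money back", "expected": "refund", "source": "curated"}
{"id": "ship-014", "input": "where is my parcel??", "expected": "shipping", "source": "production", "tags": ["typo-heavy"]}
"""
cases = load_cases(SAMPLE)
print(len(cases), cases[1].tags)  # 2 ('typo-heavy',)
```

Stable ids make diffs reviewable in a PR, and the `source` field lets you report scores separately for curated, production, and adversarial cases.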

Labels

For correctness / format evals, ground-truth labels are feasible:

- Expected output

- Expected JSON schema

- Expected answer to a question

For quality evals, use:

- Pairwise human ratings (A vs B)

- LLM-as-judge with careful prompting

- Absolute ratings (Likert scale)

We’ll address LLM-as-judge separately because it needs care.

LLM-as-Judge: Useful But Tricky

Use another LLM (often a stronger model) to judge your outputs. Scales well, costs moderately, but has well-documented biases.

Known biases:

- Position bias. In pairwise comparison, judges prefer the first-listed option. Mitigate by randomizing order or running both orderings.

- Length bias. Longer answers are rated higher on average. Mitigate by length-normalizing or explicitly prompting against it.

- Model bias. Judges prefer outputs from similar models. GPT-4 prefers GPT-4 outputs; Claude prefers Claude. Use a different-family judge.

- Training data contamination. If the judge saw the eval data in training, scores inflate.
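
The "run both orderings" mitigation for position bias can be sketched as follows. The `Judge` signature and the two stand-in judges are hypothetical; in practice the judge would wrap a model call that returns which of the two shown answers it prefers.

```python
from typing import Callable

# A judge takes (question, first_answer, second_answer) and returns "first" or "second".
Judge = Callable[[str, str, str], str]


def debiased_preference(judge: Judge, question: str, a: str, b: str) -> str:
    """Ask the judge in both orders; only count a win if both orderings agree."""
    forward = judge(question, a, b)   # a shown first
    backward = judge(question, b, a)  # b shown first
    if forward == "first" and backward == "second":
        return "a"
    if forward == "second" and backward == "first":
        return "b"
    return "tie"  # the verdict flipped with position, so treat it as no signal


def always_first(question: str, first: str, second: str) -> str:
    """Stand-in judge with maximal position bias, used here instead of a model call."""
    return "first"


def prefers_longer(question: str, first: str, second: str) -> str:
    """Stand-in judge that ignores position (but is length-biased)."""
    return "first" if len(first) >= len(second) else "second"


print(debiased_preference(always_first, "q", "short", "a longer answer"))    # tie
print(debiased_preference(prefers_longer, "q", "short", "a longer answer"))  # b
```

Running both orderings doubles judge cost, so it is a trade-off against randomizing the order once per comparison.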

Mitigations:

- Use the strongest available model as judge (gpt-4o, claude-opus)

- Pairwise preferences > absolute ratings (humans agree on A vs B better than 1–5 ratings)

- Calibrate judge reliability on a small human-labeled sample

- Use multiple judges and ensemble
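
To calibrate a judge against a small human-labeled sample, one common approach is to report raw agreement alongside Cohen's kappa, which discounts agreement expected by chance. This is a sketch with made-up labels; the function name is illustrative, and what counts as acceptable agreement is a decision for your team.

```python
from collections import Counter


def agreement_and_kappa(human: list[str], judge: list[str]) -> tuple[float, float]:
    """Raw agreement rate and Cohen's kappa between human and judge labels."""
    if len(human) != len(judge) or not human:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(human)
    observed = sum(h == j for h, j in zip(human, judge)) / n
    human_freq, judge_freq = Counter(human), Counter(judge)
    # Agreement expected by chance if both raters labeled independently.
    expected = sum(human_freq[label] * judge_freq[label] for label in human_freq) / (n * n)
    # Kappa is undefined when chance agreement is 1; report 1.0 by convention here.
    kappa = (observed - expected) / (1 - expected) if expected < 1 else 1.0
    return observed, kappa


human = ["A", "A", "B", "B", "A", "B", "A", "B"]
judge = ["A", "A", "B", "A", "A", "B", "B", "B"]
observed, kappa = agreement_and_kappa(human, judge)
print(f"agreement={observed:.2f} kappa={kappa:.2f}")  # agreement=0.75 kappa=0.50
```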

Our default: pairwise Claude-as-judge for quality, with absolute correctness for task evals. Human review on disagreements.

Where Evals Run

Three checkpoints:

1. Pre-commit (local)

Small, fast eval on a subset (~20 examples). Runs in < 1 minute. Catches obvious breakage before the PR is opened.

2. CI (PR validation)

Full eval suite (~100–500 examples). Runs in 5–30 minutes. Required-to-merge on prompt or model changes. Automated comparison vs. main branch.

3. Production (live)

Continuous eval against real traffic. Sample 1% of requests, score them, alert on regression.

The CI eval is the most important. You want “PR is red because eval regressed” to be normal. Teams that don’t fail PRs on evals tend to merge regressions.

Pipeline Architecture

    [ Prompt change PR ]
            │
            ▼
    [ CI runs eval suite ]
            │
            ▼
    [ Compare metrics vs main branch ]
            │
       ┌────┴────┐
       ▼         ▼
    [ PASS ]  [ FAIL → block merge ]
       │
       ▼
    [ Merge to main ]
       │
       ▼
    [ Deploy to canary (5% traffic) ]
       │
       ▼
    [ Production eval on canary vs baseline ]
            │
       ┌────┴────┐
       ▼         ▼
    [ Roll out ]  [ Roll back ]

Most teams start with just the PR-check piece. Production canary evals are mature-team territory.
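
For the canary step, the roll out / roll back decision needs some rule for "canary is worse than baseline". One option, shown here purely as an assumption and not as the method used in this pipeline, is a one-sided two-proportion z-test on pass rates. The function name and the 1.645 threshold (roughly a 5% one-sided significance level) are illustrative.

```python
import math


def canary_decision(base_pass: int, base_n: int, canary_pass: int, canary_n: int,
                    z_threshold: float = 1.645) -> str:
    """One-sided two-proportion z-test: roll back only if canary is significantly worse."""
    p_base, p_canary = base_pass / base_n, canary_pass / canary_n
    pooled = (base_pass + canary_pass) / (base_n + canary_n)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / base_n + 1 / canary_n))
    if std_err == 0:
        return "roll out"  # both arms have identical all-pass or all-fail rates
    z = (p_base - p_canary) / std_err
    return "roll back" if z > z_threshold else "roll out"


# Baseline: 8700 of 10000 scored requests pass. Canary samples are much smaller.
print(canary_decision(8700, 10000, 430, 500))  # roll out (0.86 vs 0.87 is within noise)
print(canary_decision(8700, 10000, 400, 500))  # roll back (0.80 vs 0.87 is a clear drop)
```

With only 5% of traffic on the canary, small differences take a long time to become detectable, which is one reason to decide the minimum sample size before the rollout starts.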

Tools That Help

Eval frameworks:

- Promptfoo — YAML-driven, great for CI

- DeepEval — pytest-style eval, easy to integrate

- OpenAI Evals — OpenAI’s framework, a bit dated but broad

- Langfuse — LLM-observability + evals together

- LangSmith — LangChain’s eval product

- Phoenix — open source, notebook-friendly

LLM-as-judge libraries and benchmarks:

- Ragas — RAG-specific judge metrics

- MT-Bench — multi-turn dialog judging

- AlpacaEval — instruction-following benchmark

Synthetic data generation:

- Giskard — adversarial case generation

- Ragas test-set generation — RAG-specific synthetic queries

Our default stack: Promptfoo in CI, Langfuse for production sampling and labeling, homegrown glue.

Metrics That Matter

Pick a small number of primary metrics. Track many secondary ones.

Primary (must not regress):

- Task success rate (correctness)

- Format compliance (JSON valid, schema matched)

- Refusal accuracy (refuses what should be refused, answers what can be answered)

Secondary (monitor, don’t block on):

- Mean output length

- P99 latency

- Tool call rate

- Cost per request

Comparison tables:

| Metric | main | this PR | delta |
|---|---|---|---|
| Task success | 0.87 | 0.85 | -2pp ⚠️ |
| JSON valid | 1.00 | 1.00 | 0 |
| Latency P95 | 1.2s | 1.1s | -8% ✅ |
| Cost/request | $0.012 | $0.014 | +17% ⚠️ |

This is what your PR check should print. Block merge if any primary regresses beyond threshold.
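
A minimal sketch of the merge gate, assuming metrics for `main` and the PR are already available as dictionaries. The threshold values and the `refusal_accuracy` numbers are hypothetical; only the task-success and JSON-valid figures come from the table above.

```python
# Maximum allowed drop per primary metric, in absolute terms (hypothetical values).
PRIMARY_THRESHOLDS = {"task_success": 0.01, "json_valid": 0.0, "refusal_accuracy": 0.01}


def find_regressions(main: dict[str, float], pr: dict[str, float],
                     thresholds: dict[str, float]) -> list[str]:
    """Return a message for each primary metric that dropped by more than its threshold."""
    failures = []
    for metric, max_drop in thresholds.items():
        drop = main[metric] - pr[metric]
        if drop > max_drop + 1e-9:  # small tolerance for floating-point noise
            failures.append(f"{metric}: {main[metric]:.2f} -> {pr[metric]:.2f} "
                            f"(allowed drop {max_drop:.2f})")
    return failures


main_metrics = {"task_success": 0.87, "json_valid": 1.00, "refusal_accuracy": 0.95}
pr_metrics = {"task_success": 0.85, "json_valid": 1.00, "refusal_accuracy": 0.95}

failures = find_regressions(main_metrics, pr_metrics, PRIMARY_THRESHOLDS)
for line in failures:
    print("REGRESSION", line)
exit_code = 1 if failures else 0  # in CI, pass this to sys.exit() to block the merge
```

Secondary metrics such as latency and cost are deliberately left out of the thresholds, so they show up in the report without failing the check.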

Continuous Evals On Production Traffic

The strongest evals are against real traffic:

- Sample 0.5–2% of requests

- Score with eval suite (automated or LLM-as-judge)

- Trend quality over time

- Alert on drift

Why this matters: your offline eval set doesn’t drift. Your users’ queries do. A model that scored 0.87 on your eval set six months ago might be scoring 0.80 on fresh traffic because user behavior changed.
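
The sampling and drift-alert steps can be sketched with the standard library alone. Hashing the request id keeps the sample deterministic, so the same request is always in or out regardless of which server handles it. The function names, the 1% rate, and the 0.03 drop threshold are assumptions for illustration.

```python
import hashlib
from statistics import mean


def in_sample(request_id: str, rate: float = 0.01) -> bool:
    """Deterministically select about `rate` of requests by hashing the request id."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < rate


def drifted(baseline_scores: list[float], recent_scores: list[float],
            max_drop: float = 0.03) -> bool:
    """Flag drift when the recent mean score falls more than `max_drop` below baseline."""
    return mean(baseline_scores) - mean(recent_scores) > max_drop


sampled = sum(in_sample(f"req-{i}") for i in range(100_000))
print(f"sampled {sampled} of 100000 requests")
print(drifted([0.88, 0.86, 0.87], [0.81, 0.79, 0.80]))  # True: mean fell from 0.87 to 0.80
```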

Langfuse, LangSmith, and Helicone all support sampled production evals. Worth the setup cost.

Evals For Agents

Agents add a layer of complexity: the output isn’t a single response, it’s a trajectory of tool calls and reasoning.

Agent eval metrics:

- Task completion — did the agent finish?

- Step count — how many tool calls did it take?

- Tool correctness — were the right tools called with right args?

- Reasoning quality — did the intermediate steps make sense?

- Efficiency — token and time cost

Agent evals are harder because there’s no single ground-truth trajectory. Multiple paths can be correct. Tools like LangSmith and Arize Phoenix help with trajectory visualization; for scoring, task completion plus LLM-as-judge on the trajectory is the pragmatic default.
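
Because several trajectories can be correct, one workable approach is to score the set of required tool calls and ignore their order. The sketch below is an assumption about how such scoring might look; `ToolCall`, `call`, and `score_trajectory` are hypothetical names, and reasoning quality would still need a judge or human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    name: str
    args: tuple[tuple[str, str], ...]  # sorted (key, value) pairs so calls are hashable


def call(name: str, **args: str) -> ToolCall:
    """Convenience constructor that normalizes argument order."""
    return ToolCall(name, tuple(sorted(args.items())))


def score_trajectory(trajectory: list[ToolCall], required: set[ToolCall],
                     completed: bool, max_steps: int) -> dict[str, float]:
    """Order-insensitive scoring: any path that makes the required calls gets full tool credit."""
    made = set(trajectory)
    return {
        "task_completion": 1.0 if completed else 0.0,
        "tool_correctness": len(required & made) / len(required) if required else 1.0,
        "within_step_budget": 1.0 if len(trajectory) <= max_steps else 0.0,
        "step_count": float(len(trajectory)),
    }


trajectory = [
    call("search_orders", email="a@example.com"),
    call("get_order", order_id="1042"),
    call("issue_refund", order_id="1042"),
]
required = {call("get_order", order_id="1042"), call("issue_refund", order_id="1042")}
print(score_trajectory(trajectory, required, completed=True, max_steps=5))
```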

Anti-Patterns

1. Eval set leaks. Your eval examples show up in training data or in few-shot prompts, inflating scores. Curate carefully, rotate examples, check for contamination.

2. Over-fitting to evals. Teams iterate on prompts to raise eval scores, ignoring production quality. Use diverse evals; sample from real traffic.

3. Evals that never fail. If your eval always passes, either you don’t test enough or your threshold is too loose.

4. Ignoring eval cost. A 1000-example GPT-4 eval is $20–$80 per run. CI costs add up. Use smaller/cheaper judges where possible; sample intelligently.

5. No owner. Evals rot if nobody owns them. Assign an owner who curates examples, updates thresholds, investigates failures.

6. Evaluating quality without definition. “Good” is subjective. Write down what good means for your app. Put it in the eval prompt.
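
On eval cost (item 4), a back-of-the-envelope estimate is easy to script. The token counts and per-million-token prices below are placeholders, not real pricing for any provider, and judge calls are approximated as costing the same as a generation.

```python
def eval_run_cost(n_examples: int, input_tokens: int, output_tokens: int,
                  usd_per_m_input: float, usd_per_m_output: float,
                  judge_calls_per_example: int = 0) -> float:
    """Estimated USD cost of one eval run: one generation per example plus optional judge calls.

    Judge calls are approximated as using the same token counts as a generation.
    """
    per_call = (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000
    return n_examples * (1 + judge_calls_per_example) * per_call


# Placeholder numbers: 1000 examples, 1500 input and 500 output tokens per call,
# $10 and $30 per million tokens, one judge call per example.
cost = eval_run_cost(1000, 1500, 500, usd_per_m_input=10.0, usd_per_m_output=30.0,
                     judge_calls_per_example=1)
print(f"${cost:.2f} per run")  # $60.00 per run
```

Multiplying the per-run figure by the number of CI runs per week usually makes the case for a smaller pre-commit subset and a cheaper judge.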

Getting Started

If you have no evals today:

Week 1: Hand-curate 50 examples covering your top 10 user intents. Label expected behavior.

Week 2: Set up Promptfoo or DeepEval. Get a CI check running on prompt changes.

Week 3: Add a JSON-validity check and any format contracts. These are the highest-signal automated evals.

Week 4: Sample 100 production requests, grade them manually, see where your system is weakest. Add evals for those failure modes.

Month 2: Add LLM-as-judge for subjective quality. Start tracking trends.

Month 3+: Continuous production eval, drift alerts, automated regression detection.

Don’t skip the hand-curation. The fastest eval programs start from human judgment and scale from there.

Further Reading

- Tracing LLM Applications with OpenTelemetry

- Building Production AI Agents: The Complete Guide

- LoRA, QLoRA, and PEFT: The Fine-Tuning Infrastructure Guide

Related Posts

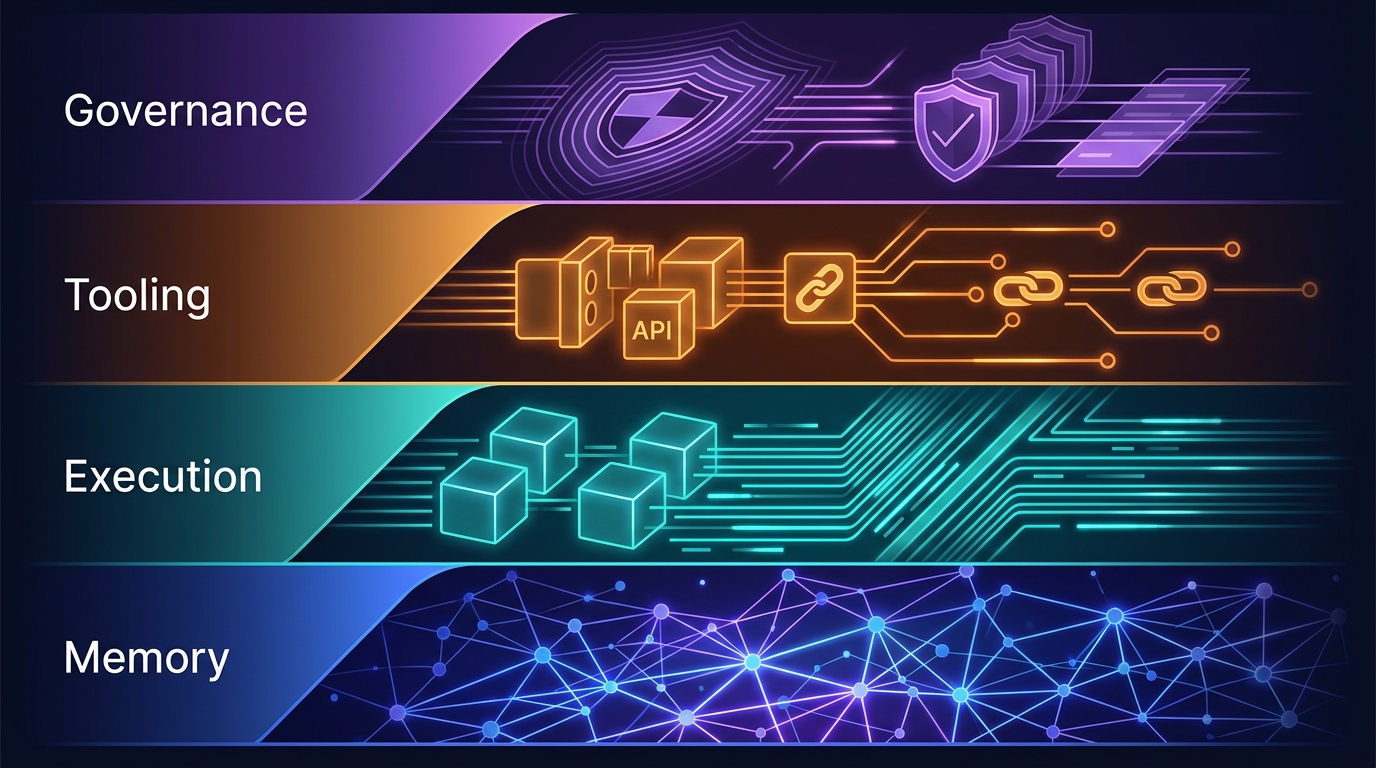

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.

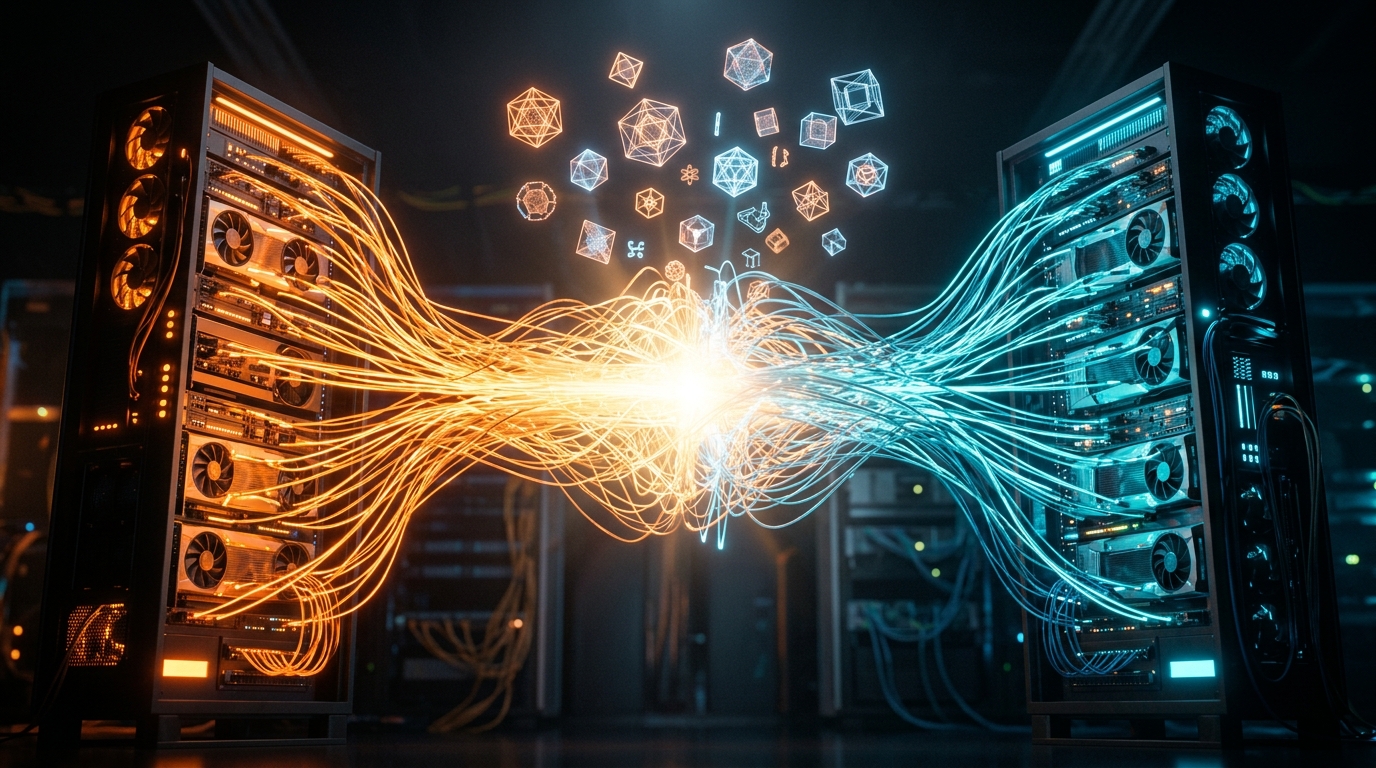

GPU Clouds: RunPod vs Lambda vs CoreWeave — June 2026

RunPod H100 at $2.69/hr. Lambda at $4.29/hr. CoreWeave at $6.16/hr — but requires 8-GPU minimums. Which GPU cloud makes sense for your agent workloads?

vLLM and SGLang Are Converging — and That Changes the Inference Stack

Both engines now share NVIDIA's FlashInfer kernels and expose identical OpenAI-compatible APIs. Meanwhile, SGLang spun out as RadixArk with $100M in seed funding, and vLLM hit 2M weekly installs. The inference layer is consolidating faster than anyone expected — here's what that means for teams building on top of it.