NVIDIA B200 vs H100: Should You Upgrade?

Blackwell's B200 is shipping at scale. Benchmarks, cost deltas, FP4 economics, and when it's worth the capex vs sticking with your H100 fleet for another year.

By late 2025, B200 is no longer a preview — it’s shipping in meaningful volume. The big AI shops (Meta, Microsoft, OpenAI, xAI, Anthropic) are all running Blackwell at scale. Neocloud fleets are online at CoreWeave, Lambda, and Crusoe. Hyperscalers list B200 in their on-demand menus. The question for most teams is no longer “can I get one?” but “should I switch my workload from H100 to B200?”

This post walks through the real-world gains, the cost delta, and the workloads where the upgrade pays back in months vs. years.

The Raw Specs

| Spec | H100 80GB SXM5 | B200 192GB | Delta |
|---|---|---|---|
| HBM memory | 80 GB HBM3 | 192 GB HBM3e | 2.4x |
| Memory bandwidth | 3.35 TB/s | 8.0 TB/s | 2.4x |
| BF16 TFLOPS (dense) | 989 | ~2,250 | 2.3x |
| FP8 TFLOPS (dense) | 1,979 | ~4,500 | 2.3x |
| FP4 TFLOPS (dense) | n/a | ~9,000 | New |
| NVLink bandwidth | 900 GB/s | 1.8 TB/s | 2x |
| TDP | 700 W | 1,000 W | +43% |
| Process | TSMC 4N | TSMC 4NP | Refinement of the same node |

The headline: B200 is roughly 2–2.5x H100 across most relevant axes. Memory bandwidth and capacity are the biggest jumps for inference workloads. FP4 is brand new.

B200 is a dual-die package: two reticle-limited Blackwell dies connected by NV-HBI, NVIDIA’s die-to-die link. That is why TDP is higher; it’s effectively two high-end chips in one module.

Inference Benchmarks: Llama-3.1-70B

Same software stack (vLLM 0.6+), same model (Llama-3.1-70B-Instruct), same workload (production chat traffic):

| GPU | Precision | Throughput (tok/s) | TTFT P50 | Notes |
|---|---|---|---|---|
| H100 80GB (TP=2) | FP8 | 6,420 | 180 ms | Our baseline |
| H100 80GB (TP=4) | FP8 | 10,200 | 150 ms | Throughput scaling |
| B200 192GB (single) | FP8 | 7,850 | 120 ms | Fits on one GPU! |
| B200 192GB (single) | FP4 | 13,200 | 95 ms | Best config |
| B200 192GB (TP=2) | FP4 | 23,100 | 75 ms | Highest throughput |

A few things to notice:

1. A single B200 beats 2x H100. For 70B inference, the memory capacity lets you run it on one GPU instead of sharding. That saves the TP communication overhead.

2. FP4 is a real unlock. At Blackwell’s native FP4 precision, throughput roughly doubles vs FP8. Quality on FP4 for 70B is ~98–99% of BF16 with careful calibration.

3. Single-B200 latency is better than 2x H100. No NVLink coordination needed; weights and KV cache all local.
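The single-GPU fit in point 1 comes down to simple weight arithmetic. Here is a minimal sketch of that check; the function names are hypothetical, and the 20% of memory reserved for KV cache and activations is an assumed placeholder, not a measured figure.

```python
def weight_gb(params_billions, bits_per_weight):
    """Approximate weight footprint in GB (1e9 params * bytes each / 1e9)."""
    return params_billions * bits_per_weight / 8


def fits_on(params_billions, bits_per_weight, gpu_mem_gb, num_gpus=1,
            reserve_fraction=0.2):
    """True if the weights fit after holding back a share of memory.

    reserve_fraction is an assumption standing in for KV cache,
    activations, and runtime overhead; tune it for your own workload.
    """
    usable_gb = gpu_mem_gb * num_gpus * (1 - reserve_fraction)
    return weight_gb(params_billions, bits_per_weight) <= usable_gb


for name, mem_gb, gpus in [("1x H100", 80, 1), ("2x H100", 80, 2), ("1x B200", 192, 1)]:
    for label, bits in [("BF16", 16), ("FP8", 8), ("FP4", 4)]:
        verdict = "fits" if fits_on(70, bits, mem_gb, gpus) else "does not fit"
        print(f"70B {label} on {name}: {verdict}")
```

Under these assumptions, 70B at FP8 needs about 70 GB for weights alone, which leaves an 80 GB H100 almost no room for KV cache, while a 192 GB B200 has headroom at FP8 and FP4.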

Training Benchmarks: Fine-Tune Llama-3-70B

On a 50M-token fine-tune run, single node:

| Config | Training throughput | Time to converge | Cost |
|---|---|---|---|
| 8x H100 | 12,400 tok/s/GPU | 72 hours | ~$1,700 (reserved) |
| 4x B200 | 28,700 tok/s/GPU | 31 hours | ~$1,900 (reserved) |

B200 is ~12% more expensive per run and finishes in under half the wall-clock time (31 vs. 72 hours). For hot research iteration, time is often more valuable than cost.
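One way to weigh that trade-off is to ask what each saved hour costs. The sketch below uses only the cost and time figures from the table; the function name is hypothetical.

```python
def dollars_per_hour_saved(cost_fast, hours_fast, cost_slow, hours_slow):
    """Extra spend on the faster config divided by the wall-clock hours it saves."""
    hours_saved = hours_slow - hours_fast
    if hours_saved <= 0:
        raise ValueError("the 'fast' config must finish sooner than the slow one")
    return (cost_fast - cost_slow) / hours_saved


premium = dollars_per_hour_saved(cost_fast=1900, hours_fast=31,
                                 cost_slow=1700, hours_slow=72)
print(f"${premium:.2f} per wall-clock hour saved")  # $4.88 per wall-clock hour saved
```

If an hour of waiting on a run is worth more than about $5 to your team, the B200 run is the cheaper option in practice.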

Cost Dynamics

Rough 2025 pricing (Q4):

- H100 80GB: $2.00–$3.00/hr reserved, $4.00–$6.00/hr on-demand

- B200 192GB: $4.50–$7.00/hr reserved, $8.00–$12.00/hr on-demand

At these rates, B200 costs roughly 2–2.3x H100 per hour. Per TFLOP, that is about even. Per token on inference, B200 wins significantly thanks to FP4 and memory capacity.

Cost per M output tokens, Llama-3-70B

- H100 TP=2 FP8: ~$0.42/M tokens at 70% utilization

- B200 single FP4: ~$0.28/M tokens at 70% utilization

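B200 is about 33% cheaper per token, a saving of $0.14 per million output tokens. At 100M tokens/day that is about $14 per day, or ~$5k per year. The saving scales linearly with volume: it takes roughly 3B tokens/day to reach $420 per day, or ~$150k per year.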
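The per-token figures follow from a simple relationship between hourly rate, throughput, and utilization. Here is a rough sketch of that calculation; the function names are hypothetical, and the $3.00/GPU-hour rate in the example is an illustrative pick from the reserved range above, so its output will not match the quoted figures exactly.

```python
def cost_per_million_tokens(rate_per_gpu_hour, num_gpus, tokens_per_second,
                            utilization):
    """Dollars per million output tokens for one replica."""
    useful_tokens_per_hour = tokens_per_second * 3600 * utilization
    return rate_per_gpu_hour * num_gpus / useful_tokens_per_hour * 1_000_000


def relative_saving(baseline_cost, new_cost):
    """Fractional reduction in cost per token versus the baseline."""
    return (baseline_cost - new_cost) / baseline_cost


def daily_saving(baseline_cost, new_cost, tokens_per_day):
    """Dollars saved per day at a given daily token volume."""
    return (baseline_cost - new_cost) * tokens_per_day / 1_000_000


# Illustrative: H100 TP=2 at an assumed $3.00/GPU-hour and 70% utilization.
h100_cost = cost_per_million_tokens(3.00, 2, 6420, 0.70)

# Using the per-token figures quoted above.
print(f"{relative_saving(0.42, 0.28):.0%}")  # 33%
```

`daily_saving` scales the per-token gap by whatever daily volume you actually serve, which is the number to plug into a payback estimate.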

Workloads Where B200 Clearly Wins

1. Large-model inference (70B+)

Memory capacity + FP4 = transformative for 70B and 405B workloads. Fewer GPUs per replica, better cost per token, lower latency.

2. Frontier training

The 2–2.5x training speedup compounds over weeks. For organizations doing continual pretraining or serious RL, B200 pays back fast.

3. Long-context inference

128K, 256K, 1M context workloads are KV-cache-bound. B200’s 192GB + FP4 KV cache lets you serve contexts that don’t fit on H100 without multi-node sharding.

4. High-throughput APIs

If you’re running a hosted inference business, cost per token is your COGS. B200’s 33% advantage per token directly widens margins.

5. MoE (Mixture of Experts) models

MoE models (Mixtral 8x22B, DeepSeek V3 and, reportedly, GPT-4) have uneven memory patterns: some experts take more load than others. B200’s memory gives headroom that H100 lacks.
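To make the long-context point (item 3) concrete, here is a rough KV-cache sizing sketch. The layer and head counts are Llama-3.1-70B's published configuration (80 layers, 8 KV heads, head dimension 128). The function name is hypothetical, the FP4 figure ignores the small overhead of per-block scale factors, and the 1M-token case is illustrative rather than something that model supports out of the box.

```python
def kv_cache_gb(context_tokens, bytes_per_value, layers=80, kv_heads=8,
                head_dim=128):
    """Approximate KV-cache size in GB for a single sequence."""
    # Factor of 2: one key vector and one value vector per layer per KV head.
    bytes_per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return context_tokens * bytes_per_token / 1e9


for label, bytes_per_value in [("FP16", 2.0), ("FP8", 1.0), ("FP4", 0.5)]:
    for context in (128 * 1024, 1024 * 1024):
        size = kv_cache_gb(context, bytes_per_value)
        print(f"{label} KV cache, {context:>9,} tokens: {size:6.1f} GB")
```

With these numbers a single 128K-token sequence needs about 43 GB of KV cache at FP16 and about 11 GB at FP4. A 1M-token sequence needs about 344 GB at FP16 but about 86 GB at FP4, which is the difference between multi-GPU sharding and fitting alongside FP4 weights on one 192 GB card.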

Workloads Where H100 Still Wins

1. Small-model serving (7B and below)

A 7B or 8B model fits comfortably on an L40S or even an L4. Putting it on a B200 wastes capacity you paid for.

2. Intermittent / bursty workloads

On-demand B200 at $10/hr dominates your bill if utilization is low. On-demand H100 at $5/hr is more forgiving.

3. You already have a depreciating H100 fleet

If you bought H100s 18 months ago and have 18 months left on the reservation, running them out is usually the right call. Upgrade at the next procurement cycle.

4. Compliance regions without B200

B200 isn’t everywhere yet. If your regulatory region requires deployment in a specific locale and B200 isn’t there, H100 is what’s available.

5. Non-transformer workloads

If you’re doing traditional ML, recommendation systems, or image models that don’t benefit from FP4 / transformer-specific optimizations, the delta shrinks.

The FP4 Question

FP4 is B200’s headline new feature. It halves memory and roughly doubles throughput vs FP8. Quality?

On our evals across Llama-3-70B, Mixtral 8x22B, and a few custom fine-tunes:

- FP4 with default / naive quantization: 92–95% of BF16 quality (a noticeable drop)

- FP4 with careful calibration (MXFP4, the FP4 format from the OCP Microscaling spec): 97–99% of BF16 quality (minimal loss)

The exact quality depends on which layers you quantize and how you calibrate. Modern tooling (llm-compressor, TensorRT-LLM’s quantizer) does this well out of the box.

Bottom line: FP4 is the right default for B200 inference, same way FP8 became the right default for H100 inference. Validate on your eval set.
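For intuition on why block-scaled FP4 holds up, here is a simplified pure-Python sketch of MXFP4-style quantization: blocks of 32 values share one power-of-two scale, and each value snaps to the nearest E2M1 magnitude. This is an illustration of the format only, not the kernel or calibration procedure used by llm-compressor or TensorRT-LLM; the function names and the tie-breaking rule are assumptions.

```python
import math

FP4_MAGNITUDES = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]  # E2M1 values
BLOCK_SIZE = 32  # elements that share one scale in MXFP4
E2M1_EMAX = 2    # largest E2M1 exponent: 6.0 == 1.5 * 2**2


def quantize_block(block):
    """Quantize one block; return (scale, dequantized values)."""
    amax = max(abs(v) for v in block)
    if amax == 0.0:
        return 1.0, [0.0] * len(block)
    # Power-of-two scale that maps the largest magnitude into [4, 8);
    # anything above 6 after scaling is clamped to 6.
    scale = 2.0 ** (math.floor(math.log2(amax)) - E2M1_EMAX)
    dequantized = []
    for v in block:
        magnitude = min(abs(v) / scale, FP4_MAGNITUDES[-1])
        # Nearest grid point; ties go to the smaller magnitude (an assumption).
        nearest = min(FP4_MAGNITUDES, key=lambda g: abs(g - magnitude))
        dequantized.append(math.copysign(nearest * scale, v))
    return scale, dequantized


def quantize(values):
    """Quantize a flat list block by block and return the dequantized list."""
    out = []
    for start in range(0, len(values), BLOCK_SIZE):
        _, deq = quantize_block(values[start:start + BLOCK_SIZE])
        out.extend(deq)
    return out


scale, deq = quantize_block([6.0, 3.0, -1.0, 0.5])
print(scale, deq)  # 1.0 [6.0, 3.0, -1.0, 0.5]
```

Because the scale is set per block by that block's largest value, one outlier only degrades the 31 values that share its block. That is part of why choosing which layers to quantize, and how to calibrate them, moves quality as much as it does.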

Software Ecosystem

As of late 2025:

- vLLM 0.7+: full B200 support including FP4

- TensorRT-LLM: mature B200 support, best FP4 quality

- TGI: B200 support shipping

- Ray, PyTorch, TRL, Axolotl, Unsloth: all working on B200

- HuggingFace PEFT / Transformers: supported

The software ecosystem caught up fast. Unlike the H100 rollout, B200 didn’t have a year of “works in principle but wait for drivers.” If you can get a B200, it runs your workload today.

The Decision Framework

- What’s your workload size? 70B+ inference or frontier training → B200 is likely worth it. 7B inference or spiky workloads → stay H100.

- What’s your utilization? If you run H100 reserved at >60% utilization, B200 math works. If you run on-demand, B200 needs >70% to beat H100 on pure cost.

- What’s your commitment horizon? B200 pays back over 12–24 months on reserved. If your horizon is <6 months, rent H100.

- What’s your supply situation? If you have H100 reserved and B200 on-demand is all you can get, the math changes against B200.

- Do you need FP4? For 405B or very-long-context workloads, FP4 might be the only thing making it affordable. That tips heavily toward B200.
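The first three questions can be sketched as a small rule-based helper. The thresholds come from the list above, but the order in which the rules are applied, the handling of mid-size models, and all names are assumptions made for illustration; the supply and FP4 questions are left out because they depend on details a few fields cannot capture.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    model_params_b: float  # model size in billions of parameters
    utilization: float     # average GPU utilization, 0.0 to 1.0
    reserved: bool         # True for reserved capacity, False for on-demand
    horizon_months: int    # how long you expect to run this workload
    spiky: bool = False    # bursty or intermittent traffic


def recommend(w):
    """Return (gpu, reason), applying the rules of thumb in an assumed order."""
    if w.horizon_months < 6:
        return "H100", "horizon under 6 months: rent H100"
    if w.spiky or w.model_params_b <= 7:
        return "H100", "small or spiky workload"
    threshold = 0.60 if w.reserved else 0.70
    if w.utilization <= threshold:
        return "H100", f"utilization at or below {threshold:.0%}"
    if w.model_params_b >= 70:
        return "B200", "70B+ at high utilization"
    # Assumption: no rule is given for mid-size models, so defer to a benchmark.
    return "either", "mid-size model: benchmark both"


print(recommend(Workload(model_params_b=70, utilization=0.8,
                         reserved=True, horizon_months=12)))
```

Treat the output as a starting point for a sizing conversation, not a verdict.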

Expected Trajectory

What we expect to see in 2026:

- B200 pricing comes down. Supply improves, competition intensifies. On-demand prices drop to $6–8/hr.

- B300 ships. Expected mid-2026, primarily a memory increase.

- AMD MI325X competes harder. AMD’s 2026 refresh closes some of the gap.

- H100 becomes the A100 of 2026. Still widely available, cheap, fine for smaller workloads. Pricing drops further.

For most teams: buy B200 for new commitments in 2026. Keep H100 for what you already have. Don’t rush to upgrade mid-reservation.

Further Reading

- NVIDIA H100 vs A100: Which GPU Should You Deploy?

- MI300X vs H100: AMD’s Bet on Inference

- FP8 and Quantization: Serving LLMs at Half the Cost

Planning a hardware refresh? Get in touch — we’ll size it against your actual workload and procurement horizon.

Related Posts

MI300X vs H100: AMD's Bet on Inference

AMD's MI300X turned from curiosity to production option during 2024–2025. Where AMD wins, where NVIDIA still leads, and how to integrate MI300X into a mixed fleet.

NVIDIA H100 vs A100: Which GPU Should You Deploy?

A practical comparison of NVIDIA's H100 and A100 for LLM training and inference — memory, FLOPS, interconnect, price per token, and the cases where the older A100 still wins.

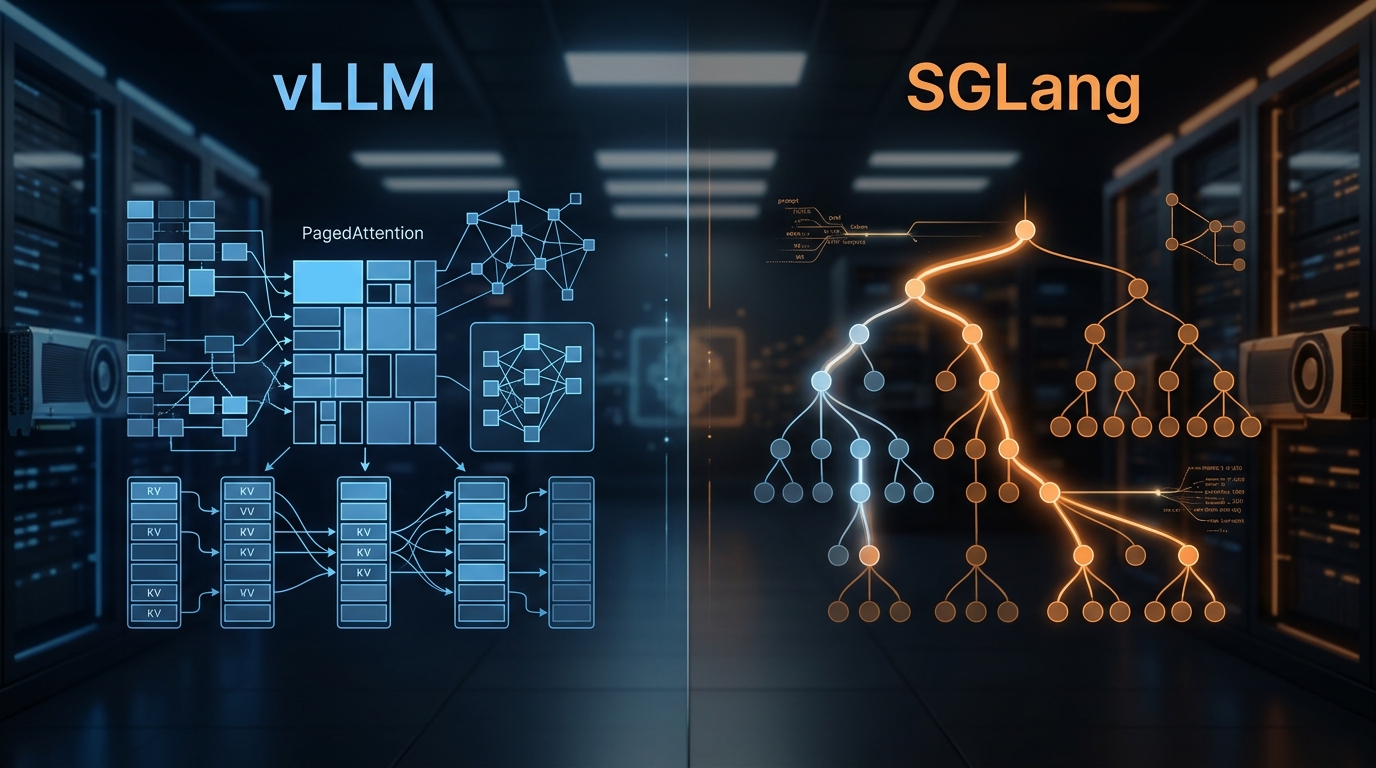

vLLM vs SGLang: Inference Engine Comparison 2026

We've deployed both at scale. Here's what the benchmarks actually show, where RadixAttention beats PagedAttention, and which engine to pick for your workload.