All Posts

- Deep Dives

The AI Agent Protocol Stack: MCP, A2A & What Comes Next

Andrius Putna • #ai #agents #infrastructure #protocols #mcp

- Deep Dives

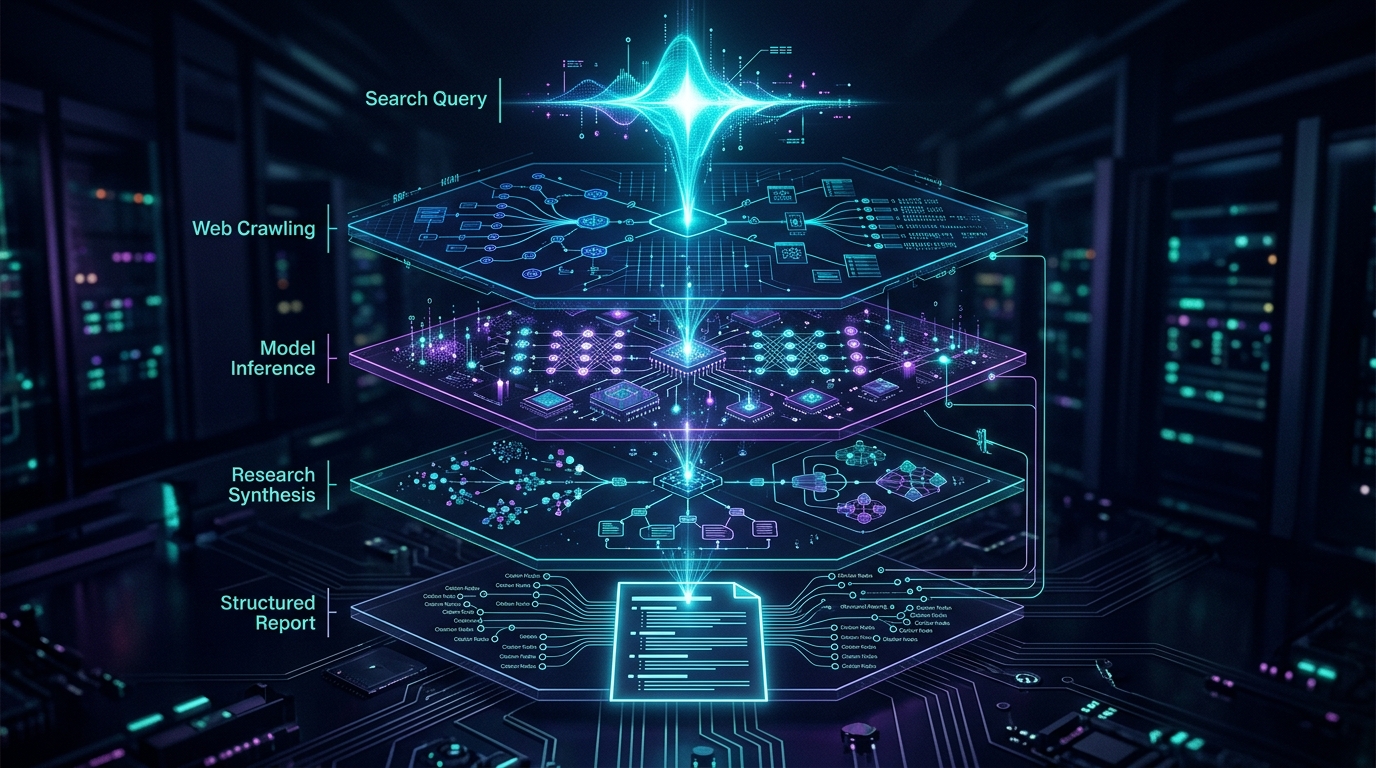

Perplexity Deep Research: From Search to Infrastructure

Balys Kriksciunas • #ai #agents #infrastructure #research #perplexity

- Comparisons

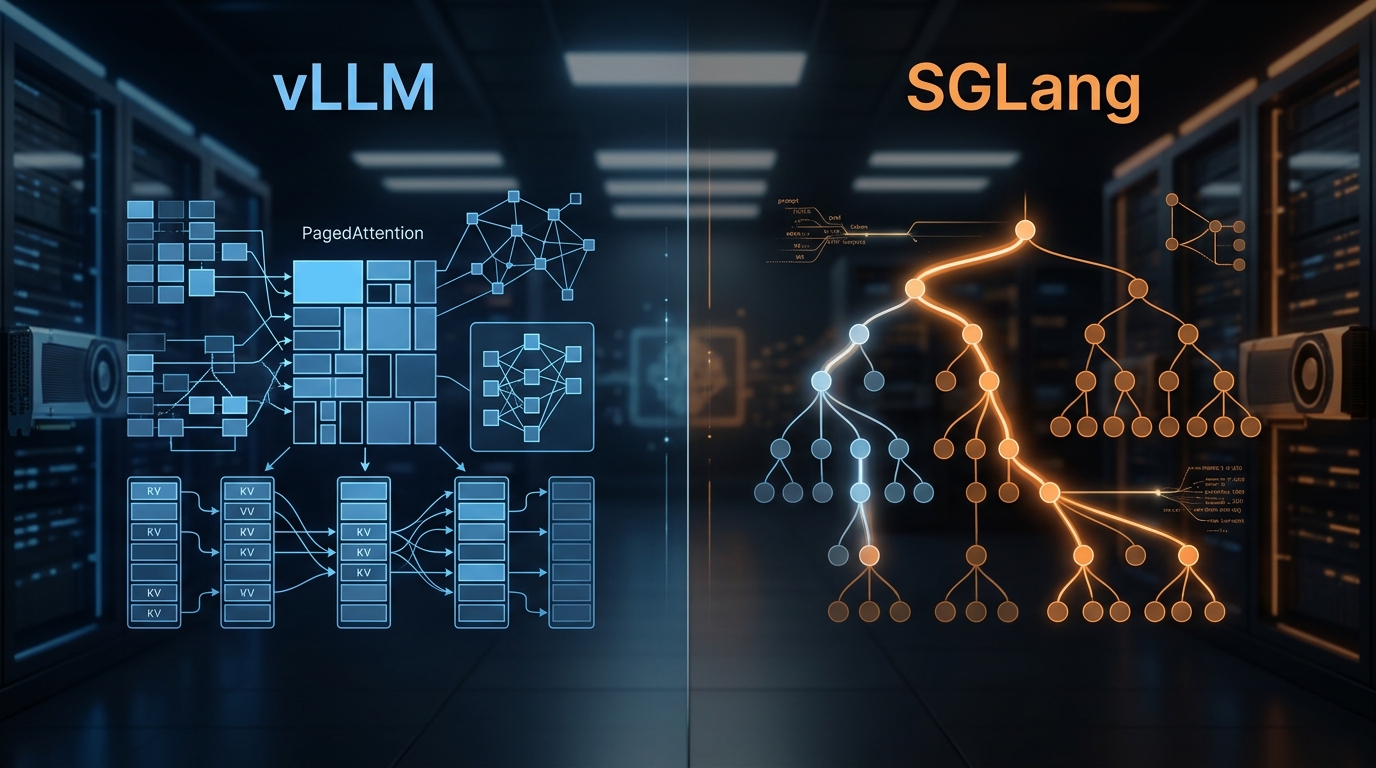

vLLM vs SGLang: Inference Engine Comparison 2026

Balys Kriksciunas • #ai #infrastructure #vllm #sglang #comparison

- Industry Analysis

LangSmith vs Langfuse vs Arize Phoenix: LLM Observability in 2026

Balys Kriksciunas • #ai #agents #observability #langsmith #langfuse

- Deep Dives

State of AI Infrastructure 2026: Mid-Year Reality Check

Balys Kriksciunas • #ai #infrastructure #state-of-industry #2026 #analysis

- Deep Dives

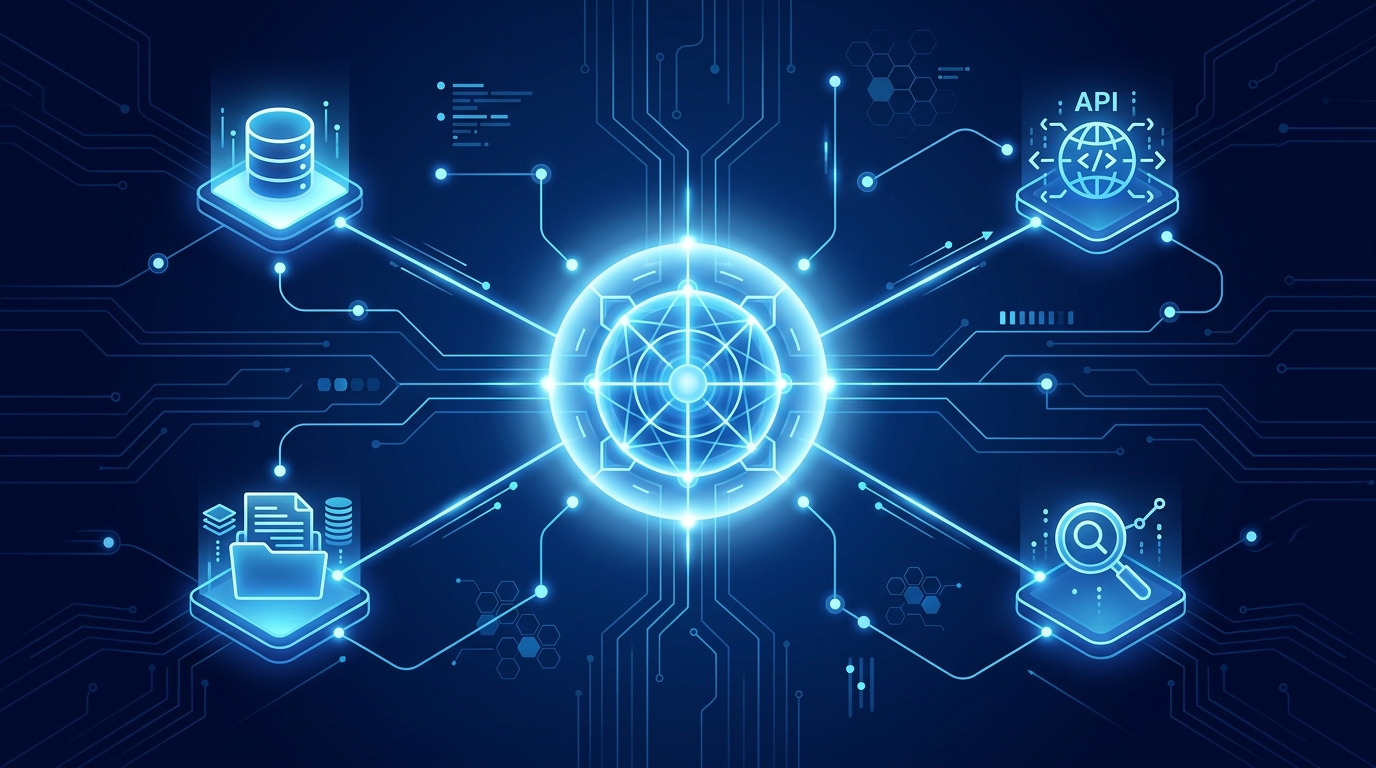

Model Context Protocol (MCP): Agent Builder's Guide

Andrius Putna • #ai #agents #mcp #deep-dive #infrastructure

- Infrastructure

Building an AI Platform Team: Roles, Tools, and Rituals

Balys Kriksciunas • #ai #infrastructure #platform-engineering #team #hiring

- Infrastructure

GPU FinOps: Reducing Your $10M AI Compute Bill

Balys Kriksciunas • #ai #infrastructure #finops #gpu #cost

- Infrastructure

Disaggregated Inference: 30–50% Throughput Wins

Balys Kriksciunas • #ai #infrastructure #inference #disaggregation #prefill

- Infrastructure

Multi-Agent Orchestration Infrastructure: Lessons from Production

Balys Kriksciunas • #ai #infrastructure #multi-agent #orchestration #crewai

- Infrastructure

Context Engineering: Storage, Retrieval, and the New Memory Stack

Balys Kriksciunas • #ai #infrastructure #context-engineering #memory #rag

- Infrastructure

Agent Infrastructure: What's Different from LLM Serving

Balys Kriksciunas • #ai #infrastructure #agents #orchestration #mcp

- Infrastructure

Inference at the Edge: Running LLMs on Consumer GPUs

Balys Kriksciunas • #ai #infrastructure #edge-ai #on-device #consumer-gpu

- Infrastructure

Running Sovereign AI: EU and India Infrastructure Playbooks

Balys Kriksciunas • #ai #infrastructure #sovereignty #eu-ai-act #india

- Infrastructure

MI300X vs H100: AMD's Bet on Inference

Balys Kriksciunas • #ai #infrastructure #gpu #amd #mi300x

- Infrastructure

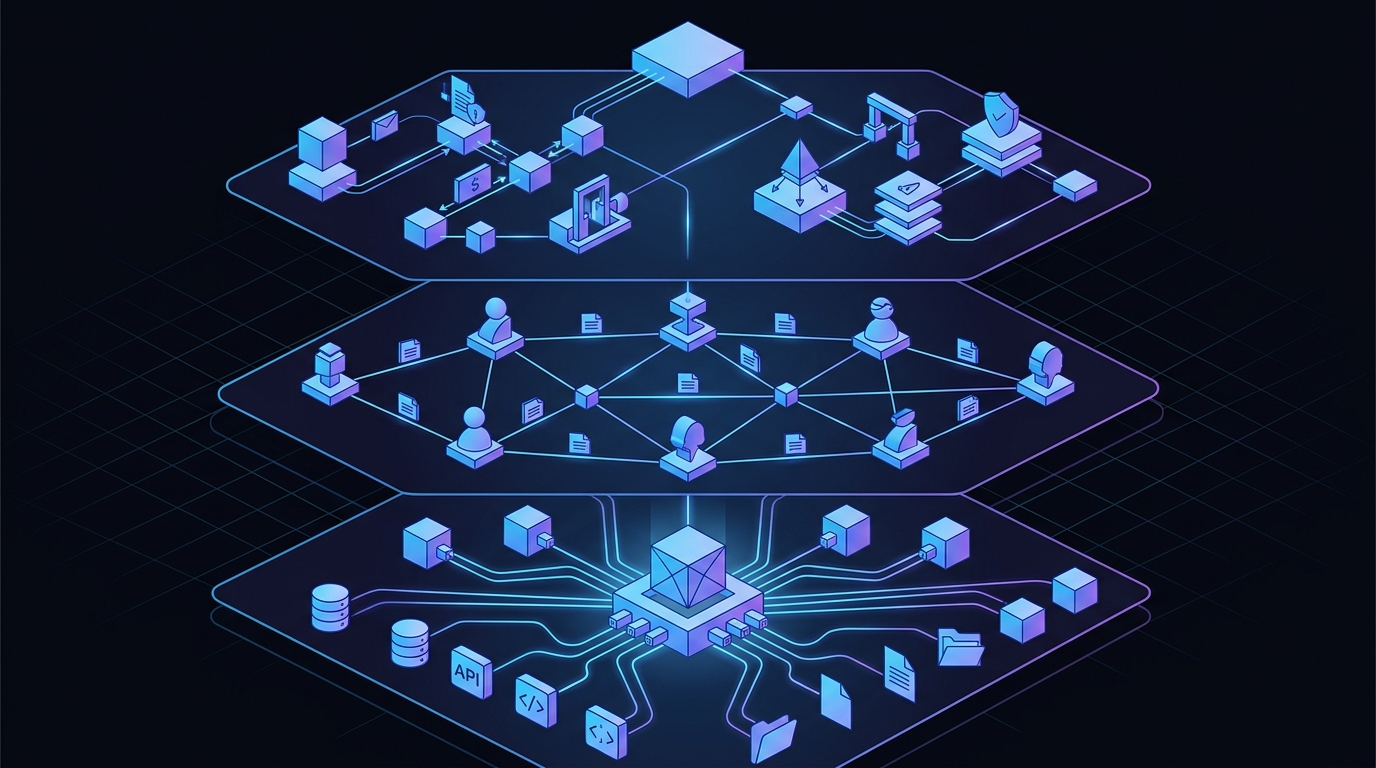

The AI Infrastructure Stack: 2026 Edition

Balys Kriksciunas • #ai #infrastructure #state-of-industry #analysis #trends

- Infrastructure

NVIDIA B200 vs H100: Should You Upgrade?

Balys Kriksciunas • #ai #infrastructure #gpu #nvidia #b200

- Infrastructure

Model Evals in Production: Regression Testing Prompts

Balys Kriksciunas • #ai #infrastructure #evals #testing #llm-quality

- Infrastructure

LoRA, QLoRA, and PEFT: The Fine-Tuning Infrastructure Guide

Balys Kriksciunas • #ai #infrastructure #lora #qlora #peft

- Infrastructure

Securing RAG Pipelines: Prompt Injection via Data

Balys Kriksciunas • #ai #infrastructure #security #rag #prompt-injection

- Infrastructure

Hybrid Search in Production: BM25 + Dense Retrieval

Balys Kriksciunas • #ai #infrastructure #hybrid-search #bm25 #rag

- Infrastructure

Ray Serve vs Kubernetes for Model Serving

Balys Kriksciunas • #ai #infrastructure #ray #ray-serve #kubernetes

- Infrastructure

AI FinOps: Tracking Token Spend Across Your Org

Balys Kriksciunas • #ai #infrastructure #finops #cost #tokens

- Infrastructure

KV Cache Optimization Techniques for LLM Serving

Balys Kriksciunas • #ai #infrastructure #kv-cache #inference #vllm

- Infrastructure

Speculative Decoding for Production LLMs

Balys Kriksciunas • #ai #infrastructure #speculative-decoding #inference #latency

- Infrastructure

LLM Gateway Patterns: LiteLLM, Portkey, and Kong AI

Balys Kriksciunas • #ai #infrastructure #llm-gateway #litellm #portkey

- Infrastructure

FP8 and Quantization: Serving LLMs at Half the Cost

Balys Kriksciunas • #ai #infrastructure #fp8 #quantization #awq

- Infrastructure

pgvector at Scale: When Postgres Is Enough

Balys Kriksciunas • #ai #infrastructure #pgvector #postgres #vector-database

- Infrastructure

vLLM vs TGI vs Triton: LLM Inference Server Benchmarks

Balys Kriksciunas • #ai #infrastructure #vllm #tgi #triton

- Infrastructure

Multi-Cloud GPU Strategy: Avoiding Lock-in and Saving 40%

Balys Kriksciunas • #ai #infrastructure #multi-cloud #gpu #lockin

- Infrastructure

The State of AI Infrastructure 2025

Balys Kriksciunas • #ai #infrastructure #state-of-industry #2025 #analysis

- Guides

Building Production AI Agents: The Complete Guide from Prototype to Deployment

Andrius Putna • #ai #agents #production #deployment #infrastructure

- Infrastructure

Self-Hosting Llama 3: A Production Deployment Guide

Balys Kriksciunas • #ai #infrastructure #llama #self-hosting #inference

- Infrastructure

Tracing LLM Applications with OpenTelemetry

Balys Kriksciunas • #ai #infrastructure #observability #opentelemetry #tracing

- Infrastructure

GPU Cloud Comparison: CoreWeave, Runpod, Lambda

Balys Kriksciunas • #ai #infrastructure #gpu-cloud #coreweave #lambda

- Infrastructure

PagedAttention Explained: How vLLM Achieves 24x Throughput

Balys Kriksciunas • #ai #infrastructure #vllm #paged-attention #kv-cache

- Infrastructure

Continuous Batching for LLMs: Why It Matters

Balys Kriksciunas • #ai #infrastructure #inference #batching #vllm

- Infrastructure

Kubernetes for GPU Workloads: A Primer

Balys Kriksciunas • #ai #infrastructure #kubernetes #gpu #mig

- Infrastructure

Choosing a Vector Database in 2024: A Practical Guide

Balys Kriksciunas • #ai #infrastructure #vector-database #pinecone #qdrant

- Infrastructure

vLLM: The Open-Source Inference Engine Changing LLM Serving

Balys Kriksciunas • #ai #infrastructure #inference #vllm #llm-serving

- Infrastructure

NVIDIA H100 vs A100: Which GPU Should You Deploy?

Balys Kriksciunas • #ai #infrastructure #gpu #nvidia #h100

- Infrastructure

The AI Infrastructure Stack Explained (2024)

Balys Kriksciunas • #ai #infrastructure #llm #gpu #inference