Model Context Protocol (MCP): Agent Builder's Guide

How MCP standardizes tool and context access for AI agents with code examples, architecture patterns, production lessons, and security.

The AI agent interoperability conversation used to sound like a standards war. MCP versus A2A versus open agent protocols — which one wins?

That framing was wrong from the start. The protocols never competed for the same slot in the stack. They solve different problems at different layers, and the ecosystem has now converged on a clear architecture.

We’ve been deploying agents across customer-facing RAG pipelines, internal workflow orchestrators, and multi-vendor agent meshes for the past 18 months. Through that work, the layering became obvious long before the press caught up. Here’s where things actually stand, what the data says, and how to design your stack around it.

The agent interoperability picture in 2026 breaks cleanly into three layers:

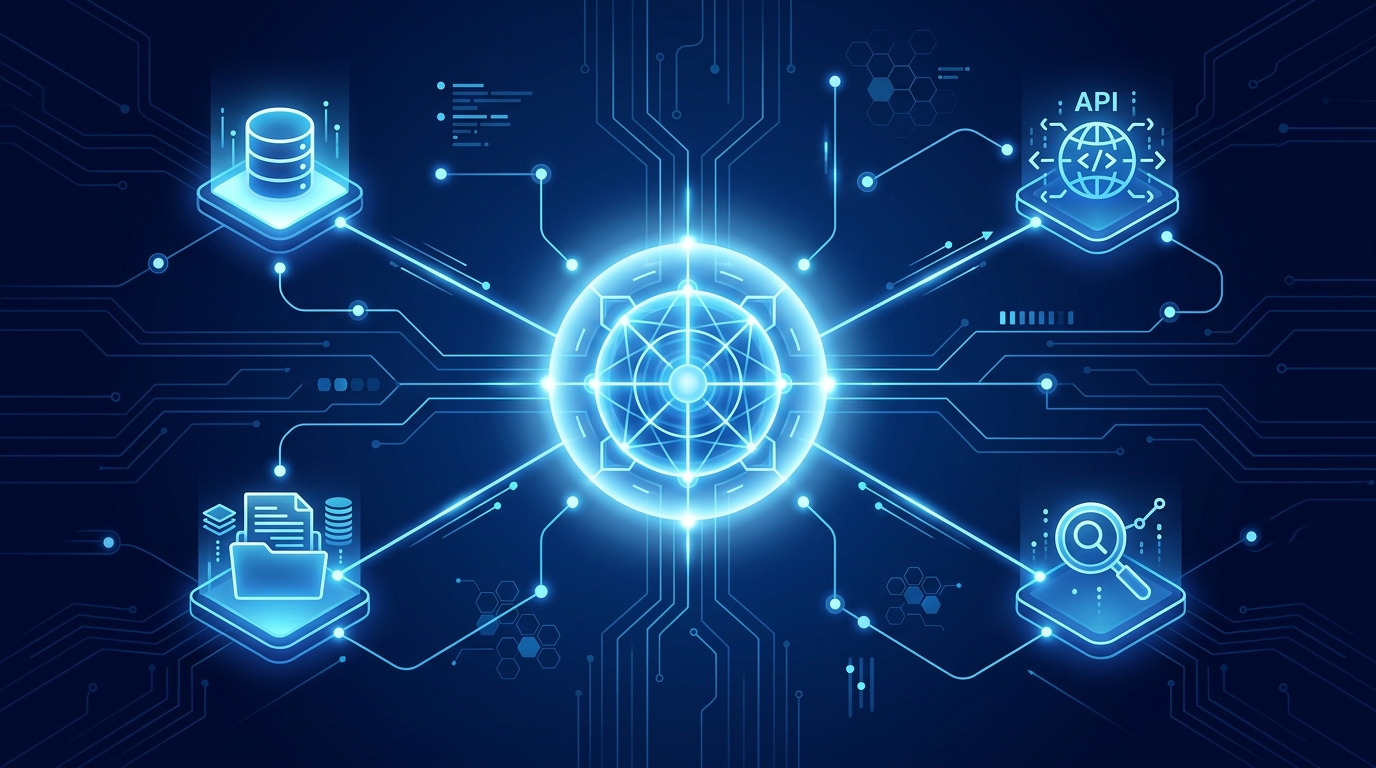

Layer 1 — Tool Integration (Vertical). How a single agent connects to external capabilities: databases, APIs, file systems, code execution. This is MCP’s domain.

Layer 2 — Agent Coordination (Horizontal). How agents discover each other, negotiate tasks, and exchange results across organizational or framework boundaries. This is A2A and ACP’s domain.

Layer 3 — Identity and Trust (Cross-Cutting). How agents prove who they are and verify counterparties. OAuth 2.1, Agent Cards, and W3C DIDs span all layers.

The most common mistake we see is treating MCP and A2A as alternatives. They are not. An agent that only implements MCP has no standardized way to talk to another agent. An agent that only implements A2A has no standardized way to reach its tools. You need both.

Anthropic launched the Model Context Protocol in November 2024. Fifteen months later, it hit 97 million monthly SDK downloads and 18,000+ community-indexed servers across the Glama.ai and MCP.so registries. Every major framework (LangChain, LangGraph, AutoGen, CrewAI, OpenAI Agents SDK) consumes MCP servers as tool sources. Our Model Context Protocol Guide covers the full implementation details.

But the more interesting story is not the download count. It’s the transport convergence.

The 2025-03-26 MCP spec introduced Streamable HTTP, which collapsed the old dual-endpoint architecture (HTTP+SSE) into a single /mcp endpoint using standard HTTP POST/GET. Servers respond immediately for fast operations, upgrade to SSE streaming for long-running tasks, and can operate statelessly. This means MCP servers can now sit behind any standard load balancer — round-robin, no sticky sessions required.

That last detail matters more than you’d expect. Before Streamable HTTP, scaling MCP required session-affinity infrastructure. After it, an MCP server is just another Kubernetes pod or serverless function. The deployment model became identical to scaling a REST API.
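To make the single-endpoint model concrete, here is a minimal Python sketch of the client side. It builds one JSON-RPC `tools/call` POST for a `/mcp` endpoint and handles both reply shapes: a plain JSON body or an SSE stream. The server URL, the `check_stock` tool, the `sku` argument, and the helper names are hypothetical, no network call is made, and session handling and authentication are left out.

```python
import json

MCP_ENDPOINT = "https://tools.example.com/mcp"  # hypothetical server URL


def build_tool_call(request_id, tool_name, arguments):
    """Return (headers, body) for a JSON-RPC 2.0 tools/call POST."""
    headers = {
        "Content-Type": "application/json",
        # The client advertises both reply shapes; the server picks one.
        "Accept": "application/json, text/event-stream",
    }
    body = json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })
    return headers, body


def parse_reply(content_type, raw_body):
    """Return the list of JSON-RPC messages carried by a reply."""
    if content_type.startswith("application/json"):
        # Fast operation: one JSON-RPC message in the response body.
        return [json.loads(raw_body)]
    if content_type.startswith("text/event-stream"):
        # Long-running operation: events are separated by a blank line,
        # and payload lines start with "data:".
        messages = []
        for event in raw_body.replace("\r\n", "\n").split("\n\n"):
            data_lines = [
                line[5:].lstrip(" ")
                for line in event.split("\n")
                if line.startswith("data:")
            ]
            if data_lines:
                messages.append(json.loads("\n".join(data_lines)))
        return messages
    raise ValueError(f"unexpected content type: {content_type}")
```

Because each request carries everything the server needs, any replica behind the load balancer can answer it, which is what makes round-robin routing workable.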

The security story matured in parallel:

MCP was donated to the Linux Foundation’s Agentic AI Foundation (AAIF) with membership spanning Anthropic, OpenAI, Google, Microsoft, AWS, Block, Cloudflare, and Bloomberg. That governance convergence is what killed the “MCP vs. the alternatives” narrative. When the biggest competitors all sit on the same foundation board, you’re not looking at a winner-take-all market. You’re looking at infrastructure.

If MCP answers “how does my agent use a tool?”, A2A answers “how does my agent delegate work to your agent?”

Google’s Agent2Agent (A2A) Protocol has grown to 150+ participating organizations and was formally donated to the Linux Foundation in June 2025 (Linux Foundation Press Release, April 2026). The SDKs cover Python, TypeScript, Go, Java, and .NET, and the GitHub repository sits at 21.9k stars.

The core abstraction is the Agent Card: a JSON document that serves as a digital business card for any agent. It declares capabilities, skills, supported input/output modes, and security schemes without exposing internal reasoning, plans, or tool implementations. This opacity is deliberate: A2A agents collaborate based on declared capabilities, not by inspecting each other’s internals.

    {
      "name": "Inventory Agent",
      "description": "Manages product inventory and stock levels",
      "url": "https://agent.example.com/",
      "version": "1.0.0",
      "capabilities": {
        "streaming": true,
        "pushNotifications": false
      },
      "skills": [
        {
          "id": "check_stock",
          "name": "Check Stock Levels",
          "description": "Query current inventory for any product"
        }
      ],
      "securitySchemes": {
        "oauth2": {
          "type": "oauth2",
          "flows": {
            "clientCredentials": {
              "tokenUrl": "https://auth.example.com/token"
            }
          }
        }
      }
    }
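As a rough illustration of collaborating on declared capabilities only, the following Python sketch reads a trimmed copy of the card above and decides whether a caller could delegate a given skill. The helper names and the rule "delegate only if the skill is declared and we support one of the declared security scheme types" are assumptions made for this example, not part of the A2A specification.

```python
import json

# Trimmed copy of the Agent Card shown above.
CARD_JSON = """
{
  "name": "Inventory Agent",
  "url": "https://agent.example.com/",
  "skills": [
    {"id": "check_stock", "name": "Check Stock Levels"}
  ],
  "securitySchemes": {
    "oauth2": {"type": "oauth2"}
  }
}
"""


def find_skill(card, skill_id):
    """Return the declared skill with this id, or None if it is not declared."""
    for skill in card.get("skills", []):
        if skill.get("id") == skill_id:
            return skill
    return None


def can_delegate(card, skill_id, supported_scheme_types):
    """Hypothetical policy: the skill must be declared, and the caller must
    support at least one security scheme type the card lists."""
    if find_skill(card, skill_id) is None:
        return False
    declared = {s.get("type") for s in card.get("securitySchemes", {}).values()}
    return bool(declared & set(supported_scheme_types))


card = json.loads(CARD_JSON)
print(can_delegate(card, "check_stock", {"oauth2"}))       # declared skill, scheme supported
print(can_delegate(card, "update_inventory", {"oauth2"}))  # skill not declared
```

The caller never learns how `check_stock` is implemented; the card is the entire contract.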

Discovery works through three strategies:

/.well-known/agent-card.json

Tasks follow a defined lifecycle: submitted → working → completed/failed/canceled, with support for input_required and auth_required intermediate states. Terminal states are final: subsequent work requires a new task within the same contextId.
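The task lifecycle can be sketched as a small state machine. The state names below follow the text; the exact transition table, the `Task` class, and the `follow_up` helper are assumptions for illustration rather than the normative A2A definition.

```python
from enum import Enum


class TaskState(Enum):
    SUBMITTED = "submitted"
    WORKING = "working"
    INPUT_REQUIRED = "input_required"
    AUTH_REQUIRED = "auth_required"
    COMPLETED = "completed"
    FAILED = "failed"
    CANCELED = "canceled"


TERMINAL_STATES = frozenset(
    {TaskState.COMPLETED, TaskState.FAILED, TaskState.CANCELED}
)

# Assumed transition table: intermediate states return to WORKING once the
# missing input or authorization arrives.
ALLOWED_TRANSITIONS = {
    TaskState.SUBMITTED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.WORKING: {
        TaskState.INPUT_REQUIRED, TaskState.AUTH_REQUIRED,
        TaskState.COMPLETED, TaskState.FAILED, TaskState.CANCELED,
    },
    TaskState.INPUT_REQUIRED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
    TaskState.AUTH_REQUIRED: {TaskState.WORKING, TaskState.FAILED, TaskState.CANCELED},
}


class Task:
    def __init__(self, task_id, context_id):
        self.task_id = task_id
        self.context_id = context_id
        self.state = TaskState.SUBMITTED

    def transition(self, new_state):
        """Move to new_state, rejecting any move out of a terminal state."""
        if self.state in TERMINAL_STATES:
            raise ValueError(f"task {self.task_id} is final ({self.state.value})")
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise ValueError(f"{self.state.value} -> {new_state.value} is not allowed")
        self.state = new_state

    def follow_up(self, new_task_id):
        """Further work after a terminal state is a new task in the same context."""
        if self.state not in TERMINAL_STATES:
            raise ValueError("follow-up tasks are only created after a terminal state")
        return Task(new_task_id, self.context_id)
```

Modeling terminal states as immutable keeps retries explicit: a client that wants more work creates a fresh task and reuses the `context_id` rather than reviving a finished one.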

As we covered in our April 2026 platform updates, A2A is now shipping in production across Google Cloud with Apigee acting as an API-to-agent bridge — meaning you don’t need to manage Agent Card endpoints yourself.

Not every protocol fits neatly into the split between tool integration and agent coordination. IBM’s Agent Communication Protocol (ACP) deserves mention because it pursues a different architectural philosophy: REST-native, broker-mediated, optimized for multi-framework enterprise environments where teams want existing HTTP toolchain compatibility rather than a new JSON-RPC transport.

ACP’s strength is pragmatically unglamorous: it works with whatever load balancers, API gateways, and monitoring stacks you already run. For teams migrating from legacy service meshes into agent architectures, that friction reduction is real.

The commerce-specific protocols, namely the Agentic Commerce Protocol (from OpenAI and Stripe; also abbreviated ACP, but unrelated to IBM’s Agent Communication Protocol) and Google’s Universal Commerce Protocol (UCP), handle payment, fulfillment, and transaction semantics. MCP and A2A deliberately do not cover these concerns. If your agents process financial transactions, you’ll need a commerce-layer protocol regardless of your tool and coordination choices.

Here’s the practical decision framework we use when scoping new agent deployments:

Use MCP via Streamable HTTP. Wrap each external system as a separate MCP server (database, API, file system). Run them as discrete processes with their own credentials. Put them behind your existing load balancer. No sticky sessions.

Use MCP for tool access and evaluate A2A for coordination if agents span different trust domains or teams. For tightly-coupled intra-service workflows, your framework’s native orchestration (LangGraph StateGraph, OpenAI Agents handoffs) may be sufficient — you don’t need A2A to delegate work between services in the same deployment.

Implement both MCP and A2A. MCP gives each agent standardized tool access. A2A enables cross-boundary delegation via Agent Cards and the task lifecycle. The combination is increasingly becoming the expected baseline for enterprise agent deployments (Digital Applied, March 2026).

Add a commerce-layer protocol (the commerce ACP or UCP) as a third protocol. Don’t attempt to encode payment semantics into A2A artifacts or MCP tools. Use the commerce-layer protocol for what it was designed for.
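The four recommendations above can be read as a small decision function. The sketch below is one interpretation: the `Deployment` fields (number of agents, whether they cross a trust boundary, whether they handle payments) are assumed inputs chosen for this example, and the returned labels are informal.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    agent_count: int               # how many cooperating agents
    crosses_trust_boundary: bool   # different teams, orgs, or trust domains
    handles_payments: bool         # agents process financial transactions


def recommend_protocols(deployment):
    """Return the protocol stack suggested by the framework above."""
    # Every agent gets standardized tool access.
    stack = ["MCP over Streamable HTTP"]
    if deployment.agent_count > 1:
        if deployment.crosses_trust_boundary:
            stack.append("A2A")
        else:
            # Tightly coupled agents can rely on the framework's own handoffs.
            stack.append("native framework orchestration (evaluate A2A)")
    if deployment.handles_payments:
        stack.append("commerce protocol (ACP or UCP)")
    return stack


print(recommend_protocols(Deployment(1, False, False)))
print(recommend_protocols(Deployment(3, True, True)))
```

The point of writing it down is that tool access, coordination, and commerce are independent questions: answering one never removes the need to answer the others.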

Not everything is settled. Three issues will dominate the protocol conversation for the rest of 2026:

Fine-grained authorization. OAuth 2.1 gets you identity and coarse access control, but agents need capability-level permissions — “this agent can call check_stock but not update_inventory.” Standardized capability tokens are in active discussion across AAIF working groups.

Cross-protocol interoperability. An MCP server today cannot be directly consumed as an A2A skill, even though the concepts map closely. Reported joint MCP/A2A specification work (Q3 2026) aims to bridge this gap. Until it ships, teams maintain dual implementations — one MCP server and one A2A endpoint — for each service.

Decentralized identity via W3C DIDs. ANP (Agent Network Protocol) and several other emerging projects use W3C Decentralized Identifiers as the trust anchor instead of OAuth. The technical vision is compelling for trustless agent marketplaces, but the infrastructure (DID resolution, verifiable credential issuance) isn’t production-ready for most enterprise teams.
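Until standardized capability tokens exist, the fine-grained authorization gap described above is usually closed with a local allowlist. The sketch below shows the idea under stated assumptions: `CapabilityGrant`, `call_tool`, the agent id, and the handler registry are hypothetical names for this example, and nothing here reflects a published token format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CapabilityGrant:
    """Hypothetical per-agent grant: which tools this agent may invoke."""
    agent_id: str
    allowed_tools: frozenset


def call_tool(grant, agent_id, tool_name, handlers, **arguments):
    """Run a tool handler only if the grant covers this agent and tool."""
    if grant.agent_id != agent_id:
        raise PermissionError(f"grant was not issued to {agent_id}")
    if tool_name not in grant.allowed_tools:
        raise PermissionError(f"{agent_id} may not call {tool_name}")
    return handlers[tool_name](**arguments)


# Toy handlers standing in for real tool implementations.
handlers = {
    "check_stock": lambda sku: {"sku": sku, "in_stock": 12},
    "update_inventory": lambda sku, delta: {"sku": sku, "delta": delta},
}

grant = CapabilityGrant("reporting-agent", frozenset({"check_stock"}))
print(call_tool(grant, "reporting-agent", "check_stock", handlers, sku="A-100"))
```

OAuth 2.1 would establish that the caller really is `reporting-agent`; the grant then answers the separate question of which tools that identity may touch.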

The era of protocol wars is over. MCP won the tool layer, A2A is winning the coordination layer, and the Linux Foundation now houses governance for all three major protocols under one roof. That’s not accidental — it’s the ecosystem recognizing that agents need a standardized stack, just like web services needed HTTP, TLS, and OAuth to mature.

For teams building in 2026: stop debating which protocol. Start shipping agents that use the right protocol at the right layer. Your architecture should look like this:

    ┌──────────────────────────────────────────┐
    │         Agent (your application)         │
    ├──────────────────┬───────────────────────┤
    │ MCP Client       │ A2A Client            │
    │ (tool access)    │ (agent coord.)        │
    ├──────────────────┼───────────────────────┤
    │ MCP Server       │ Agent Card + A2A      │
    │ (your tools)     │ Endpoint              │
    ├──────────────────┴───────────────────────┤
    │    OAuth 2.1 + Agent Card = Identity     │
    └──────────────────────────────────────────┘

The top row of that diagram is your business logic. Everything beneath it is infrastructure you shouldn’t have to implement yourself.

For more on the framework-level implications, see our Complete Guide to AI Agent Frameworks 2026 and the OpenAI Agents SDK deep dive. Both cover how these major frameworks consume the protocol layer.

How MCP standardizes tool and context access for AI agents with code examples, architecture patterns, production lessons, and security.

Serving agents isn't the same as serving LLMs. Different concurrency models, different observability, different failure modes. A tour of what production agent infrastructure actually looks like.

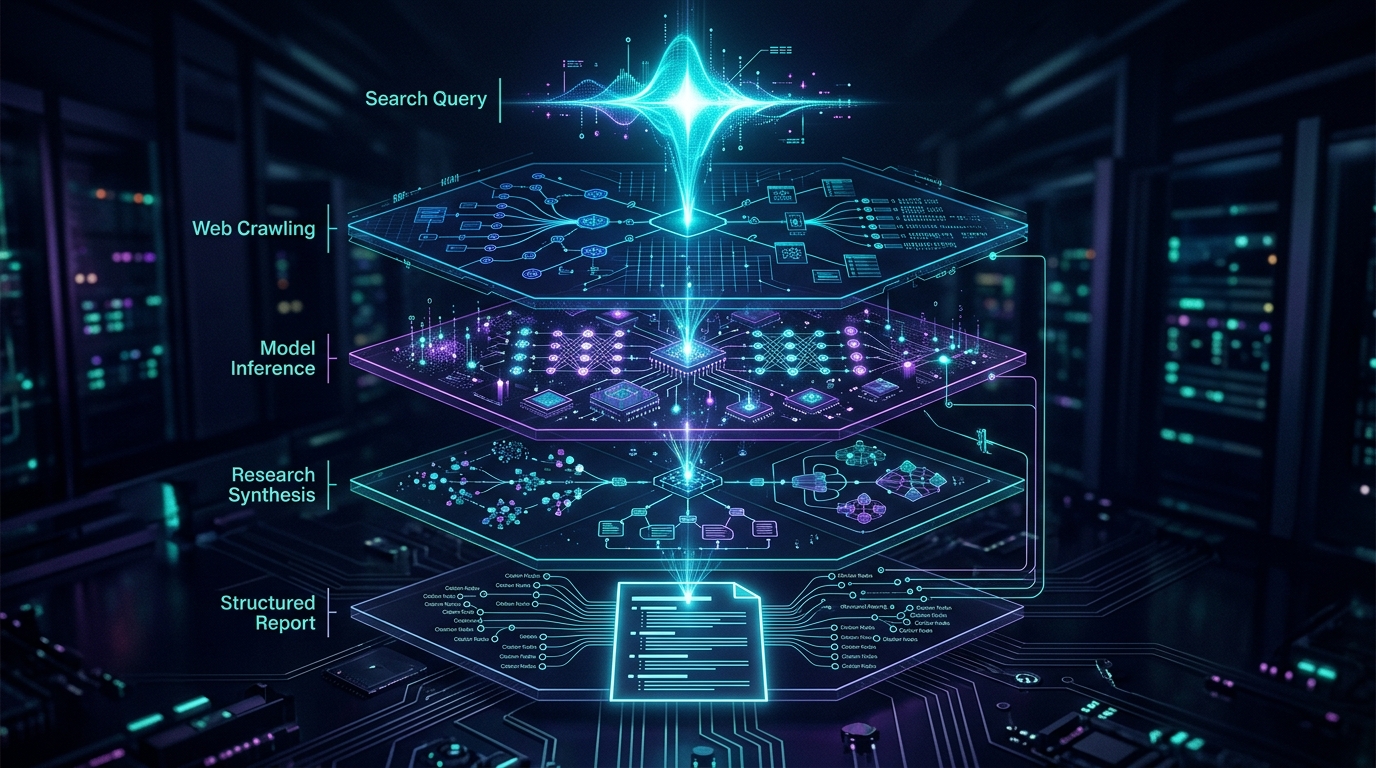

Perplexity's Deep Research API and Comet browser turn ad-hoc search into programmable research infrastructure. Here's what changed and why it matters.