Agent Infrastructure: What's Different from LLM Serving

Serving agents isn't the same as serving LLMs. Different concurrency models, different observability, different failure modes. A tour of what production agent infrastructure actually looks like.

Most teams build their first agent on top of an LLM inference stack and expect it to work like a chatbot. Then they hit weird production problems: requests that run for minutes; tool calls that fail silently; agents that generate 200 LLM calls per user request; observability stacks that can’t make sense of the traces.

Agent infrastructure is a different animal. Not “LLM serving plus some extra.” Its concurrency model, failure modes, observability needs, and cost patterns are distinct. This post maps what’s actually different and what production agent platforms look like in 2026.

The Core Difference: Stateful Long-Running Work

An LLM call is a request/response: prompt in, completion out, done in seconds. Resources are returned when the HTTP request completes.

An agent is a long-running stateful task:

- Minutes or hours of execution

- Many LLM calls, many tool calls, many retries within one logical task

- State that must survive process restarts, network blips, worker failures

- Branches, parallel tasks, merges

- Human-in-the-loop approval points where the agent may wait indefinitely

This shift — from stateless call to stateful task — changes almost every piece of the supporting infrastructure.

The Five Concrete Differences

1. Concurrency model

LLM serving: threads or async contexts per request, all in one process. Lifetime in seconds.

Agents: durable workflows orchestrated by a workflow engine. State persisted between steps. Can survive process restarts. Can wait on external events.

Implementation options:

- Temporal / DBOS / Inngest / Restate — workflow engines; state is their whole value proposition

- Celery + database — cheap, familiar, missing some workflow semantics

- LangGraph — can persist state via checkpoints; lightweight workflow-ish

- Custom — often ends up re-implementing Temporal, poorly

Our default: Temporal for non-trivial agent systems. LangGraph’s checkpointer for simpler ones. Don’t hand-roll this.
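To make "state persisted between steps" concrete, here is a minimal sketch of checkpointed execution using only the standard library. It is not how Temporal or LangGraph are implemented; `CheckpointedTask`, the `checkpoints` table, and the step functions are hypothetical names chosen for illustration, and SQLite stands in for whatever durable store you use.

```python
import json
import sqlite3
from typing import Callable

# Hypothetical step type: takes the current state dict, returns the updated one.
Step = Callable[[dict], dict]


class CheckpointedTask:
    """Runs steps in order and persists state after each completed step."""

    def __init__(self, db: sqlite3.Connection, task_id: str):
        self.db = db
        self.task_id = task_id
        db.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            "task_id TEXT PRIMARY KEY, next_step INTEGER, state TEXT)"
        )

    def _load(self) -> tuple[int, dict]:
        row = self.db.execute(
            "SELECT next_step, state FROM checkpoints WHERE task_id = ?",
            (self.task_id,),
        ).fetchone()
        return (row[0], json.loads(row[1])) if row else (0, {})

    def _save(self, next_step: int, state: dict) -> None:
        self.db.execute(
            "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
            (self.task_id, next_step, json.dumps(state)),
        )
        self.db.commit()

    def run(self, steps: list[Step]) -> dict:
        index, state = self._load()  # resume where the previous process stopped
        while index < len(steps):
            state = steps[index](state)
            index += 1
            self._save(index, state)  # completed steps are never re-run
        return state
```

If the process dies mid-step, a new `CheckpointedTask` with the same `task_id` picks up at the first unfinished step. Note that this gives at-least-once execution for the step that was in flight, so steps with side effects still need to be idempotent. Retries, timers, and waiting on external events are the parts a real workflow engine adds on top.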

2. LLM call amplification

A single user request to an agent typically produces 5–50 LLM calls internally. A bad loop can produce 500.

Implications:

- “QPS” and “TPS” become meaningless at the user-request level. What matters is the distribution of LLM calls per user request.

- Cost per user-request has much wider variance than cost per LLM call.

- A single bursty agent can hammer a backend harder than many small users combined.

- Rate limits must apply at user-session level, not just per-call.

Set aggressive caps on LLM calls per user session, and alert on outliers.
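A per-session cap can be very small in code. The sketch below assumes an in-memory counter and hypothetical names (`SessionBudget`, `BudgetExceeded`); in production the counter would more likely live in the LLM gateway or a shared store.

```python
from collections import defaultdict


class BudgetExceeded(RuntimeError):
    """Raised when a session tries to exceed its LLM call cap."""


class SessionBudget:
    def __init__(self, max_calls_per_session: int):
        self.max_calls = max_calls_per_session
        self.calls: dict[str, int] = defaultdict(int)

    def charge(self, session_id: str) -> int:
        """Record one LLM call, or raise if the session is already at its cap."""
        if self.calls[session_id] >= self.max_calls:
            raise BudgetExceeded(
                f"session {session_id} reached {self.max_calls} LLM calls"
            )
        self.calls[session_id] += 1
        return self.calls[session_id]

    def outliers(self, threshold: int) -> list[str]:
        """Sessions whose call count is at or above an alerting threshold."""
        return sorted(s for s, n in self.calls.items() if n >= threshold)
```

Call `charge(session_id)` before every LLM request, and feed `outliers(...)` into whatever alerting you already have. The alert threshold should sit well below the hard cap so you hear about a bad loop before users hit the wall.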

3. Observability shape

LLM tracing: one span per API call, maybe with retries.

Agent tracing: a trace tree that can branch, merge, parallelize, pause for human input, resume days later. Complete traces can have thousands of spans.

Tools that handle this well: Langfuse, LangSmith, Arize Phoenix, Datadog APM with careful OTel config. See Tracing LLM Applications with OpenTelemetry.

What you want to visualize:

- The trajectory (steps taken)

- Token and dollar cost per step

- Which tools were called and their outputs

- Where the agent stalled, backtracked, or failed

- Decision branches (why this path and not that)

Standard HTTP-service observability doesn’t capture this well. Invest in a proper LLM observability stack.
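As a rough illustration of the shape involved, the sketch below models a trace as a tree of spans and rolls cost and failures up from the leaves. The `Span` class and its fields are assumptions made for this example, not the data model of Langfuse, LangSmith, or OpenTelemetry.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    kind: str  # "llm", "tool", or "agent" (illustrative categories)
    tokens: int = 0
    cost_usd: float = 0.0
    status: str = "ok"
    children: list["Span"] = field(default_factory=list)

    def total_cost(self) -> float:
        """Cost of this span plus everything beneath it."""
        return self.cost_usd + sum(c.total_cost() for c in self.children)

    def total_tokens(self) -> int:
        return self.tokens + sum(c.total_tokens() for c in self.children)

    def failures(self, path: tuple[str, ...] = ()) -> list[str]:
        """Slash-joined paths of every span whose status is not 'ok'."""
        here = path + (self.name,)
        found = ["/".join(here)] if self.status != "ok" else []
        for child in self.children:
            found.extend(child.failures(here))
        return found
```

The point of the tree is that questions like "what did this task cost" and "where did it fail" become recursive rollups rather than log searches, which is what flat per-request tracing cannot give you.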

4. Failure modes

LLM serving failures: 429, 500, timeout, bad output. Easy to classify.

Agent failures include:

- Runaway loops — agent keeps calling tools, never terminates

- Tool dependency outages — downstream tool fails; agent gets confused or retries forever

- Context drift — agent builds up stale context, makes worse decisions over time

- Hallucinated states — agent “thinks” it completed an action it didn’t

- Cross-turn confusion — resumed agent forgets what it was doing

- Partial failures — agent’s intermediate outputs are wrong but it doesn’t notice

These failures call for agent-specific guardrails:

- Max turns / max LLM calls per task

- Max tool calls per turn

- Periodic state validation

- Dead-man’s-switch timeouts per session

- Human escalation thresholds
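Three of the guardrails above (max turns, max tool calls per turn, and a session timeout) fit in one small driver loop. This is a sketch under assumptions: `step` is a hypothetical callable returning `(done, tool_calls_made, result)`, and the default limits are placeholders rather than recommendations.

```python
import time
from dataclasses import dataclass


class GuardrailTripped(RuntimeError):
    """Raised when the agent exceeds a configured limit."""


@dataclass
class Guardrails:
    max_turns: int = 20
    max_tool_calls_per_turn: int = 5
    session_timeout_s: float = 600.0


def run_agent(step, limits: Guardrails, clock=time.monotonic):
    """Drive `step(turn)` until it reports completion or a guardrail trips."""
    deadline = clock() + limits.session_timeout_s
    for turn in range(limits.max_turns):
        if clock() > deadline:
            raise GuardrailTripped("session timeout")
        done, tool_calls, result = step(turn)
        if tool_calls > limits.max_tool_calls_per_turn:
            raise GuardrailTripped(f"turn {turn}: {tool_calls} tool calls")
        if done:
            return result
    raise GuardrailTripped(f"no result after {limits.max_turns} turns")
```

Whatever catches `GuardrailTripped` is a natural place to hang the human escalation threshold: a tripped guardrail is a signal to hand off, not to retry silently.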

5. Cost patterns

LLM serving: cost = tokens × price, predictable per call.

Agent serving: cost = (LLM calls per task) × (tokens per call) × price. Two sources of variance. Agent cost per user-request can vary 100x.

Attribution becomes harder:

- One logical user task spans many LLM calls

- Tool calls have their own costs (API fees, compute fees)

- Long-running agents accrue cost while idle (persistent state, running workers)

Finance wants “$X per completed user task.” You need to track full trajectory cost.
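One way to get to that number is to tag every cost-bearing event with a task ID and aggregate. The sketch below assumes a hypothetical `CostEvent` record; the field names and the `"idle"` category are illustrative.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostEvent:
    task_id: str
    kind: str  # "llm", "tool", or "idle" (illustrative categories)
    cost_usd: float


def cost_per_task(events: list[CostEvent]) -> dict[str, float]:
    """Full trajectory cost for each task, across LLM, tool, and idle spend."""
    totals: dict[str, float] = defaultdict(float)
    for event in events:
        totals[event.task_id] += event.cost_usd
    return dict(totals)


def cost_per_completed_task(events: list[CostEvent], completed: set[str]) -> float:
    """Total spend, including failed tasks, divided by completed tasks."""
    if not completed:
        raise ValueError("no completed tasks to attribute cost to")
    return sum(e.cost_usd for e in events) / len(completed)
```

Dividing total spend by completed tasks, rather than averaging only the successful ones, is a deliberate choice in this sketch: failed and abandoned trajectories still cost money, and hiding them makes the per-task figure look better than the bill.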

Reference Architecture

What a production-grade agent infrastructure looks like:

    [ Users / API ]
           │
           ▼
    [ API gateway ]
           │
           ▼
    [ Agent orchestrator (Temporal / LangGraph / Inngest) ]
           │               │                  │
           ▼               ▼                  ▼
      [ Workers ]   [ State store ]   [ Event bus      ]
           │        [ (Postgres)  ]   [ (Kafka, Redis) ]
           │
           ├── [ LLM gateway (LiteLLM / Portkey) ]
           │     └── (OpenAI, Anthropic, Google, self-hosted)
           │
           ├── [ Tool registry (MCP) ]
           │     ├── Internal tools
           │     ├── External APIs
           │     └── Sandboxed code execution
           │
           ├── [ Context store ]
           │     ├── Vector DB
           │     ├── Graph DB
           │     └── Working memory cache
           │
           └── [ Observability ]
                 ├── Langfuse (LLM traces)
                 ├── Datadog (operational metrics)
                 └── Eval pipeline

The orchestrator is the main new component vs a plain LLM stack. Everything else is an evolution of LLM infrastructure.

Workflow Engine Choice

Temporal

Most comprehensive. Handles durable state, retries, human-in-the-loop, signals, long-running tasks. Polyglot (Go, Java, Python, TypeScript, .NET SDKs).

Operating it: either self-host (Kubernetes + Postgres + Elasticsearch) or use Temporal Cloud.

Our default for non-trivial agent systems.

Inngest

Simpler than Temporal. Function-based. Good DX for Node/TS teams. Managed SaaS with self-host option.

Great for teams wanting less operational burden.

LangGraph (+ checkpointer)

Built into the agent framework itself. State persists via a checkpointer (Postgres, Redis, etc.). Not a full workflow engine: it has no built-in schedules or Temporal-style signals, and its retry support is narrower than a dedicated engine's. Still good enough for many cases.

Best for when the whole stack is LangGraph-based and workflow needs are moderate.

DBOS

Durable workflow on top of Postgres. Uses Postgres transactions for exactly-once guarantees. Lightweight. Emerging.

Custom

Rolling your own is where we’ve seen the most pain. Don’t.

Tool Registry: MCP Won

The Model Context Protocol (MCP) is now the default standard for agent tool registries. Every major framework (LangGraph, AutoGen, CrewAI, Anthropic’s Agent SDK, OpenAI Agents) speaks MCP.

Production MCP setup:

- A central MCP registry (internal) that serves tool descriptions and endpoints

- Tool endpoints authenticated per agent identity

- Rate-limited and audit-logged per tool invocation

- Versioned tool schemas

- Mock/dev variants for testing

Tools should follow the principle of least privilege. Not every agent gets every tool. See Securing RAG Pipelines: Prompt Injection via Data for the security implications.
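The access-control side of this list can be sketched without any MCP machinery. The code below is not an MCP implementation; `ToolRegistry` and its methods are hypothetical, and it only illustrates per-agent grants, versioned tools, and an audit log around each invocation.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolRegistry:
    # (tool name, schema version) -> callable implementing the tool
    tools: dict[tuple[str, str], Callable[..., object]] = field(default_factory=dict)
    # agent identity -> names of the tools it may call
    grants: dict[str, set[str]] = field(default_factory=dict)
    # (agent, tool, version, allowed) for every attempted invocation
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def register(self, name: str, version: str, fn: Callable[..., object]) -> None:
        self.tools[(name, version)] = fn

    def grant(self, agent_id: str, tool_name: str) -> None:
        self.grants.setdefault(agent_id, set()).add(tool_name)

    def invoke(self, agent_id: str, name: str, version: str, **kwargs):
        allowed = name in self.grants.get(agent_id, set())
        self.audit_log.append((agent_id, name, version, allowed))  # log denials too
        if not allowed:
            raise PermissionError(f"{agent_id} may not call {name}")
        return self.tools[(name, version)](**kwargs)
```

Denied calls are logged as well as allowed ones. An agent repeatedly asking for a tool it was never granted is often the first visible sign of a prompt injection or a confused plan.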

Context Store

Agent memory is more than a vector DB. Production agents work with:

- Working memory — the current turn’s context (in-memory, ephemeral)

- Short-term memory — recent turns in the session (Redis, local DB)

- Long-term memory — durable facts about the user or task (Postgres, graph DB)

- Semantic memory — retrievable knowledge (vector DB)

- Episodic memory — past task trajectories for learning (data warehouse)

The context store is the combination of all these. See Context Engineering.

Human-In-the-Loop

Production agents often need human approval for high-stakes actions. The infrastructure pieces:

- Pause/resume workflow support — the orchestrator has to handle indefinite waits cleanly (Temporal signals, Inngest events).

- Notification channels — when the agent is waiting, notify the right human (Slack, email, in-product notification).

- Approval UI — humans see what the agent proposes and approve or modify.

- Context snapshot — the approver sees the reasoning, not just the conclusion.

- Audit trail — who approved what, when, with what rationale.

Underrated: the approval UI is where your whole system’s user experience lives. Invest in it.
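The data side of an approval gate is simple; the hard part is the orchestrator's ability to wait. The sketch below covers only the data side, with hypothetical names (`ApprovalGate`, `ApprovalRequest`). In a real system the pending request would be persisted and the resume would arrive as a Temporal signal or an Inngest event.

```python
import time
from dataclasses import dataclass, field


@dataclass
class ApprovalRequest:
    task_id: str
    proposed_action: str
    reasoning: str  # context snapshot shown to the approver
    status: str = "pending"  # pending -> approved | rejected
    audit: list[dict] = field(default_factory=list)


class ApprovalGate:
    def __init__(self) -> None:
        self.requests: dict[str, ApprovalRequest] = {}

    def request(self, task_id: str, action: str, reasoning: str) -> ApprovalRequest:
        """Record a pending approval; the workflow pauses after this call."""
        req = ApprovalRequest(task_id, action, reasoning)
        self.requests[task_id] = req
        return req

    def decide(self, task_id: str, approver: str, approved: bool,
               rationale: str) -> ApprovalRequest:
        """Apply a human decision exactly once and append it to the audit trail."""
        req = self.requests[task_id]
        if req.status != "pending":
            raise ValueError(f"{task_id} was already {req.status}")
        req.status = "approved" if approved else "rejected"
        req.audit.append({
            "approver": approver,
            "approved": approved,
            "rationale": rationale,
            "at": time.time(),
        })
        return req
```

Rejecting a second decision on the same request matters more than it looks: approval links get clicked twice, and two approvers can race on the same notification.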

Autoscaling Agents

Not the same as autoscaling inference servers.

Inference servers: scale on request rate or queue depth. Stateless, lightweight horizontal scaling.

Agent workers need to handle long-running tasks. Scaling up is easy; scaling down requires draining, that is, waiting for in-flight tasks to complete before terminating.
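Draining boils down to one rule: stop picking up new work, but never abandon the task in hand. A minimal sketch, assuming tasks are plain callables on a `queue.Queue` and that something external (for example a shutdown hook) calls the hypothetical `drain()` method:

```python
import queue
import threading


class DrainableWorker:
    def __init__(self, tasks: "queue.Queue"):
        self.tasks = tasks
        self.draining = threading.Event()
        self.completed: list[object] = []

    def run(self) -> None:
        """Process tasks until drain() is called, finishing any in-flight task."""
        while not self.draining.is_set():
            try:
                task = self.tasks.get(timeout=0.05)
            except queue.Empty:
                continue
            # Once started, a task always runs to completion before we re-check.
            self.completed.append(task())

    def drain(self) -> None:
        """Signal the worker to stop taking new tasks. Safe to call from any thread."""
        self.draining.set()
```

Tasks left on the queue stay there for another worker to claim. With agent tasks that run for minutes, the termination grace period has to be sized to the longest task, unless the orchestrator can checkpoint and hand the task off mid-flight.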

Patterns:

- Worker pool per task type — each pool scales independently. One pool for “research agents,” another for “code agents.”

- Priority queues — premium customers’ tasks get priority scheduling.

- Concurrency caps per customer — prevent one customer from monopolizing workers.

- Spot-friendly agents — workflows that tolerate worker preemption; when a worker dies, Temporal (or equivalent) resumes the task on another worker.
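Priority queues and per-customer concurrency caps combine naturally in one scheduler. The sketch below is an in-memory illustration with hypothetical names (`TaskScheduler`, `next_task`); a lower `priority` number means more urgent, and a real deployment would usually lean on the orchestrator's task queues instead.

```python
import heapq
import itertools
from collections import defaultdict
from typing import Optional


class TaskScheduler:
    def __init__(self, max_concurrent_per_customer: int):
        self.cap = max_concurrent_per_customer
        # Heap entries: (priority, sequence number, customer, task_id)
        self.queue: list[tuple[int, int, str, str]] = []
        self.running: dict[str, int] = defaultdict(int)
        self._seq = itertools.count()  # keeps FIFO order within a priority

    def submit(self, customer: str, task_id: str, priority: int = 10) -> None:
        heapq.heappush(self.queue, (priority, next(self._seq), customer, task_id))

    def next_task(self) -> Optional[str]:
        """Pop the most urgent task whose customer is under its concurrency cap."""
        skipped, chosen = [], None
        while self.queue:
            item = heapq.heappop(self.queue)
            if self.running[item[2]] < self.cap:
                chosen = item
                break
            skipped.append(item)  # customer is at cap; try the next task
        for item in skipped:
            heapq.heappush(self.queue, item)
        if chosen is None:
            return None
        self.running[chosen[2]] += 1
        return chosen[3]

    def finish(self, customer: str) -> None:
        """Release one concurrency slot for the customer."""
        self.running[customer] -= 1
```

The cap is what stops a premium customer's priority from turning into a monopoly: once they are at their limit, lower-priority work from other customers gets the next free worker.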

Evals for Agents

Standard LLM evals (correctness on a benchmark) don’t fully capture agent behavior. You also care about:

- Task completion rate — did the agent finish what it was asked?

- Step efficiency — how many steps did it take vs. optimal?

- Tool use correctness — were the right tools called?

- Recovery from errors — when a tool failed, did the agent recover?

- Budget adherence — did it stay within token/cost/time limits?

See Model Evals in Production: Regression Testing Prompts for the eval pipeline side.

For agents, we also run “trajectory replay” evals — run the agent against known scenarios, compare the full trajectory to a gold-standard path.
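A trajectory comparison can start very simply. The sketch below treats a trajectory as a list of tool names and scores it against a gold path using `difflib.SequenceMatcher` from the standard library. The function name and the two metrics are assumptions for illustration, not the author's actual replay harness.

```python
from difflib import SequenceMatcher


def trajectory_metrics(actual: list[str], gold: list[str]) -> dict[str, float]:
    """Score an agent's tool-call sequence against a gold-standard path."""
    matcher = SequenceMatcher(a=gold, b=actual, autojunk=False)
    # Total number of gold steps that appear, in order, in the actual run.
    matched = sum(block.size for block in matcher.get_matching_blocks())
    return {
        # 1.0 means the agent took no more steps than the gold path.
        "step_efficiency": min(1.0, len(gold) / len(actual)) if actual else 0.0,
        # Fraction of gold steps the agent actually performed, in order.
        "gold_coverage": matched / len(gold) if gold else 1.0,
    }
```

For example, an agent that runs `search, search, read, answer` against a gold path of `search, read, answer` covers every gold step but scores 0.75 on step efficiency. Exact-match comparison is usually too strict for agents, since several paths can be equally valid; ordered overlap like this is a more forgiving starting point.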

The Bill

A realistic production agent system costs more than a chat app:

- Orchestrator (Temporal) infrastructure

- Worker pool (CPU) + occasional GPU for local model calls

- LLM gateway + heavy LLM use

- Vector DB + graph DB + hot cache

- Observability tools (Langfuse + Datadog)

- Eval compute

For an internal-tool agent serving a few hundred users: $2,000–$8,000/month. For a consumer agent at scale: $50k+/month minimum. For an enterprise SaaS platform serving many tenants: $200k+/month.

Budget accordingly. And invest in AI FinOps from day one — agent costs will surprise you.

The Short Version

- Agent infrastructure is different from LLM serving because of state, duration, and amplification.

- Workflow engine is the single most important new piece. Use Temporal or similar; don’t roll your own.

- LLM gateway becomes more important, not less — caps, rate limits, cost attribution.

- Context store is a multi-system concept, not just a vector DB.

- Evals need to handle trajectories, not just single responses.

- Cost scales differently. Budget and attribute carefully.

Most teams building their first real agent system underestimate this for a quarter. Then they rebuild. Plan for the shape early.

Further Reading

- Building Production AI Agents: The Complete Guide

- Multi-Agent Orchestration Infrastructure: Lessons from Production

- Context Engineering: Storage, Retrieval, and the New Memory Stack

Building production agent infrastructure? Reach out — we’ve built agent platforms from MVP to multi-tenant scale.

Related Posts

The Agent Durability Gap: Why Production Agents Fail (and How to Fix It)

Agents that work in demos fail in production. The gap isn't model quality — it's infrastructure. Durability, checkpointing, and recovery are the missing layers.

Multi-Agent Orchestration Infrastructure: Lessons from Production

Multi-agent systems are harder to operate than single agents by roughly the order of their agent count. Hard-won lessons from production deployments — coordination, state, cost, and failure handling.

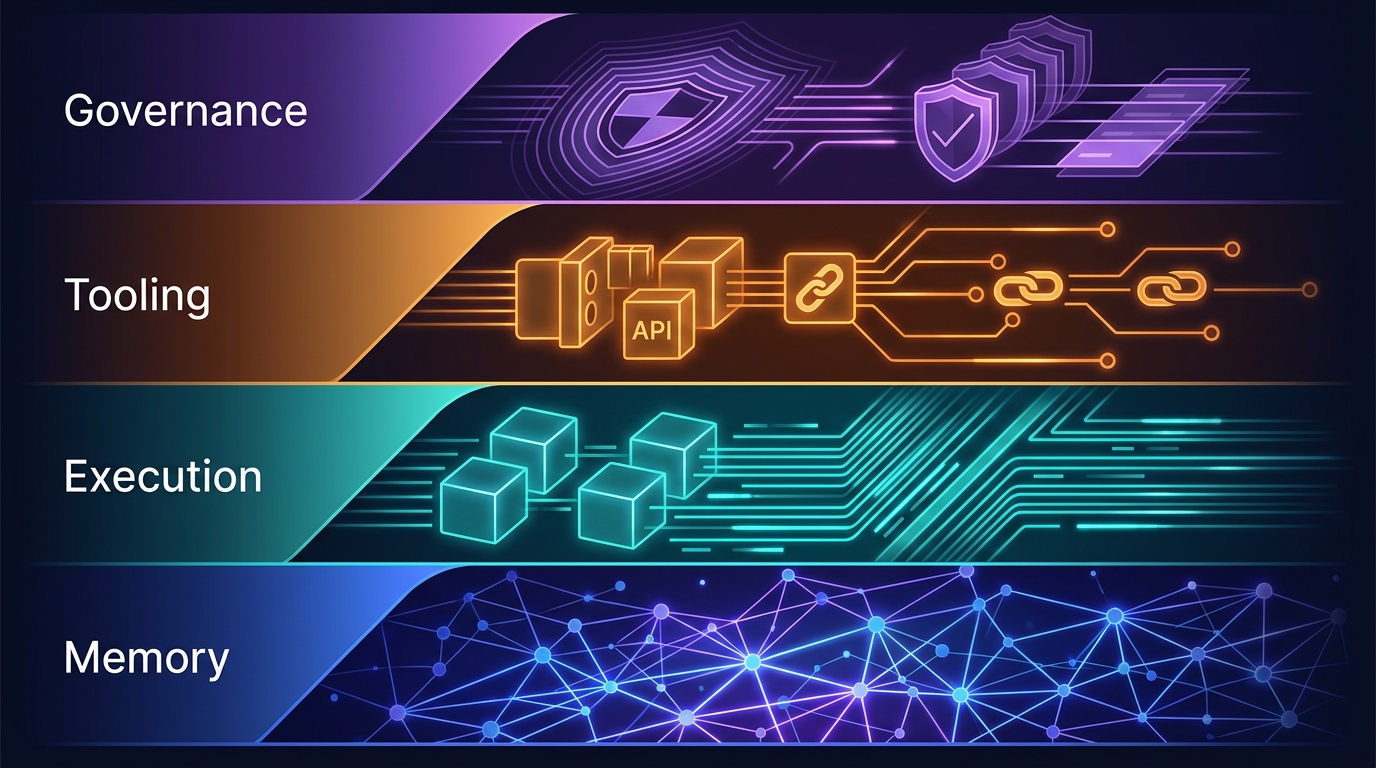

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.