Inference at the Edge: Running LLMs on Consumer GPUs

Small models on laptops and phones went from a demo to a product category in 2025. The infrastructure patterns, runtimes, and deployment tradeoffs for edge LLM inference in 2026.

Inference at the Edge: Running LLMs on Consumer GPUs

For the first two years of the LLM boom, “AI” meant “call an API.” In 2024 small models started running usefully on laptops. In 2025 that became a product category — privacy-first assistants, offline tools, developer-side IDE features, voice agents with local inference. In 2026 it’s approaching mainstream.

This post covers the infrastructure side of edge LLM inference: what runs where, the runtimes that matter, deployment tradeoffs, and the privacy/product patterns emerging.

What “Edge” Actually Means Here

Three distinct tiers:

- High-end consumer desktop — RTX 4090, RTX 5090, Apple M3/M4 Ultra, AMD Ryzen AI Max. Can run 30B–70B quantized models at usable speed.

- Mid-range consumer / pro laptops — M3/M4 Pro/Max, RTX 4070 Ti laptops, high-end handhelds. Runs 7B–13B comfortably.

- Phones and embedded — Apple A18 Pro, Snapdragon 8 Gen 3+, Tensor G5, custom silicon. Runs 1B–4B specially-designed models.

Each tier has its own runtime ecosystem. The product patterns differ wildly.

The Runtime Ecosystem

For Apple Silicon: MLX

Apple’s MLX framework is the default on M-series chips. Released late 2023, matured fast.

- Unified memory model (GPU/CPU share RAM) is huge: a 70B model fits in a 128GB M3 Ultra with room

- Python API feels like PyTorch, C++ via C++ API, Swift via MLX.swift

- Performance is genuinely competitive with CUDA on comparable workloads

- Integrates cleanly with macOS/iOS apps

Strongly recommended for any Apple-first product.

For Windows + NVIDIA: llama.cpp + ExecuTorch + CUDA

llama.cpp (Georgi Gerganov’s project) is the community standard for local inference. Runs on CUDA, Vulkan, Metal, CPU. GGUF quantization format ubiquitous.

For production Windows apps with NVIDIA, CUDA direct via PyTorch or TensorRT. ExecuTorch (PyTorch’s edge runtime) is the newer managed path.

For Cross-Platform: Ollama

Ollama wraps llama.cpp with model management and an API. Best DX for “download and run” scenarios. Macs, Windows, Linux. API is OpenAI-compatible.

Default recommendation for any user who wants to run models locally without building infrastructure themselves.

For Web: WebLLM, Transformers.js

Running models in the browser via WebGPU. Transformers.js (HuggingFace) handles small models; WebLLM (CMU) scales to ~13B on capable machines.

Use cases: browser-side PII redaction, offline tools, privacy-first SaaS features.

For Mobile: Core ML, TensorFlow Lite, ExecuTorch, MediaPipe

- Core ML on iOS — Apple’s native ML runtime. Integrates tightly with OS-level features (Neural Engine use).

- MediaPipe (Google) — Android + iOS, good for small-model streaming inference.

- TensorFlow Lite — older but still widely deployed.

- ExecuTorch — PyTorch’s mobile story; gaining ground.

- mlc-mobile / llama.cpp iOS bindings — for community-supported custom models.

Mobile inference is dominated by 1B–4B specially-designed models: Apple’s foundation models, Google’s Gemini Nano, Llama 3.2 1B/3B, Phi-5, Qwen3-1.5B, DeepSeek Edge, TinyLlama.

What’s Actually Usable On Each Tier

Tier 1 (high-end desktop/workstation)

Models we routinely run with decent performance (5–20 tokens/sec):

- Llama-3-70B Q4 on M3/M4 Ultra 128GB

- Qwen-3-72B Q4 on 4090 (tight fit)

- Mistral Large Q4 on dual 3090

- DeepSeek-V3 Q4 on multi-GPU consumer setup

Quality is genuinely near-frontier for most tasks. Privacy use cases (legal, medical, code generation on private repos) are real.

Tier 2 (mid-range consumer)

- Llama-3.1-8B FP8 or Q4

- Mistral 7B

- Phi-4 (14B, strong reasoning)

- Qwen-3-14B

- Gemma-2-9B

Fast enough for interactive use, useful quality. This is the sweet spot for desktop/laptop apps.

Tier 3 (phones, tablets, small IoT)

- Apple Intelligence foundation models (3B)

- Gemini Nano

- Llama 3.2 1B / 3B

- Qwen 3 1.7B

- Phi-3.5-mini

Quality is task-dependent. Great for summarization, classification, structured generation. Less reliable for open-ended chat.

Deployment Architectures

Pattern 1: Fully local

Everything runs on device. No network calls during inference. Privacy-first.

Example: a local note-taking app that runs a 7B model in the background to generate tags and summaries.

Tradeoffs:

- 100% privacy, works offline

- Constrained by device capability

- Model updates require app updates

- Hardware fragmentation — must test across devices

Pattern 2: Hybrid (local for privacy, cloud for capability)

Local model handles sensitive operations; cloud handles complex ones. Routing happens on-device.

Example: an enterprise assistant runs PII redaction locally, sends redacted queries to a cloud model for reasoning.

Tradeoffs:

- Best privacy/capability tradeoff

- Requires careful design of what goes local vs cloud

- More complex than pure cloud or pure local

Pattern 3: Local-first with cloud fallback

Default to local; fall back to cloud when local fails (doesn’t know the answer, hits latency budget).

Example: IDE code completion — local model on keystroke, cloud model when user requests “explain” or “refactor.”

Tradeoffs:

- Free mode on device feels snappy

- Escapes the “why is this app talking to a server?” privacy complaint

- Needs a network

Pattern 4: Local for latency, cloud for quality

Not for privacy — purely for user experience. Local streams first tokens fast; cloud model provides the final answer.

Example: voice agent that starts responding immediately from local model while cloud model generates a better answer.

Tradeoffs:

- Best perceived latency

- Risk of “local said X, cloud said Y” drift

- Added engineering complexity

The Quality Gap

Edge models are not API models. For reasoning-heavy tasks, a 7B local model is no match for GPT-4o or Claude Sonnet.

What edge models are competitive at:

- Classification (especially narrow domains)

- Summarization (short-to-medium text)

- Extraction (structured fields from unstructured text)

- Translation (well-supported languages)

- Simple chat with short responses

- Tool use with constrained tool sets

What they struggle with:

- Long-context reasoning

- Multi-step agent workflows

- Novel task types not in training

- Creative long-form writing

- Complex code generation

Product design matters. Good edge AI products scope what’s asked of the local model to its capability band.

The Privacy Product Wedge

The single best reason to go edge in 2026 is privacy. Several product categories emerged specifically around local-first AI:

- Local AI assistants (Poe Mac, Msty, private versions of chat apps)

- On-device coding tools for regulated codebases

- Legal and medical AI where cloud processing is not permitted

- Personal knowledge bases that never leave the device (Reflect, Heyday, local-first Obsidian plugins)

- Privacy browsers with built-in AI (Brave, Vivaldi with local model options)

For B2B in regulated industries, “runs on your laptop, never leaves” is a serious product positioning. Worth real investment.

The Operational Side

Edge inference doesn’t have server-side ops — but it has its own ops problems:

1. Model distribution. 4GB to 40GB of weights have to reach user devices. CDN strategy matters. Delta updates help.

2. Version fragmentation. User on version 3.2 has model v1; user on 3.5 has v2. Both need to work.

3. Hardware capability detection. Choose the right model for the device. Fallback to smaller model if user’s GPU is insufficient.

4. User education. First run needs to download the model. Users need expectations set.

5. Telemetry. You can’t see failures server-side. Opt-in telemetry, error reporting, usage metrics all become harder.

6. Quality measurement. Your eval pipeline needs to run on-device, not just server-side.

Tools that help:

- Ollama registry for distribution (open-source model registry)

- Hugging Face’s edge tools for versioning

- PostHog / telemetry.deck for opt-in edge telemetry

- MLflow / W&B for model versioning

Emerging Patterns

What’s new in 2026:

1. Per-user fine-tunes

A user’s local model is tuned on their data. Private, personalized. Emerging in note-taking and coding tools.

2. Continual learning

Local models adapt based on user interactions. Privacy-preserving by design (data never leaves device).

3. Multimodal edge

Local models handle vision + text in the same pass. Especially strong on Apple Silicon (unified memory).

4. Agent-on-device

Small agents running local tools on the device itself. File search, calendar, notifications — without cloud round-trips.

5. Sub-second voice interaction

Local small models enable genuinely real-time voice agents. End-to-end latency under 500ms achievable.

The Short Version

- Consumer hardware can run capable LLMs. In 2026 this is a product-shipping capability, not a research demo.

- Apple Silicon is the friendliest platform; Apple Intelligence set baseline expectations.

- 7B–13B models on laptops, 30B–70B on high-end desktops, 1B–4B on phones.

- Privacy is the dominant product wedge; offline and latency are secondary.

- Hybrid patterns (local + cloud) are the strongest architecture for most real products.

If your product has a privacy dimension and you’re not considering edge inference, you’re missing a capability the market increasingly expects.

Further Reading

- Self-Hosting Llama 3: A Production Deployment Guide

- LoRA, QLoRA, and PEFT: The Fine-Tuning Infrastructure Guide

- The AI Infrastructure Stack: 2026 Edition

Designing an edge-AI product? Talk to us — we’ve shipped local-first AI across desktop and mobile.

Related Posts

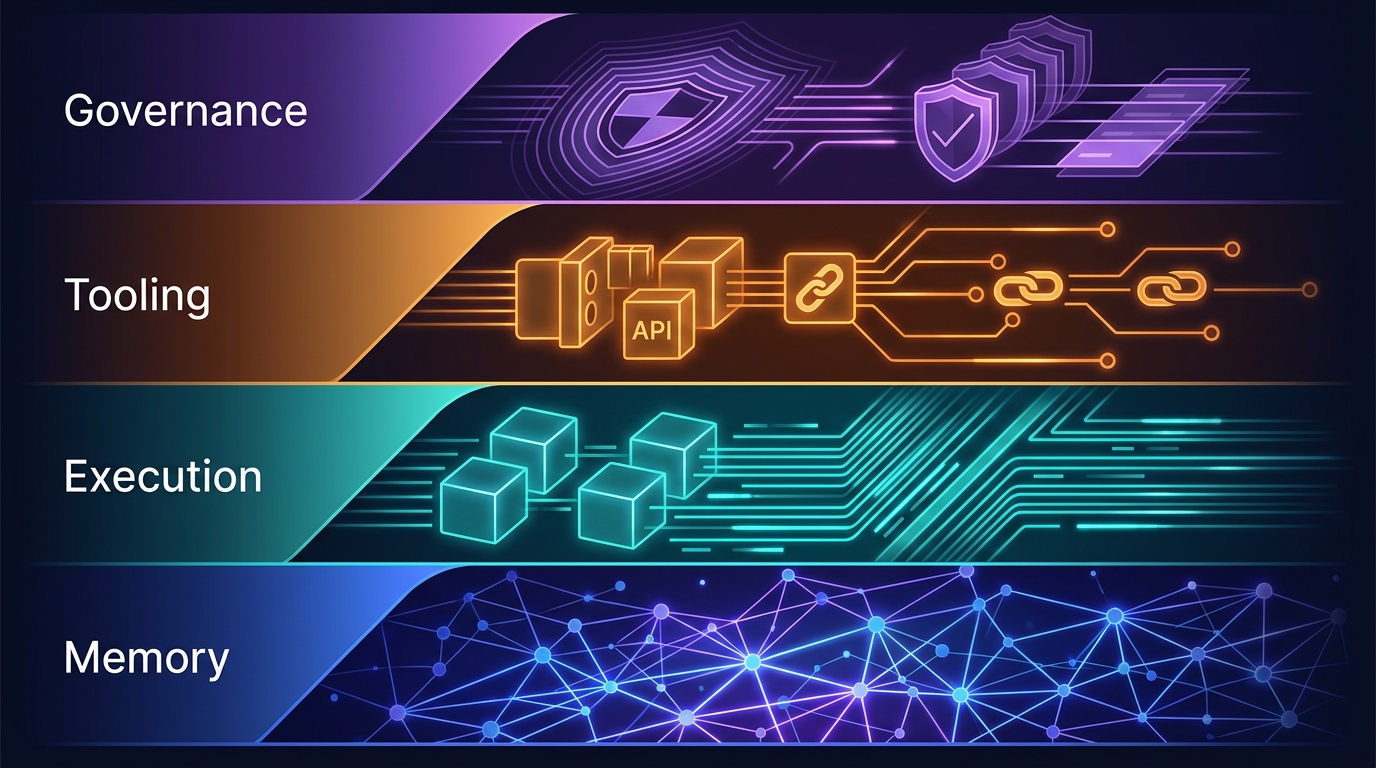

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.

GPU Clouds: RunPod vs Lambda vs CoreWeave — June 2026

RunPod H100 at $2.69/hr. Lambda at $4.29/hr. CoreWeave at $6.16/hr — but requires 8-GPU minimums. Which GPU cloud makes sense for your agent workloads?

vLLM and SGLang Are Converging — and That Changes the Inference Stack

Both engines now share NVIDIA's FlashInfer kernels and expose identical OpenAI-compatible APIs. Meanwhile, SGLang spun out as RadixArk with $100M in seed funding, and vLLM hit 2M weekly installs. The inference layer is consolidating faster than anyone expected — here's what that means for teams building on top of it.