LoRA, QLoRA, and PEFT: The Fine-Tuning Infrastructure Guide

Parameter-efficient fine-tuning makes custom models affordable. A deep dive on LoRA, QLoRA, and DoRA — hardware sizing, training recipes, and the serving side most guides ignore.


Full fine-tuning of a 70B model takes 8 H100s and a week. Parameter-efficient fine-tuning (PEFT) does it on 1-2 H100s in a day, at 95% of the quality. This changed what “custom model” means. In 2025, a team of two engineers can ship a dozen fine-tuned variants per quarter.

This guide covers the infrastructure side of LoRA, QLoRA, and the rest — sizing hardware, training recipes, and the serving architecture that lets you run 50 LoRA adapters on one base model.

Why PEFT Exists

Full fine-tuning updates every parameter in a model. For a 70B model that’s:

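- 140 GB of BF16 weights, plus gradients and two AdamW optimizer moments at the same precision: 140 GB × 4 ≈ 560 GB of memory. Standard mixed-precision training also keeps FP32 master weights and FP32 optimizer state, about 16 bytes per parameter or ~1.1 TB, before activations

- Needs at least 8x H100 (640 GB total), with that state sharded across the GPUs and, for mixed precision, offloaded to CPU or spread over more nodes

- Each training step is slow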
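The 560 GB figure assumes every tensor is stored in 16-bit. The sketch below reproduces that arithmetic and compares it with the common mixed-precision layout: FP32 master weights plus two FP32 AdamW moments, about 16 bytes per parameter. Both per-parameter byte counts are assumptions about a typical setup, not any specific framework's numbers, and activations are ignored.

```python
# Rough memory for full fine-tuning with AdamW, ignoring activations.
# Byte counts per parameter are assumptions for two common setups.
ALL_16BIT = {"weights": 2, "gradients": 2, "adam_m": 2, "adam_v": 2}
MIXED_PRECISION = {
    "bf16_weights": 2, "bf16_gradients": 2,
    "fp32_master_weights": 4, "fp32_adam_m": 4, "fp32_adam_v": 4,
}
H100_GB = 80


def training_memory_gb(n_params: float, layout: dict) -> float:
    """Weights + gradients + optimizer state, in GB (1e9 bytes)."""
    return n_params * sum(layout.values()) / 1e9


n_params = 70e9  # a 70B model
for name, layout in [("all 16-bit", ALL_16BIT), ("mixed precision", MIXED_PRECISION)]:
    gb = training_memory_gb(n_params, layout)
    print(f"{name:16} {gb:7,.0f} GB   (8x H100 = {8 * H100_GB} GB)")
```

The mixed-precision total already exceeds an 8x H100 node before any activations are counted. That is why full fine-tunes at this size lean on sharding plus offload or on multiple nodes.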

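Most tasks don’t need to touch all 70B parameters. PEFT freezes the base model and trains a tiny set of new parameters that “adapt” it. Memory drops 10–20x, because gradients and optimizer state exist only for those new parameters. Compute per step drops less, since every step still runs the forward and backward passes through the full frozen model. The big win is needing 1–2 GPUs instead of a cluster.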

The key insight (Hu et al., 2021): fine-tuning updates are low-rank. The change in weight matrix W can be well-approximated by a product of two small matrices A and B. Instead of updating W, you train A and B.

LoRA (Low-Rank Adaptation)

LoRA adds trainable low-rank matrices alongside frozen weights:

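forward(x) = W_frozen @ x + (alpha / r) * (B @ (A @ x))

Here W is the original weight matrix (d_out × d_in, frozen), A is r × d_in, and B is d_out × r, so B @ A has the same shape as W. r is the “rank”, typically 8, 16, or 64. alpha is a scaling hyperparameter (lora_alpha in most configs). B is initialized to zero, so at step 0 the adapted model is identical to the base model.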
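To make the formula concrete, here is a dependency-free sketch of one LoRA layer in plain Python. It uses lists instead of tensors, so it is for reading, not training. It follows the initialization described by Hu et al.: A random, B zero. The class name LoRALinear and the 0.02 standard deviation are illustrative choices, not a library's defaults.

```python
import random


def matvec(M, x):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


class LoRALinear:
    """forward(x) = W x + (alpha / r) * B (A x); W is frozen, only A and B would train."""

    def __init__(self, W, r, alpha, seed=0):
        d_out, d_in = len(W), len(W[0])
        rng = random.Random(seed)
        self.W = W  # frozen, d_out x d_in
        self.A = [[rng.gauss(0.0, 0.02) for _ in range(d_in)] for _ in range(r)]  # r x d_in, random
        self.B = [[0.0] * r for _ in range(d_out)]  # d_out x r, zeros
        self.scale = alpha / r

    def forward(self, x):
        base = matvec(self.W, x)
        delta = matvec(self.B, matvec(self.A, x))  # B (A x): never builds the full B @ A
        return [b + self.scale * d for b, d in zip(base, delta)]


W = [[1.0, 0.0, 2.0],
     [0.0, 1.0, -1.0]]
layer = LoRALinear(W, r=2, alpha=4)
x = [1.0, 2.0, 3.0]
print(layer.forward(x))  # [7.0, -1.0]: identical to W @ x because B starts at zero
```

Computing B (A x) instead of (B @ A) x keeps the extra work at r × (d_in + d_out) multiply-adds per input vector, rather than d_in × d_out.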

For r=16 on one 8192×8192 attention projection in Llama-3-70B (q_proj or o_proj), you train 2×8192×16 = 262K parameters instead of 8192×8192 = 67M. That’s a 256x reduction in trainable parameters for that matrix.
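The same arithmetic extends to a whole model. The small calculator below assumes Llama-3-70B's published shapes: hidden size 8192, MLP size 28672, 80 layers, and 8 key/value heads of dimension 128. It also assumes an adapter on all seven linear projections in every layer.

```python
def lora_params(d_in: int, d_out: int, r: int) -> int:
    """Trainable parameters for one adapted matrix: A is r x d_in, B is d_out x r."""
    return r * d_in + d_out * r


# One 8192 x 8192 projection at rank 16
full = 8192 * 8192
lora = lora_params(8192, 8192, r=16)
print(f"{full:,} -> {lora:,} trainable ({full // lora}x fewer)")

# Whole-model adapter, assuming Llama-3-70B's published shapes:
# hidden 8192, MLP 28672, 80 layers, 8 KV heads x head dim 128 = 1024 for k/v.
HIDDEN, MLP, LAYERS, KV = 8192, 28672, 80, 8 * 128
PROJECTIONS = {  # name: (d_in, d_out)
    "q_proj": (HIDDEN, HIDDEN), "k_proj": (HIDDEN, KV), "v_proj": (HIDDEN, KV),
    "o_proj": (HIDDEN, HIDDEN), "gate_proj": (HIDDEN, MLP), "up_proj": (HIDDEN, MLP),
    "down_proj": (MLP, HIDDEN),
}
per_layer = sum(lora_params(d_in, d_out, 16) for d_in, d_out in PROJECTIONS.values())
total = per_layer * LAYERS
print(f"all linear, r=16: {total / 1e6:.0f}M params, {total * 2 / 1e6:.0f} MB in FP16")
```

Under these assumptions the full adapter is roughly 207M parameters, or about 0.4 GB in FP16. An attention-only adapter (q/k/v/o) is about a third of that.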

Quality: Typically 90–99% of full fine-tuning on task-specific benchmarks. Occasionally a few points lower, rarely a dealbreaker.

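Training memory (Llama-3-70B, rank 16): ~160 GB at FP16. Almost all of it is the 140 GB of frozen weights, so it needs at least 2x H100 80GB. The adapter, its gradients, and its optimizer state add only a few GB.

Training time: ~4–8 hours on a 50k-example task once the model is sharded across those GPUs.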

QLoRA: LoRA + 4-bit Quantization

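QLoRA adds a clever twist: quantize the frozen base weights to 4-bit NF4, a data type designed for normally distributed weights. The base weights are never updated. They are dequantized on the fly for the forward and backward passes, and gradients flow only into the 16-bit adapters. So quantizing them has minimal impact on gradient quality.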
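The sketch below shows the storage idea behind 4-bit base weights. It splits a weight tensor into blocks of 64 (the block size the QLoRA paper uses), stores one scale per block, and rounds each weight to a 4-bit code. It substitutes plain symmetric integer levels for NF4's normal-distribution codebook and skips the double quantization of the scales. So it illustrates blockwise quantization and dequantize-on-read, not the exact NF4 format.

```python
import random

BLOCK = 64  # QLoRA quantizes in blocks of 64 weights


def quantize_4bit(weights, block=BLOCK):
    """Blockwise absmax quantization to symmetric 4-bit integer codes in [-7, 7].

    Codes are kept as Python ints here; a real kernel packs two codes per byte.
    """
    codes, scales = [], []
    for start in range(0, len(weights), block):
        chunk = weights[start:start + block]
        absmax = max(abs(w) for w in chunk) or 1.0  # guard against an all-zero block
        scales.append(absmax)
        codes.extend(round(w / absmax * 7) for w in chunk)
    return codes, scales


def dequantize_4bit(codes, scales, block=BLOCK):
    """Rebuild approximate weights; QLoRA does the equivalent on every read."""
    return [c / 7 * scales[i // block] for i, c in enumerate(codes)]


rng = random.Random(0)
w = [rng.gauss(0.0, 0.02) for _ in range(256)]  # stand-in for one weight row
codes, scales = quantize_4bit(w)
w_hat = dequantize_4bit(codes, scales)
max_err = max(abs(a - b) for a, b in zip(w, w_hat))
print(f"{len(codes)} codes, {len(scales)} scales, max abs error {max_err:.5f}")
```

Storage per weight drops from 16 bits to 4 bits plus a small per-block scale. The rebuild step in dequantize_4bit is where QLoRA's extra training time goes.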

Memory reduction:

- Llama-3-70B in NF4: ~35 GB

- LoRA adapters in FP16: under 1 GB (about 0.4 GB at rank 16 on all linear projections)

- Optimizer state + gradients: ~3 GB

- Activations: ~5 GB

- Total: ~44 GB, which fits on a single H100 80GB (a single A100 40GB is too small without offloading)

QLoRA made 70B fine-tuning possible on a single GPU. That GPU still has to be a datacenter-class card: 24 GB consumer GPUs can’t hold 35 GB of 4-bit weights.

Quality: Typically 95–98% of 16-bit LoRA. The 4-bit quantization costs a little quality, because gradients are computed through dequantized, slightly lossy weights.

Tradeoff: Training is slightly slower than LoRA because weights are dequantized every time they are read. It’s usually worth it for the memory savings.

DoRA (Weight-Decomposed LoRA)

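DoRA (Liu et al., 2024) decomposes each pretrained weight matrix into a magnitude and a direction. It applies a LoRA update to the direction and trains the magnitude separately. It adds slightly more parameters than LoRA for slightly better quality, and it’s an emerging standard for new fine-tunes.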
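The plain-Python sketch below follows the DoRA formula W' = m · (W0 + BA) / ‖W0 + BA‖. The LoRA update changes the direction, and a separate trainable vector m sets each row's length. Assumptions: norms are taken per output row (implementations differ on which axis they normalize), the function names are hypothetical, and gradients are out of scope.

```python
import math


def row_norms(M):
    """L2 norm of each row (one per output feature in this sketch)."""
    return [math.sqrt(sum(v * v for v in row)) for row in M]


def dora_weight(W0, A, B, m, scale=1.0):
    """W' = m * V / ||V|| with V = W0 + scale * (B @ A); norms taken per output row."""
    r = len(A)
    V = [
        [W0[i][j] + scale * sum(B[i][k] * A[k][j] for k in range(r)) for j in range(len(W0[0]))]
        for i in range(len(W0))
    ]
    norms = row_norms(V)
    # Guard against an all-zero row; real weights essentially never have one.
    return [[m[i] * v / (norms[i] or 1.0) for v in row] for i, row in enumerate(V)]


W0 = [[3.0, 4.0], [0.0, 2.0]]   # frozen pretrained weight, d_out x d_in
A = [[0.1, 0.2]]                # r x d_in (rank 1 here)
B = [[0.0], [0.0]]              # d_out x r, zero at init as in LoRA
m = row_norms(W0)               # trainable magnitude, initialized to the norms of W0
print(dora_weight(W0, A, B, m))          # [[3.0, 4.0], [0.0, 2.0]]: equals W0 at init

m_trained = [10.0, 2.0]         # pretend training doubled row 0's magnitude
print(dora_weight(W0, A, B, m_trained))  # [[6.0, 8.0], [0.0, 2.0]]: same direction, new length
```

The extra trainable parameters relative to plain LoRA are just the entries of m, one per normalized row in this sketch.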

In our experiments, DoRA gives 0.5–1.5% better eval scores than LoRA on the same tasks, for ~15% more trainable parameters. Worth using for non-trivial fine-tunes.

Other PEFT Methods

The LoRA family is dominant, but other PEFT methods exist:

- Prefix Tuning / Prompt Tuning — prepend learnable soft prompts. Minimal parameters. Less expressive than LoRA; largely superseded.

- IA³ — even more parameter-efficient than LoRA; learns scaling vectors. Niche; occasionally useful.

- Adapters (bottleneck) — original adapter method; insert small bottleneck layers. More parameters, less used now.

- (QA)LoRA variants — LoRA combined with other quantizations (GPTQ, AWQ). Serving-focused.

For 95% of cases, use LoRA or QLoRA. Start with LoRA if you have memory headroom; QLoRA if not.

Hardware Sizing Guide

Llama-3-8B

- LoRA FP16: ~20 GB (16 GB of weights plus adapter state and activations), fits on 24 GB cards such as A10G / RTX 4090 / L4

- QLoRA: ~8 GB, fits on a T4 / consumer GPU

- Training time: ~1–2 hours per 10k examples

Llama-3-70B

- LoRA FP16: ~160 GB, needs at least 2x A100 80GB / 2x H100, sharded with FSDP or tensor parallelism

- QLoRA NF4: ~44 GB, fits on single H100 80GB or 2x A100 40GB

- Training time: ~6–12 hours per 10k examples

Llama-3.1-405B

- LoRA FP16: ~900 GB, multi-node only

- QLoRA NF4: ~250 GB, fits on 4x H100 with TP

- Training time: ~24+ hours per 10k examples
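The frozen-weight footprints behind these numbers are easy to reproduce. The sketch below computes base-weight memory only, using nominal parameter counts and assuming 2 bytes per parameter at FP16 and 0.5 bytes at NF4. It ignores NF4's small per-block scales.

```python
BYTES_PER_PARAM = {"fp16": 2.0, "nf4": 0.5}  # NF4 per-block scales ignored
MODELS = {"Llama-3-8B": 8e9, "Llama-3-70B": 70e9, "Llama-3.1-405B": 405e9}  # nominal sizes


def weight_gb(n_params: float, fmt: str) -> float:
    """Memory for the frozen base weights alone, in GB (1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[fmt] / 1e9


for name, n in MODELS.items():
    print(f"{name:15} FP16 {weight_gb(n, 'fp16'):6.1f} GB   NF4 {weight_gb(n, 'nf4'):6.1f} GB")
```

The gap between these figures and the totals in the guide is working memory: adapters, optimizer state, activations, and framework overhead.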

Practical: QLoRA for 70B+ unless you have tight quality requirements. LoRA for 8B and smaller where memory isn’t the constraint.

Training Infrastructure

The software stack

- Hugging Face PEFT — reference implementation of LoRA, QLoRA, DoRA, and all the siblings

- TRL — Hugging Face’s fine-tuning library (SFT, DPO, GRPO trainers)

- Axolotl — YAML-driven wrapper for TRL / PEFT; simplest path for most fine-tunes

- Unsloth — heavily optimized; 2–5x faster than default Hugging Face training, lower memory. Recommended for single-GPU fine-tunes.

- TorchTune (Meta) — native PyTorch reference implementation

Our default pick in 2025: Unsloth for single-GPU fine-tunes, Axolotl for multi-GPU or more complex recipes.

A minimal QLoRA recipe (Axolotl YAML)

```yaml
base_model: meta-llama/Llama-3.1-8B
load_in_4bit: true   # quantize the frozen base to 4-bit
adapter: qlora       # Axolotl's adapter type for a 4-bit base

lora_r: 16
lora_alpha: 32
lora_dropout: 0.05
lora_target_modules: ["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"]

datasets:
  - path: my_dataset.jsonl
    type: sharegpt

sequence_len: 4096
sample_packing: true

learning_rate: 0.0002  # 2e-4; PyYAML parses "2e-4" (no decimal point) as a string
num_epochs: 3
warmup_steps: 100
gradient_accumulation_steps: 4
micro_batch_size: 2

output_dir: ./out/
```

Run with accelerate launch -m axolotl.cli.train config.yml. Adapter weights land in output_dir; base model is untouched.

Multi-GPU training

For larger models, shard training across GPUs with PyTorch FSDP (which splits parameters, gradients, and optimizer state) or DeepSpeed ZeRO. Hugging Face Accelerate launches either, and Axolotl wraps all of these.

For 70B QLoRA on 2x H100, FSDP + QLoRA is the typical recipe. ZeRO-3 works too but has more communication overhead.

The Serving Side: Multi-LoRA Serving

Here’s where PEFT gets really interesting. Because the base model is frozen, you can load many LoRA adapters over a single copy of the base weights and serve them all from one deployment: one GPU for an 8B base, or one tensor-parallel group for 70B.

vLLM multi-LoRA:

```shell
vllm serve meta-llama/Llama-3.1-70B-Instruct \
  --tensor-parallel-size 4 \
  --enable-lora \
  --max-loras 20 \
  --max-lora-rank 64 \
  --lora-modules cust_a=./lora_a cust_b=./lora_b cust_c=./lora_c
```

At request time, you specify which adapter:

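```python
from openai import OpenAI

# vLLM exposes an OpenAI-compatible API; port 8000 is its default
client = OpenAI(base_url="http://localhost:8000/v1", api_key="EMPTY")

response = client.chat.completions.create(
    model="cust_a",  # adapter name from --lora-modules, not the base model
    messages=[{"role": "user", "content": "Hello"}],
)
print(response.choices[0].message.content)
```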

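vLLM applies each request’s adapter at serve time and can batch requests for different adapters into the same forward pass, so the per-request overhead is small. --max-loras caps how many distinct adapters can be active in one batch, and --max-cpu-loras sets the size of the CPU-side adapter cache. You can serve 20+ LoRAs on the same GPU fleet.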

This is the enabler for per-customer fine-tunes in multi-tenant SaaS. Each customer gets their own tuned variant; all share the expensive base weights; marginal compute cost per adapter is close to zero.

TensorRT-LLM and TGI also support multi-LoRA serving with varying maturity.

Adapter Management

With many adapters, operational concerns emerge:

- Storage: adapters are small (about 400 MB in FP16 for Llama-3-70B at rank 16 on all linear projections, roughly 130 MB if you only adapt attention), but at 50+ adapters you need a registry.

- Loading: dynamic loading at request time vs pre-loading all at startup. vLLM supports both.

- Versioning: customer-A adapter v1, v2, v3 — keep version history, allow rollback.

- Eviction: at max-loras cap, LRU eviction. Busy adapters stay hot; rare adapters get swapped.
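vLLM manages its own adapter cache. If you build a routing or registry layer in front of several servers, though, the same policy applies. The sketch below is a minimal LRU adapter cache keyed by (name, version), so a rollback is just a request for an older version. AdapterCache and fake_load are hypothetical names, not a vLLM API.

```python
from collections import OrderedDict


class AdapterCache:
    """Hypothetical LRU cache of loaded LoRA adapters, keyed by (name, version)."""

    def __init__(self, capacity, load_fn):
        self.capacity = capacity
        self.load_fn = load_fn  # e.g. fetches adapter weights from your registry
        self._cache = OrderedDict()

    def get(self, name, version):
        key = (name, version)
        if key in self._cache:
            self._cache.move_to_end(key)  # hit: mark as most recently used
            return self._cache[key]
        if len(self._cache) >= self.capacity:
            self._cache.popitem(last=False)  # full: evict the least recently used
        adapter = self.load_fn(name, version)
        self._cache[key] = adapter
        return adapter

    def loaded(self):
        """Keys currently resident, least to most recently used."""
        return list(self._cache)


loads = []


def fake_load(name, version):
    """Stand-in loader that records calls instead of reading weights."""
    loads.append((name, version))
    return f"{name}@v{version}"


cache = AdapterCache(capacity=2, load_fn=fake_load)
cache.get("cust_a", 1)
cache.get("cust_b", 1)
cache.get("cust_a", 1)  # hit: cust_a becomes most recently used
cache.get("cust_c", 1)  # miss at capacity: evicts cust_b
print(cache.loaded())   # [('cust_a', 1), ('cust_c', 1)]
```

Keying on version as well as name means v1 and v2 of the same customer's adapter can coexist during a rollout.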

Some hosted services (Fireworks.ai, Together.ai) offer managed multi-LoRA serving. For high-adapter-count workloads, they’re worth evaluating against self-hosting.

When PEFT Isn’t The Right Tool

A few cases where full fine-tuning wins:

1. Major distribution shift. If you’re adapting a general-purpose model to a radically different domain (e.g., chemistry notation from a text model), low-rank updates may not be enough.

2. Continual pretraining. Adding new knowledge (not just new behaviors) often needs more than LoRA. Continued pretraining followed by PEFT for task alignment is common.

3. When you need to ship one model for one purpose. Full fine-tuning produces a single, self-contained model. PEFT produces base + adapter, which is two things to ship. You can merge the adapter into the base weights, but then you give up multi-adapter serving.

4. Very small model + lots of data. Full fine-tuning a 1B model might be faster and simpler than LoRA + inference overhead.

For 80% of “make this model better at my thing” cases, LoRA or QLoRA is right.

Common Pitfalls

1. Overfit on small data. LoRA is surprisingly expressive. With a small dataset (<1k examples), you can overfit in an epoch. Watch eval loss; use early stopping.

2. Wrong target modules. LoRA on only q/v projections (the original paper’s setup) often underperforms LoRA on all attention + FFN projections. Default to “all linear” unless you have a reason not to.

3. Rank too low. r=8 is a common default (Hugging Face PEFT’s, for one) but sometimes underfits. r=16 or 32 is safer for non-trivial tasks.

4. Learning rate mismatch. LoRA learning rates are typically higher than full fine-tuning (2e-4 vs 2e-5). Use defaults from known-good recipes (Axolotl, Unsloth configs) as a starting point.

5. Chat template mismatch. Fine-tune with one chat template, serve with another, and the model gets confused. Pin the template at both ends.

6. Ignoring eval. Don’t ship a fine-tune because training loss looked good. Eval on held-out examples, not training data. See Model Evals in Production.
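For pitfall 1, early stopping takes only a few lines of logic. The sketch below is framework-agnostic; the class name and defaults are illustrative. If you train through Hugging Face's Trainer, it ships a comparable EarlyStoppingCallback.

```python
class EarlyStopping:
    """Signal a stop when eval loss hasn't improved by min_delta for `patience` evals."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_evals = 0

    def should_stop(self, eval_loss):
        if eval_loss < self.best - self.min_delta:
            self.best = eval_loss  # improvement: reset the counter
            self.bad_evals = 0
        else:
            self.bad_evals += 1
        return self.bad_evals >= self.patience


stopper = EarlyStopping(patience=2)
stopped_at = None
for step, loss in enumerate([1.2, 0.9, 0.85, 0.86, 0.88, 0.90]):  # held-out eval losses
    if stopper.should_stop(loss):
        stopped_at = step
        break
print(f"stopped at eval {stopped_at}, best eval loss {stopper.best}")
```

Pair this with saving a checkpoint at each new best, so the adapter you ship is the one from the best eval, not the last step.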

The Short Version

- LoRA for 8B or when memory allows

- QLoRA for 70B+ on budget hardware

- Axolotl or Unsloth for your training stack

- vLLM multi-LoRA for per-customer fine-tunes at serving time

- Always eval on held-out data

- Typical fine-tune: 1 H100 × 6 hours × $15/hr ≈ $90 to produce a tuned variant

PEFT made “custom model” a reasonable product decision. It’s one of the highest-leverage skills an AI team can develop in 2025.

Further Reading

- Self-Hosting Llama 3: A Production Deployment Guide

- FP8 and Quantization: Serving LLMs at Half the Cost

- Model Evals in Production: Regression Testing

Planning a fine-tune or evaluating multi-LoRA serving? Reach out — we’ve shipped LoRA fleets from 3 to 300 adapters.

Related Posts

NVIDIA H100 vs A100: Which GPU Should You Deploy?

A practical comparison of NVIDIA's H100 and A100 for LLM training and inference — memory, FLOPS, interconnect, price per token, and the cases where the older A100 still wins.

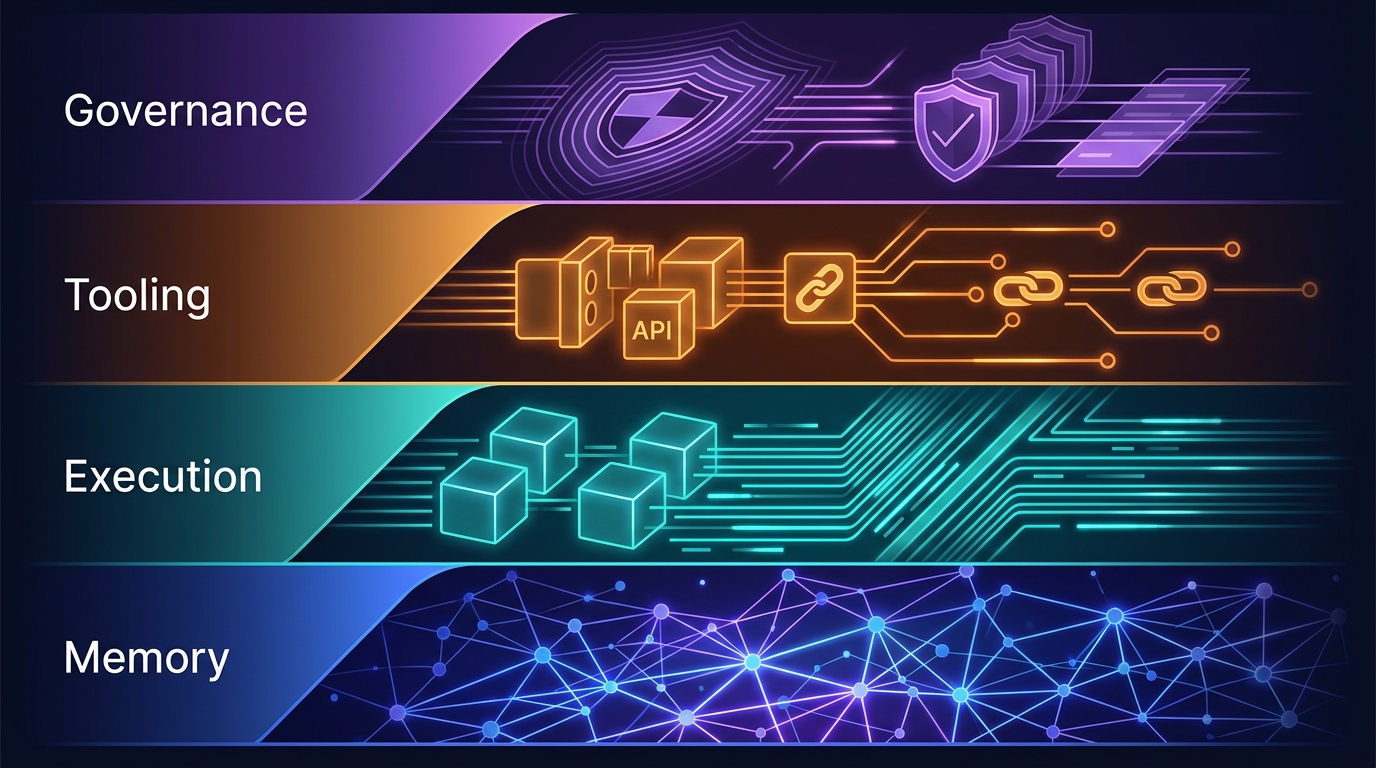

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.

GPU Clouds: RunPod vs Lambda vs CoreWeave — June 2026

RunPod H100 at $2.69/hr. Lambda at $4.29/hr. CoreWeave at $6.16/hr — but requires 8-GPU minimums. Which GPU cloud makes sense for your agent workloads?