GPU FinOps: Reducing Your $10M AI Compute Bill

When GPU spend crosses $500k/month, informal cost discipline stops working. A FinOps playbook for large AI compute bills — attribution, commitments, workload placement, and the structural changes that matter.


An AI company’s GPU bill follows a predictable curve. First year: invisible. Second year: noticeable. Third year: dominant. At $10M/year of GPU spend, compute becomes the single largest line item after payroll — and the organization has to treat it with the same seriousness as headcount budgeting.

This post is the FinOps playbook for large GPU bills: not the “tag your workloads” basics, but the structural moves that shift millions of dollars.

The Levers, Ranked By Impact

From our work with shops at the $5M–$50M/year compute scale, the levers in rough order of dollar impact:

- Commitment structure — on-demand vs reserved vs bare-metal

- Workload right-sizing — matching workload to GPU generation

- Utilization — getting above 70% sustained

- Provider mix — neoclouds vs hyperscalers

- Model right-sizing — not using GPT-4o for classification

- Disaggregated serving — see our dedicated post

- Quantization — FP8 is essentially free on H100

- Caching — prompt caching, semantic caching

- Model distillation — custom smaller models for your workload

- Geographic optimization — region selection

We’ll focus on the top five. They represent 70–80% of the achievable savings.

Lever 1: Commitment Structure

On-demand GPU pricing is a tax you pay for flexibility. If your workload is sustained, commit.

| Commitment | Discount vs on-demand |
|---|---|
| Spot / preemptible | 30–60% |
| 1-year reserved | 30–50% |
| 3-year reserved | 50–65% |
| Dedicated / bare-metal multi-year | 50–70% |
| Co-location + owned hardware | 60–75% (incl. capex amortization) |

At $10M/year of spend, moving from on-demand to 1-year reserved saves $3M–$5M. A 3-year reservation saves more, but it locks you into today's hardware for three years even as new GPU generations ship.

The right blend:

- Baseline (predictable, 24/7): 60–70% on reserved or bare-metal

- Peak (sustained but bursty): 20–30% on shorter reserved

- Burst (spiky, unpredictable): 10–20% on on-demand

- Speculative (research, experiments): spot / preemptible
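A quick way to sanity-check a blend is to price it against the same usage run fully on-demand. This is a minimal sketch: the baseline tier is assumed to earn 45% off (inside the 1-year reserved and bare-metal ranges in the table), “shorter reserved” is assumed to earn 30% off, and speculative spot work is left out for simplicity. The `Tier` type and `blended_annual_cost` function are illustrative names, not part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    share: float     # fraction of total GPU-hours placed in this tier
    discount: float  # discount vs on-demand, e.g. 0.40 means 40% off


def blended_annual_cost(on_demand_annual: float, tiers: list[Tier]) -> float:
    """Annual cost of a commitment blend, given what the same usage costs fully on-demand."""
    total_share = sum(t.share for t in tiers)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError(f"tier shares must sum to 1.0, got {total_share}")
    return sum(on_demand_annual * t.share * (1 - t.discount) for t in tiers)


# Illustrative blend: shares follow the list above, discounts are assumptions.
blend = [
    Tier("baseline: 1-year reserved / bare metal", share=0.65, discount=0.45),
    Tier("peak: shorter reserved", share=0.25, discount=0.30),
    Tier("burst: on-demand", share=0.10, discount=0.0),
]

on_demand = 10_000_000
cost = blended_annual_cost(on_demand, blend)
print(f"blended: ${cost:,.0f}/year, savings: ${on_demand - cost:,.0f}/year")
```

Under these assumptions a $10M on-demand footprint drops to roughly $6.3M a year, which sits inside the $3M–$5M savings range quoted above.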

This is hard to execute without baseline visibility into what each workload actually needs, which leads to lever 2.

Lever 2: Workload Right-Sizing

“All our inference is on H100” is a red flag. Different workloads want different hardware.

Typical workload placement we recommend:

| Workload | GPU | Why |
|---|---|---|
| 70B+ inference | B200 / MI300X | Memory, FP4/FP8 efficiency |
| 7–13B inference at high QPS | H100 / L40S | Right-sized for throughput |
| 7–13B inference at low QPS | L4 / A10 | No need to pay for H100 |
| Batch embedding | A100 / L40S | Cheap bandwidth |
| Training foundation models | B200 / H100 w/ InfiniBand | Network matters |
| Fine-tuning | H100 (or MI300X for memory) | Balanced FLOPS / memory |
| Dev / experimentation | A100 / L40S / spot | Cost matters, perf doesn’t |

Moving classification workloads off H100 and onto L4 easily cuts that workload’s cost 4–5x. Across a $10M bill, right-sizing typically delivers 15–25% total savings.
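The placement table can be turned into a crude audit over a workload inventory. In this sketch the inventory format, the `Workload` fields, and the 50 QPS cut-off between “high” and “low” QPS are all hypothetical, and models between 13B and 70B are lumped in with the 7–13B rows because the table does not cover them.

```python
from dataclasses import dataclass

# Hypothetical cut-off between "high QPS" and "low QPS"; tune for your fleet.
HIGH_QPS_THRESHOLD = 50.0


@dataclass(frozen=True)
class Workload:
    name: str
    kind: str        # "inference", "embedding", "training", "finetune", or "dev"
    params_b: float  # model size in billions of parameters
    qps: float       # sustained queries per second
    gpu: str         # GPU type the workload currently runs on


def recommended_gpus(w: Workload) -> set[str]:
    """GPU types the placement table suggests for this workload."""
    if w.kind == "inference":
        if w.params_b >= 70:
            return {"B200", "MI300X"}
        # Simplification: everything under 70B is treated like the 7-13B rows.
        return {"H100", "L40S"} if w.qps >= HIGH_QPS_THRESHOLD else {"L4", "A10"}
    table = {
        "embedding": {"A100", "L40S"},
        "training": {"B200", "H100"},
        "finetune": {"H100", "MI300X"},
        "dev": {"A100", "L40S"},  # ideally on spot capacity as well
    }
    if w.kind not in table:
        raise ValueError(f"unknown workload kind: {w.kind}")
    return table[w.kind]


def audit(workloads: list[Workload]) -> list[str]:
    """One finding per workload running outside its suggested GPU set."""
    findings = []
    for w in workloads:
        suggested = recommended_gpus(w)
        if w.gpu not in suggested:
            findings.append(f"{w.name}: on {w.gpu}, table suggests {sorted(suggested)}")
    return findings


fleet = [
    Workload("ticket-classifier", "inference", params_b=8, qps=5, gpu="H100"),
    Workload("chat-70b", "inference", params_b=70, qps=200, gpu="B200"),
]
for finding in audit(fleet):
    print(finding)
```

Only the low-traffic 8B classifier on H100 is flagged; the point is to produce a ranked list of candidates to review, not to move workloads automatically.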

Lever 3: Utilization

An H100 sitting at 35% utilization costs the same as one at 90%. The dirty secret of many AI fleets is sub-50% sustained utilization on expensive hardware.

What drives low utilization:

- Over-provisioned for peak. You spun up for Black Friday traffic; it’s now February.

- Batching inefficiency. Many models, few requests per model.

- Dev / experimentation on production hardware.

- Bad autoscaling. Scale-up is fast; scale-down is cautious.

- Idle workloads. Notebooks left running, forgotten replicas, dev environments.

Structural fixes:

- Multi-tenancy within a replica. Multi-LoRA serving to put many customers on one base model (see LoRA, QLoRA, and PEFT).

- Aggressive idle termination. Notebooks auto-shut after N hours. Replicas with no traffic drop to zero.

- Workload consolidation. Fewer, fuller replicas vs. many sparse ones.

- Right-sized autoscale targets. Target 70–80% average utilization, not 30%.
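The autoscaling and scale-to-zero fixes reduce to one formula: provision enough replicas to serve current load at the target utilization, and fall to the floor (zero by default) when there is no traffic. A minimal sketch, assuming per-replica throughput is known and roughly constant; `desired_replicas` and its default bounds are hypothetical, and a production autoscaler would also smooth the load signal and rate-limit scale-down.

```python
import math


def desired_replicas(load_qps: float,
                     replica_capacity_qps: float,
                     target_utilization: float = 0.75,
                     min_replicas: int = 0,
                     max_replicas: int = 64) -> int:
    """Replica count that keeps average utilization near the target.

    min_replicas=0 lets an idle deployment scale to zero.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if replica_capacity_qps <= 0:
        raise ValueError("replica_capacity_qps must be positive")
    if load_qps <= 0:
        return min_replicas  # no traffic: drop to the floor
    needed = math.ceil(load_qps / (replica_capacity_qps * target_utilization))
    return max(min_replicas, min(max_replicas, needed))


print(desired_replicas(900, 100, target_utilization=0.75))  # 12
print(desired_replicas(900, 100, target_utilization=0.30))  # 30
print(desired_replicas(0, 100))                              # 0
```

The same 900 QPS needs 30 replicas at a 30% target but only 12 at 75%, which is the gap between the two targets expressed in GPUs.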

Because the same work fits on proportionally fewer GPUs at higher utilization, each 10-point improvement on a large fleet is a meaningful dollar amount. Going from 40% to 70% on a $10M fleet is effectively $4M in savings.
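Worked through in code, under the assumption that cost is linear in GPU count and the useful work (cost × utilization) stays fixed; `cost_for_same_work` is an illustrative name:

```python
def cost_for_same_work(current_cost: float, current_util: float, new_util: float) -> float:
    """Fleet cost needed to do the same useful work at a different average utilization.

    Assumes cost scales linearly with GPU count and that useful work
    (cost x utilization) is held constant.
    """
    for u in (current_util, new_util):
        if not 0 < u <= 1:
            raise ValueError("utilization must be in (0, 1]")
    return current_cost * current_util / new_util


before = 10_000_000
after = cost_for_same_work(before, current_util=0.40, new_util=0.70)
print(f"${after:,.0f} for the same work, ${before - after:,.0f} saved")
```

The result is about $5.7M for the same work, a saving of roughly $4.3M, which rounds to the figure above.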

Lever 4: Provider Mix

Hyperscalers charge a premium for integrated services. Neoclouds undercut on GPU specifically. See Multi-Cloud GPU Strategy.

Typical discount (neocloud vs hyperscaler on-demand):

- 25–45% lower on H100

- 20–35% lower on B200

- 30–40% lower on MI300X (where available)

At scale, the delta is worth real engineering investment to run multi-cloud.

Pattern:

- Bulk reserved on neocloud (CoreWeave, Lambda, Crusoe): biggest cost line

- Hyperscaler for compliance-sensitive workloads: AWS / Azure / GCP

- Burst on the cheapest on-demand available

For a $10M/year bill, shifting from pure hyperscaler to a mixed deployment with neocloud reserved as the baseline typically saves $2M–$3M.
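The placement pattern above can be expressed as a small selection rule: sustained work goes to reserved capacity, bursty work to on-demand, compliance-sensitive work is restricted to hyperscalers, and everything else takes the cheapest offer with enough capacity. This is a sketch only; the `Offer` structure, provider names, and prices are placeholders, not real quotes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Offer:
    provider: str
    provider_kind: str  # "hyperscaler" or "neocloud"
    gpu: str
    pricing: str        # "reserved" or "on_demand"
    usd_per_gpu_hour: float
    available_gpus: int


def place(gpu: str, gpus_needed: int, sustained: bool,
          compliance_sensitive: bool, offers: list[Offer]) -> Offer:
    """Cheapest offer that fits, following the baseline / compliance / burst pattern."""
    pricing = "reserved" if sustained else "on_demand"
    candidates = [
        o for o in offers
        if o.gpu == gpu and o.pricing == pricing and o.available_gpus >= gpus_needed
    ]
    if compliance_sensitive:
        candidates = [o for o in candidates if o.provider_kind == "hyperscaler"]
    if not candidates:
        raise LookupError(f"no {pricing} offer for {gpus_needed}x {gpu}")
    return min(candidates, key=lambda o: o.usd_per_gpu_hour)


# Placeholder offers and prices, not real quotes.
offers = [
    Offer("hyperscaler-a", "hyperscaler", "H100", "reserved", 4.00, 512),
    Offer("neocloud-b", "neocloud", "H100", "reserved", 2.60, 1024),
    Offer("hyperscaler-a", "hyperscaler", "H100", "on_demand", 6.50, 64),
    Offer("neocloud-c", "neocloud", "H100", "on_demand", 3.90, 32),
]

print(place("H100", 256, sustained=True, compliance_sensitive=False, offers=offers).provider)  # neocloud-b
print(place("H100", 256, sustained=True, compliance_sensitive=True, offers=offers).provider)   # hyperscaler-a
print(place("H100", 16, sustained=False, compliance_sensitive=False, offers=offers).provider)  # neocloud-c
```

In practice the hard part is the inputs: keeping the offer list current and deciding which workloads truly count as compliance-sensitive.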

Lever 5: Model Right-Sizing

A team with only one model in its stack is almost certainly spending too much. Different queries merit different models.

Current 2026 price ladder:

| Tier | Model | Rough cost ($/M input) |
|---|---|---|
| Frontier | GPT-4o, Claude Opus 4 | $15–$25 |
| Fast frontier | Claude Sonnet 4, Gemini 2.5 Pro | $3–$5 |
| Mid tier | GPT-4o-mini, Claude Haiku 4.5 | $0.15–$0.50 |
| Cheap tier | Llama-3.3-70B hosted | $0.30–$0.50 |
| Ultra cheap | Llama-3.1-8B hosted | $0.05–$0.10 |
| Your own | Self-hosted 70B FP8 | ~$0.15–$0.40 (blended) |

A task-appropriate ladder lets you run a classification at 1/50th the cost of its GPT-4o version. Router logic costs a little complexity; the savings compound.
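The simplest form of router is a static task-to-tier map. In this sketch the task names and routing rules are hypothetical, the prices are the low end of each range in the table above, and the monthly token volume is invented for illustration; a production router may instead score each request.

```python
# Illustrative $/M input prices: the low end of each range in the table above.
PRICE_PER_M_INPUT = {
    "frontier": 15.00,
    "fast_frontier": 3.00,
    "mid": 0.15,
    "ultra_cheap": 0.05,
}

# Hypothetical task-to-tier routing rules.
ROUTES = {
    "classification": "ultra_cheap",
    "extraction": "mid",
    "summarization": "fast_frontier",
    "complex_reasoning": "frontier",
}


def route(task: str) -> str:
    """Pick a tier for a task; unknown tasks fall back to the most capable tier."""
    return ROUTES.get(task, "frontier")


def input_cost_usd(task: str, input_tokens: int) -> float:
    """Input-token cost of a task at its routed tier's price."""
    return input_tokens / 1_000_000 * PRICE_PER_M_INPUT[route(task)]


monthly_tokens = 2_000_000_000  # hypothetical volume of classification input tokens
routed = input_cost_usd("classification", monthly_tokens)
unrouted = monthly_tokens / 1_000_000 * PRICE_PER_M_INPUT["frontier"]
print(f"routed ${routed:,.0f} vs all-frontier ${unrouted:,.0f} per month")
```

Defaulting unknown tasks to the frontier tier keeps quality safe while the routing table is built out; the savings only appear as tasks are explicitly mapped down.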

See AI FinOps: Tracking Token Spend for the attribution side and LLM Gateway Patterns for the routing side.

Attribution: The Foundation

You cannot cut what you don’t see. Every large AI shop ends up with the same three-layer attribution:

Layer 1: Per-workload

Which service, which team, which feature. Implemented via:

- Tags on cloud resources

- Virtual keys at the LLM gateway

- Service labels in Kubernetes
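Tags only help if they are present. A sketch of a coverage check, assuming resources and their monthly cost have already been exported from billing data into simple `(tags, cost)` pairs; the required tag keys and function names are hypothetical.

```python
# Hypothetical tag schema; use whatever keys your org standardizes on.
REQUIRED_TAGS = ("team", "service", "feature")


def missing_tags(tags: dict[str, str]) -> list[str]:
    """Required tag keys that are absent or empty on a resource."""
    return [key for key in REQUIRED_TAGS if not tags.get(key)]


def unattributed_share(resources: list[tuple[dict[str, str], float]]) -> float:
    """Fraction of spend on resources missing at least one required tag."""
    total = sum(cost for _, cost in resources)
    if total == 0:
        return 0.0
    untagged = sum(cost for tags, cost in resources if missing_tags(tags))
    return untagged / total


fleet = [
    ({"team": "search", "service": "reranker", "feature": "results"}, 120_000.0),
    ({"team": "support", "service": "ticket-bot"}, 60_000.0),  # no feature tag
    ({}, 20_000.0),                                            # forgotten notebook
]
print(f"{unattributed_share(fleet):.0%} of spend is unattributed")  # 40%
```

A rising unattributed share is an early sign that new resources are bypassing the tagging policy.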

Layer 2: Per-customer (for SaaS)

Which end customer is generating which spend. Implemented via:

- Session tracking through the gateway

- Usage events to analytics

- Per-customer invoice rollups

Layer 3: Per-business-outcome

Cost per resolved support ticket. Cost per generated document. Cost per qualified lead. This is where FinOps becomes a strategic conversation.
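The three layers can be computed from the same stream of cost events, provided each event carries a workload and a customer. A minimal sketch, assuming events have already been priced in dollars and outcome counts (such as tickets resolved) come from a separate system; `CostEvent` and `rollup` are illustrative names.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CostEvent:
    workload: str  # layer 1: service / team / feature
    customer: str  # layer 2: end customer (SaaS)
    usd: float


def rollup(events: list[CostEvent], outcomes: dict[str, int]) -> dict[str, dict[str, float]]:
    """Aggregate spend per workload and per customer, plus cost per business outcome.

    `outcomes` maps a workload to how many business outcomes it produced in
    the same period (e.g. support tickets resolved).
    """
    by_workload: dict[str, float] = defaultdict(float)
    by_customer: dict[str, float] = defaultdict(float)
    for e in events:
        by_workload[e.workload] += e.usd
        by_customer[e.customer] += e.usd
    # Layer 3: total workload spend divided by the outcomes it produced.
    per_outcome = {
        w: by_workload[w] / n for w, n in outcomes.items() if n > 0 and w in by_workload
    }
    return {"workload": dict(by_workload), "customer": dict(by_customer), "per_outcome": per_outcome}


events = [
    CostEvent("support-bot", "acme", 300.0),
    CostEvent("support-bot", "globex", 200.0),
    CostEvent("doc-gen", "acme", 150.0),
]
report = rollup(events, outcomes={"support-bot": 250})  # 250 tickets resolved
print(report["per_outcome"])  # {'support-bot': 2.0}
```

Dividing total workload spend by outcomes, rather than attributing individual calls, means failed or abandoned attempts are correctly counted in the cost of each success.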

Financial Structures Worth Knowing

As spend grows past a threshold, you gain access to commercial options:

Reserved capacity contracts

1-year or 3-year capacity at fixed pricing. Best for baseline load.

Enterprise agreements

Annual or multi-year commitments across multiple services. Hyperscalers will negotiate at $500k+/year.

Bare metal / dedicated racks

Lease racks in a colo. Buy or lease the GPUs. Eliminates cloud margin; adds ops overhead. Worth it above ~1000 GPUs sustained.

Owned infrastructure

Capex your own cluster. Best long-term economics; massive capex commitment; ops team required.

Most shops at $10M/year compute spend should have at least reserved capacity and an enterprise agreement. Bare metal is worth evaluating above $20M/year.
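Why there is a threshold at all: owning lowers the per-GPU-hour rate but adds a roughly fixed operations cost, so it only pays off once enough sustained GPUs share that overhead. A back-of-envelope sketch, assuming straight-line amortization and fully sustained usage; every input is a placeholder, not a market price, and the function names are illustrative.

```python
import math

HOURS_PER_MONTH = 730  # 365 * 24 / 12


def owned_rate_per_gpu_hour(capex_per_gpu: float, amortization_years: float,
                            opex_per_gpu_hour: float) -> float:
    """Owned cost per GPU-hour: straight-line capex amortization plus power/space/network opex."""
    return capex_per_gpu / (amortization_years * 365 * 24) + opex_per_gpu_hour


def breakeven_gpus(cloud_rate: float, owned_rate: float, fixed_ops_per_month: float) -> float:
    """Sustained GPU count at which per-GPU savings cover the fixed ops team cost."""
    margin = cloud_rate - owned_rate
    if margin <= 0:
        return math.inf  # owning never pays off at these rates
    return math.ceil(fixed_ops_per_month / (HOURS_PER_MONTH * margin))


# Every input below is a placeholder, not a market price.
owned = owned_rate_per_gpu_hour(capex_per_gpu=30_000, amortization_years=4, opex_per_gpu_hour=0.50)
print(f"owned: ${owned:.2f}/GPU-hour")
print(breakeven_gpus(cloud_rate=2.00, owned_rate=owned, fixed_ops_per_month=400_000))
```

With these made-up inputs the break-even lands in the high hundreds of GPUs; substitute your own quotes and ops budget before drawing conclusions.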

Organizational Structure

FinOps above $5M/year needs more than a dashboard. It needs an operating model:

- Dedicated FinOps role — one person minimum owns GPU cost as their primary metric

- Monthly cost review — engineering leadership + finance; standing agenda

- Quarterly forecasting — projected spend vs budget with assumptions

- Architecture council — major new workloads get cost-reviewed before build

- Budgets with teeth — team budgets that, if exceeded, trigger conversations

The human structure matters more than any tool. Teams with “a FinOps dashboard” save little. Teams with “Tomás reviews the GPU bill weekly and escalates deviations” save a lot.
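The tooling half of a budget with teeth can stay simple: project month-end spend from month-to-date spend and escalate before the overrun, not after. A sketch assuming spend is roughly linear within the month; the warning ratio, status names, and functions are hypothetical.

```python
def projected_month_end(mtd_spend: float, day_of_month: int, days_in_month: int) -> float:
    """Straight-line projection of month-end spend from month-to-date spend."""
    if not 1 <= day_of_month <= days_in_month:
        raise ValueError("day_of_month must be within the month")
    return mtd_spend / day_of_month * days_in_month


def budget_status(mtd_spend: float, day_of_month: int, days_in_month: int,
                  budget: float, warn_ratio: float = 0.9) -> str:
    """'over', 'warn', or 'ok' based on projected month-end spend vs budget."""
    projected = projected_month_end(mtd_spend, day_of_month, days_in_month)
    if projected > budget:
        return "over"
    if projected > warn_ratio * budget:
        return "warn"
    return "ok"


print(budget_status(mtd_spend=180_000, day_of_month=10, days_in_month=30, budget=500_000))  # over
```

The status string is only the trigger; per the operating model above, what matters is that an "over" reliably lands on someone's agenda.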

The Common Traps

1. Saving 10% by optimizing the wrong line item. Focus on the top 3 workloads. Everything else is noise.

2. Ignoring capacity commits when scaling down. You committed to 100 H100s for 3 years; you now need 50. Either sublease, stay committed, or eat the loss.

3. Hyperscaler credits mask bad habits. “AWS gave us $5M in credits” → team doesn’t optimize → credits run out → cliff.

4. Chasing every new GPU generation. B200's cost/performance gain is real, but upgrading mid-reservation means paying for the new hardware while the old reservation keeps billing.

5. Under-investing in observability. You cannot manage what you cannot measure. Spend is a sacred cow until you have the data to question it.

The Roadmap From Chaos To Control

If your company’s GPU spend has crept above $500k/month without FinOps discipline:

Month 1: Baseline attribution. Who, what, how much. Accept the number is bigger than anyone thinks.

Month 2: Rate card negotiation with current providers. 10–20% gains from talking.

Month 3: Reserved commitments for baseline load. 20–30% on committed portion.

Month 4: Right-sizing. Audit workload → GPU match. 10–20% gains.

Month 5: Multi-cloud evaluation. Neoclouds vs hyperscalers for suitable workloads. 20–30% gains on shifted workloads.

Month 6: Utilization drive. Consolidate, autoscale aggressively, kill idle.

Month 7+: Structural levers — model right-sizing, disaggregation, distillation.

A team that executes this sequence typically cuts their compute bill 30–50% in the first year.
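Why the monthly gains do not simply add up: each step applies to the bill left after the previous ones, so savings compound multiplicatively. A sketch under that assumption; the rates are drawn from within each month's range above, while the 65% baseline share and 30% shifted share are illustrative choices. Months 6 and 7+ are omitted because no figure is given for them.

```python
def remaining_fraction(steps: list[tuple[float, float]]) -> float:
    """Fraction of the original bill left after applying savings steps in sequence.

    Each step is (share of the current bill it touches, savings rate on that share).
    """
    remaining = 1.0
    for affected_share, savings_rate in steps:
        remaining *= 1 - affected_share * savings_rate
    return remaining


# Assumed inputs, drawn from within each month's range above.
roadmap = [
    (1.00, 0.10),  # month 2: rate card negotiation on the whole bill
    (0.65, 0.25),  # month 3: reserved commitments on the baseline portion
    (1.00, 0.10),  # month 4: right-sizing
    (0.30, 0.25),  # month 5: shift 30% of spend to neoclouds
]
print(f"first-year reduction: {1 - remaining_fraction(roadmap):.0%}")  # 37%
```

Even with conservative inputs and no credit for the utilization drive, the compounded result lands inside the 30–50% range.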

The Short Version

GPU FinOps at scale is a discipline, not a dashboard. The big wins come from:

- Commitment structure (reserved + baseline)

- Workload-to-GPU matching

- Utilization above 70% sustained

- Multi-provider mix

- Model-tier routing

These aren’t glamorous. They’re boring. They’re also where the dollars are. Companies that invest here run at 20–40% lower unit costs than those that don’t. At serious scale, that’s the difference between a profitable AI product and one that isn’t.

Further Reading

- AI FinOps: Tracking Token Spend Across Your Org

- Multi-Cloud GPU Strategy: Avoiding Lock-in

- FP8 and Quantization: Serving LLMs at Half the Cost

- Disaggregated Inference: Prefill, Decode, and the New Serving Topology

Compute bill growing faster than revenue? Let’s talk — we run FinOps engagements for AI-heavy companies at every scale.

Related Posts

AI FinOps: Tracking Token Spend Across Your Org

LLM bills grew from invisible to huge in the span of a year. A complete FinOps playbook for AI workloads: attribution, budgets, alerting, and the reports finance actually wants.

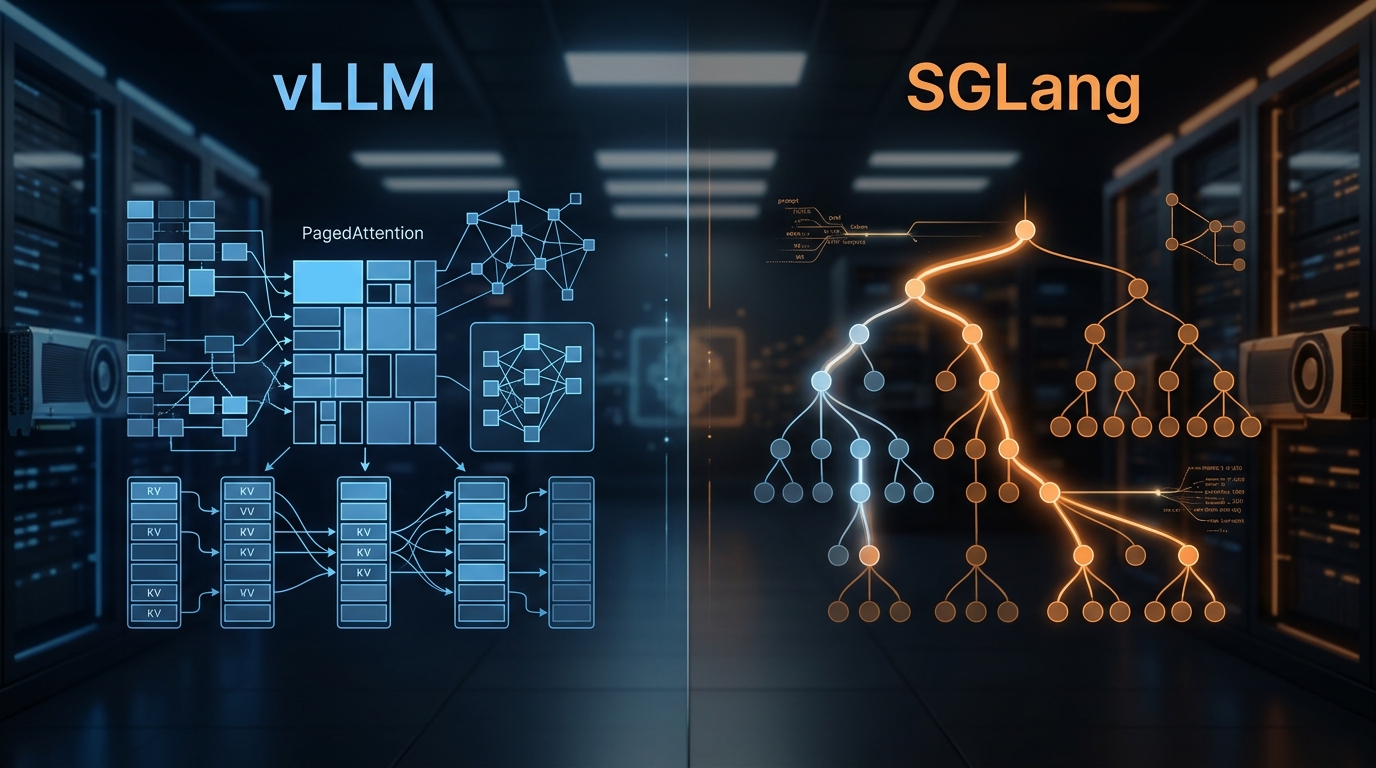

vLLM vs SGLang: Inference Engine Comparison 2026

We've deployed both at scale. Here's what the benchmarks actually show, where RadixAttention beats PagedAttention, and which engine to pick for your workload.

State of AI Infrastructure 2026: Mid-Year Reality Check

A mid-2026 ground-truth report: B200 reality, SGLang's $400M spinout, agent infra going mainstream, and the three patterns dominating production.