Building an AI Platform Team: Roles, Tools, and Rituals

AI platform engineering is a discipline distinct from both MLOps and generic platform engineering. This is a practical guide to scoping, staffing, and operating an AI platform team, from first hire to org-wide enablement.


At some point, every company with serious AI ambitions realizes the same thing: product teams shouldn’t be choosing their own vector database, writing their own retry logic, or re-implementing observability. That’s when the AI platform team is born.

This post covers what we’ve learned about building, staffing, and operating these teams — what they own, how they’re different from MLOps teams, when to start one, and the rituals that make them effective instead of bureaucratic.

What An AI Platform Team Actually Owns

The explicit surface area:

- Inference infrastructure — GPU fleets, serving stacks (vLLM, TGI, Triton), autoscaling, SLOs

- LLM gateway — routing, retries, cost attribution, guardrails

- Observability — tracing, evals, dashboards

- Context stack — vector DBs, memory layers, retrieval pipelines

- Tool registry — MCP servers, tool authentication

- Agent orchestration platform — workflow engine, durable state

- Developer experience — SDKs, templates, docs, golden paths

- Governance — cost, safety, compliance controls

The implicit surface area:

- Enablement — teach product engineers how to build good AI features

- Quality engineering — eval harnesses, regression testing, red-team testing

- Vendor management — contracts with providers, capacity negotiations

- Incident response — when AI production breaks, this team is the on-call

How It’s Different From MLOps

MLOps is about training, deploying, and monitoring traditional ML models — classification, regression, recommendation. The discipline evolved around Kubeflow, MLflow, feature stores, and model registries.

AI platform work (LLM-focused) differs in three structural ways:

- Less training, more serving. Most teams use foundation models. Training pipelines are smaller; inference and retrieval are dominant.

- More runtime orchestration. Agents, tool use, RAG — the complexity is runtime, not training.

- New observability primitives. Traces, evals, prompts — not the feature/model/prediction stack MLOps built for.
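To make the new primitives concrete, here is a minimal sketch of what a trace span for a single LLM call might record, using only the Python standard library. The `LLMSpan` fields, the `llm_span` helper, and the in-memory `COLLECTED` list are hypothetical stand-ins for a real tracing backend such as Langfuse or LangSmith, not their APIs.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List, Optional


@dataclass
class LLMSpan:
    """One traced LLM call. Field names are illustrative, not a standard schema."""
    name: str
    model: str
    prompt_version: str  # ties behaviour back to a specific prompt revision
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    input_tokens: int = 0
    output_tokens: int = 0
    latency_s: float = 0.0
    error: Optional[str] = None


COLLECTED: List[LLMSpan] = []  # hypothetical stand-in for a tracing backend


@contextmanager
def llm_span(name: str, model: str, prompt_version: str) -> Iterator[LLMSpan]:
    span = LLMSpan(name=name, model=model, prompt_version=prompt_version)
    start = time.perf_counter()
    try:
        yield span  # the caller fills in token counts from the provider response
    except Exception as exc:
        span.error = repr(exc)  # record the failure, then let it propagate
        raise
    finally:
        span.latency_s = time.perf_counter() - start
        COLLECTED.append(span)


# Usage: wrap a (here simulated) model call.
with llm_span("summarize", model="model-a", prompt_version="summarize@v3") as span:
    span.input_tokens, span.output_tokens = 812, 95
```

What matters is the shape of the record: prompt version and model sit next to tokens, latency, and errors, which is what lets a team trace a quality regression back to a specific prompt change.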

A good MLOps team can grow into an AI platform team. It’s not automatic — different tools, different patterns, different failure modes.

For shops doing both (traditional ML + LLM systems), many consolidate under one “AI platform” umbrella with sub-teams.

When To Start The Team

Before the team exists, platform work is spread across product teams. Each team builds its own gateway, its own tracing, its own evals. The result is duplication.

Signs it’s time:

- Multiple product teams are building AI features independently

- You have 3+ services calling OpenAI / Anthropic directly

- Nobody can answer “who spent how much on LLMs last month”

- You’ve had 2+ production incidents caused by prompt changes

- Total AI spend exceeds roughly $50k/month

- Infrastructure decisions (which model, which vector DB) are being re-made per team

Most companies hit these signals around 30–100 engineers with meaningful AI investment.

Don’t wait once these signs appear. Every month of delay adds duplication, and the longer you wait, the more painful consolidation becomes.

The First Hire

Before building a team, hire a platform lead. This person will:

- Audit current state (what’s built, by whom, how)

- Make 3–5 recommendations for consolidation

- Hire the next 2–3 people

- Embed with product teams while building

The right first hire has:

- Experience shipping LLM-based production systems (not just research or demos)

- Comfort across the stack: inference servers, gateways, vector DBs, observability

- Good judgment on build vs buy

- Strong written communication (they will write a lot of docs)

- Patience for internal politics

Avoid:

- Researchers looking to do platform work “for a year”

- Generic platform engineers with no LLM-specific experience

- Hands-off architects who don’t ship

This is a staff+ IC or a tech lead role. Compensate accordingly.

The First Five Hires

Beyond the lead, the initial team composition that works:

- Inference infrastructure engineer — GPU, Kubernetes, vLLM/TGI. Owns the serving stack.

- Developer experience engineer — SDKs, templates, internal docs. Owns enablement.

- Observability / evals engineer — tracing, eval harness, dashboards. Owns quality signal.

- ML / model specialist — model selection, fine-tuning, evaluation judgment. Bridge to product teams on quality.

- Site reliability engineer (AI-specific) — on-call, incident response, capacity planning. Focus on operational excellence.

Beyond this core, grow in the direction of the bottleneck. Many AI platform teams never exceed 10–15 people, even at 500-engineer companies, because the leverage is high.

The Platform Products

An AI platform team ships products internally. Some common ones:

The Internal LLM API

A single OpenAI-compatible endpoint that all services call. Routing, auth, rate limiting, observability, cost attribution. Built on LiteLLM / Portkey or custom.
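A minimal sketch of the gateway's core loop, assuming each provider is wrapped as a plain Python callable: try the primary route with exponential backoff on transient errors, fall back to the next route, and attribute the cost of the successful call to the calling team. `Gateway`, `Route`, `TransientError`, and the per-token prices are hypothetical; in practice LiteLLM or Portkey supply routing and retries, and this logic would sit behind the OpenAI-compatible HTTP endpoint.

```python
import time
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


class TransientError(Exception):
    """Hypothetical: raised by a provider wrapper for retryable failures (429s, timeouts)."""


@dataclass
class Completion:
    text: str
    input_tokens: int
    output_tokens: int


@dataclass
class Route:
    name: str
    call: Callable[[str], Completion]  # wraps a vendor SDK in a real deployment
    usd_per_1m_input: float            # hypothetical prices
    usd_per_1m_output: float


class Gateway:
    def __init__(self, routes: List[Route], max_retries: int = 2,
                 base_delay_s: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.routes = routes  # primary first, then fallbacks in order
        self.max_retries = max_retries
        self.base_delay_s = base_delay_s
        self.sleep = sleep
        self.spend_usd: Dict[str, float] = defaultdict(float)  # team -> USD

    def complete(self, team: str, prompt: str) -> Completion:
        last_error: Optional[Exception] = None
        for route in self.routes:
            for attempt in range(self.max_retries + 1):
                try:
                    result = route.call(prompt)
                except TransientError as exc:
                    last_error = exc
                    if attempt < self.max_retries:
                        self.sleep(self.base_delay_s * 2 ** attempt)  # exponential backoff
                    continue
                # Cost attribution: charge the successful call to the calling team.
                self.spend_usd[team] += (
                    result.input_tokens * route.usd_per_1m_input
                    + result.output_tokens * route.usd_per_1m_output
                ) / 1_000_000
                return result
        raise RuntimeError("all routes failed") from last_error


# Usage: the primary is rate-limited, so the call falls back to the secondary route.
def rate_limited(prompt: str) -> Completion:
    raise TransientError("429 Too Many Requests")


def backup(prompt: str) -> Completion:
    return Completion(text="ok", input_tokens=1000, output_tokens=500)


sleeps: List[float] = []
gateway = Gateway([Route("primary", rate_limited, 3.0, 15.0),
                   Route("backup", backup, 0.5, 1.5)], sleep=sleeps.append)
result = gateway.complete("search-team", "hello")
```

Injecting `sleep` keeps the backoff testable; the per-team `spend_usd` ledger is the piece that answers “who spent how much on LLMs last month.”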

The Eval Harness

A framework where teams define test cases, golden answers, and quality metrics, integrated into CI. The platform team owns the harness; product teams own their test sets.
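A minimal sketch of that split, assuming a model is any callable from prompt to text: product teams supply lists of `EvalCase`, and the harness scores them and returns a pass/fail verdict that a CI job can turn into an exit code. The names, the exact-match metric, and the 0.9 threshold are illustrative choices, not a specific framework's API.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class EvalCase:
    prompt: str
    golden: str  # the expected ("golden") answer, owned by the product team


def exact_match(output: str, golden: str) -> float:
    """Simplest possible metric; real suites add fuzzy, rubric, or LLM-judge scoring."""
    return 1.0 if output.strip().lower() == golden.strip().lower() else 0.0


def run_suite(model: Callable[[str], str], cases: List[EvalCase],
              metric: Callable[[str, str], float] = exact_match,
              threshold: float = 0.9) -> Tuple[float, bool]:
    """Score every case and report whether the mean clears the CI threshold."""
    if not cases:
        raise ValueError("an eval suite needs at least one case")
    scores = [metric(model(case.prompt), case.golden) for case in cases]
    mean_score = sum(scores) / len(scores)
    return mean_score, mean_score >= threshold


# Usage with a stubbed model. In CI, a failing suite would exit non-zero,
# e.g. sys.exit(0 if passed else 1), to block the merge.
cases = [EvalCase("Capital of France?", "Paris"), EvalCase("2 + 2?", "4")]
stub_answers = {"Capital of France?": "paris", "2 + 2?": "5"}
score, passed = run_suite(lambda prompt: stub_answers[prompt], cases)
```

Keeping the metric a parameter is the design point: the platform team maintains one runner, while each product team can plug in the scoring that fits its feature.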

The Agent Runtime

A managed environment for running agents: workflow engine, tool registry, state store, observability. Product teams bring agent logic; platform team runs it.
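The division of labour can be sketched in a few lines, assuming agent logic is a function from step history to the next action. The platform owns the `ToolRegistry` and the `run_agent` loop; the product team owns only the agent function. All names are hypothetical, and the plain dict `store` stands in for a durable state store backed by a workflow engine.

```python
from typing import Any, Callable, Dict, List

ToolFn = Callable[..., Any]


class ToolRegistry:
    """Hypothetical platform-owned registry of callable tools."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolFn] = {}

    def register(self, name: str) -> Callable[[ToolFn], ToolFn]:
        def decorator(fn: ToolFn) -> ToolFn:
            if name in self._tools:
                raise ValueError(f"tool {name!r} already registered")
            self._tools[name] = fn
            return fn
        return decorator

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool {name!r}")
        return self._tools[name](**kwargs)


# Agent logic (owned by a product team) maps the step history to the next action:
# ("tool", name, kwargs) or ("final", answer).
AgentStep = Callable[[List[dict]], tuple]


def run_agent(run_id: str, agent: AgentStep, tools: ToolRegistry,
              store: Dict[str, List[dict]], max_steps: int = 10) -> Any:
    history = store.setdefault(run_id, [])  # stand-in for a durable state store
    for _ in range(max_steps):
        action = agent(history)
        if action[0] == "final":
            return action[1]
        _, name, kwargs = action
        result = tools.call(name, **kwargs)
        history.append({"tool": name, "args": kwargs, "result": result})
    raise RuntimeError(f"run {run_id} exceeded {max_steps} steps")


# Usage: a one-tool agent.
tools = ToolRegistry()


@tools.register("add")
def add(a: int, b: int) -> int:
    return a + b


def adder_agent(history: List[dict]) -> tuple:
    if not history:
        return ("tool", "add", {"a": 2, "b": 3})
    return ("final", f"sum is {history[-1]['result']}")


store: Dict[str, List[dict]] = {}
answer = run_agent("run-1", adder_agent, tools, store)
```

Because each step's result is persisted under the run ID, calling `run_agent` again with the same ID hands the agent its recorded history; in this example the completed tool call is not repeated.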

The Vector DB Service

A managed vector DB (or many) accessible via a unified API. Handles multi-tenancy, quotas, cost attribution. Product teams don’t need to know which vector DB.
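A minimal sketch of the unified API, assuming an in-memory backend and cosine similarity: each tenant gets its own namespace, queries search only the caller's namespace, and a per-tenant vector quota is enforced on insert. `VectorService` and `QuotaExceeded` are hypothetical; a real service would delegate storage and search to Qdrant, Pinecone, or similar behind the same interface.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple


class QuotaExceeded(Exception):
    pass


def _cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class VectorService:
    """Hypothetical multi-tenant facade; the in-memory dicts stand in for a real vector DB."""

    def __init__(self, max_vectors_per_tenant: int) -> None:
        self.max_vectors = max_vectors_per_tenant
        self._spaces: Dict[str, Dict[str, List[float]]] = defaultdict(dict)

    def upsert(self, tenant: str, doc_id: str, vector: List[float]) -> None:
        space = self._spaces[tenant]
        # Overwriting an existing id is allowed; only new ids count against the quota.
        if doc_id not in space and len(space) >= self.max_vectors:
            raise QuotaExceeded(f"{tenant} reached its quota of {self.max_vectors} vectors")
        space[doc_id] = vector

    def query(self, tenant: str, vector: List[float], k: int = 3) -> List[Tuple[str, float]]:
        # Only the caller's namespace is searched, so tenants never see each other's data.
        space = self._spaces.get(tenant, {})
        scored = [(doc_id, _cosine(vector, v)) for doc_id, v in space.items()]
        return sorted(scored, key=lambda item: item[1], reverse=True)[:k]


svc = VectorService(max_vectors_per_tenant=2)
svc.upsert("team-a", "doc1", [1.0, 0.0])
svc.upsert("team-a", "doc2", [0.0, 1.0])
svc.upsert("team-b", "doc9", [1.0, 0.0])
top = svc.query("team-a", [0.9, 0.1], k=1)
```

Since product teams only ever see `upsert` and `query`, the platform team can swap or add backends without touching product code.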

The Dev Playground

A low-friction environment for prototyping. Notebook-friendly, safe credentials, cost caps.
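Cost caps can be as simple as reserving an estimated cost before each call and refusing the call once a per-user budget would be exceeded. This sketch assumes cost can be bounded from the prompt's token count and the request's maximum output tokens; `CostCap`, `BudgetExceeded`, `estimate_usd`, and the prices are hypothetical.

```python
class BudgetExceeded(Exception):
    pass


def estimate_usd(prompt_tokens: int, max_output_tokens: int,
                 usd_per_1m_input: float, usd_per_1m_output: float) -> float:
    """Upper-bound cost of one call: assume the model uses its full output allowance."""
    return (prompt_tokens * usd_per_1m_input
            + max_output_tokens * usd_per_1m_output) / 1_000_000


class CostCap:
    """Per-user playground budget (hypothetical limits)."""

    def __init__(self, limit_usd: float) -> None:
        self.limit_usd = limit_usd
        self.spent_usd = 0.0

    def reserve(self, estimated_usd: float) -> None:
        # Checked before the call is sent; as long as the estimate is an
        # upper bound, the cap cannot be overshot.
        if self.spent_usd + estimated_usd > self.limit_usd:
            raise BudgetExceeded(
                f"call would bring spend to ${self.spent_usd + estimated_usd:.2f}, "
                f"over the ${self.limit_usd:.2f} cap")
        self.spent_usd += estimated_usd


cap = CostCap(limit_usd=5.00)
cost = estimate_usd(prompt_tokens=200_000, max_output_tokens=100_000,
                    usd_per_1m_input=3.0, usd_per_1m_output=15.0)
cap.reserve(cost)
```

Reserving the worst case up front is conservative; a fuller version would refund the difference once the provider reports actual token usage.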

The Safety Toolkit

Libraries for PII redaction, prompt-injection detection, output filtering. Used across services.
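A deliberately naive sketch of the redaction piece, using regular expressions from the standard library. The three patterns are assumptions for illustration: they miss many PII formats and over-match others (a date such as 2024-01-15 looks like a phone number to `PHONE`). The value of a shared toolkit is that better detectors can replace them once, for every service.

```python
import re

# Illustrative patterns only. Order matters: SSN runs before PHONE,
# otherwise the looser PHONE pattern would claim SSN-shaped strings first.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each match with a typed placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Reach jane.doe@example.com or +1 (415) 555-0134."))
# Reach [EMAIL] or [PHONE].
```

Typed placeholders (rather than blanking text) keep prompts readable for the model and make redaction rates easy to count in traces.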

Not all of these on day one. Build in order of what unblocks product teams most.

Rituals That Keep It Effective

Platform teams without rituals devolve into infrastructure fortresses. Rituals we see work:

Weekly office hours

Product teams bring questions. Platform team answers. Sometimes open problems get co-designed.

Monthly architecture review

Product teams propose new AI features; platform team reviews for feasibility, cost, and alignment with the platform.

Quarterly platform roadmap review

Platform team presents: what shipped, what’s next, why. Product teams give feedback. Adjustments made.

Incident retros

When AI features break in production, the retro includes the platform team. Over time, patterns emerge and the roadmap adjusts.

Internal blog / changelog

Platform team writes about what they built, why it matters, how to use it. Reduces “how do I…” tickets.

Shared Slack channel

One channel where product teams ask questions and the platform team answers. Over time it becomes search-indexed institutional memory.

In our experience, teams that keep these rituals ship 2–3x more platform value than teams that don’t. The time spent on rituals pays back in reduced ad-hoc support load.

Metrics Worth Tracking

Platform teams are easy to measure badly. A few metrics that actually mean something:

- Number of services using the platform. Growing means adoption; flat means people are going around you.

- Time from AI-feature idea to production. Down over time means the platform is actually accelerating.

- Month-over-month change in cost per 1M tokens. The platform team’s work should push this down (caching, routing, rightsizing).

- Incident rate attributable to prompt / model changes. Evals should drive this toward zero.

- Developer satisfaction. Run a quarterly NPS-style survey of product engineers; an average score below 7 (out of 10) signals problems.
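Two of these metrics reduce to simple arithmetic. The sketch below computes cost per 1M tokens from a month's spend and token volume, the relative month-over-month change, and a standard NPS (percentage of 9–10 scores minus percentage of 0–6 scores) alongside the mean score. It assumes the “below 7” threshold refers to the mean 0–10 score, and the spend and token figures are made up.

```python
from statistics import mean
from typing import List, Tuple


def cost_per_1m_tokens(spend_usd: float, tokens: int) -> float:
    return spend_usd * 1_000_000 / tokens


def month_over_month(prev: Tuple[float, int], curr: Tuple[float, int]) -> float:
    """Relative change in cost per 1M tokens; negative means the platform got cheaper."""
    before = cost_per_1m_tokens(*prev)
    return (cost_per_1m_tokens(*curr) - before) / before


def nps(scores: List[int]) -> float:
    """Standard NPS on 0-10 scores: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("no survey responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Made-up figures: (spend in USD, tokens processed) per month.
march, april = (42_000.0, 6_000_000_000), (45_000.0, 7_500_000_000)
delta = month_over_month(march, april)  # about -14%: spend rose, unit cost fell

survey = [9, 10, 7, 6, 8, 9]
needs_attention = mean(survey) < 7  # the "below 7" threshold, read as a mean
```

The example shows why unit cost beats raw spend as a platform metric: total spend went up in April, yet cost per 1M tokens dropped from $7.00 to $6.00.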

Avoid:

- LoC / commits shipped (measures motion, not progress)

- Number of tools built (encourages proliferation)

- Uptime alone (necessary but not sufficient)

Build vs Buy

These are the platform team’s most consequential decisions. Rough rules:

Always buy (or open-source adopt):

- LLM gateway (LiteLLM / Portkey — don’t build)

- Tracing backend (Langfuse / LangSmith — don’t build)

- Vector DB (Pinecone / Qdrant — don’t build)

- Workflow engine (Temporal / Inngest — don’t build)

Sometimes build:

- Custom gateway policies (on top of LiteLLM)

- Evaluation harnesses (company-specific)

- Agent templates (reflect your product patterns)

Rarely build:

- New inference servers (use vLLM)

- New model runtimes (use what exists)

The fastest way to sink a platform team is to have them re-implement open-source tooling. Customize, don’t recreate.

Common Failure Modes

1. Ivory tower. Platform team builds beautiful systems nobody uses. Fix: embed platform engineers with product teams part-time.

2. Too much governance. Every change goes through platform review. Product teams go around. Fix: opinionated defaults, easy golden paths, governance only on high-risk decisions.

3. Under-resourced. One person trying to support 15 product teams. Things break; frustration mounts. Fix: grow headcount in proportion to adoption.

4. Wrong leader. A lead without production LLM experience can’t make good architectural calls. Fix: make production LLM experience a hard requirement when hiring the lead.

5. Fragmented ownership. Different teams own different pieces of the platform with no integration. Fix: single throat to choke for the whole surface.

Evolution Over Time

A typical platform team evolution:

Year 1: “Stop the bleeding.” Consolidate gateway, tracing, core serving. Small team, hands-on.

Year 2: “Enable acceleration.” DX focus. SDKs, templates, docs. Product teams ship AI features 3x faster.

Year 3: “Optimize and govern.” FinOps, quality evals, compliance. Platform team becomes cost and risk owner.

Year 4+: “Strategic differentiation.” Custom models, specialized infra, multi-region. Platform is the moat.

The Short Version

- Start an AI platform team when duplication crosses a threshold (multiple product teams, multiple direct LLM callers, growing spend)

- First hire is a staff-level IC with real LLM production experience

- Build the platform as a product: users (internal engineers), features, roadmap, adoption metrics

- Invest in rituals; they’re the difference between a useful team and a bottleneck

- Buy / adopt open-source heavily; build only where you have real differentiation

- Measure adoption, speed, cost, incident rate; avoid vanity metrics

Good AI platform teams compound their value. Three years in, they’re the reason your company can ship AI features faster and cheaper than competitors. Starting well matters more than starting fast.


Building or scaling an AI platform team? Reach out — we help with platform strategy, hiring, and technical direction.

Related Posts

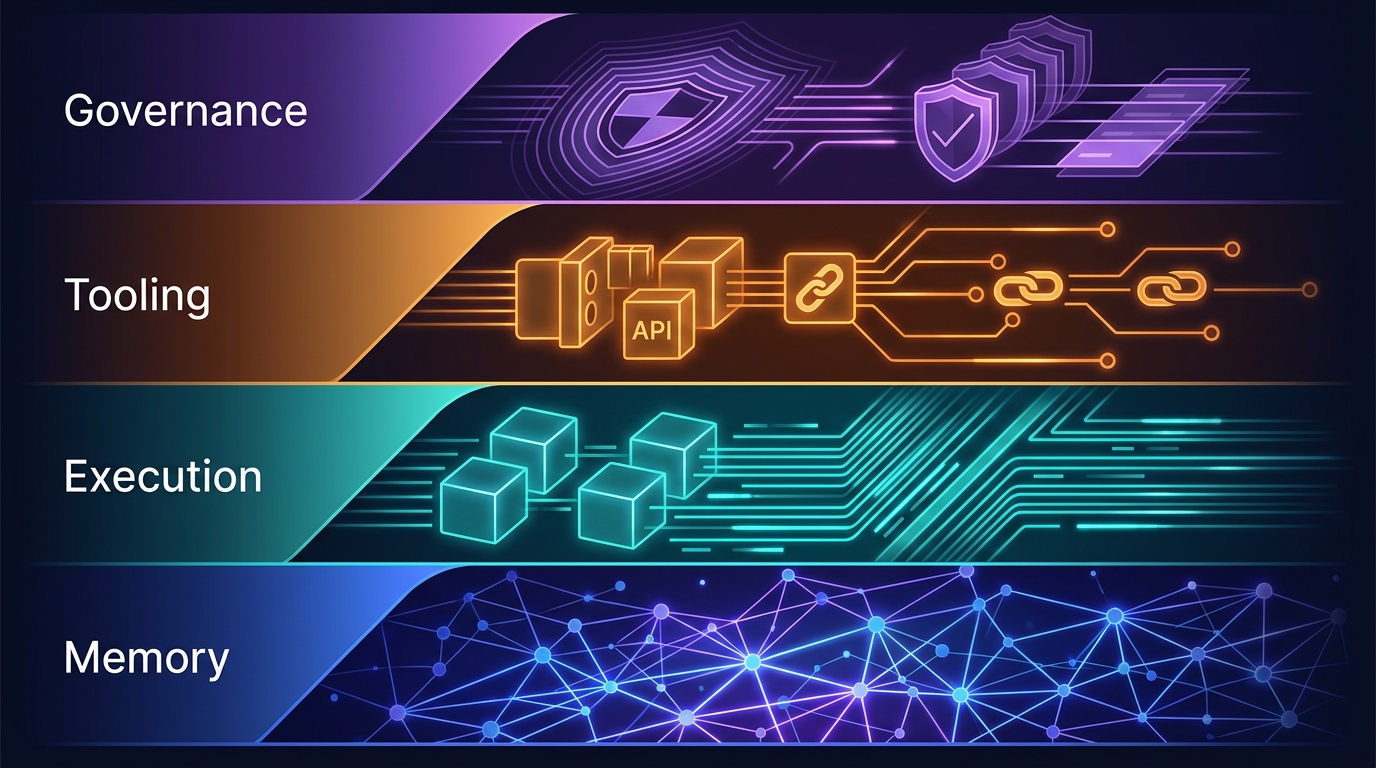

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.

GPU Clouds: RunPod vs Lambda vs CoreWeave — June 2026

RunPod H100 at $2.69/hr. Lambda at $4.29/hr. CoreWeave at $6.16/hr — but requires 8-GPU minimums. Which GPU cloud makes sense for your agent workloads?

vLLM and SGLang Are Converging — and That Changes the Inference Stack

Both engines now share NVIDIA's FlashInfer kernels and expose identical OpenAI-compatible APIs. Meanwhile, SGLang spun out as RadixArk with $100M in seed funding, and vLLM hit 2M weekly installs. The inference layer is consolidating faster than anyone expected — here's what that means for teams building on top of it.