Disaggregated Inference: Prefill, Decode, and the New Serving Topology

Walk into a well-tuned LLM inference stack in 2024 and you’d see one vLLM replica per GPU doing everything: prefill (processing the prompt) and decode (generating tokens) all interleaved. It worked. It was “good enough.”

Walk in today and you increasingly see two distinct fleets: prefill workers and decode workers, communicating over a fast network. This is disaggregated inference, and on realistic production workloads it delivers 30–50% throughput improvements on the same hardware.

The Insight

Prefill and decode have fundamentally different resource profiles.

Prefill — processing the user’s prompt. Runs one forward pass over the entire input. Parallelizes well across the input sequence. Bounded by FLOPS (compute).

Decode — generating tokens one at a time. Runs one forward pass per output token, each small. Poorly parallelizable (autoregressive). Bounded by memory bandwidth (reading weights and KV cache every step).
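
A back-of-the-envelope arithmetic-intensity estimate makes the split concrete. The sketch below assumes a dense 70B-parameter model with 16-bit weights, roughly 2 FLOPs per parameter per token, and approximate H100 SXM figures (about 989 TFLOPS dense BF16 and 3.35 TB/s). It ignores attention and KV cache traffic, so treat the result as a rough guide rather than a measurement.

```python
# Rough roofline estimate: FLOPs performed per byte of weights read.
# All constants are approximations chosen for illustration.

PARAMS = 70e9            # assumed dense model size
BYTES_PER_PARAM = 2      # 16-bit weights
PEAK_FLOPS = 989e12      # approximate H100 SXM dense BF16 throughput
PEAK_BW = 3.35e12        # H100 SXM memory bandwidth in bytes/s


def arithmetic_intensity(tokens_per_pass: int) -> float:
    """FLOPs per weight byte when one forward pass processes this many tokens."""
    flops = 2 * PARAMS * tokens_per_pass      # ~2 FLOPs per parameter per token
    bytes_read = PARAMS * BYTES_PER_PARAM     # weights are read once per pass
    return flops / bytes_read


# Intensity above which the GPU runs out of compute before bandwidth.
ridge = PEAK_FLOPS / PEAK_BW


def bound(tokens_per_pass: int) -> str:
    """Classify a forward pass as compute-bound or memory-bound."""
    return "compute" if arithmetic_intensity(tokens_per_pass) > ridge else "memory"


print(f"ridge point: {ridge:.0f} FLOPs/byte")
print("prefill, 2048-token prompt:", bound(2048))
print("decode, batch of 32 sequences:", bound(32))
```

Under these assumptions a prefill pass over a 2K-token prompt sits well above the ridge point, while a decode step stays below it until the batch grows to a few hundred sequences.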

When you run them on the same GPU interleaved (the traditional approach):

- Prefill phases starve decode phases of compute

- Decode phases leave compute idle while saturating memory bus

- Long-prompt requests cause latency spikes for decode-in-progress

- Batching efficiency is compromised: you batch prefill or decode well, not both

Disaggregated inference runs prefill on one type of GPU (or pool) optimized for compute, decode on another optimized for bandwidth, and ships KV cache between them.

Why Now

Three conditions had to be right for this to be practical:

- Fast inter-GPU networking. NVLink, InfiniBand, and high-speed Ethernet (400 Gbps+) can move KV cache between nodes fast enough.

- Block-based KV cache. PagedAttention-style block layouts make KV cache transferable as discrete chunks.

- Mature inference servers. vLLM, SGLang, and TensorRT-LLM added first-class support for disaggregated modes in 2025.

All three hit production readiness in 2025. In 2026, disaggregation moved from experimental to standard for large deployments.

The Architecture

    [ Request ]
         │
         ▼
    [ Router / Scheduler ]
         │
         ├──────────────────┐
         ▼                  ▼
    [ Prefill pool ]   [ Decode pool ]
    (large GPUs,       (bandwidth-optimized,
     compute-optimized) ~2x decode per $)
         │                  │
         └─────── KV ───────┘
            cache transfer
         (NVLink / InfiniBand)

A request arrives:

- Router picks a prefill worker. Prefill worker processes the prompt, produces KV cache.

- KV cache is serialized and transferred to a decode worker.

- Decode worker generates tokens, streams to user.
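
The three steps above can be sketched as a toy in-process simulation. Every class and method name here (`Router`, `PrefillWorker`, `DecodeWorker`, `KVCache`) is hypothetical and invented for illustration; a real deployment replaces `receive` with a network transfer and the token loop with actual model calls.

```python
from dataclasses import dataclass, field
from itertools import cycle


@dataclass
class KVCache:
    request_id: str
    num_tokens: int  # stand-in for the real key/value tensors


@dataclass
class PrefillWorker:
    name: str

    def prefill(self, request_id: str, prompt_tokens: list[int]) -> KVCache:
        # One forward pass over the whole prompt produces the KV cache.
        return KVCache(request_id, len(prompt_tokens))


@dataclass
class DecodeWorker:
    name: str
    caches: dict[str, KVCache] = field(default_factory=dict)

    def receive(self, kv: KVCache) -> None:
        # In a real system this is the network transfer of KV blocks.
        self.caches[kv.request_id] = kv

    def decode(self, request_id: str, max_new_tokens: int):
        kv = self.caches[request_id]
        for step in range(max_new_tokens):
            kv.num_tokens += 1  # each step appends one token's keys and values
            yield step          # placeholder token id


class Router:
    def __init__(self, prefill_pool, decode_pool):
        self._prefill = cycle(prefill_pool)  # round-robin for simplicity
        self._decode = cycle(decode_pool)

    def handle(self, request_id, prompt_tokens, max_new_tokens):
        prefill_worker = next(self._prefill)
        decode_worker = next(self._decode)
        kv = prefill_worker.prefill(request_id, prompt_tokens)  # step 1
        decode_worker.receive(kv)                               # step 2
        return list(decode_worker.decode(request_id, max_new_tokens))  # step 3
```

The point of the sketch is the handoff: the prefill worker never generates tokens, and the decode worker never sees the raw prompt, only the KV cache.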

Key design choices:

- Pool sizing. Prefill and decode have different throughput. For typical workloads, decode pools are 2–3x the size of prefill pools.

- Network topology. Colocate prefill and decode in the same rack if possible; cross-rack works but adds latency.

- KV transfer protocol. Usually NCCL-based over InfiniBand, or a dedicated transfer layer built directly on GPUDirect RDMA.
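
For pool sizing, a first-order estimate balances the GPU-seconds each request spends in each phase. The function names and the per-GPU throughput figures below are made up for illustration; substitute measured numbers from your own hardware and model.

```python
def decode_to_prefill_ratio(prompt_tokens, output_tokens,
                            prefill_tok_per_s, decode_tok_per_s):
    """Decode GPUs needed per prefill GPU so both pools sustain the same request rate."""
    prefill_gpu_seconds = prompt_tokens / prefill_tok_per_s
    decode_gpu_seconds = output_tokens / decode_tok_per_s
    return decode_gpu_seconds / prefill_gpu_seconds


def split_pool(total_gpus, ratio):
    """Split a fixed GPU budget into (prefill, decode) counts for a given ratio."""
    if total_gpus < 2:
        raise ValueError("need at least one GPU per pool")
    prefill = max(1, round(total_gpus / (1 + ratio)))
    prefill = min(prefill, total_gpus - 1)  # always leave one GPU for decode
    return prefill, total_gpus - prefill


# Hypothetical per-GPU throughput: 20,000 prompt tok/s, 1,000 generated tok/s.
ratio = decode_to_prefill_ratio(2000, 300, 20_000, 1_000)
print(f"decode:prefill ratio = {ratio:.1f}")
print("8-GPU split (prefill, decode):", split_pool(8, ratio))
```

This ignores queueing and batching effects, so it is a starting point for load testing rather than a final answer.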

The Numbers

A realistic workload (Llama-3.1-70B, 2K-token average prompt, 300-token average response):

Non-disaggregated (monolithic vLLM, 8 H100):

- Aggregate throughput: ~15,400 tok/s

- Prefill TTFT (time to first token) P50: 180ms

- Decode ITL (inter-token latency) P50: 42ms

Disaggregated (2 H100 prefill + 6 H100 decode):

- Aggregate throughput: ~22,800 tok/s (+48%)

- Prefill TTFT P50: 160ms

- Decode ITL P50: 28ms (-33%)

Better throughput and better latency, same hardware budget.
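
The percentages follow directly from the raw figures listed above. A quick check, using only those numbers:

```python
def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


throughput = pct_change(15_400, 22_800)  # aggregate tok/s
ttft = pct_change(180, 160)              # TTFT P50 in ms
itl = pct_change(42, 28)                 # ITL P50 in ms

print(f"throughput: {throughput:+.0f}%")
print(f"TTFT P50:   {ttft:+.0f}%")
print(f"ITL P50:    {itl:+.0f}%")
```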

The win is larger when:

- Prompts are long (high prefill cost)

- Many concurrent requests (better batching efficiency)

- Prefill and decode have diverging optimal batch sizes

The win is smaller when:

- Prompts are short (prefill doesn’t dominate)

- Low concurrency (batching benefits smaller)

- Network between prefill and decode is slow

Hardware Heterogeneity

Disaggregation opens up using different GPUs for different roles:

- Prefill on H100 / B200 (compute-heavy)

- Decode on MI300X / L40S (chosen for memory bandwidth or capacity per dollar rather than peak FLOPS)

A production cluster might run H100 for prefill (where compute matters) and MI300X for decode (where memory bandwidth matters and MI300X’s 5.3 TB/s outpaces H100’s 3.35 TB/s).

Cost-per-token drops substantially with right-sized hardware.
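
To see why bandwidth is the number that matters for decode, consider a lower bound on the time for one decode step: every weight byte has to be read at least once. The sketch below uses the bandwidth figures quoted above and assumes 70B parameters in 16-bit precision sharded evenly across 4 GPUs; it ignores KV cache reads and inter-GPU communication, so real step times will be higher.

```python
WEIGHT_BYTES = 70e9 * 2  # assumed: 70B parameters at 2 bytes each


def min_step_ms(bandwidth_tb_s: float, num_gpus: int) -> float:
    """Lower bound on one decode step, reading all weights once."""
    aggregate_bytes_per_s = bandwidth_tb_s * 1e12 * num_gpus
    return WEIGHT_BYTES / aggregate_bytes_per_s * 1e3


h100 = min_step_ms(3.35, num_gpus=4)
mi300x = min_step_ms(5.3, num_gpus=4)

print(f"H100 floor:   {h100:.1f} ms per step")
print(f"MI300X floor: {mi300x:.1f} ms per step")
print(f"ratio:        {h100 / mi300x:.2f}x")
```

The ratio between the two floors is simply the ratio of the bandwidth figures, which is the sense in which decode throughput tracks memory bandwidth.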

Implementation Options

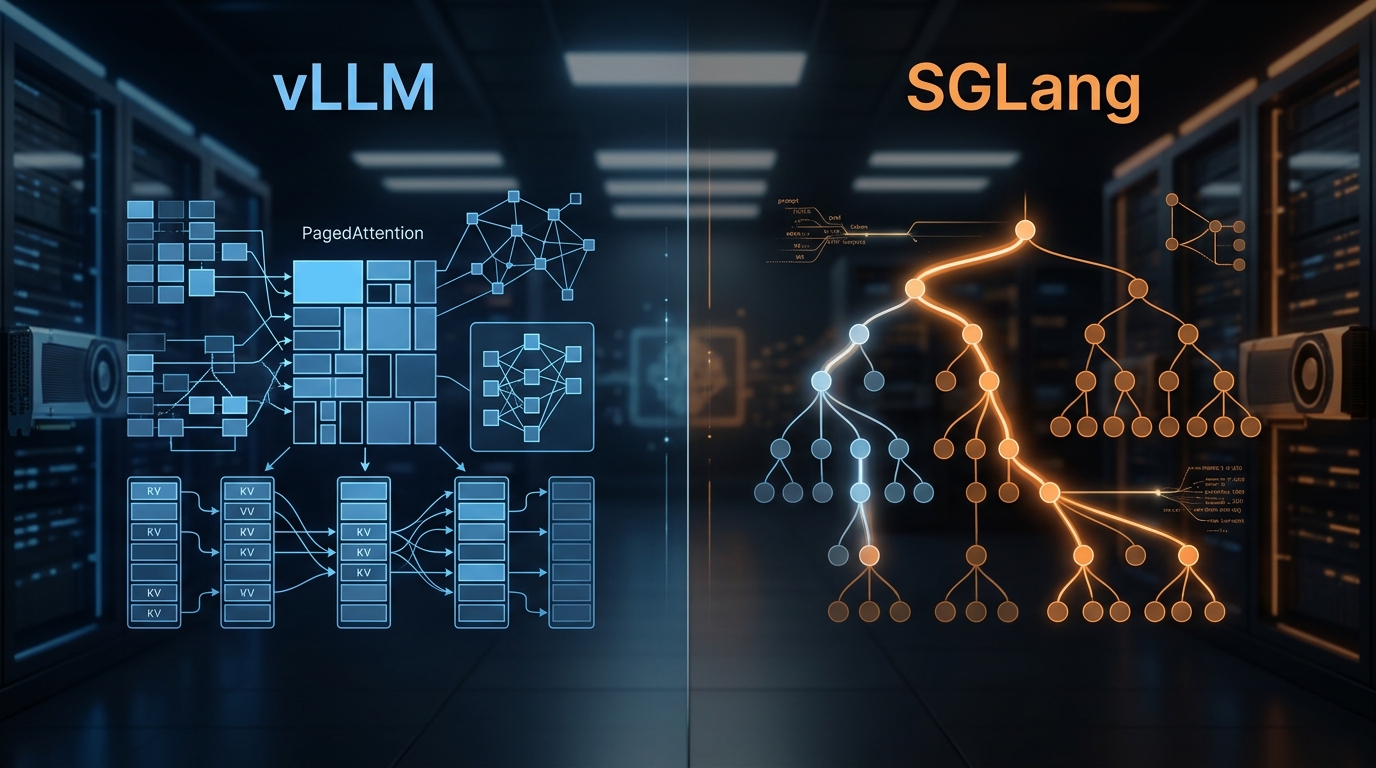

vLLM

vLLM 0.7+ supports disaggregated serving. Configuration involves:

- Running dedicated prefill-only and decode-only replicas

- A scheduler / router that assigns requests

- KV cache transfer via vLLM’s built-in NCCL-based transfer or LMCache integration

Still maturing; mostly deployed by larger shops.

SGLang

SGLang supports disaggregated serving with similar patterns. Strong for agent workloads where prompts often change but decode is long.

TensorRT-LLM

NVIDIA’s production TensorRT-LLM supports disaggregation natively. Best performance, most engineering investment to deploy.

DistServe / Mooncake / Custom

Research and open-source projects pioneered the architecture, notably DistServe, from researchers at Peking University and UC San Diego. Some teams still use research implementations; most migrate to vLLM or TRT-LLM once those servers' features catch up.

When Disaggregation Is Worth It

Worth it:

- Large deployments (8+ GPUs sustained)

- Long prompts (RAG with big contexts, agent workloads with big system prompts)

- Mixed prefill/decode needs

- Cost-per-token matters enough to justify the complexity

Not worth it:

- Small deployments (1–2 GPUs)

- Short-prompt workloads (chat with minimal history)

- Teams without strong networking / infrastructure capacity

- Early-stage products iterating on prompts

A rough threshold: if your monthly GPU bill is under $50k, disaggregation is probably premature optimization.

Operational Considerations

Pool autoscaling

Prefill and decode pools scale independently. Your autoscaler needs to understand:

- Prefill queue depth (prompts waiting)

- Decode queue depth (requests waiting for KV transfer or decode slots)

- Inter-pool backpressure

If decode can’t keep up, prefill backs off. If prefill can’t keep up, decode workers sit idle. This needs smarter coordination than a standard Kubernetes Horizontal Pod Autoscaler (HPA), which scales each deployment on its own metrics.
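
A minimal sketch of a coupled scaling decision, assuming queue depth is the only signal. `PoolState`, `target_per_replica`, and `max_decode` are hypothetical names invented for this example, not settings from any particular autoscaler.

```python
from dataclasses import dataclass


@dataclass
class PoolState:
    replicas: int            # replicas currently running
    queue_depth: int         # requests waiting for this pool
    target_per_replica: int  # hypothetical tuning knob: queued requests per replica


def desired_replicas(pool: PoolState) -> int:
    # Ceiling division, with a floor of one replica.
    return max(1, -(-pool.queue_depth // pool.target_per_replica))


def plan(prefill: PoolState, decode: PoolState, max_decode: int):
    """Return (prefill_replicas, decode_replicas) for the next scaling step."""
    want_prefill = desired_replicas(prefill)
    want_decode = min(desired_replicas(decode), max_decode)
    # Backpressure: if decode is capped and still behind, do not add prefill
    # capacity, since that would only grow the decode queue.
    decode_saturated = desired_replicas(decode) > max_decode
    if decode_saturated:
        want_prefill = min(want_prefill, prefill.replicas)
    return want_prefill, want_decode
```

For example, `plan(PoolState(2, 40, 10), PoolState(6, 200, 10), max_decode=8)` holds prefill at its current 2 replicas because decode is already at its cap and still behind.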

Scheduling policy

When a request arrives, which prefill worker gets it? Which decode worker gets the result?

Policies:

- Round-robin (simple)

- Least-loaded (lower tail latency)

- Locality-aware (same rack when possible)

- Priority-based (premium customers first)

For mixed workloads (long and short prompts), consider two prefill pools: a “fast” one for short prompts and a “bulk” one for long prompts.
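
One way to combine the two-pool idea with least-loaded routing is sketched below. The 4096-token cutoff and the `Worker` fields are assumptions for illustration; a production router would also account for locality and priority.

```python
from dataclasses import dataclass

LONG_PROMPT_TOKENS = 4096  # hypothetical cutoff between "fast" and "bulk"


@dataclass
class Worker:
    name: str
    pool: str               # "fast" or "bulk"
    queued_tokens: int = 0  # prompt tokens waiting on this worker


def pick_prefill_worker(workers, prompt_tokens):
    """Route by prompt length, then choose the least-loaded worker in that pool."""
    pool = "bulk" if prompt_tokens >= LONG_PROMPT_TOKENS else "fast"
    candidates = [w for w in workers if w.pool == pool]
    if not candidates:
        raise RuntimeError(f"no workers in pool {pool!r}")
    chosen = min(candidates, key=lambda w: w.queued_tokens)
    chosen.queued_tokens += prompt_tokens  # account for the new work
    return chosen
```

Measuring load in queued tokens rather than queued requests keeps one very long prompt from looking as cheap as one short prompt.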

KV cache transfer efficiency

Transfer is on the critical path. Optimizations:

- Begin staging the KV cache for transfer as soon as prefill finishes, even before a decode worker is assigned

- Compress KV cache during transfer

- Persist KV cache to a shared fabric (LMCache, Mooncake) so multiple decode workers can draw from it
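
To get a feel for how much data is on the critical path, the sketch below estimates KV cache size and ideal transfer time. The model shape (80 layers, 8 KV heads, head dimension 128) is our understanding of Llama-3.1-70B's published configuration, and the transfer time assumes a 400 Gbps link running at full line rate with no protocol overhead, which real transfers will not reach.

```python
def kv_bytes_per_token(layers, kv_heads, head_dim, bytes_per_elem=2):
    """Bytes of KV cache per token; the leading 2 covers keys and values."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem


def transfer_ms(num_tokens, link_gbps, **model_shape):
    """Ideal transfer time in milliseconds at full line rate."""
    total_bytes = num_tokens * kv_bytes_per_token(**model_shape)
    bytes_per_second = link_gbps * 1e9 / 8
    return total_bytes / bytes_per_second * 1e3


llama70b = dict(layers=80, kv_heads=8, head_dim=128)  # assumed model shape

per_token = kv_bytes_per_token(**llama70b)
ms_2k = transfer_ms(2048, 400, **llama70b)

print(f"KV per token: {per_token / 1024:.0f} KiB")
print(f"2K-token prompt: {2048 * per_token / 2**20:.0f} MiB, {ms_2k:.1f} ms at 400 Gbps")
```

Under these assumptions a 2K-token prompt carries several hundred MiB of KV cache, which is why link speed and compression both show up on the optimization list.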

Common Challenges

1. Cold start latency. A new decode worker has to receive KV cache before it can start. Warming strategies matter.

2. KV cache size spikes. Very long prompts produce huge KV caches. Can overwhelm transfer bandwidth.

3. Fault recovery. If a decode worker dies mid-generation, where does the request recover to? Need checkpointing or quick re-prefill.

4. Observability complexity. A request’s trace spans multiple GPUs and potentially multiple pods. Must thread trace IDs carefully.

5. Debugging. Subtle issues (off-by-one in KV layouts) don’t show up in small tests; manifest under load.
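
For fault recovery, the "quick re-prefill" option can be sketched as a small journal kept by the router: it records tokens as they stream out, so a replacement decode worker can be seeded with the prompt plus everything generated so far. `RequestJournal` and its fields are hypothetical names for this example, and the trace ID is carried along so the recovered request stays in the same trace.

```python
from dataclasses import dataclass, field


@dataclass
class RequestJournal:
    trace_id: str
    prompt_tokens: list[int]
    max_new_tokens: int
    generated: list[int] = field(default_factory=list)

    def record(self, token: int) -> None:
        """Call once per token streamed to the user."""
        self.generated.append(token)

    def resume_request(self) -> dict:
        """Build the request to re-prefill on a healthy worker."""
        return {
            "trace_id": self.trace_id,
            # Re-prefill over prompt + output so far rebuilds the lost KV cache.
            "prefill_tokens": self.prompt_tokens + self.generated,
            "max_new_tokens": self.max_new_tokens - len(self.generated),
        }
```

The trade-off is recomputation: re-prefill costs a full pass over the sequence so far, whereas checkpointing the KV cache costs storage and transfer bandwidth.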

What’s Next

Patterns emerging on top of disaggregation:

Cached KV with persistent storage

KV cache persisted to a fast distributed store (Mooncake, LMCache). Any decode worker can pull any KV cache. Sessions can pause and resume cleanly.

Multi-tier KV

Hot KV in GPU memory, warm KV in CPU memory, cold KV on NVMe or object storage. Agent-style workloads with long sessions benefit substantially.
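
A minimal sketch of the tiering logic, assuming least-recently-used demotion and promotion to the GPU tier on access. `TieredKVStore` is a hypothetical class that tracks placement only; a real system would move tensors between devices and would likely bound the cold tier too.

```python
from collections import OrderedDict


class TieredKVStore:
    TIERS = ("gpu", "cpu", "nvme")  # hot, warm, cold

    def __init__(self, gpu_slots: int, cpu_slots: int):
        # The cold tier is treated as unbounded in this sketch.
        self.capacity = {"gpu": gpu_slots, "cpu": cpu_slots, "nvme": float("inf")}
        self.tiers = {t: OrderedDict() for t in self.TIERS}

    def put(self, session_id, kv, tier="gpu"):
        store = self.tiers[tier]
        store[session_id] = kv
        store.move_to_end(session_id)  # mark as most recently used
        if len(store) > self.capacity[tier]:
            victim, victim_kv = store.popitem(last=False)  # least recently used
            next_tier = self.TIERS[self.TIERS.index(tier) + 1]
            self.put(victim, victim_kv, next_tier)         # demote one tier down

    def get(self, session_id):
        for tier in self.TIERS:
            if session_id in self.tiers[tier]:
                kv = self.tiers[tier].pop(session_id)
                self.put(session_id, kv, "gpu")  # promote on access
                return kv
        return None

    def tier_of(self, session_id):
        return next((t for t in self.TIERS if session_id in self.tiers[t]), None)
```

A session that goes quiet drifts down to the cold tier and is pulled back to GPU memory the next time it is read.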

Fine-grained disaggregation

Not just prefill vs decode — also separating:

- Embedding lookup

- Attention computation

- Feedforward computation

- KV cache storage

Experimental but promising for very large-scale serving.

The Short Version

Disaggregated inference is the inference pattern that won 2025. It delivers 30–50% throughput improvements on realistic workloads by matching hardware to work type.

If you’re running 8+ GPUs sustained, evaluate it. If you’re running 1–4, stick with monolithic serving — the complexity isn’t worth the gain at that scale.

Your inference server probably already supports it (vLLM, SGLang, TensorRT-LLM). Deployment is the real work: the scheduler, the pool sizing, the observability, the fault recovery. Budget real engineering time.
