vLLM vs TGI vs Triton: LLM Inference Server Benchmarks

The three dominant LLM inference servers compared head-to-head on throughput, latency, features, and operational complexity. Benchmarks on H100, A100, and L40S — and which one to pick when.

If you’re self-hosting an LLM in 2025, three production-grade inference servers dominate: vLLM, Hugging Face TGI, and NVIDIA Triton + TensorRT-LLM. All three support continuous batching, tensor parallelism, quantization, and OpenAI-compatible APIs. The differences show up in the margins — throughput under load, latency tails, operational burden, and ecosystem fit.

This post reports benchmarks we ran across the three on identical hardware, plus a decision framework for choosing between them.

The Contenders

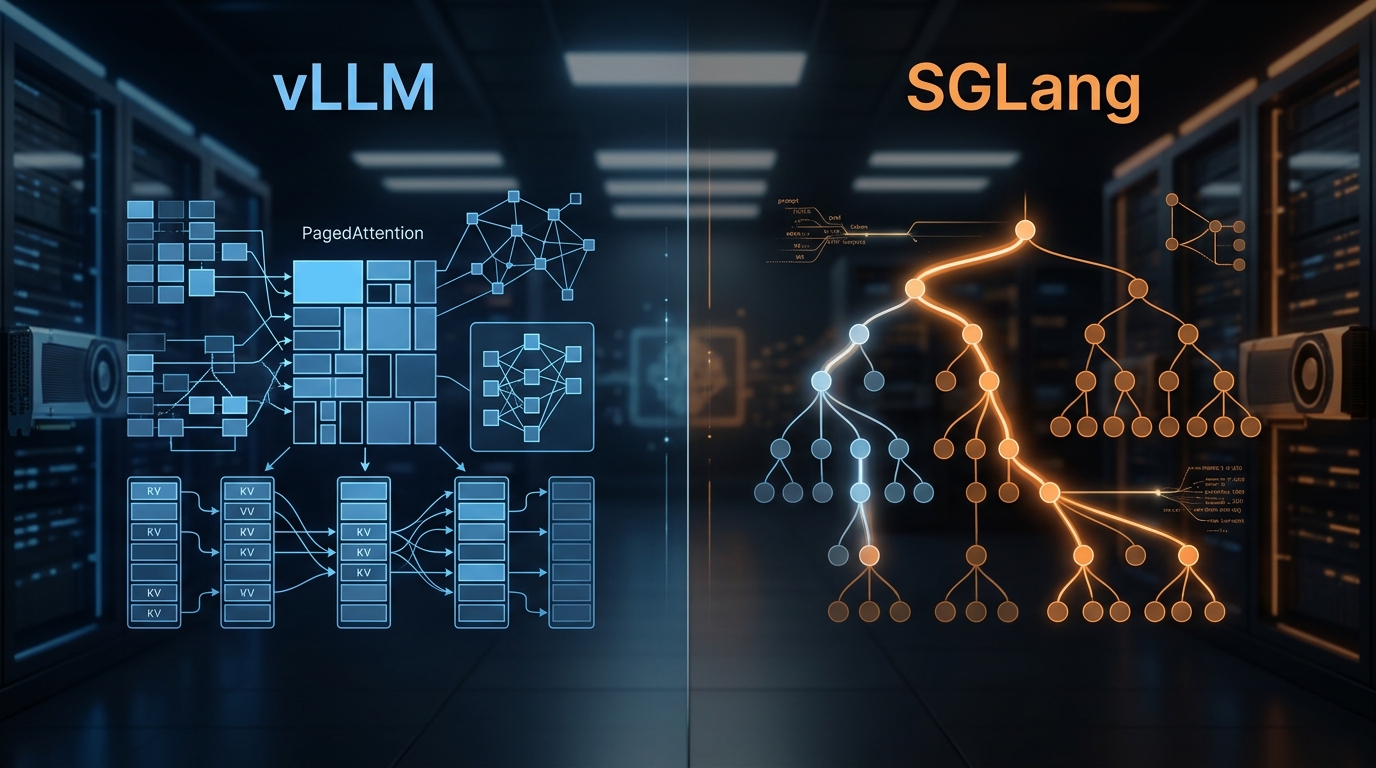

vLLM — Open-source, Python/CUDA. Reference implementation for PagedAttention. Broadest community, fastest feature velocity. Our default recommendation for most teams.

TGI (Text Generation Inference) — Hugging Face’s server, Rust/Python. Tight integration with HF Hub. Slightly more opinionated on configuration. Battle-tested at HF’s own Inference Endpoints.

NVIDIA Triton + TensorRT-LLM — Enterprise-grade serving platform from NVIDIA. TensorRT-LLM optimizes model engines; Triton serves them. Highest raw throughput if you invest in the engine build pipeline.

Honorable mentions, covered briefly at the end: SGLang, LMDeploy, LightLLM, MLC-LLM.

Benchmark Setup

Fair benchmarking is hard. We tried to minimize the usual pitfalls.

Hardware: Single-node 8x H100 80GB (via CoreWeave), also tested on 2x A100 80GB and 1x L40S 48GB.

Models:

- Llama-3.1-8B-Instruct (FP16)

- Llama-3.1-70B-Instruct (FP8 on H100, AWQ on A100/L40S)

Workload: 10k sampled messages from LMSys-Chat-1M, filtered to realistic production length distribution (avg 320 input / 180 output tokens, P99 at 2500 / 1200).

Concurrency: ramped from 1 to 256 simultaneous requests.
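A ramp like this can be driven by a semaphore-bounded async client. The sketch below is a minimal illustration, not the harness used for these benchmarks: `fake_request` is a hypothetical stand-in for a real streaming call to the server, and the doubling schedule (1, 2, 4, ... 256) is an assumption, since the exact steps are not stated.

```python
import asyncio
import time


async def fake_request(prompt: str) -> int:
    """Hypothetical stand-in for an inference call; returns tokens 'generated'."""
    await asyncio.sleep(0.001)
    return len(prompt.split())


async def run_level(prompts, concurrency, send=fake_request):
    """Send every prompt with at most `concurrency` requests in flight."""
    sem = asyncio.Semaphore(concurrency)
    in_flight = 0
    peak = 0

    async def worker(prompt):
        nonlocal in_flight, peak
        async with sem:
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await send(prompt)
            finally:
                in_flight -= 1

    start = time.perf_counter()
    tokens = await asyncio.gather(*(worker(p) for p in prompts))
    elapsed = time.perf_counter() - start
    return {
        "concurrency": concurrency,
        "peak_in_flight": peak,
        "tokens": sum(tokens),
        "tok_per_s": sum(tokens) / elapsed,
    }


def ramp_levels(lo=1, hi=256):
    """Doubling schedule from lo up to hi (an assumed schedule)."""
    levels = []
    c = lo
    while c <= hi:
        levels.append(c)
        c *= 2
    return levels
```

Running `run_level` once per entry in `ramp_levels()` gives one throughput point per concurrency level. This is closed-loop load: a new request starts as soon as a slot frees up, which is different from replaying requests at a fixed arrival rate.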

What we measured:

- Aggregate throughput (tokens/sec across all concurrent requests)

- Time to first token (TTFT), P50 and P99

- Inter-token latency (ITL), P50 and P99

- End-to-end request latency, P99

- GPU utilization and KV cache utilization
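The latency and throughput metrics in this list can all be derived from per-token arrival timestamps. The sketch below shows one way to do it, assuming a nearest-rank percentile and ITL defined as the gap between consecutive tokens of the same request; the function names are illustrative and other tools may define these metrics slightly differently.

```python
import math


def percentile(values, p):
    """Nearest-rank percentile (one of several common definitions)."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def request_metrics(sent_at, token_times):
    """TTFT, inter-token gaps, and end-to-end latency for one request (seconds)."""
    ttft = token_times[0] - sent_at
    itl = [b - a for a, b in zip(token_times, token_times[1:])]
    e2e = token_times[-1] - sent_at
    return ttft, itl, e2e


def summarize(requests, wall_clock_s):
    """requests: list of (sent_at, token_times) pairs observed during one run."""
    ttfts, itls, e2es, total_tokens = [], [], [], 0
    for sent_at, token_times in requests:
        ttft, itl, e2e = request_metrics(sent_at, token_times)
        ttfts.append(ttft)
        itls.extend(itl)  # ITL is pooled across all requests
        e2es.append(e2e)
        total_tokens += len(token_times)
    return {
        "throughput_tok_s": total_tokens / wall_clock_s,
        "ttft_p50": percentile(ttfts, 50),
        "ttft_p99": percentile(ttfts, 99),
        "itl_p50": percentile(itls, 50),
        "itl_p99": percentile(itls, 99),
        "e2e_p99": percentile(e2es, 99),
    }
```

Note that aggregate throughput divides total generated tokens by wall-clock time for the whole run, so it is not the sum of per-request token rates.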

Every server was tuned with sensible production settings — not default, but not cherry-picked either. We used each project’s own recommendations as a starting point.

Llama-3.1-8B Results (1x H100 80GB)

At concurrency 64 (sweet spot for single H100):

| Server | Throughput (tok/s) | TTFT P50 | TTFT P99 | ITL P50 | End-to-end P99 |
|---|---|---|---|---|---|
| vLLM 0.6.x | 4,840 | 62ms | 310ms | 18ms | 7.2s |
| TGI 2.3.x | 4,590 | 58ms | 265ms | 19ms | 7.9s |
| Triton + TRT-LLM | 5,210 | 48ms | 220ms | 16ms | 6.8s |

TensorRT-LLM wins throughput by ~8% over vLLM (~14% over TGI). Its TTFT P50 is 17–23% lower and its ITL P50 11–16% lower. The gap is real but modest for small models.

Llama-3.1-70B Results (4x H100 80GB, TP=4)

At concurrency 128:

| Server | Throughput (tok/s) | TTFT P50 | TTFT P99 | ITL P50 | End-to-end P99 |
|---|---|---|---|---|---|
| vLLM 0.6.x | 6,420 | 180ms | 1.2s | 42ms | 15.1s |
| TGI 2.3.x | 6,180 | 190ms | 1.3s | 44ms | 16.4s |
| Triton + TRT-LLM | 8,290 | 145ms | 920ms | 36ms | 12.6s |

Triton pulls ahead more clearly at 70B: ~29% more throughput than vLLM (~34% more than TGI) and a TTFT P50 roughly 19–24% lower. TensorRT-LLM’s kernel optimizations matter more at larger scale.
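The relative gaps can be recomputed directly from the two results tables above. The snippet below is a small worked calculation; the helper names are ours, and the only inputs are the throughput and TTFT P50 values copied from the tables.

```python
def pct_gain(new, base):
    """Percent increase of `new` over `base` (higher is better, e.g. throughput)."""
    return (new - base) / base * 100


def pct_reduction(new, base):
    """Percent decrease of `new` below `base` (lower is better, e.g. latency)."""
    return (base - new) / base * 100


# (throughput tok/s, TTFT P50 ms), copied from the 8B and 70B tables.
results = {
    "8B": {"vLLM": (4840, 62), "TGI": (4590, 58), "Triton": (5210, 48)},
    "70B": {"vLLM": (6420, 180), "TGI": (6180, 190), "Triton": (8290, 145)},
}

for model, rows in results.items():
    trt_tput, trt_ttft = rows["Triton"]
    for server in ("vLLM", "TGI"):
        tput, ttft = rows[server]
        print(
            f"{model} Triton vs {server}: "
            f"+{pct_gain(trt_tput, tput):.1f}% throughput, "
            f"-{pct_reduction(trt_ttft, ttft):.1f}% TTFT P50"
        )
```

Which server you treat as the baseline changes the headline number noticeably, so it is worth stating the comparison explicitly when quoting a percentage.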

Prefix Caching Impact (RAG Workload, 2K Shared System Prompt)

Real-world RAG-style workloads often have a large shared system prompt. With prefix caching enabled:

| Server | Throughput (tok/s) | Throughput vs baseline |
|---|---|---|
| vLLM + prefix caching | 9,110 | +42% |
| TGI + prefix caching | 8,640 | +39% |
| Triton (with KV reuse) | 10,420 | +26% |

Prefix caching is a bigger multiplier for vLLM and TGI than for Triton, because Triton’s baseline is already higher. All three benefit significantly.
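The table does not say which run the uplift is measured against. Back-solving from the numbers suggests the Llama-3.1-70B results: dividing each cached throughput by its uplift lands within 1% of the 70B figures. This is an inference from the published values, not something stated in the post, and the helper below is just that arithmetic.

```python
def implied_baseline(with_cache_tok_s, uplift_pct):
    """Baseline throughput implied by a cached throughput and its percent uplift."""
    return with_cache_tok_s / (1 + uplift_pct / 100)


# (throughput with prefix caching, uplift %), copied from the table above.
prefix_rows = {"vLLM": (9110, 42), "TGI": (8640, 39), "Triton": (10420, 26)}

# Throughput from the Llama-3.1-70B table, assumed here to be the baseline.
baseline_70b = {"vLLM": 6420, "TGI": 6180, "Triton": 8290}

for server, (cached, uplift) in prefix_rows.items():
    implied = implied_baseline(cached, uplift)
    absolute_gain = cached - baseline_70b[server]
    print(
        f"{server}: implied baseline {implied:.0f} tok/s, "
        f"70B table {baseline_70b[server]} tok/s, "
        f"absolute gain {absolute_gain} tok/s"
    )
```

Under that assumption the absolute gains (roughly 2,100 to 2,700 tok/s) are much closer together than the percentages, which is consistent with the point that Triton's smaller multiplier comes from its higher starting point.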

Feature Comparison

| Feature | vLLM | TGI | Triton + TRT-LLM |
|---|---|---|---|
| Continuous batching | ✅ | ✅ | ✅ |
| Tensor parallelism | ✅ | ✅ | ✅ |
| Pipeline parallelism | Partial | Partial | ✅ |
| FP8 | ✅ | ✅ | ✅ |
| AWQ, GPTQ | ✅ | ✅ | ✅ |
| Prefix caching | ✅ | ✅ | ✅ (KV reuse) |
| Speculative decoding | ✅ | ✅ | ✅ |
| Structured output | ✅ (Outlines/guided) | Basic | ✅ |
| Multi-LoRA serving | ✅ | Partial | ✅ |
| Chunked prefill | ✅ | ✅ | ✅ |
| Disaggregated serving | Partial | No | ✅ |
| OpenAI-compatible API | ✅ | ✅ | ✅ (via front-end) |
| Multi-model per server | Partial | No | ✅ |
| AMD GPU support | ✅ | ✅ | No |
| Engine build required | No | No | Yes |

The key asymmetry: TensorRT-LLM requires an ahead-of-time engine build for each (model, hardware, config) combination. This is hours of CI work per model. vLLM and TGI load weights directly.
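One common way to keep that build cost under control is to key each built engine on the full (model, hardware, config) tuple, so CI rebuilds only when something in the tuple changes. The sketch below illustrates the idea only: `EngineSpec`, its fields, and `needs_build` are hypothetical names, and nothing here calls the TensorRT-LLM or Triton APIs.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EngineSpec:
    """Hypothetical description of one engine build; fields are illustrative."""

    model: str
    gpu: str
    tensor_parallel: int
    dtype: str
    max_batch_size: int
    max_input_len: int


def engine_key(spec: EngineSpec) -> str:
    """Stable content hash of the spec, usable as a cache key or artifact name."""
    payload = json.dumps(asdict(spec), sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()[:16]


def needs_build(spec: EngineSpec, built_keys: set) -> bool:
    """True when no cached engine exists for this exact combination."""
    return engine_key(spec) not in built_keys
```

For example, the same model targeted at H100 and A100 produces two different keys and therefore two builds, which is exactly the multiplication that makes the pipeline expensive.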

Operational Burden

vLLM:

- pip install vllm; run server; done

- Configuration via CLI flags

- Logs are Python-ey but readable

- Broad community on GitHub, Discord

TGI:

- Docker image from HF; run container

- Configuration via env vars — well-documented

- Rust internals; logs are structured

- Backed by HF; responsive team

Triton + TensorRT-LLM:

- Build TensorRT-LLM engine (requires Docker + nvidia-container-toolkit + specific CUDA versions)

- Write Triton config.pbtxt files

- Deploy Triton server container

- Debug Triton’s multi-layer stack (Python backend, C++ server, engine)

The operational gap is real. A fresh vLLM deployment takes 20 minutes. A fresh TensorRT-LLM + Triton deployment, including engine build pipeline, takes a week of engineer time the first time.

Latency Behavior Under Load

Throughput is one view; latency tails are another. At concurrency 256 on Llama-3.1-70B:

| Server | ITL P50 | ITL P99 | Failures |
|---|---|---|---|
| vLLM | 48ms | 210ms | 0.3% (mostly timeouts) |
| TGI | 52ms | 260ms | 0.4% |
| Triton | 40ms | 160ms | 0.1% |

Triton has tighter tails, which matters for latency-sensitive user experiences. vLLM and TGI are comparable.

The Decision Framework

Pick vLLM if:

- You want maximum flexibility and feature velocity

- You’re willing to trade ~10–30% throughput for 10x operational simplicity

- You need AMD GPU support

- You serve a lot of different models / LoRA variants

- You have no strong reason to pick one of the others; for most teams, this is the answer

Pick TGI if:

- You live deep in the Hugging Face ecosystem

- You want Rust-grade stability and structured logs

- You want a slightly more opinionated, less-knobs-to-tune experience

- You already run TGI and are happy

Pick Triton + TensorRT-LLM if:

- You have a dedicated platform team willing to invest

- Performance matters enough that 30% more throughput pays back the engineering cost

- You need multi-model serving on the same infrastructure (non-LLM included)

- You’re at a scale where every percent of throughput is dollars

- You’re deploying to NVIDIA-only hardware

Our split in the field: ~70% vLLM, ~15% TGI, ~15% Triton. Triton’s share rises with customer size.
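The criteria above can be condensed into a small rule function. This is a deliberately simplified sketch: the `Team` fields are hypothetical flags we made up to stand for the bullets, and the order in which the rules are checked is our assumption, not part of the framework itself.

```python
from dataclasses import dataclass


@dataclass
class Team:
    """Hypothetical flags standing in for the criteria in the lists above."""

    needs_amd: bool = False
    has_platform_team: bool = False
    throughput_is_cost_critical: bool = False
    serves_non_llm_models: bool = False
    deep_in_hf_ecosystem: bool = False
    already_happy_on_tgi: bool = False


def recommend(team: Team) -> str:
    # AMD support rules out Triton + TensorRT-LLM (see the feature table).
    if team.needs_amd:
        return "vLLM"
    # Triton only pays off with a platform team and a concrete reason to invest.
    if team.has_platform_team and (
        team.throughput_is_cost_critical or team.serves_non_llm_models
    ):
        return "Triton + TensorRT-LLM"
    # Existing Hugging Face commitment is the main argument for TGI.
    if team.deep_in_hf_ecosystem or team.already_happy_on_tgi:
        return "TGI"
    # Default for everyone else.
    return "vLLM"
```

Real decisions involve more nuance than six booleans, but writing the rules down this way makes the default explicit: without a specific reason to go elsewhere, the function falls through to vLLM.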

The Up-and-Comers

Worth your attention but not yet mainstream:

- SGLang — Built around a structured programming model for prompts. Competitive with vLLM on raw throughput; excellent for agent workloads with many tool calls.

- LMDeploy — Shanghai AI Lab. Strong performance, lighter operational footprint than vLLM. Popular in China; growing globally.

- LightLLM — Research-leaning, good benchmarks, smaller community.

- MLC-LLM — Cross-platform deployment story. Edge-oriented.

SGLang specifically is worth evaluating if your workload involves complex branching, many tool calls per request, or structured output. It’s the fastest server for those patterns.

Known Caveats

1. Benchmark numbers age fast. vLLM, TGI, and TRT-LLM all release every 2–4 weeks. The gap between them at any given moment shifts. Rerun if you care.

2. Workload shape matters. Long prompts + short outputs favor different servers than short prompts + long outputs. Don’t trust a single benchmark.

3. FP8 quality. All three have FP8 support, but the exact quantization routines differ subtly. Always validate on your own eval set before deploying FP8 in production.

4. Costs. TRT-LLM’s engineering overhead is real. On small fleets, the simpler server saves engineering time that dwarfs perf wins.
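The cost caveat can be made concrete with a rough break-even calculation. The model below is a simplification under stated assumptions: a server with g% more throughput needs 1/(1+g) as many GPUs for the same load, and the one-time engineering cost is a single lump sum. Every dollar figure and fleet size you feed it is your own input; none come from the benchmarks above.

```python
def monthly_gpu_savings(fleet_gpus, gpu_cost_per_hour, throughput_gain_pct,
                        hours_per_month=730):
    """GPU spend saved per month if the same load runs on a faster server."""
    gain = throughput_gain_pct / 100
    fraction_saved = gain / (1 + gain)  # e.g. +30% throughput -> ~23% fewer GPUs
    return fleet_gpus * gpu_cost_per_hour * hours_per_month * fraction_saved


def break_even_months(engineering_cost, fleet_gpus, gpu_cost_per_hour,
                      throughput_gain_pct):
    """Months until GPU savings repay a one-time engineering investment."""
    savings = monthly_gpu_savings(fleet_gpus, gpu_cost_per_hour, throughput_gain_pct)
    if savings <= 0:
        return float("inf")
    return engineering_cost / savings
```

Because savings scale linearly with fleet size while the engineering cost is roughly fixed, the break-even time shrinks as the fleet grows, which matches the observation that the simpler server tends to win on small fleets.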

Further Reading

- vLLM: The Open-Source Inference Engine Changing LLM Serving

- PagedAttention Explained: How vLLM Achieves 24x Throughput

- Continuous Batching for LLMs: Why It Matters

- Self-Hosting Llama 3: A Production Deployment Guide

Benchmarking inference servers for your workload? Reach out — we’ll help you compare apples to apples.
