vLLM: The Open-Source Inference Engine Changing LLM Serving

vLLM uses PagedAttention and continuous batching for dramatically higher LLM throughput vs. HuggingFace serving. Architecture, benchmarks, deployment notes.

Before vLLM, self-hosting a large language model meant picking between HuggingFace’s generate() (simple, slow) and NVIDIA Triton + TensorRT-LLM (fast, complex, NVIDIA-only). vLLM shipped in mid-2023 from a Berkeley Sky Computing Lab team, open-sourced its PagedAttention implementation, and within a year became the default inference stack for most self-hosted LLM workloads.

This article explains what vLLM is, why it’s fast, what it’s good for, and the operational details you need to know before shipping it.

The Problem vLLM Solves

Naive LLM serving wastes GPU memory and compute in two specific ways.

1. KV cache fragmentation. When a model generates tokens, it caches the attention keys and values (KV cache) for every position. Traditional implementations pre-allocate a contiguous block per request, sized for the worst-case max length. If you serve a 4K-context model, every request reserves 4K-worth of KV even if it only generates 100 tokens. Memory utilization typically sits below 50%.

2. Batching at the wrong granularity. Static batching waits to assemble a batch, runs it start-to-finish, then assembles the next. The longest request in the batch pins everyone else. GPU utilization also sits below 50%.

Together these mean a single-request workload uses the GPU well, but a multi-request production workload reaches maybe 20–40% of theoretical throughput.
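To put rough numbers on the first problem, here is a back-of-the-envelope sketch. It assumes Llama-2-13B's published shape (40 layers, 40 KV heads, head dimension 128), fp16 KV entries, and a request that ends up holding 100 tokens in total. The function names are ours, for illustration only.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       bytes_per_elem: int = 2) -> int:
    """Bytes of KV cache one token occupies: keys plus values, all layers."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def reserved_vs_used(max_len: int, actual_len: int, per_token: int):
    """Compare a worst-case contiguous reservation with what is really used."""
    reserved = max_len * per_token
    used = actual_len * per_token
    return reserved, used, used / reserved


# Assumed model shape: Llama-2-13B, fp16 KV cache.
per_token = kv_bytes_per_token(num_layers=40, num_kv_heads=40, head_dim=128)
reserved, used, utilization = reserved_vs_used(4096, 100, per_token)

print(f"{per_token / 2**20:.2f} MiB of KV cache per token")
print(f"reserved {reserved / 2**30:.2f} GiB, used {used / 2**20:.1f} MiB "
      f"({utilization:.1%} of the reservation)")
```

Under these assumptions, the request uses roughly 78 MiB of a roughly 3.1 GiB reservation, about 2.4%. Real workloads mix short and long requests, so the average is better than this worst case, but the direction is the same.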

vLLM fixes both.

PagedAttention: Virtual Memory for the KV Cache

The headline innovation is PagedAttention, which treats the KV cache like an OS virtual memory subsystem.

Instead of contiguous per-request blocks, KV cache is stored in fixed-size blocks (default 16 tokens). A per-request page table maps logical token positions to physical blocks. New tokens allocate blocks on demand; finished requests free them.
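The bookkeeping described above can be sketched in a few lines. This is a toy model of the idea, not vLLM's actual implementation: the class and method names are hypothetical, and the sketch tracks only block ids, not the tensors themselves.

```python
BLOCK_SIZE = 16  # tokens per KV block (the default mentioned above)


class BlockAllocator:
    """Hands out physical block ids from a fixed pool."""

    def __init__(self, num_blocks: int):
        self.free = list(range(num_blocks))

    def allocate(self) -> int:
        if not self.free:
            raise MemoryError("no free KV blocks")
        return self.free.pop()

    def release(self, block_id: int) -> None:
        self.free.append(block_id)


class Sequence:
    """One request: a page table from logical block index to physical block id."""

    def __init__(self, allocator: BlockAllocator):
        self.allocator = allocator
        self.block_table: list[int] = []
        self.num_tokens = 0

    def append_token(self) -> None:
        # Allocate a new physical block only when the last one is full.
        if self.num_tokens % BLOCK_SIZE == 0:
            self.block_table.append(self.allocator.allocate())
        self.num_tokens += 1

    def physical_slot(self, position: int) -> tuple[int, int]:
        """Map a logical token position to (physical block id, offset in block)."""
        return self.block_table[position // BLOCK_SIZE], position % BLOCK_SIZE

    def finish(self) -> None:
        # A finished request returns all of its blocks to the pool.
        for block_id in self.block_table:
            self.allocator.release(block_id)
        self.block_table.clear()
        self.num_tokens = 0


allocator = BlockAllocator(num_blocks=64)
seq = Sequence(allocator)
for _ in range(50):
    seq.append_token()
print(len(seq.block_table), "blocks held for", seq.num_tokens, "tokens")
```

The physical blocks a sequence holds do not need to be adjacent or ordered, which is what lets any free block serve any request.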

The consequences:

- Near-zero fragmentation. Blocks are fungible. A request that generates 50 tokens uses ~4 blocks, not the max-length allocation.

- Sharing works. Two requests that share a prefix (e.g., a system prompt) can share physical KV blocks — a technique vLLM calls prefix caching. For RAG or agent workloads where every request has the same 2K-token system preamble, this alone can halve memory use.

- Copy-on-write for beam search. Beam search traditionally duplicates KV caches; PagedAttention lets beams share blocks until they diverge.
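Prefix sharing can be illustrated with a content-addressed block pool. The sketch below is a simplification under our own assumptions: blocks are keyed on the full token prefix (a real system would use a hash), only full blocks are shared, and the names are hypothetical rather than vLLM's.

```python
BLOCK_SIZE = 16


class SharedBlockPool:
    """Content-addressed KV blocks with reference counts (illustrative only)."""

    def __init__(self):
        self.block_for_key: dict[tuple, int] = {}
        self.refcount: dict[int, int] = {}
        self.next_id = 0

    def get_or_create(self, prefix_tokens: tuple) -> int:
        # Key on the whole prefix up to the end of this block, so a block is
        # reused only when every earlier token matches as well.
        block_id = self.block_for_key.get(prefix_tokens)
        if block_id is None:
            block_id = self.next_id
            self.next_id += 1
            self.block_for_key[prefix_tokens] = block_id
            self.refcount[block_id] = 0
        self.refcount[block_id] += 1
        return block_id


def map_prompt(pool: SharedBlockPool, tokens: list[int]) -> list[int]:
    """Return the physical block ids for every full block of a prompt."""
    num_full = len(tokens) // BLOCK_SIZE
    return [pool.get_or_create(tuple(tokens[: (i + 1) * BLOCK_SIZE]))
            for i in range(num_full)]


pool = SharedBlockPool()
system_prompt = list(range(32))  # two full blocks common to both requests
req_a = map_prompt(pool, system_prompt + [100 + i for i in range(16)])
req_b = map_prompt(pool, system_prompt + [200 + i for i in range(16)])
print(req_a, req_b, "distinct physical blocks:", pool.next_id)
```

Both requests map their first two logical blocks to the same physical blocks and diverge only on the third, so the pool holds four blocks instead of six. The reference counts are what a copy-on-write scheme would consult before letting one request modify a shared block.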

Memory utilization in production vLLM deployments routinely hits 90%+ of available HBM. That’s directly convertible into more concurrent requests, longer contexts, or bigger batches.

For the architectural details, see our PagedAttention Explained.

Continuous Batching: The Other Half of the Win

The second big idea in vLLM is continuous batching (sometimes called “in-flight batching” or “iteration-level scheduling”).

Instead of batching at the request level, vLLM batches at the token-generation-step level. At each forward pass, the scheduler looks at all in-flight requests, decides which can make progress, and batches those. A request that finishes mid-batch is replaced immediately with a waiting request.

This means:

- Short requests don’t wait for long requests.

- The GPU stays full as long as there’s demand.

- Throughput becomes largely independent of request-length variance.
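A toy simulation makes the difference concrete. It counts decode steps only, ignores prefill, and assumes every running request produces exactly one token per forward pass. The function names and the workload are made up for illustration.

```python
from collections import deque


def static_batching_steps(lengths: list[int], batch_size: int) -> int:
    """Each batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths: list[int], batch_size: int) -> int:
    """Refill free slots from the waiting queue before every decode step."""
    waiting = deque(lengths)
    running: list[int] = []  # remaining tokens per in-flight request
    steps = 0
    while waiting or running:
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One forward pass yields one token for every running request;
        # requests that reach zero remaining tokens leave the batch.
        running = [r - 1 for r in running if r - 1 > 0]
        steps += 1
    return steps


# Output lengths in tokens: two long requests among many short ones.
lengths = [200, 10, 10, 10, 200, 10, 10, 10]
print("static:", static_batching_steps(lengths, batch_size=4))
print("continuous:", continuous_batching_steps(lengths, batch_size=4))
```

On this workload the static schedule needs 400 decode steps and the continuous one 210, because the second long request starts as soon as a short one frees a slot instead of waiting for the first batch to drain.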

Orca, a 2022 paper from a Seoul National University team, introduced the technique. vLLM was the first widely adopted open-source implementation, and the technique is now standard across TGI, TensorRT-LLM, and others.

See our Continuous Batching explainer for the full mechanics.

Benchmarks: Is the Hype Justified?

In our own testing on a single H100 80GB, serving Llama-2-13B-chat:

| Setup | Throughput (tokens/s) | P50 latency (ms/tok) | P99 latency (ms/tok) |
|---|---|---|---|
| HF generate(), batch 1 | 36 | 28 | 32 |
| HF generate(), naive batching (8) | 220 | 36 | 190 |
| vLLM, default settings | 3,100 | 38 | 95 |
| vLLM w/ prefix caching (2K shared system prompt) | 4,600 | 35 | 88 |

That’s ~86x vs. single-stream HF and ~14x vs. naive batching at default settings (~21x over naive batching with prefix caching enabled). Gaps are larger at higher concurrency: at 64+ concurrent requests, vLLM is 30x+ because naive implementations fall over entirely.

vLLM vs other production servers:

- vs TGI (Text Generation Inference): vLLM edges it by 10–25% on throughput for most workloads; TGI has slightly better streaming latency.

- vs TensorRT-LLM: TensorRT-LLM is 1.2–1.8x faster on NVIDIA hardware if you invest in the engine-build pipeline. vLLM closes the gap each release.

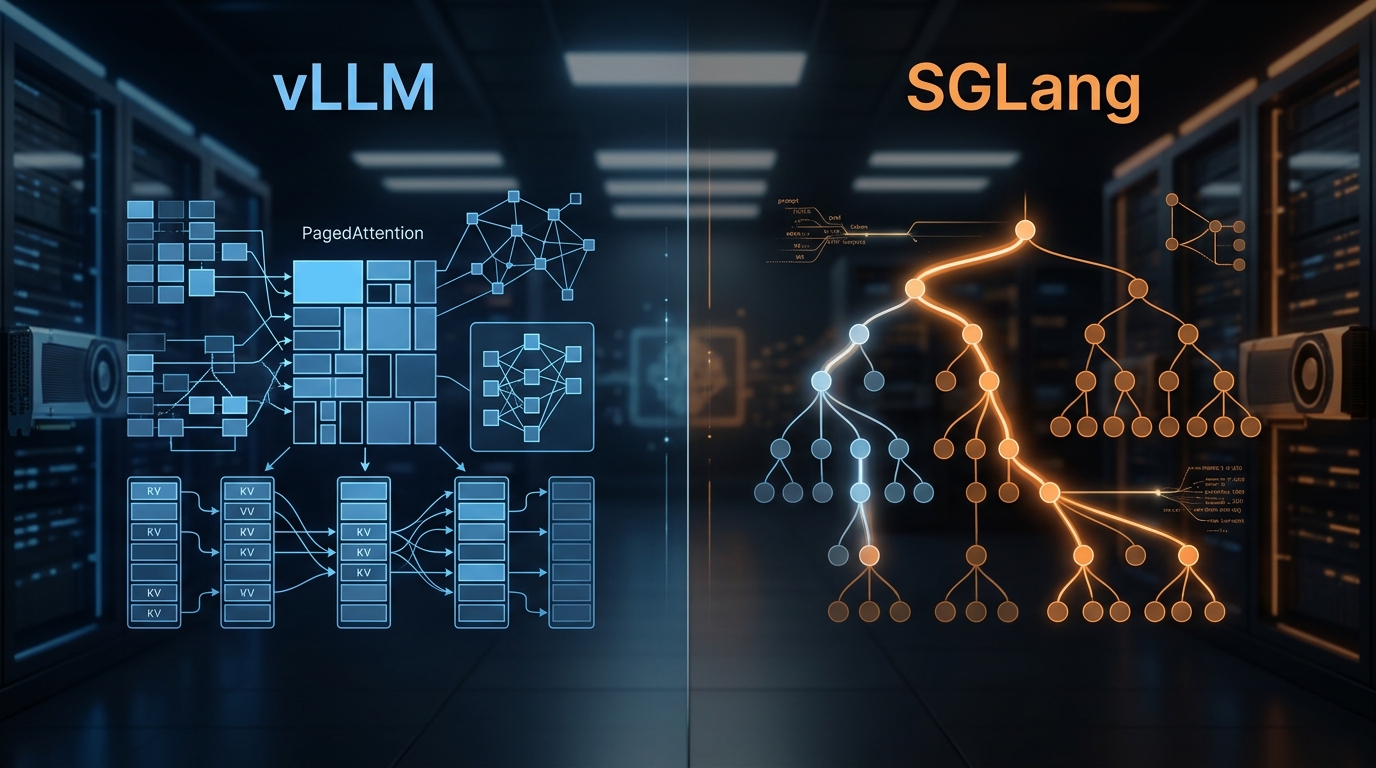

- vs SGLang: SGLang competes on complex programs with lots of tool calls and branching. For standard chat inference, vLLM is roughly tied.

For most teams, vLLM is the right default. You switch away only if you have a specific reason: structured output coverage (SGLang), maximum performance-at-any-cost (TensorRT-LLM), tight HF integration (TGI).

We cover the full comparison in vLLM vs TGI vs Triton Benchmarks.

What You Actually Get

vLLM ships as a Python package and a server. The two usage patterns:

Offline batched inference:

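```python
from vllm import LLM, SamplingParams

# Example batch; replace with your own prompts.
prompts = ["Explain PagedAttention in one sentence."]

llm = LLM(model="meta-llama/Llama-2-13b-chat-hf")
params = SamplingParams(temperature=0.7, max_tokens=256)

# generate() returns one result object per prompt.
outputs = llm.generate(prompts, params)
for output in outputs:
    print(output.outputs[0].text)
```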

OpenAI-compatible server:

```shell
python -m vllm.entrypoints.openai.api_server \
  --model meta-llama/Llama-2-13b-chat-hf \
  --tensor-parallel-size 2 \
  --gpu-memory-utilization 0.92
```

The server exposes /v1/completions, /v1/chat/completions, and /v1/embeddings endpoints compatible with OpenAI’s API. That means you can point an existing OpenAI client at a vLLM endpoint and it “just works.”
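As a rough illustration of what that compatibility means on the wire, the sketch below builds a chat completion request with only the standard library. The host and port (`localhost:8000`) and the helper names are assumptions for the example; the request and response shapes follow the OpenAI chat completions format.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"  # assumed address; adjust to your server


def build_chat_request(base_url: str, model: str, user_message: str,
                       max_tokens: int = 64) -> urllib.request.Request:
    """Build a POST to the OpenAI-style chat completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": user_message}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_body: bytes) -> str:
    """Pull the assistant text out of an OpenAI-style chat completion response."""
    return json.loads(response_body)["choices"][0]["message"]["content"]


def chat(user_message: str, model: str = "meta-llama/Llama-2-13b-chat-hf") -> str:
    """Send one message to the server and return the reply (needs a live server)."""
    request = build_chat_request(BASE_URL, model, user_message)
    with urllib.request.urlopen(request, timeout=60) as response:
        return extract_reply(response.read())
```

With a server running, `chat("Summarize PagedAttention in one sentence.")` returns the model's reply. In practice most teams use an existing OpenAI client library and only change its base URL.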

Supported features as of 2024:

- Tensor parallelism for multi-GPU. Pipeline parallelism is landing.

- Quantization: AWQ, GPTQ, FP8 (on H100+), SqueezeLLM

- Speculative decoding with draft models

- Structured output via outlines / guided decoding

- Prefix caching

- Multi-LoRA serving — serve many LoRA adapters over one base model

- Chunked prefill — for long prompts, split prefill across steps to co-batch with decode

Operational Notes From Production

Things we’ve learned running vLLM in production:

1. GPU memory utilization is a real knob. Default is 0.9 (90%). Push to 0.92–0.95 if you’re not running other workloads on the GPU. Below 0.85 you’re leaving throughput on the table.

2. max-model-len matters more than you think. vLLM pre-computes KV cache block capacity based on max length. Setting it way above your actual usage wastes memory. Set it to the largest context you actually use.

3. Warm it up. First request latency is ~10–30 seconds for weight loading and CUDA graph capture. Ship a warmup script in your container entrypoint.

4. Prefix caching is a free 1.5–2x for RAG/agent workloads. Enable --enable-prefix-caching — the only reason not to is if you’re running truly unique prompts per request.

5. Tensor parallelism has overhead. TP=2 doesn’t give you 2x throughput; it gives you 1.4–1.7x. Use it when you need the memory (a model that doesn’t fit on one GPU), not for throughput scaling.

6. The Python GIL is a real bottleneck for pre/post-processing. vLLM 0.5+ pushes more logic into C++; still worth minimizing Python work in your wrapping service.

7. Health checks lie. vLLM’s /health endpoint returns 200 even when the model is wedged. Pair it with a synthetic-request probe that actually generates a token.
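A synthetic-request probe along the lines of item 7 might look like the sketch below. It posts a one-token completion and reports healthy only if a choice comes back in time. The function name, the prompt, and the timeout are our own choices, and the `opener` parameter exists only so the probe can be exercised without a live server.

```python
import json
import urllib.request


def generation_probe(base_url: str, model: str, timeout_s: float = 10.0,
                     opener=urllib.request.urlopen) -> bool:
    """Return True only if the server returns a completion choice in time."""
    payload = {"model": model, "prompt": "ping", "max_tokens": 1}
    request = urllib.request.Request(
        url=f"{base_url}/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with opener(request, timeout=timeout_s) as response:
            body = json.loads(response.read())
    except (OSError, ValueError):
        # OSError covers refused connections, HTTP errors, and timeouts;
        # ValueError covers a body that is not valid JSON.
        return False
    if not isinstance(body, dict):
        return False
    choices = body.get("choices") or []
    return bool(choices) and "text" in choices[0]
```

Wire the result into your orchestrator's liveness or readiness check alongside /health. The same request also works as the warmup call from item 3, since it forces a real forward pass.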

When Not to Use vLLM

- Tiny models on CPU. Use llama.cpp or GGML-based runtimes.

- Edge / mobile inference. Use Ollama, MLX, ONNX Runtime Mobile.

- Low-latency, single-stream workloads. vLLM optimizes throughput; for single-request latency, a thinner stack can be slightly faster.

- Non-transformer architectures. vLLM is transformer-specific. Diffusion, Mamba, etc. go elsewhere.

Deployment Patterns

Three common patterns we see with clients:

Pattern 1: Single-model, single-node. One vLLM server, behind a LiteLLM gateway, serving one model. Simple, works up to ~1M tokens/day.

Pattern 2: Multi-LoRA, single base model. One vLLM server loaded with a base model (e.g. Llama-3-8B) and dozens of LoRA adapters. You serve many “models” from one GPU fleet. Cost-effective for per-customer fine-tunes.

Pattern 3: Sharded multi-node. For 70B+ models, tensor-parallel across 4–8 GPUs, multiple replicas behind a scheduler. This is where you start caring about Ray Serve or Kubernetes orchestration — see Ray Serve vs Kubernetes for Model Serving.

Further Reading

- PagedAttention Explained: How vLLM Achieves 24x Throughput

- Continuous Batching for LLMs: Why It Matters

- vLLM vs TGI vs Triton: LLM Inference Server Benchmarks

- Self-Hosting Llama 3: A Production Deployment Guide

Running vLLM in production and want help tuning it? Reach out — we’ve profiled vLLM deployments from a single H100 to multi-cluster fleets.
