GPU Cloud Comparison: CoreWeave, Runpod, Lambda

Neocloud GPUs undercut hyperscalers by 40–70%. Side-by-side on CoreWeave, Runpod, Lambda, Crusoe, and Fly.io — pricing, availability, and when to pick each.

The hyperscalers — AWS, Azure, GCP — price H100 at $4–12/hr on-demand. The neoclouds — a cohort of GPU-specialist providers that emerged in 2022–2024 — price the same hardware at $2–4/hr on-demand, sometimes lower. That delta has reshaped where AI workloads live.

This guide is a practical comparison of the providers we deploy to most often. Not a ranked list; they’re each good at something different.

Who The Neoclouds Are

The names you need to know in 2024:

- CoreWeave — the largest neocloud. ~400k+ GPUs, enterprise-focused, raising billions. Public in 2025.

- Lambda Labs — founder-friendly, strong Stanford/Berkeley ties, reasonable reserved pricing.

- Runpod — strongest community product, instant pods, serverless inference endpoints.

- Crusoe Energy — runs GPUs in stranded-gas locations; competitive long-term contracts.

- Together AI — compute plus hosted inference API; both a compute provider and an inference company.

- Scaleway — European option, sovereignty story.

- Vast.ai — GPU marketplace, very cheap, variable reliability.

- Paperspace (now DigitalOcean) — acquired in 2023; integrated into DO’s ecosystem.

- Fly.io — not a GPU-specialist historically, but shipped GPU machines and small-model inference.

- Cerebras / Groq / SambaNova — custom-silicon alternatives for specific workloads.

This list changes every six months. New entrants pop up; others get acquired. The fundamentals are stable, though.

CoreWeave: The Enterprise Default

Strengths:

- Scale. They have the most H100 capacity outside the hyperscalers.

- Kubernetes-native. CKS (CoreWeave Kubernetes Service) is solid.

- Reserved pricing that’s 30–50% below hyperscalers.

- Bare metal available. InfiniBand available. All the knobs real workloads need.

Weaknesses:

- Less polished than hyperscalers for ancillary services (object storage, databases). You’ll pair with S3 or another provider for these.

- Minimum-commit contracts for the best pricing. Not great for small teams.

- Regional coverage is improving but still concentrated in US East/Central and one UK region.

Best for: Mid-to-large organizations running sustained GPU workloads with real DevOps maturity. If you’re spending $50k/mo+ on GPUs, CoreWeave should be in your mix.

Rough pricing (2024): H100 80GB at ~$2.50/hr reserved, $4.25/hr on-demand; A100 80GB at ~$1.20/hr reserved, $2.10/hr on-demand.
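Whether the reserved rate actually wins depends on utilization, since reserved capacity is billed whether or not it is busy. A minimal sketch of that break-even arithmetic using the rough H100 prices above. The 730-hour month, the 8-GPU example, and the function names are assumptions for illustration, not anything CoreWeave publishes.

```python
HOURS_PER_MONTH = 730  # assumption: average hours in a calendar month


def break_even_utilization(reserved_rate: float, on_demand_rate: float) -> float:
    """Fraction of hours a GPU must be busy before reserved beats on-demand."""
    return reserved_rate / on_demand_rate


def monthly_cost(rate: float, gpus: int, utilization: float, reserved: bool) -> float:
    """Reserved bills every hour; on-demand bills only the hours actually used."""
    billed_hours = HOURS_PER_MONTH if reserved else HOURS_PER_MONTH * utilization
    return rate * gpus * billed_hours


if __name__ == "__main__":
    # Rough 2024 H100 prices quoted above (USD per GPU-hour).
    reserved, on_demand = 2.50, 4.25
    print(f"break-even utilization: {break_even_utilization(reserved, on_demand):.0%}")
    for utilization in (0.4, 0.6, 0.9):
        r = monthly_cost(reserved, 8, utilization, reserved=True)
        o = monthly_cost(on_demand, 8, utilization, reserved=False)
        print(f"{utilization:.0%} busy: reserved ${r:,.0f} vs on-demand ${o:,.0f}")
```

On these figures the reserved rate pays off once the GPUs are busy roughly 59% of the time; below that, on-demand is cheaper despite the higher hourly price.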

Lambda Labs

Strengths:

- Simple. Web UI to spin up a single GPU box or a cluster.

- Strong community — a lot of AI research runs on Lambda.

- 1-Click Clusters for small H100 training jobs.

- Competitive reserved pricing.

Weaknesses:

- Orchestration story is less mature than CoreWeave’s K8s product.

- Regional coverage narrower than CoreWeave.

- Had capacity-constrained periods during the H100 crunch (2023–2024); availability is better now.

Best for: Research teams, small companies doing serious fine-tuning, anyone who wants “give me a GPU” without heavy ops.

Rough pricing (2024): H100 80GB at ~$2.99/hr on-demand, reserved as low as $2.20/hr.

Runpod

Strengths:

- Serverless inference endpoints are the strongest in the market. Spin up a vLLM endpoint with autoscaling in minutes.

- Community pods (cheap, community-hosted GPUs) for development.

- Fast pod provisioning — seconds to minutes.

- Good for “I need 4 H100s for this afternoon” workflows.

Weaknesses:

- Community pods have variable reliability. Use secure cloud pods for production.

- Networking between nodes is less robust than on purpose-built clouds like CoreWeave; distributed training is possible, but you’ll fight for perf.

- Smaller operations team; support can be slower.

Best for: Developers and small teams running inference workloads, anyone wanting serverless GPU without the hyperscaler complexity.

Rough pricing (2024): H100 80GB in secure cloud at ~$2.50–$3/hr; community pods can be under $2/hr. Serverless pricing is per-second.

Crusoe Energy

Strengths:

- Long-term reserved pricing is aggressive — often the cheapest bulk H100 outside the big enterprise CoreWeave deals.

- ESG story: runs on stranded energy that would otherwise be flared.

- Building out real data-center capacity (not just co-location).

Weaknesses:

- Less flexible than CoreWeave or Lambda for small deployments.

- Best pricing requires multi-year commits.

- Newer to the market; operational maturity still growing.

Best for: Teams with long-horizon, high-volume GPU needs. Think frontier-model training or enterprise ML platforms committed for 2+ years.

Together AI

Strengths:

- Dual product: rent bare compute OR use their hosted inference API.

- Hosted inference is competitive with the best (Fireworks AI, Anyscale, DeepInfra) and supports most open models.

- Cluster leasing for training is clean.

Weaknesses:

- Less DIY than CoreWeave — you’re using their stack, not yours.

- Hosted API has the usual multi-tenant noisy-neighbor risks.

Best for: Teams doing inference-first workloads who want to start on a hosted API and graduate to their own cluster.

Vast.ai

Strengths:

- Cheapest GPUs available. Period.

- Global marketplace of community providers.

- Bid-based pricing — you can get H100 below $1.50/hr if you’re flexible.

Weaknesses:

- Host quality varies wildly. Some machines are great; others drop off with no warning.

- Not suitable for production-critical workloads without extensive redundancy.

- Compliance story is essentially nonexistent for regulated workloads.

Best for: Research, experimentation, non-critical training runs, anyone willing to trade reliability for cost.
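Trading reliability for cost can be put in numbers. The sketch below is a rough first-order model, not a measurement: it assumes interrupted work is lost back to the last checkpoint, that an interruption lands on average halfway between checkpoints, and that restart time is billed. The interruption rate, checkpoint interval, and restart overhead are hypothetical inputs you would measure yourself.

```python
def effective_hourly_cost(
    price_per_hour: float,
    interruptions_per_hour: float,
    checkpoint_interval_h: float,
    restart_overhead_h: float,
) -> float:
    """Price per hour of useful work, to first order in the interruption rate."""
    # Assumption: an interruption lands, on average, halfway between checkpoints.
    wasted_per_interruption = checkpoint_interval_h / 2 + restart_overhead_h
    return price_per_hour * (1 + interruptions_per_hour * wasted_per_interruption)


if __name__ == "__main__":
    # $1.50/hr bid from the text; the other three values are hypothetical.
    cost = effective_hourly_cost(
        price_per_hour=1.50,
        interruptions_per_hour=1 / 24,  # one lost host per day
        checkpoint_interval_h=2.0,
        restart_overhead_h=0.5,
    )
    print(f"effective cost per useful hour: ${cost:.2f}")
```

Under these assumptions a $1.50/hr machine costs about $1.59 per useful hour, so the discount survives a daily interruption as long as checkpointing is in place. Without checkpoints the wasted time per interruption grows with job length and the picture changes quickly.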

The Hyperscalers (AWS / Azure / GCP)

Worth addressing directly, since a lot of teams stay with them despite the price premium.

Strengths:

- Full integration with the rest of your stack (IAM, networking, databases, object storage).

- Compliance frameworks in place (FedRAMP, HIPAA, SOC 2, etc.).

- Enterprise procurement and support.

- SageMaker/Vertex AI/Azure ML provide managed ML platforms.

Weaknesses:

- Pricing is 1.5–3x the neoclouds on identical GPUs.

- H100 availability has been tight; capacity reservations often required.

- Abstractions (SageMaker, Vertex) can be leaky when you want the raw GPU experience.

Best for: Regulated industries, existing enterprise accounts with committed spend, organizations that value integrated compliance over cost.

Rough pricing (2024): H100 on-demand: AWS $4.50–$12/hr (highly regional), Azure ~$6.98/hr, GCP ~$10.16/hr.
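To see how the premium works out on the figures quoted in this guide, the sketch below compares the on-demand H100 prices listed above. It uses only the single-point prices from the text (AWS and Runpod are omitted because they are given as ranges); the dictionary and function names are illustrative.

```python
# Rough 2024 on-demand H100 prices quoted in this guide (USD per GPU-hour).
NEOCLOUD_ON_DEMAND = {"CoreWeave": 4.25, "Lambda": 2.99}
HYPERSCALER_ON_DEMAND = {"Azure": 6.98, "GCP": 10.16}


def premium(hyperscaler_rate: float, neocloud_rate: float) -> float:
    """How many times more the hyperscaler charges for the same GPU-hour."""
    return hyperscaler_rate / neocloud_rate


def savings(hyperscaler_rate: float, neocloud_rate: float) -> float:
    """Fraction of the hyperscaler price saved by moving to the neocloud."""
    return 1 - neocloud_rate / hyperscaler_rate


if __name__ == "__main__":
    for hyper, h_rate in HYPERSCALER_ON_DEMAND.items():
        for neo, n_rate in NEOCLOUD_ON_DEMAND.items():
            print(
                f"{hyper} vs {neo}: {premium(h_rate, n_rate):.2f}x, "
                f"{savings(h_rate, n_rate):.0%} saved"
            )
```

On these list prices the multiple runs from about 1.6x (Azure vs CoreWeave) to about 3.4x (GCP vs Lambda). Reserved and negotiated rates will shift both sides.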

Our Deployment Pattern

For most client deployments we end up with a mix:

- Training and bulk inference on CoreWeave, Lambda, or Crusoe reserved. Capacity is cheaper and we can get the networking we need.

- Development, experiments, notebooks on Runpod or Lambda on-demand.

- Integrated services (databases, object storage, secrets, monitoring) on AWS or GCP. Neoclouds are weak here and not worth the friction.

- Compliance-sensitive inference (HIPAA, SOC 2) on AWS or Azure, because the compliance paperwork is done.

Networking between clouds matters. Egress fees on object storage can dominate cost if you’re shipping data back and forth. Co-locate data with compute or pay for dedicated interconnects.
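A quick estimate shows how egress can overtake compute. This is a sketch under stated assumptions: the $0.09/GB egress rate, the 50 TB dataset, and the four transfers per month are hypothetical placeholders, so substitute your provider's actual rate card. The GPU side uses the rough $2.50/hr reserved H100 price quoted earlier.

```python
GB_PER_TB = 1000        # assumption: decimal terabytes
HOURS_PER_MONTH = 730   # assumption: average hours in a calendar month


def monthly_egress_cost(dataset_tb: float, transfers_per_month: int, usd_per_gb: float) -> float:
    """Cost of shipping the full dataset out of object storage N times a month."""
    return dataset_tb * GB_PER_TB * transfers_per_month * usd_per_gb


def monthly_gpu_cost(rate_per_hour: float, gpus: int) -> float:
    """Cost of keeping a fixed number of GPUs reserved for the whole month."""
    return rate_per_hour * gpus * HOURS_PER_MONTH


if __name__ == "__main__":
    egress = monthly_egress_cost(dataset_tb=50, transfers_per_month=4, usd_per_gb=0.09)
    compute = monthly_gpu_cost(rate_per_hour=2.50, gpus=8)
    print(f"egress:  ${egress:,.0f}/month")
    print(f"compute: ${compute:,.0f}/month")
```

With these inputs the data movement (about $18,000) costs more than the eight reserved H100s it feeds (about $14,600), which is the case for keeping data next to compute.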

Evaluating a New Provider

If you’re looking at a new GPU cloud, ask:

- What’s the actual availability? Published prices mean nothing if you can’t get capacity when you need it. Ask for a provisioning SLA.

- What’s the network? InfiniBand? RoCE? 100G Ethernet? Matters massively for multi-node training.

- Do you get root on bare metal or are you in their managed layer? Bare metal + root is strongest for custom workloads.

- What’s the storage story? Local NVMe per node? Shared filesystem? Object storage? Egress to other providers?

- How are GPUs allocated? Dedicated physical GPU, or MIG slice, or time-shared? Big performance and isolation implications.

- What’s the support? Dedicated Slack channel with real engineers? Or ticketing-only?

- What’s the compliance posture? SOC 2? HIPAA? FedRAMP? Only matters if you need it, but matters a lot if you do.
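One way to keep these questions from slipping during a vendor call is to record the answers in a small structure and list what is still unanswered. The class, field names, and the "ExampleCloud" provider below are hypothetical, chosen to mirror the checklist above.

```python
from dataclasses import dataclass, fields
from typing import List, Optional, Tuple


@dataclass
class ProviderEvaluation:
    """One record per provider; None means the question is still open."""
    name: str
    provisioning_sla: Optional[str] = None      # e.g. "capacity within 48h"
    interconnect: Optional[str] = None          # "InfiniBand", "RoCE", "100G Ethernet"
    bare_metal_root: Optional[bool] = None      # root on bare metal vs managed layer
    storage: Optional[str] = None               # local NVMe, shared FS, object, egress
    gpu_allocation: Optional[str] = None        # "dedicated", "MIG", "time-shared"
    support: Optional[str] = None               # "Slack with engineers", "tickets"
    compliance: Optional[Tuple[str, ...]] = None  # ("SOC 2", "HIPAA", ...)


def open_questions(evaluation: ProviderEvaluation) -> List[str]:
    """Names of checklist items that have not been answered yet."""
    return [f.name for f in fields(evaluation) if getattr(evaluation, f.name) is None]


if __name__ == "__main__":
    ev = ProviderEvaluation(name="ExampleCloud", interconnect="InfiniBand", bare_metal_root=True)
    print(open_questions(ev))
```

An empty compliance tuple means "asked, and they have none", which is different from `None`; keeping that distinction is the reason for using optional fields instead of defaults.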

The Bottom Line

For 2024 workloads:

- < $5k/month GPU spend, experimental/dev: Runpod, Lambda, or Vast.ai.

- $5k–$50k/month, production: CoreWeave or Lambda. Mix with hyperscaler for integrated services.

- $50k+/month, committed: Multi-cloud strategy. Reserved at CoreWeave/Crusoe/Lambda; hyperscaler for compliance-sensitive workloads; hosted APIs for spiky traffic.

- Regulated or enterprise procurement: Hyperscalers. The compliance work is the value.
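The tiers above can be read as a simple decision rule. The sketch below encodes them as a function. It is a simplification that keys only on monthly spend and a regulated flag, and it assumes the regulated case overrides the spend tiers; the function name and return strings are illustrative.

```python
def recommend(monthly_gpu_spend_usd: float, regulated: bool = False) -> str:
    """Map monthly GPU spend to the starting point suggested above."""
    if regulated:
        # Assumption: compliance needs take priority over price tiers.
        return "hyperscalers"
    if monthly_gpu_spend_usd >= 50_000:
        return "multi-cloud: reserved CoreWeave/Crusoe/Lambda, hyperscaler, hosted APIs"
    if monthly_gpu_spend_usd >= 5_000:
        return "CoreWeave or Lambda, plus a hyperscaler for integrated services"
    return "Runpod, Lambda, or Vast.ai"


if __name__ == "__main__":
    for spend in (2_000, 20_000, 80_000):
        print(f"${spend:,}/month -> {recommend(spend)}")
    print(f"regulated -> {recommend(20_000, regulated=True)}")
```

Treat the output as a shortlist to benchmark, not a final answer.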

Test what you’re committing to. Publish your own internal benchmarks. The space moves every quarter.

Related Posts

GPU Clouds: RunPod vs Lambda vs CoreWeave — June 2026

RunPod H100 at $2.69/hr. Lambda at $4.29/hr. CoreWeave at $6.16/hr — but requires 8-GPU minimums. Which GPU cloud makes sense for your agent workloads?

Multi-Cloud GPU Strategy: Avoiding Lock-in and Saving 40%

Running GPU workloads on a single cloud leaves money and resilience on the table. A practical multi-cloud pattern for AI workloads — when it's worth the complexity and when it isn't.

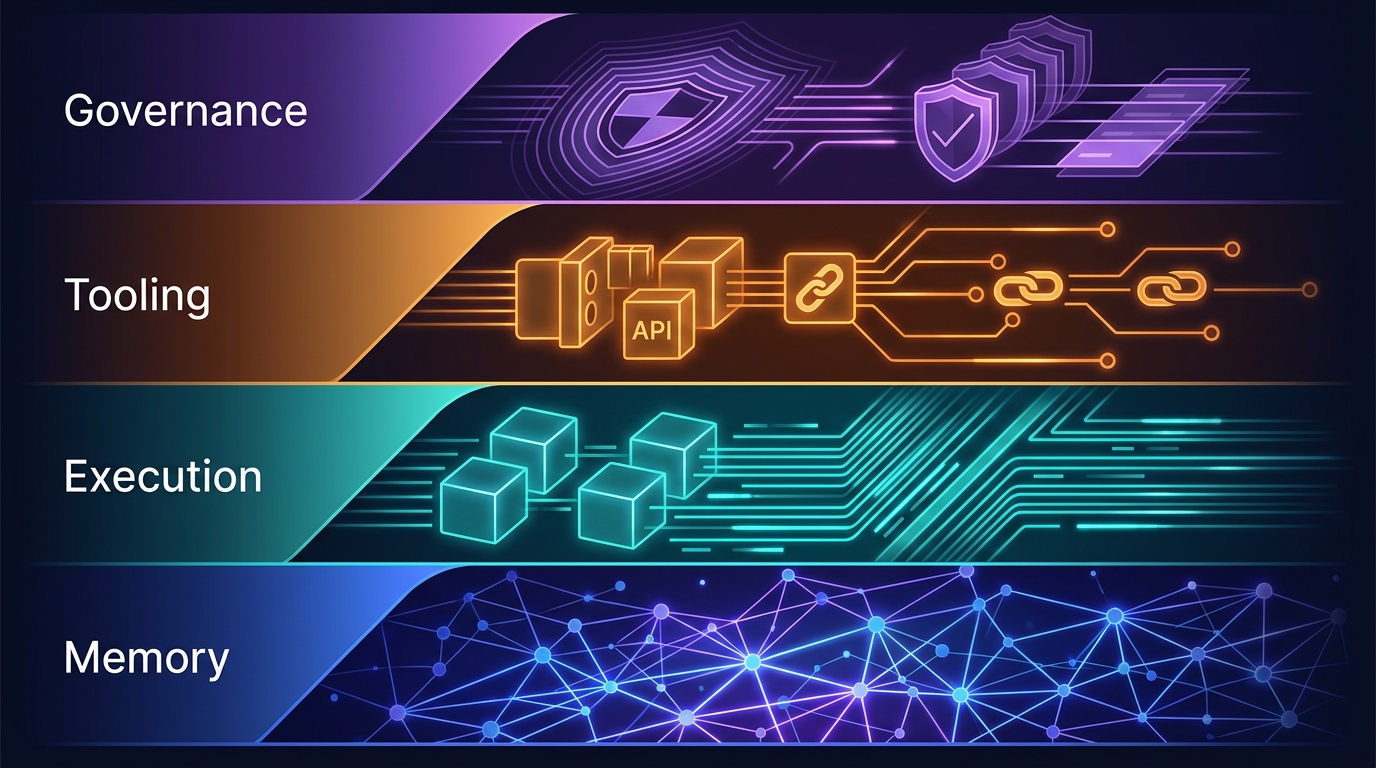

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.