The State of AI Infrastructure 2025

A ground-truth report on where AI infrastructure stands at the start of 2025 — GPU availability, inference pricing, the neocloud wars, and the architecture patterns winning in production.


2024 was the year AI infrastructure stopped being research and became ops. Teams that were prototyping in notebooks a year ago are now running fleets, paying six-figure GPU bills, and carrying AI services in their production PagerDuty rotation. The questions shifted from “can we get it to work?” to “can we make it cheap, fast, and reliable?”

This is our annual ground-truth report on where the stack stands heading into 2025. We work with companies running AI platforms from a single A10G to multi-thousand-H100 fleets; this reflects what we actually see.

The Hardware Picture

H100 availability has normalized

The H100 shortage that dominated 2023–2024 is over. On-demand H100 is now broadly available at most hyperscalers and neoclouds without capacity reservations. Reserved-term pricing has come down 25–40% year over year.

Going rate (Jan 2025):

- H100 80GB on-demand: $2.50–$4.00/hr (was $4–$12 in mid-2024)

- H100 80GB reserved 1-year: $1.75–$2.80/hr

- A100 80GB on-demand: $1.60–$3.00/hr

- A100 80GB reserved: $0.90–$1.40/hr

A100 is now the budget option; H100 is the workhorse. The crossover point, where H100's cost efficiency beats A100 even at reserved pricing, is reached for almost every workload we size.
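The crossover is a dollars-per-token question, not a dollars-per-hour one. A minimal sketch of that comparison, assuming hypothetical throughput figures (benchmark your own model, batch size, and serving stack); the hourly prices are simply picked from inside the reserved ranges quoted above.

```python
# Compare GPUs on dollars per million generated tokens rather than dollars per hour.
# Throughput figures are HYPOTHETICAL placeholders, not benchmarks.


def cost_per_million_tokens(hourly_price_usd: float, tokens_per_second: float) -> float:
    """USD per 1M tokens for a GPU sustaining `tokens_per_second`."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_price_usd / tokens_per_hour * 1_000_000


def cheapest_option(options: dict) -> str:
    """Return the label with the lowest cost per 1M tokens.

    `options` maps a label to (hourly_price_usd, tokens_per_second).
    """
    return min(options, key=lambda name: cost_per_million_tokens(*options[name]))


if __name__ == "__main__":
    # Prices sit inside the reserved ranges quoted above; throughputs are assumed.
    fleet = {
        "H100 reserved": (2.20, 3000.0),
        "A100 reserved": (1.15, 1200.0),
    }
    for name, (price, tps) in fleet.items():
        print(f"{name}: ${cost_per_million_tokens(price, tps):.3f} per 1M tokens")
    print("cheapest:", cheapest_option(fleet))
```

With these assumed inputs H100 comes out cheaper because its throughput advantage (2.5x) outweighs its price premium (about 1.9x); if your measured speedup is below the price ratio, the answer flips.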

B200 is shipping

Blackwell B200 started reaching hyperscaler and neocloud fleets in Q4 2024. Early users report 2.0–2.8x H100 throughput on training workloads, with FP4 precision giving further inference wins.

What we’re telling clients in early 2025:

- Don’t rush B200 if you’re already happy with H100 capacity. Early B200 is supply-constrained and pricey.

- If you’re making new multi-year commitments, evaluate B200 in the mix.

- B200 really shines at 1T+ parameter training. For 7B–70B inference, the H100 fleet will remain the best price/perf for most of 2025.

MI300X keeps pulling weight

AMD’s MI300X went from “interesting” to “credible production option” during 2024. Several large shops, including Microsoft and Meta among AMD’s launch customers, are running MI300X fleets. ROCm’s software story improved notably; vLLM, PyTorch, and Hugging Face libraries all run cleanly on MI300X without heroic porting.

Where MI300X wins:

- 192GB HBM3 per GPU — fits 70B models comfortably on one GPU, even at FP16

- Inference cost per token on large models

- Pricing roughly 15–25% below H100 on reserved
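The single-GPU claim for 70B models is easy to sanity-check: FP16 weights take 2 bytes per parameter, and whatever HBM is left becomes KV cache. This sketch assumes a Llama-3-70B-style shape (80 layers, 8 grouped-query KV heads, head dimension 128) and ignores activation memory and runtime overhead.

```python
# Single-GPU memory fit check: model weights plus KV cache.
# ASSUMES a Llama-3-70B-style shape; ignores activations and runtime overhead.

GB = 1e9  # decimal gigabytes, as HBM capacity is usually quoted


def weight_bytes(n_params: float, bytes_per_param: float) -> float:
    return n_params * bytes_per_param


def kv_bytes_per_token(n_layers: int, n_kv_heads: int, head_dim: int,
                       bytes_per_elem: float) -> float:
    # The leading 2 accounts for storing both K and V in every layer.
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_elem


def kv_token_budget(hbm_bytes: float, n_params: float, bytes_per_param: float,
                    n_layers: int, n_kv_heads: int, head_dim: int,
                    kv_bytes_per_elem: float) -> int:
    """Tokens of KV cache that fit after the weights are loaded (0 if they don't fit)."""
    free = hbm_bytes - weight_bytes(n_params, bytes_per_param)
    if free <= 0:
        return 0
    per_token = kv_bytes_per_token(n_layers, n_kv_heads, head_dim, kv_bytes_per_elem)
    return int(free // per_token)


if __name__ == "__main__":
    # 70B params at FP16 (2 bytes); 80 layers, 8 KV heads, head_dim 128, FP16 KV cache.
    for label, hbm in [("MI300X 192GB", 192 * GB), ("H100 80GB", 80 * GB)]:
        tokens = kv_token_budget(hbm, 70e9, 2, 80, 8, 128, 2)
        print(f"{label}: ~{tokens:,} tokens of KV cache")
```

Under those assumptions a 70B model leaves about 52 GB of headroom on MI300X, roughly 158k tokens of FP16 KV cache shared across concurrent requests, while on an 80GB H100 the FP16 weights alone do not fit.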

Where it lags:

- Ecosystem depth — libraries beyond the core still often ship NVIDIA-only first

- Multi-node training scaling — less mature than NVLink + NVSwitch + InfiniBand stack

We expect MI300X to be a 20–30% share player by end of 2025, up from <5% entering 2024.

The custom silicon players

- Groq shipped LPU-backed hosted inference at remarkable speed (500+ tok/s on Llama-3-70B). It remains API-only; Groq does not sell chips to end users.

- Cerebras continues to ship wafer-scale systems into targeted markets (pharmaceutical, research) and also offers a hosted inference API at competitive speeds.

- SambaNova runs hosted inference on reconfigurable dataflow hardware.

- Google TPU v5p / v6 (Trillium) is available on GCP and increasingly used by external customers. TPU inference pricing is competitive with GPU.

- AWS Trainium2 shipped in late 2024 and is being positioned aggressively for Claude and other large training workloads.

The pattern: custom silicon is winning targeted workloads (specific hosted APIs, specific training pipelines), but NVIDIA remains the general-purpose default for 90%+ of teams.

Inference Pricing Fell Off a Cliff

This is the biggest story of 2024 carrying into 2025. LLM token prices collapsed (USD per million tokens; input / output where two figures are shown):

| Model tier | Early 2024 | Early 2025 | Change |
|---|---|---|---|
| GPT-4 class | $30 / $60 (GPT-4) | $2.50 / $10 (GPT-4o) | -83% to -92% |
| Claude Sonnet class | $3 / $15 | $3 / $15 (Claude 3.5 Sonnet) | Stable |
| Llama-3-70B hosted (cheapest) | $0.88 | $0.35 (DeepInfra, Together) | -60% |
| Llama-3-8B hosted | $0.20 | $0.07 | -65% |

Gemini 2.0 Flash and GPT-4o-mini sit at or below $0.25 / M input tokens, and Claude 3.5 Haiku is under $1 / M. That is nearly free compared with GPT-4-class pricing in early 2024, and it changes the economics of every self-hosting decision.

Implication: The breakeven for self-hosting moved up. What used to be “self-host at 10M tokens/day” is now “self-host at 50M+ tokens/day” for most Llama-class workloads. Fine-tunes, privacy, and latency remain the strongest self-host arguments; pure cost is a harder sell.
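The breakeven is a ratio: the daily GPU bill divided by the API price per token, checked against how many tokens the fleet can actually serve. A hedged sketch with illustrative inputs only; it leaves out engineering time, idle capacity, and egress, each of which pushes the real breakeven higher.

```python
# Self-host vs hosted-API breakeven in tokens per day.
# All inputs are HYPOTHETICAL; engineering time, idle capacity, and egress
# are ignored, and each of them pushes the real breakeven higher.

SECONDS_PER_DAY = 86_400


def self_host_daily_cost(gpu_count: int, gpu_hourly_usd: float) -> float:
    return gpu_count * gpu_hourly_usd * 24


def breakeven_tokens_per_day(gpu_count: int, gpu_hourly_usd: float,
                             api_usd_per_million: float) -> float:
    """Daily volume at which the API bill equals the GPU bill."""
    return self_host_daily_cost(gpu_count, gpu_hourly_usd) / api_usd_per_million * 1_000_000


def fleet_capacity_tokens_per_day(gpu_count: int, tokens_per_second_per_gpu: float) -> float:
    """Upper bound on what the fleet can serve at 100% utilization."""
    return gpu_count * tokens_per_second_per_gpu * SECONDS_PER_DAY


if __name__ == "__main__":
    gpus, hourly, api_price, tps = 2, 2.00, 0.35, 1500.0  # illustrative only
    breakeven = breakeven_tokens_per_day(gpus, hourly, api_price)
    capacity = fleet_capacity_tokens_per_day(gpus, tps)
    print(f"breakeven: {breakeven / 1e6:.0f}M tokens/day")
    print(f"capacity:  {capacity / 1e6:.0f}M tokens/day")
    print("self-hosting can pay off" if capacity >= breakeven
          else "API is cheaper at any load this fleet can serve")
```

With these made-up inputs, two GPUs at full utilization could not even serve their own breakeven volume, so the API wins; plugging in your measured throughput and real prices shows where your own line is.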

Architecture Patterns Winning in 2025

From the deployments we work on, these are the patterns stabilizing into best practices:

1. LLM gateway first, everything else second

Every multi-provider stack needs a gateway layer: LiteLLM, Portkey, Kong AI Gateway, or a custom build. It handles retries, fallback, key management, PII redaction, cost attribution, and rate limiting in one place. See LLM Gateway Patterns.
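The retry-and-fallback part of a gateway fits in a few lines. This is a minimal sketch, not how LiteLLM or Portkey implement it: providers are hypothetical callables tried in priority order, each retried with exponential backoff on a hypothetical `ProviderError` before the next one is attempted.

```python
# Retry + provider fallback, the core loop of an LLM gateway.
# Providers and ProviderError are HYPOTHETICAL stand-ins for real SDK calls.
import time
from typing import Callable, Optional, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


class ProviderError(Exception):
    """A retryable provider failure (timeout, 429, 5xx)."""


def complete_with_fallback(prompt: str,
                           providers: Sequence[Provider],
                           retries_per_provider: int = 2,
                           base_backoff_s: float = 0.5,
                           sleep: Callable[[float], None] = time.sleep) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, completion)."""
    last_err: Optional[Exception] = None
    for name, call in providers:
        for attempt in range(retries_per_provider + 1):
            try:
                return name, call(prompt)
            except ProviderError as err:
                last_err = err
                if attempt < retries_per_provider:
                    # Exponential backoff between retries: 0.5s, 1s, 2s, ...
                    sleep(base_backoff_s * 2 ** attempt)
    raise RuntimeError("all providers failed") from last_err


if __name__ == "__main__":
    def primary(prompt: str) -> str:
        raise ProviderError("503 from primary")

    def secondary(prompt: str) -> str:
        return f"echo: {prompt}"

    print(complete_with_fallback("hello", [("primary", primary), ("secondary", secondary)],
                                 sleep=lambda s: None))
```

Injecting `sleep` keeps the backoff testable; key management, PII redaction, and cost attribution would wrap around this loop.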

2. Observability before features

Teams that instrumented early (with OTel, Langfuse, or LangSmith) iterate 3–5x faster on prompt and agent quality. The teams still adding tracing after launch are the ones stuck debugging intermittent issues for weeks.
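Instrumenting early means every model call leaves a span with latency, model, token counts, and any error, tied to a trace ID. The stdlib-only sketch below shows that shape; in production you would emit OpenTelemetry spans or use the Langfuse or LangSmith SDKs, and the `Span` record, `SPANS` list, and `traced` decorator here are hypothetical.

```python
# Stdlib-only picture of per-call LLM tracing: one span per model call.
# Span, SPANS, and traced() are HYPOTHETICAL; in production you would emit
# OpenTelemetry spans or use the Langfuse / LangSmith SDKs instead.
import functools
import time
import uuid
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    name: str
    trace_id: str
    start: float
    duration_s: float = 0.0
    attributes: dict = field(default_factory=dict)
    error: Optional[str] = None


SPANS: List[Span] = []  # stand-in for an exporter


def traced(name: str):
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, trace_id: Optional[str] = None, **kwargs):
            span = Span(name=name, trace_id=trace_id or uuid.uuid4().hex, start=time.time())
            t0 = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                if isinstance(result, dict):
                    # Copy usage metadata the call returned onto the span.
                    for key in ("model", "input_tokens", "output_tokens"):
                        if key in result:
                            span.attributes[key] = result[key]
                return result
            except Exception as exc:
                span.error = repr(exc)
                raise
            finally:
                span.duration_s = time.perf_counter() - t0
                SPANS.append(span)
        return wrapper
    return decorator


if __name__ == "__main__":
    @traced("llm.chat")
    def fake_llm(prompt: str) -> dict:
        return {"model": "example-model", "input_tokens": len(prompt.split()), "output_tokens": 5}

    fake_llm("why is my agent looping", trace_id="req-123")
    print(SPANS[-1])
```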

3. Hybrid retrieval by default

BM25 + dense retrieval + reranker. Pure dense-only RAG is an anti-pattern for high-quality search. The plumbing is modest once you have built it the first time. See Hybrid Search in Production.
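One common way to merge BM25 and dense results before the reranker is reciprocal rank fusion (RRF), which needs only ranks, not comparable scores. The article does not prescribe a fusion method, so treat RRF and the conventional k=60 here as assumptions.

```python
# Reciprocal rank fusion: merge best-first ranked lists using ranks only.
# RRF and k=60 are ASSUMPTIONS; the article does not prescribe a fusion method.
from collections import defaultdict
from typing import Iterable, List, Optional, Sequence


def reciprocal_rank_fusion(rankings: Iterable[Sequence[str]], k: int = 60,
                           top_n: Optional[int] = None) -> List[str]:
    """Score each doc as sum(1 / (k + rank)) across lists; higher is better."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score, breaking ties by doc ID for deterministic output.
    fused = sorted(scores, key=lambda d: (-scores[d], d))
    return fused if top_n is None else fused[:top_n]


if __name__ == "__main__":
    bm25_hits = ["d3", "d1", "d7"]
    dense_hits = ["d1", "d9", "d3"]
    print(reciprocal_rank_fusion([bm25_hits, dense_hits]))  # -> ['d1', 'd3', 'd9', 'd7']
```

The head of the fused list is what you would pass to a cross-encoder reranker.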

4. Eval-driven prompt development

Regression-tested prompts. You don’t ship a prompt change without an eval suite. This is the strongest predictor we’ve seen of shipping velocity for AI products.
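A prompt regression gate can be as small as scoring the baseline and candidate prompts on the same eval set and refusing the change if the candidate drops by more than a tolerance. The `run` and `scorer` callables and the 0.02 tolerance are hypothetical placeholders.

```python
# Eval gate for prompt changes: block a candidate that regresses on the suite.
# run(), scorer(), and the 0.02 tolerance are HYPOTHETICAL placeholders.
from statistics import mean
from typing import Callable, Sequence, Tuple

Case = Tuple[str, str]  # (input, expected output)


def score_prompt(run: Callable[[str, str], str], prompt: str,
                 cases: Sequence[Case], scorer: Callable[[str, str], float]) -> float:
    """Mean score of `prompt` over the eval cases."""
    return mean(scorer(run(prompt, inp), expected) for inp, expected in cases)


def gate(run, baseline_prompt: str, candidate_prompt: str, cases: Sequence[Case],
         scorer, max_drop: float = 0.02) -> Tuple[bool, float, float]:
    """Return (passes, baseline_score, candidate_score)."""
    base = score_prompt(run, baseline_prompt, cases, scorer)
    cand = score_prompt(run, candidate_prompt, cases, scorer)
    return cand >= base - max_drop, base, cand


if __name__ == "__main__":
    cases = [("a", "A"), ("b", "B")]
    # Toy "model": uppercases input only when the prompt says SHOUT.
    run = lambda prompt, inp: inp.upper() if "SHOUT" in prompt else inp
    exact_match = lambda out, expected: float(out == expected)
    print(gate(run, "SHOUT the answer", "say the answer", cases, exact_match))
```

In CI, a failing gate would simply fail the build for that prompt change.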

5. FP8 quantization as default

On H100, FP8 is essentially free performance with negligible quality loss. Any 70B+ inference not using FP8 is wasting GPUs.
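FP8 inference commonly uses the E4M3 format (4 exponent bits, 3 mantissa bits, largest finite value 448) with a scale factor per tensor. This pure-Python simulation shows the mechanics of per-tensor quantize and dequantize; it is an illustration, not how vLLM or TensorRT-LLM kernels work internally.

```python
# Pure-Python simulation of per-tensor FP8 (E4M3) quantize -> dequantize.
# Illustration only; real kernels do the cast in hardware.
import math
from typing import List, Sequence

E4M3_MAX = 448.0            # largest finite E4M3 value
E4M3_MIN_NORMAL = 2.0 ** -6
MANTISSA_BITS = 3


def round_to_e4m3(x: float) -> float:
    """Round to the nearest E4M3 value, saturating at +/-448."""
    if x == 0.0 or math.isnan(x):
        return x
    sign = math.copysign(1.0, x)
    a = min(abs(x), E4M3_MAX)
    if a < E4M3_MIN_NORMAL:
        step = 2.0 ** (-6 - MANTISSA_BITS)  # subnormal spacing, 2^-9
    else:
        step = 2.0 ** (math.floor(math.log2(a)) - MANTISSA_BITS)
    # Python's round() is ties-to-even, matching IEEE round-to-nearest-even here.
    return sign * min(round(a / step) * step, E4M3_MAX)


def fp8_quantize_dequantize(values: Sequence[float]) -> List[float]:
    """Per-tensor scaling: map the largest |value| onto E4M3_MAX, cast, scale back."""
    amax = max(abs(v) for v in values)
    scale = amax / E4M3_MAX if amax > 0 else 1.0
    return [round_to_e4m3(v / scale) * scale for v in values]


if __name__ == "__main__":
    weights = [0.5, -1.0, 2.0, 0.013, -0.37]
    for w, q in zip(weights, fp8_quantize_dequantize(weights)):
        print(f"{w:+.4f} -> {q:+.4f}")
```

For normal values the per-element relative rounding error is bounded at 2^-4 (about 6%); whether end-to-end quality loss is negligible for a given model is worth confirming on your own evals.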

6. Disaggregated prefill/decode

The newest pattern: splitting prefill (compute-bound) and decode (bandwidth-bound) onto different node pools. It is running in production at a few large shops and is on track for general availability in vLLM and SGLang during 2025, with 30–50% throughput wins on workloads with long prompts.
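The compute-bound versus bandwidth-bound split falls out of a roofline estimate. A forward pass costs roughly 2 FLOPs per parameter per token, so with BF16 weights the FLOPs per byte of weights read equals the number of tokens processed per pass over the weights: the whole prompt for prefill, one token per sequence in the batch for decode. The hardware figures assume published H100 SXM specs, and the model ignores attention and KV-cache traffic.

```python
# Roofline estimate: is a phase limited by compute or by memory bandwidth?
# ASSUMES H100 SXM peak specs and BF16 weights; ignores attention and KV-cache reads.

PEAK_FLOPS = 989e12        # dense BF16 FLOP/s
PEAK_BANDWIDTH = 3.35e12   # HBM3 bytes/s
MACHINE_BALANCE = PEAK_FLOPS / PEAK_BANDWIDTH  # ~295 FLOPs per byte


def arithmetic_intensity(tokens_per_weight_read: int, bytes_per_param: float = 2.0) -> float:
    """FLOPs per byte of weights, at ~2 FLOPs per parameter per token."""
    return 2.0 * tokens_per_weight_read / bytes_per_param


def limiting_resource(tokens_per_weight_read: int) -> str:
    if arithmetic_intensity(tokens_per_weight_read) >= MACHINE_BALANCE:
        return "compute-bound"
    return "bandwidth-bound"


if __name__ == "__main__":
    # Prefill: the whole prompt is processed per pass over the weights.
    print("prefill, 2,000-token prompt:", limiting_resource(2000))
    # Decode: one new token per sequence per pass, so batch size sets intensity.
    print("decode, batch of 8:", limiting_resource(8))
```

With a machine balance near 295 FLOPs per byte, a long prefill sits well into compute-bound territory while small-batch decode is deep in bandwidth-bound territory, which is why giving each phase its own node pool can pay off.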

The Open-Source Frontier

The open-source model story shifted meaningfully:

- Llama 3.1 405B closed the gap with the closed frontier models for most practical tasks

- DeepSeek V3 (December 2024) and R1 (January 2025) delivered very strong reasoning at a comparatively low reported training cost, reshaping expectations

- The Qwen 2.5 series (Alibaba) is competitive with Llama at similar sizes

- Mistral Large 2 remains a strong EU-based option

For teams choosing a self-host target, the default in 2025 is Llama 3.3 70B or DeepSeek V3 for general chat, Qwen 2.5-Coder for coding agents, and Mistral Large 2 for European compliance.

Cost and FinOps Became a Real Discipline

AI bills got big enough that finance started caring. In 2024, teams were adding FinOps controls as an afterthought. In 2025, it’s table stakes:

- Token spend attribution per feature, per customer, per team

- Rate limits enforced at the gateway per tenant

- Budget alerts at cluster and team level

- Quarterly compute usage reviews with engineering management

See our AI FinOps guide for the full playbook.
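Attribution and budget alerts start with recording cost against every dimension you want to slice by. A hypothetical in-memory ledger sketch; in a real stack this would sit behind the gateway and write to a metrics store, and the model prices, budgets, and 80% threshold are placeholders.

```python
# Per-dimension token spend attribution with budget alerts.
# Model prices, budgets, and the 80% alert threshold are HYPOTHETICAL.
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


class SpendLedger:
    def __init__(self, prices: Dict[str, Price], team_budgets: Dict[str, float],
                 alert_at: float = 0.8) -> None:
        self.prices = prices
        self.team_budgets = team_budgets  # USD per billing period
        self.alert_at = alert_at
        self.spend: Dict[Tuple[str, str, str], float] = defaultdict(float)

    def record(self, team: str, feature: str, customer: str, model: str,
               input_tokens: int, output_tokens: int) -> float:
        """Attribute one call's cost to (team, feature, customer); return the cost."""
        p = self.prices[model]
        cost = (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000
        self.spend[(team, feature, customer)] += cost
        return cost

    def team_spend(self, team: str) -> float:
        return sum(v for (t, _, _), v in self.spend.items() if t == team)

    def alerts(self) -> List[str]:
        """Teams at or above `alert_at` of their budget."""
        out = []
        for team, budget in self.team_budgets.items():
            used = self.team_spend(team)
            if used >= self.alert_at * budget:
                out.append(f"{team}: ${used:.2f} of ${budget:.2f} ({used / budget:.0%})")
        return out


if __name__ == "__main__":
    ledger = SpendLedger({"example-model": Price(1.0, 2.0)}, {"search": 10.0})
    ledger.record("search", "autocomplete", "acme", "example-model", 2_000_000, 3_000_000)
    print(ledger.alerts())  # -> ['search: $8.00 of $10.00 (80%)']
```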

What’s Still Broken

Places where the stack is still immature and will be a 2025 focus:

1. Multi-region inference. Running an LLM app that’s fast in both Frankfurt and Singapore is still harder than it should be. Expect more cross-region model serving tools this year.

2. Agent orchestration at scale. LangGraph and CrewAI work for small teams, but no mature “Kubernetes for agents” exists yet. Managed offerings are starting (LangGraph Cloud, AWS Bedrock Agents), but the space is young.

3. Fine-tune management. Managing dozens of LoRA adapters, retraining on fresh data, deploying without downtime — still requires a lot of custom tooling per org.

4. Regulatory compliance. The EU AI Act entered into force in August 2024. Most teams are still sorting out what it means operationally. Expect a wave of “AI governance” tools in 2025.

5. Evals that match production. Offline eval sets diverge from production traffic. The best teams are investing in continuous eval pipelines that sample real traffic.
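Continuous eval pipelines need a stable way to sample real traffic. One common approach, sketched here as an assumption rather than a prescribed design, hashes the request ID so every replica makes the same keep-or-drop decision for a given request; the request dict shape and default rate are hypothetical.

```python
# Deterministic sampling of production traffic for continuous evals.
# The request dict shape and the 1% default rate are HYPOTHETICAL.
import hashlib
from typing import Dict, Iterable, Iterator


def should_sample(request_id: str, rate: float) -> bool:
    """Keep about `rate` (0..1) of requests; same answer for the same ID everywhere."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:6], "big") / 2 ** 48  # uniform in [0, 1)
    return bucket < rate


def sample_for_eval(requests: Iterable[Dict], rate: float = 0.01) -> Iterator[Dict]:
    """Yield the requests to copy into the eval queue (e.g. for LLM-judge scoring)."""
    for req in requests:
        if should_sample(req["id"], rate):
            yield req


if __name__ == "__main__":
    traffic = [{"id": f"req-{i}", "prompt": "..."} for i in range(1000)]
    print(len(list(sample_for_eval(traffic, rate=0.05))), "requests sampled")
```

Because the decision is a pure function of the ID, replaying a day of logs reproduces exactly the same eval sample.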

Predictions for 2025

Where we think things go:

- H100 settles into the role A100 used to play: the default workhorse. B200 handles frontier training. L40S/L4 fleets proliferate for small-model inference.

- Inference margins compress further. $0.15/M tokens for 70B-class becomes realistic.

- One major neocloud goes public (CoreWeave is the obvious candidate).

- Agent infrastructure becomes its own category, distinct from inference serving. See our early take in Agent Infrastructure: What’s Different.

- EU sovereign AI infrastructure emerges. French, German, and UK providers scale up, driven by the EU AI Act.

- Vector DB consolidation. Two or three clear winners emerge, pgvector’s share keeps growing, and some smaller players get acquired or wind down.

- MCP (Model Context Protocol) becomes the default tool protocol, standardizing agent tooling across frameworks.

- On-prem AI returns for regulated industries. Enterprise on-prem H100 clusters ship in meaningful volume.

What To Do With This

If you’re scoping 2025 infrastructure work:

- If you’re starting fresh: Use hosted APIs, a LiteLLM gateway, a vector DB you already know, and OTel tracing. Revisit self-hosting when usage justifies it.

- If you’re already self-hosting: Audit for FP8 use, prefix caching, and continuous batching. Price against DeepInfra/Together — sometimes the API is cheaper now.

- If you’re scaling: Build the FinOps tooling you wish you’d built in 2023.

- If you’re going long: Evaluate B200 for your next committed procurement cycle.
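Prefix caching is worth auditing because many production prompts share long prefixes (system prompts, few-shot examples). A rough sketch of the block-hashing idea behind automatic prefix caching in servers such as vLLM: each full block of tokens is hashed together with the hash of everything before it, so a matching hash means the KV cache for that whole prefix can be reused. The block size of 16 and the dict standing in for GPU KV memory are assumptions.

```python
# Block-level prefix caching sketch: reuse KV blocks for shared prompt prefixes.
# Block size 16 and the dict standing in for GPU KV memory are ASSUMPTIONS.
import hashlib
from typing import Dict, List, Sequence

BLOCK_SIZE = 16


def block_hashes(token_ids: Sequence[int], block_size: int = BLOCK_SIZE) -> List[bytes]:
    """Chained hash per full block: each hash covers the block and all blocks before it."""
    hashes: List[bytes] = []
    prev = b""
    for start in range(0, len(token_ids) - block_size + 1, block_size):
        block = tuple(token_ids[start:start + block_size])
        prev = hashlib.sha256(prev + repr(block).encode()).digest()
        hashes.append(prev)
    return hashes


class PrefixCache:
    def __init__(self) -> None:
        self.blocks: Dict[bytes, str] = {}  # block hash -> placeholder KV handle

    def lookup_and_fill(self, token_ids: Sequence[int]) -> int:
        """Return how many prompt tokens hit the cache; store the missed blocks."""
        hits = 0
        for h in block_hashes(token_ids):
            if h in self.blocks:
                hits += 1
            else:
                self.blocks[h] = f"kv-block-{len(self.blocks)}"
        return hits * BLOCK_SIZE


if __name__ == "__main__":
    cache = PrefixCache()
    system_prompt = list(range(40))  # 40 shared token IDs
    print(cache.lookup_and_fill(system_prompt + [100, 101]))  # first request: no hits
    print(cache.lookup_and_fill(system_prompt + [200] * 10))  # reuses 2 full blocks
```

Only full blocks are cached, so a 40-token shared prefix yields 32 reused tokens here; prefill work for those tokens is skipped on the second request.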

Further Reading

- The AI Infrastructure Stack Explained (2024)

- NVIDIA B200 vs H100: Should You Upgrade?

- MI300X vs H100: AMD’s Bet on Inference

- AI FinOps: Tracking Token Spend

Planning your 2025 AI infrastructure roadmap? Let’s talk. We help shops from pre-launch through hypergrowth and beyond.

Related Posts

State of AI Infrastructure 2026: Mid-Year Reality Check

A mid-2026 ground-truth report: B200 reality, SGLang's $400M spinout, agent infra going mainstream, and the three patterns dominating production.

The AI Infrastructure Stack: 2026 Edition

A refreshed view of the production AI stack at the start of 2026 — what changed since 2024, what's consolidating, and where the next round of innovation is landing.

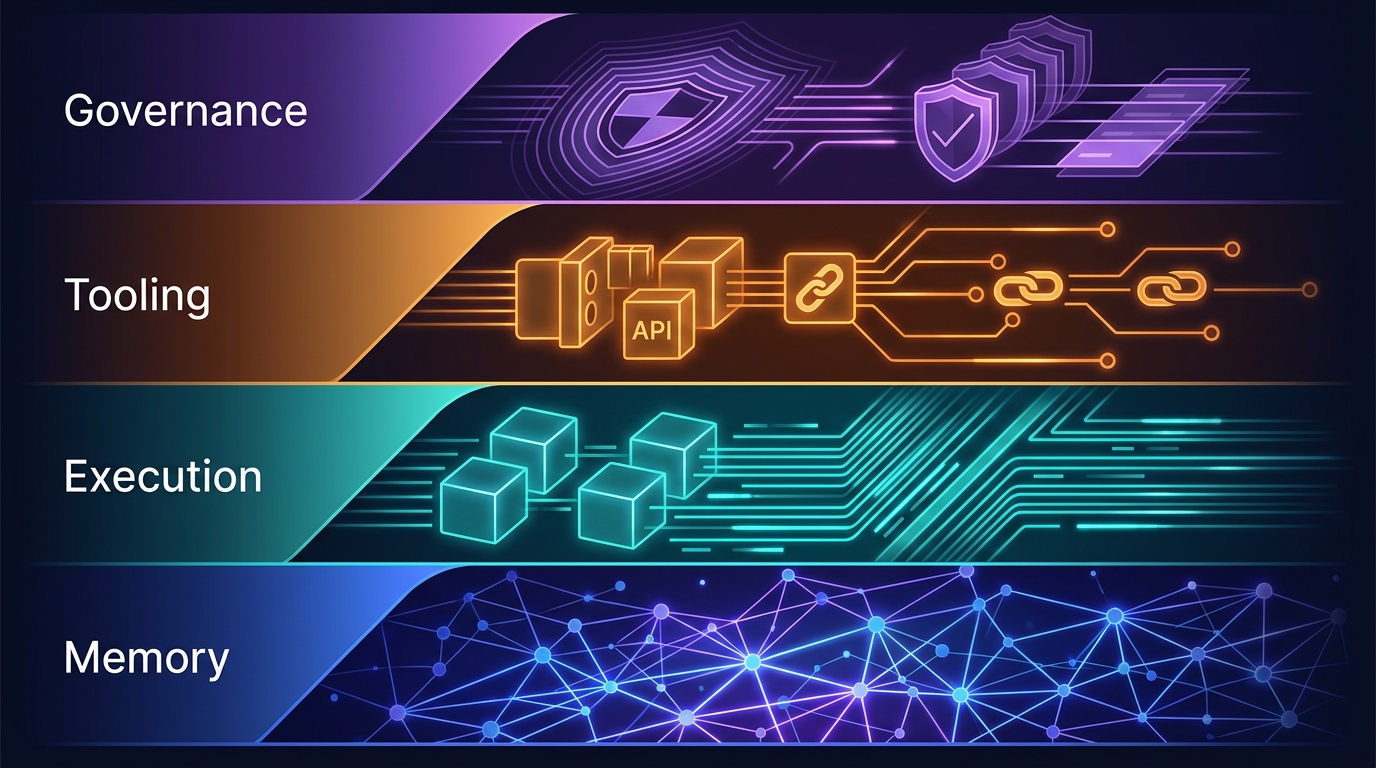

The Four-Layer Agent Infrastructure Stack: Where the Moat Actually Lives in 2026

A generation of agent startups will get commoditized. The ones that survive own one of four stateful layers: Memory, Execution, Tooling, or Governance. Here's how to tell the difference between a moat and glue code.