The AI Infrastructure Stack Explained (2024)

A grounded tour of the six layers that make modern AI systems work — from GPUs and inference servers to vector databases, orchestration, and observability — with the tradeoffs that matter in production.

Every week a team tells me their “AI project” is stuck. Usually it’s not the model. The model works fine in a notebook. What’s stuck is the stack around it — the GPU scheduling, the vector store, the inference server, the evals, the cost accounting, the fallback paths when a provider returns 503. Those pieces are “AI infrastructure,” and they are where projects die.

This guide walks through the six layers of a modern AI stack, the tradeoffs inside each, and the patterns that keep production systems alive. If you’re planning a platform investment, treat this as your mental map.

Why the AI Stack Deserves Its Own Name

Traditional web infrastructure evolved around deterministic request/response: a load balancer, a stateless service, a database, a cache. Modern AI systems inherit all of that, then add constraints the old stack was not built for:

- Non-deterministic output. Same input can produce different answers. Evals, regression tests, and structured output constraints become first-class concerns.

- Expensive, scarce compute. A single H100 costs $30k+ and is often rented by the hour. Scheduling, bin-packing, and spot reclamation are permanent concerns, not tuning problems.

- Large model weights. A 70B model is ~140GB in FP16 (2 bytes per parameter). Loading, warming, and paging those weights dwarf the cost of starting any application binary.

- Provider dependence. OpenAI, Anthropic, and Google change prices, context windows, and policies frequently, sometimes week to week. Your stack needs to absorb those changes.

- New failure modes. Prompt injection, hallucination, token overruns, rate limits, latency tails that stretch to tens of seconds.

The rest of this article breaks the stack into six layers you actually deploy — not a slideware hierarchy, but the real components shipping in 2024.

Layer 1 — Compute: GPUs, TPUs, and the Silicon Lottery

At the bottom is compute. For most teams this means NVIDIA GPUs on a cloud — A100 (still ubiquitous), H100 (the 2024 workhorse), and the upcoming B200. If you are an AWS shop you may touch Trainium or Inferentia. If you are a Google shop, TPUs.

The critical decisions:

Training vs. inference hardware. Training is memory- and bandwidth-bound; H100 with NVLink dominates. Inference is increasingly throughput-bound; a fleet of L40S or even L4 can beat one H100 on cost per token for a 7–13B model.

Memory ceiling. A single H100 has 80GB; that holds a 70B model at FP8 with short context, or a 13B at FP16 with headroom. Beyond that you need tensor parallelism across GPUs, which raises networking requirements.
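The memory arithmetic behind those numbers is simple enough to sketch. This is a rough estimate of weights only, assuming 2 bytes per parameter for FP16, 1 for FP8, and 0.5 for INT4; the function names are illustrative, and real deployments also need room for the KV cache, activations, and runtime overhead.

```python
# Bytes per parameter for common precisions (weights only, no KV cache).
BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_gb(params_billions: float, precision: str) -> float:
    """Approximate weight footprint in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def headroom_gb(params_billions: float, precision: str, gpu_gb: float = 80.0) -> float:
    """Memory left on one GPU after loading weights; negative means it does not fit."""
    return gpu_gb - weight_gb(params_billions, precision)


if __name__ == "__main__":
    for size, precision in [(70, "fp16"), (70, "fp8"), (13, "fp16")]:
        print(
            f"{size}B @ {precision}: weights ~{weight_gb(size, precision):.0f} GB, "
            f"headroom on an 80 GB GPU: {headroom_gb(size, precision):.0f} GB"
        )
```

A negative headroom is the signal that you are in tensor-parallel territory; a small positive one (as with 70B at FP8) is why context length has to stay short.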

Interconnect. NVLink on an H100 is up to 900 GB/s inside a node. InfiniBand is 400 Gbps across nodes, which is roughly 50 GB/s, so watch the bits-versus-bytes gap when comparing the two. Ethernet with RoCE is catching up but nontrivial to tune. Multi-node training cares about this; single-node inference does not.

Sourcing. You can rent on-demand from the hyperscalers, reserve capacity via CoreWeave or Lambda, or commit long-term. In 2024, on-demand H100 is $2.50–$4/hr depending on region and season; reserved can be half that.

If you’re early, rent. If you’re running a fleet bigger than ~64 H100s continuously, do the math on reserved or bare-metal.
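"Do the math" can start as small as the sketch below. It assumes a 730-hour month, that reserved capacity is billed for every hour whether used or not, and that on-demand is billed only for hours used; the rates are the 2024 figures quoted above, and the function names are illustrative.

```python
HOURS_PER_MONTH = 730  # average hours in a month


def monthly_cost(gpus: int, hourly_rate: float, utilization: float = 1.0) -> float:
    """Monthly spend for a fleet, paying only for the utilized fraction of hours."""
    return gpus * hourly_rate * HOURS_PER_MONTH * utilization


def breakeven_utilization(on_demand_rate: float, reserved_rate: float) -> float:
    """Fraction of hours you must actually use before reserved beats on-demand.

    Assumes reserved capacity is paid for around the clock.
    """
    return reserved_rate / on_demand_rate


if __name__ == "__main__":
    low = monthly_cost(64, 2.50)
    high = monthly_cost(64, 4.00)
    print(f"64 H100s on-demand, always on: ${low:,.0f} to ${high:,.0f} per month")
    print(f"Break-even utilization at half price: {breakeven_utilization(3.0, 1.5):.0%}")
```

Under these assumptions, a fleet that is busy more than half the time is already cheaper on a half-price reservation, which is why continuous workloads tip toward reserved capacity.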

Layer 2 — Serving: Where Tokens Come From

Above raw compute is the inference server — the process that loads model weights, batches requests, runs the forward pass, and streams tokens.

The three production-grade options in 2024:

- vLLM — Open-source, dominant for self-hosted LLMs. Uses PagedAttention to pack the KV cache efficiently, supports continuous batching, and in our benchmarks delivers 3–10x the throughput of naive HuggingFace generate(). See our PagedAttention deep-dive.

- Text Generation Inference (TGI) — Hugging Face’s server. Solid, integrated with the HF ecosystem, and battle-tested at scale on Hugging Face’s own inference endpoints.

- NVIDIA Triton + TensorRT-LLM — If you want maximum throughput on NVIDIA hardware and are willing to invest in the build pipeline, this is the performance ceiling. It also handles non-LLM models uniformly.
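To make the KV-cache packing idea concrete, here is a toy block allocator. It is a teaching sketch of the paging concept only, not vLLM's implementation: the class and method names are made up, and it tracks block bookkeeping rather than any tensors. The point is that sequences claim fixed-size blocks on demand instead of reserving one contiguous region sized for the maximum length.

```python
class PagedKVCache:
    """Toy bookkeeping for a paged KV cache (hypothetical, for illustration)."""

    def __init__(self, num_blocks: int, block_size: int = 16):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))   # physical block ids
        self.block_tables: dict[str, list[int]] = {}  # seq id -> physical blocks
        self.lengths: dict[str, int] = {}             # seq id -> tokens stored

    def append_token(self, seq_id: str) -> bool:
        """Reserve room for one more token. Returns False when out of blocks."""
        length = self.lengths.get(seq_id, 0)
        table = self.block_tables.setdefault(seq_id, [])
        if length == len(table) * self.block_size:  # all owned blocks are full
            if not self.free_blocks:
                return False  # caller would queue or preempt the sequence
            table.append(self.free_blocks.pop())
        self.lengths[seq_id] = length + 1
        return True

    def free(self, seq_id: str) -> None:
        """Return a finished sequence's blocks to the pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)


if __name__ == "__main__":
    cache = PagedKVCache(num_blocks=4, block_size=4)
    for _ in range(5):
        cache.append_token("request-a")
    print(cache.block_tables["request-a"], "free:", len(cache.free_blocks))
    cache.free("request-a")
    print("free after release:", len(cache.free_blocks))
```

Because a finished request hands its blocks straight back to the pool, new requests can join the running batch without waiting for a large contiguous allocation, which is what makes continuous batching practical.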

Small and specialized servers have their place: Ollama and LM Studio for local dev, TGI or RunPod endpoints for quick experiments, vLLM for most production self-hosting.

Picking a server early matters. Each makes different tradeoffs around concurrency, token streaming, structured output (JSON mode), tool calling, and observability hooks.

Layer 3 — Orchestration: Framework, Tools, and Memory

Layer 3 is where the agent lives. It decides what to do with a user request, which tools to call, how to remember prior turns, when to hand off to a human.

In 2024 the mature options are:

- LangChain / LangGraph for flexible graph-based control flow

- LlamaIndex for retrieval-heavy workloads

- CrewAI and AutoGen for multi-agent patterns

- Semantic Kernel for .NET-centric organizations

- Custom orchestration — often the right call for mission-critical systems

These frameworks are thin — the value is in the glue code, the retries, the fallbacks, and the evals. A good pattern: start with a framework, rewrite the hot paths once you understand them.
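Stripped of any framework, the core loop of this layer looks roughly like the sketch below: decide, call a tool, remember the result, and hand off to a human when the step budget runs out. Everything here is hypothetical scaffolding; `stub_decide` stands in for a real model call and `lookup_order` for a real tool.

```python
from typing import Callable

History = list[tuple[str, str]]  # (role, text) pairs kept as conversation memory


def run_agent(
    user_msg: str,
    decide: Callable[[History], dict],
    tools: dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> tuple[str, History]:
    """Loop until the policy returns a final answer or the step budget runs out."""
    history: History = [("user", user_msg)]
    for _ in range(max_steps):
        action = decide(history)  # {"tool": name, "input": text} or {"final": text}
        if "final" in action:
            history.append(("assistant", action["final"]))
            return action["final"], history
        tool = tools.get(action["tool"])
        result = tool(action["input"]) if tool else f"unknown tool: {action['tool']}"
        history.append(("tool", result))
    return "escalate_to_human", history  # budget exhausted: hand off


def stub_decide(history: History) -> dict:
    """Hypothetical stand-in for an LLM: look up the order once, then answer."""
    tool_results = [text for role, text in history if role == "tool"]
    if not tool_results:
        return {"tool": "lookup_order", "input": "A123"}
    return {"final": f"Your order status: {tool_results[-1]}"}


TOOLS = {"lookup_order": lambda order_id: f"{order_id} shipped"}

if __name__ == "__main__":
    answer, history = run_agent("Where is my order?", stub_decide, TOOLS)
    print(answer)
    print(history)
```

The step budget and the explicit hand-off value are the parts worth keeping when you later rewrite a hot path by hand.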

The Model Context Protocol (MCP) is the important 2024 development here. It standardizes how tools and data sources are exposed to any LLM. We expect MCP to replace most ad-hoc tool registries within a year. Our Claude Code MCP guide has the current state of the art.

Layer 4 — Data: Vectors, Features, and Retrieval

Retrieval-augmented generation (RAG) made the vector database a standard part of the stack. The four leaders in 2024 are Pinecone (managed, most mature), Qdrant (open-source, Rust, fast), Weaviate (rich hybrid search), and Milvus (built for billion-vector scale, Kubernetes-native).

The 2024 surprise: pgvector caught up for many workloads. If you are already running Postgres, a single CREATE EXTENSION vector; gives you 80% of what you need up to tens of millions of vectors. See pgvector at Scale.
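For a sense of how little setup that involves, here is a sketch of the SQL, held in Python strings so it can be passed to whatever Postgres driver you already use. The `chunks` table, its columns, the 3-dimensional vector, and the psycopg-style `%s` placeholders are all assumptions for illustration; `<=>` is pgvector's cosine-distance operator, and the HNSW index requires a reasonably recent pgvector release.

```python
# Schema setup: enable the extension, create a table, add an ANN index.
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS chunks (
    id bigserial PRIMARY KEY,
    content text NOT NULL,
    embedding vector(3)  -- use your embedding model's dimension here
);
CREATE INDEX IF NOT EXISTS chunks_embedding_idx
    ON chunks USING hnsw (embedding vector_cosine_ops);
"""

# Nearest-neighbour query by cosine distance (smaller is closer).
SEARCH_SQL = """
SELECT id, content, embedding <=> %s::vector AS cosine_distance
FROM chunks
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""


def to_vector_literal(values) -> str:
    """Format a sequence of numbers as a pgvector text literal, e.g. '[1.0,2.5]'."""
    return "[" + ",".join(str(float(v)) for v in values) + "]"


if __name__ == "__main__":
    literal = to_vector_literal([1, 2.5, 0])
    params = (literal, literal, 5)  # would be passed to cursor.execute(SEARCH_SQL, params)
    print(literal, params)
```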

Beyond the vector store, a real RAG stack needs:

- An ingestion pipeline — chunking, metadata extraction, embedding, deduplication

- A hybrid retrieval layer — BM25 plus dense vectors, rerankers (Cohere, bge-reranker, or a small local model)

- A cache — embeddings are expensive and deterministic; cache aggressively

- Freshness machinery — how do you invalidate or update when source documents change?
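Two of these pieces fit in a few lines each. The sketch below shows an embedding cache keyed by a content hash, and a way to merge a BM25 ranking with a dense ranking. The merge uses reciprocal rank fusion, which is one common choice and not something this article prescribes; the model name, the fake embedding function, and the constant `k=60` are illustrative assumptions.

```python
import hashlib


class EmbeddingCache:
    """Cache embeddings by (model, text) hash so identical text is embedded once."""

    def __init__(self, embed_fn):
        self.embed_fn = embed_fn  # hypothetical: any callable text -> vector
        self.store = {}
        self.misses = 0

    def get(self, text: str, model: str = "model-v1"):
        # Include the model name: the same text embeds differently per model.
        key = hashlib.sha256(f"{model}\x00{text}".encode("utf-8")).hexdigest()
        if key not in self.store:
            self.misses += 1
            self.store[key] = self.embed_fn(text)
        return self.store[key]


def reciprocal_rank_fusion(rankings, k: int = 60):
    """Merge ranked lists of doc ids; each list contributes 1 / (k + rank)."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc id for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


if __name__ == "__main__":
    cache = EmbeddingCache(lambda text: [float(len(text))])  # fake embedder
    cache.get("hello world")
    cache.get("hello world")
    print("embedding calls:", cache.misses)

    bm25_hits = ["a", "b", "c"]
    dense_hits = ["b", "d", "a"]
    print(reciprocal_rank_fusion([bm25_hits, dense_hits]))
```

The fused list would then go to the reranker, which only has to reorder a short candidate set.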

The data layer is where most RAG systems rot silently. Bad chunking or stale indexes produce technically-correct answers that subtly diverge from ground truth. Evals here are non-negotiable.

Layer 5 — Observability: Seeing Into a Black Box

You cannot debug what you cannot see, and LLMs are black boxes by default. Production teams run at least three observability surfaces:

- Tracing — every request, every tool call, every prompt and completion, with timings. LangSmith, Langfuse, Arize Phoenix, Helicone, and OpenTelemetry-based stacks all solve this.

- Evals — offline and online regression tests. Given fixed inputs, has the output gotten worse? This is the single highest-leverage practice for teams that want to iterate on prompts without breaking production.

- Cost and usage — tokens per request, per feature, per user, per model. Without this you cannot negotiate with finance or detect a broken loop that’s quietly burning $5k/day.
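The eval surface in particular can start very small. The sketch below is a minimal offline regression check, assuming exact-match scoring and a 0.02 tolerance; both are placeholders, and the two lambda "models" stand in for real prompt versions.

```python
def exact_match(output: str, expected: str) -> float:
    """Simplest possible scorer: 1.0 on a case-insensitive match, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_evals(cases, generate, score=exact_match) -> float:
    """Mean score of `generate` over fixed (prompt, expected) pairs."""
    scores = [score(generate(prompt), expected) for prompt, expected in cases]
    return sum(scores) / len(scores)


def passes_regression(candidate: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Fail the check when the candidate drops more than `tolerance` below baseline."""
    return candidate >= baseline - tolerance


if __name__ == "__main__":
    cases = [("2+2", "4"), ("capital of France", "Paris")]
    answers = {"2+2": "4", "capital of France": "Paris"}
    current_prompt = lambda prompt: answers[prompt]  # stand-in for production
    new_prompt = lambda prompt: "4"                  # stand-in for a risky change

    baseline = run_evals(cases, current_prompt)
    candidate = run_evals(cases, new_prompt)
    print(baseline, candidate, passes_regression(candidate, baseline))
```

Wired into CI, a check like this blocks a prompt change that quietly makes fixed inputs worse.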

The mistake almost every team makes: treating observability as something to bolt on after launch. Build the tracing layer first, then iterate on the product. You will move faster.
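As an illustration of how small a first tracing layer can be, here is an in-memory sketch that records spans with timings and token counts, then rolls them up into cost per feature. The `Tracer` class, the attribute names, and the price of $0.01 per 1k tokens are all hypothetical; a production setup would export spans through OpenTelemetry or one of the tools above instead of keeping them in a list.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class Tracer:
    """Minimal in-memory span recorder (illustrative, not a real tracing SDK)."""

    def __init__(self):
        self.spans = []

    @contextmanager
    def span(self, name: str, **attrs):
        record = {"name": name, **attrs}
        start = time.perf_counter()
        try:
            yield record  # callers attach token counts, model name, etc.
        finally:
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)  # recorded even if the body raised

    def cost_by(self, key: str, usd_per_1k_tokens: float) -> dict:
        """Sum token cost grouped by a span attribute such as 'feature' or 'user'."""
        totals = defaultdict(float)
        for span in self.spans:
            tokens = span.get("prompt_tokens", 0) + span.get("completion_tokens", 0)
            totals[span.get(key, "unknown")] += tokens / 1000 * usd_per_1k_tokens
        return dict(totals)


if __name__ == "__main__":
    tracer = Tracer()
    with tracer.span("llm_call", feature="search") as span:
        span["prompt_tokens"] = 1200      # would come from the provider response
        span["completion_tokens"] = 300
    with tracer.span("llm_call", feature="summarize") as span:
        span["prompt_tokens"] = 4000
        span["completion_tokens"] = 1000
    print(tracer.cost_by("feature", usd_per_1k_tokens=0.01))
```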

Layer 6 — The Gateway: Your New Perimeter

Between your orchestration layer and the outside world — OpenAI, Anthropic, Google, self-hosted models — sits the gateway. In 2024 this is typically:

- LiteLLM proxy — open-source, unifies 100+ provider APIs behind an OpenAI-compatible endpoint

- Portkey — managed, richer observability and guardrails

- Kong AI Gateway — bolt-on for existing Kong shops

- Custom — fine for single-model workflows, not fine for multi-provider

The gateway handles:

- Retries, circuit breakers, fallback to alternate providers

- Key management, rate-limit enforcement

- Prompt redaction and PII scrubbing

- Audit logging for compliance

- Cost attribution per caller

Without a gateway, every service calls OpenAI directly and every service owns retry logic. That does not scale.
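The retry, circuit-breaker, and fallback logic that a gateway centralizes can be sketched as follows. This is a simplified illustration under stated assumptions, not how LiteLLM or Portkey implement it: `ProviderError`, the thresholds, and the provider callables are hypothetical, and a real gateway would also distinguish retryable errors (503, rate limits) from permanent ones.

```python
import time


class ProviderError(Exception):
    """Hypothetical error raised by a provider client on a failed call."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, cooldown_s=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        if self.clock() - self.opened_at >= self.cooldown_s:
            # Half-open: permit a trial call; a single failure reopens the circuit.
            self.opened_at = None
            self.failures = self.failure_threshold - 1
            return True
        return False

    def record_success(self):
        self.failures = 0

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()


def call_with_fallback(prompt, providers, breakers, retries=2, backoff_s=0.5,
                       sleep=time.sleep):
    """Try providers in order; retry with exponential backoff, skip open circuits."""
    errors = []
    for name, call in providers:
        breaker = breakers[name]
        for attempt in range(retries):
            if not breaker.allow():
                errors.append(f"{name}: circuit open")
                break
            try:
                result = call(prompt)
                breaker.record_success()
                return name, result
            except ProviderError as exc:
                breaker.record_failure()
                errors.append(f"{name}: {exc}")
                if attempt < retries - 1:
                    sleep(backoff_s * (2 ** attempt))
    raise ProviderError("all providers failed: " + "; ".join(errors))
```

Putting this in one place means a 503 from the primary provider is handled once, with one policy, instead of being reimplemented in every calling service.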

Putting It Together: A Reference Architecture

A mid-sized AI-first product in 2024 typically looks like this:

                  [ Clients / Apps ]
                           │
                           ▼
                    [ API Gateway ]  ← auth, rate limits, observability
                           │
                           ▼
     [ Orchestration Layer (LangGraph / custom) ]
          │                │                │
          ▼                ▼                ▼
   [ LLM Gateway ]   [ Vector DB ]   [ Tools / MCP ]
          │                │
          ▼                ▼
[ Inference (vLLM) ] [ Retrieval cache ]
          │
          ▼
[ GPU Fleet (K8s / Ray) ]
          │
          ▼
[ Observability (OTel → Langfuse / Phoenix / Datadog) ]

Small teams collapse this (e.g., skip the gateway, use hosted LLMs only). Large teams fragment it further (separate fine-tuning, eval, and labeling infrastructure). The shape stays recognizable.

What This Means For You

If you’re shipping your first AI feature, focus on Layers 3 and 5 first. Pick a framework, wire tracing, and use a hosted model. You can skip Layers 1, 2, and 6 entirely.

If you’re scaling past $10k/month in inference spend, Layer 6 (the gateway) and Layer 2 (self-hosted serving of small models) start paying back. This is usually where a platform team emerges.

If you’re committed to self-hosting frontier-size models, Layer 1 (compute) and Layer 2 (serving) become a full-time concern. Expect a dedicated infra team, reserved capacity contracts, and a real SRE rotation.

Across every size: invest in observability and evals early, and treat AI infrastructure as software. The teams that do this quietly ship reliable products. The teams that don’t spend a year debugging intermittent hallucinations in their weekly deploy.

Further Reading

- Building Production AI Agents: The Complete Guide

- Complete Guide to AI Agent Frameworks (2024)

- Deploying AI Agents to Production

Looking at your AI stack and want a second opinion? Talk to our engineers about an architecture review.

Related Posts

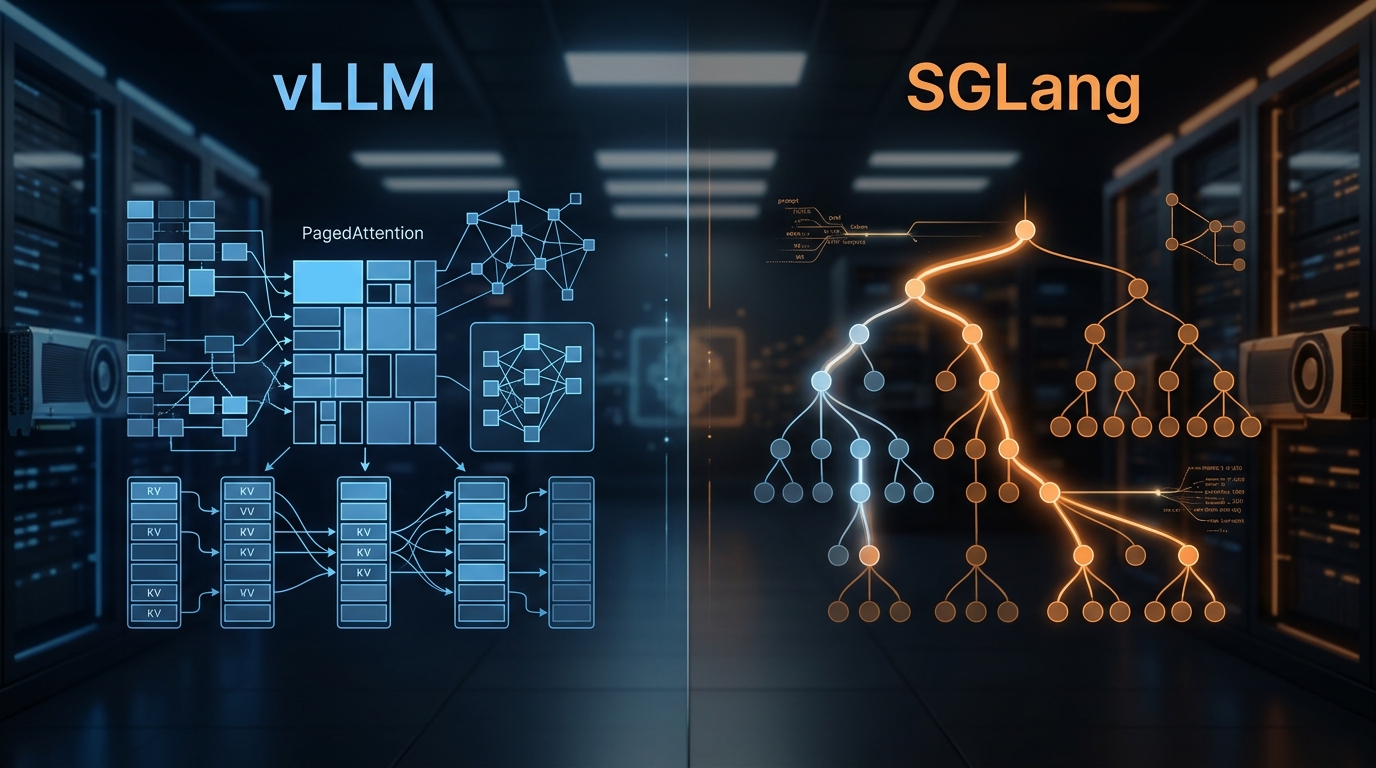

vLLM vs SGLang: Inference Engine Comparison 2026

We've deployed both at scale. Here's what the benchmarks actually show, where RadixAttention beats PagedAttention, and which engine to pick for your workload.

Kubernetes for GPU Workloads: A Primer

Running AI workloads on Kubernetes isn't the same as running stateless microservices. A primer on GPU operators, device plugins, node affinity, MIG, and the patterns that keep clusters healthy.

vLLM: The Open-Source Inference Engine Changing LLM Serving

vLLM uses PagedAttention and continuous batching for dramatically higher LLM throughput vs. HuggingFace serving. Architecture, benchmarks, deployment notes.