MI300X vs H100: AMD's Bet on Inference

AMD's MI300X turned from curiosity to production option during 2024–2025. Where AMD wins, where NVIDIA still leads, and how to integrate MI300X into a mixed fleet.

In 2023, AMD’s MI300X was a spec sheet. In 2024, it was a risk. By the end of 2025, it was in production fleets at Microsoft, Meta, and the first wave of AMD-based neoclouds. The software story is no longer the blocker it was. And for large-model inference specifically, MI300X has a real argument over H100.

This post covers the current reality: where AMD wins, where NVIDIA still leads, and how we’re integrating AMD into mixed fleets with real workloads.

Spec Comparison

| Spec | H100 80GB | MI300X 192GB | MI300X vs H100 |
|---|---|---|---|
| HBM capacity | 80 GB HBM3 | 192 GB HBM3 | 2.4x |
| Memory bandwidth | 3.35 TB/s | 5.3 TB/s | 1.58x |
| BF16 TFLOPS | 989 | ~1,300 | 1.3x |
| FP8 TFLOPS | 1,979 | ~2,600 | 1.3x |
| FP4 TFLOPS | n/a | n/a | Neither supports it (AMD adds FP4 with the MI350 series) |
| Interconnect | NVLink 900 GB/s | Infinity Fabric 896 GB/s | Comparable |
| TDP | 700W | 750W | +7% |

The headline: MI300X has 2.4x the memory (192 GB vs 80 GB) and about 1.6x the bandwidth. FLOPS are closer; MI300X leads by roughly 1.3x.

Memory is decisive for inference. A 70B model in FP16 (140 GB) fits on a single MI300X, leaving 52 GB for KV cache. On H100 you’re at TP=2 just to fit the weights, with only 20 GB left over across both cards. For 405B in FP8 (about 405 GB of weights), MI300X fits in 4 cards; H100 needs 8.
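A quick way to sanity-check those numbers is to work the weight arithmetic directly. The sketch below is ours, not a vendor formula: it counts weights only (1 GB = 1e9 bytes), assumes 405B is served in FP8, and assumes the tensor-parallel degree is rounded up to a power of two, which is a common practical constraint rather than a hardware requirement.

```python
import math

def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Weight footprint in GB: 1B parameters at 1 byte each is 1 GB."""
    return params_billion * bytes_per_param

def tp_degree(params_billion: float, bytes_per_param: float, hbm_gb: float) -> int:
    """Smallest power-of-two GPU count whose combined HBM holds the weights."""
    needed = math.ceil(weights_gb(params_billion, bytes_per_param) / hbm_gb)
    tp = 1
    while tp < needed:
        tp *= 2  # assumption: TP degree restricted to powers of two
    return tp

def headroom_gb(params_billion: float, bytes_per_param: float, hbm_gb: float) -> float:
    """HBM left for KV cache and activations once the weights are loaded."""
    tp = tp_degree(params_billion, bytes_per_param, hbm_gb)
    return tp * hbm_gb - weights_gb(params_billion, bytes_per_param)

for model, params, nbytes in [("70B FP16", 70, 2), ("405B FP8", 405, 1)]:
    for gpu, hbm in [("H100", 80), ("MI300X", 192)]:
        print(model, gpu,
              "TP =", tp_degree(params, nbytes, hbm),
              "headroom GB =", headroom_gb(params, nbytes, hbm))
```

Weights alone for 405B in FP8 would squeeze onto 3 MI300X or 6 H100; rounding up to a power of two is what produces the 4-versus-8 comparison, and it also leaves room for KV cache.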

Inference Benchmarks

Llama-3-70B (FP8/BF16 comparison, same vLLM version)

| Config | Throughput (tok/s) | TTFT P50 | Notes |
|---|---|---|---|
| 2x H100 80GB FP8, TP=2 | 6,420 | 180ms | Baseline |
| 1x MI300X 192GB BF16 | 5,890 | 150ms | Fits on single card |
| 1x MI300X 192GB FP8 | 8,730 | 130ms | ROCm FP8 support mature as of 2025 |
| 2x MI300X 192GB FP8, TP=2 | 14,200 | 110ms | Best config |

MI300X beats H100 on a per-card basis for 70B inference. Both memory capacity and single-card locality help — no TP coordination overhead.
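To make the per-card comparison explicit, the throughput figures from the 70B table can be normalized by GPU count. This is just arithmetic on the table rows; the dictionary keys are our own labels.

```python
# (GPU count, aggregate tok/s) taken from the Llama-3-70B table.
configs = {
    "2x H100 FP8, TP=2": (2, 6420),
    "1x MI300X BF16": (1, 5890),
    "1x MI300X FP8": (1, 8730),
    "2x MI300X FP8, TP=2": (2, 14200),
}

def per_card(n_gpus: int, tok_per_s: float) -> float:
    """Aggregate throughput divided evenly across the cards in the config."""
    return tok_per_s / n_gpus

baseline = per_card(*configs["2x H100 FP8, TP=2"])

for name, (n_gpus, tok_per_s) in configs.items():
    pc = per_card(n_gpus, tok_per_s)
    print(f"{name}: {pc:.0f} tok/s per card ({pc / baseline:.2f}x baseline)")
```

Per card, the H100 baseline works out to 3,210 tok/s against 8,730 for a single MI300X in FP8. The TP=2 MI300X config drops to 7,100 per card, which is the coordination overhead showing up in the numbers.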

Llama-3.1-405B

| Config | Throughput (tok/s) | Cost/M tokens |
|---|---|---|
| 8x H100 FP8, TP=8 | 3,850 | ~$0.75 |
| 4x MI300X FP8, TP=4 | 4,120 | ~$0.52 |

MI300X opens up 405B serving in half the GPU count. Cost per token drops 30%+.
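The cost column follows from throughput and hourly price. Here is a minimal sketch of that relationship, assuming cost is simply GPU-hours divided by tokens produced (no idle time, no networking or storage charges). The function names are ours.

```python
def cost_per_million_tokens(gpu_hourly_usd: float, n_gpus: int, tok_per_s: float) -> float:
    """USD per one million generated tokens at a sustained throughput."""
    tokens_per_hour = tok_per_s * 3600
    return gpu_hourly_usd * n_gpus / tokens_per_hour * 1_000_000

def implied_gpu_hourly_usd(cost_per_m_usd: float, n_gpus: int, tok_per_s: float) -> float:
    """Invert the formula: the per-GPU hourly rate a given cost/M tokens implies."""
    return cost_per_m_usd * tok_per_s * 3600 / 1_000_000 / n_gpus

# Rows from the 405B table.
h100_rate = implied_gpu_hourly_usd(0.75, 8, 3850)
mi300x_rate = implied_gpu_hourly_usd(0.52, 4, 4120)
drop = 1 - 0.52 / 0.75

print(f"implied H100 rate:   ${h100_rate:.2f}/GPU-hr")
print(f"implied MI300X rate: ${mi300x_rate:.2f}/GPU-hr")
print(f"cost per token drop: {drop:.0%}")
```

Under that simple model the table implies roughly $1.30 per H100-hour and $1.93 per MI300X-hour, and the drop in cost per token comes out at about 31%.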

Llama-3-8B

Smaller models where neither GPU is saturated:

| Config | Throughput (tok/s) |
|---|---|
| 1x H100 | 4,840 |
| 1x MI300X | 5,320 |

Similar: the two are within about 10% of each other. At this size, you’re wasting both GPUs’ memory; smaller hardware (L40S) often makes more sense.

Where MI300X Clearly Wins

1. Large-model single-card inference

70B on 1 GPU instead of 2. 405B on 4 GPUs instead of 8. This is the core value proposition and it’s real.

2. Long-context inference

A 128K-token context workload with FP8 KV cache fits on MI300X where H100 needs to shard. Simplifies deployment, improves latency.
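To see why, it helps to size the KV cache. The sketch below uses the standard per-sequence estimate (keys plus values, per layer, per KV head) and assumes a Llama-3-70B-style shape: 80 layers, 8 KV heads with grouped-query attention, head dimension 128. Those shape values are our assumption for illustration; check your model’s config before relying on them.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                n_tokens: int, bytes_per_elem: float) -> float:
    """KV cache size in GB (1e9 bytes) for one sequence of n_tokens."""
    # Factor of 2 covers keys and values.
    total_bytes = 2 * n_layers * n_kv_heads * head_dim * n_tokens * bytes_per_elem
    return total_bytes / 1e9

# Assumed Llama-3-70B-style shape, 128K-token context, FP8 cache (1 byte per element).
kv = kv_cache_gb(n_layers=80, n_kv_heads=8, head_dim=128,
                 n_tokens=128 * 1024, bytes_per_elem=1)
weights_fp8_gb = 70  # 70B parameters at 1 byte each

print(f"KV cache per 128K sequence: {kv:.1f} GB")
print(f"weights + one sequence:     {weights_fp8_gb + kv:.1f} GB")
print("fits on one H100 (80 GB):   ", weights_fp8_gb + kv <= 80)
print("fits on one MI300X (192 GB):", weights_fp8_gb + kv <= 192)
```

Under these assumptions a single 128K sequence needs about 21.5 GB of cache, so FP8 weights plus one sequence already exceed 80 GB while leaving roughly 100 GB free on an MI300X for additional concurrent sequences.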

3. MoE models

Mixture-of-experts models (Mixtral 8x22B, DeepSeek V3, Grok architecture) have variable memory pressure. MI300X’s headroom handles it gracefully.

4. Cost per token at scale

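For hosted inference or very large fleets, what matters is price per token, not price per card. MI300X reserved pricing per card runs somewhat above H100 (see Cost Dynamics below), but each card does the work of roughly two H100s on large models, so cost per token is often 25–35% lower.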

5. ROCm software support

ROCm 6+ has closed the gap substantially. vLLM, PyTorch, HuggingFace, and TRL all run on ROCm out of the box in 2026. Performance tuning isn’t NVIDIA-level yet but it’s close for mainline workloads.

Where NVIDIA Still Leads

1. Training at scale

Multi-node training on MI300X is possible but less mature. The NVLink + NVSwitch + CUDA + Megatron-LM stack still beats the ROCm equivalent for frontier-scale training. We wouldn’t pick MI300X for a 405B-from-scratch training run today.

2. FP4 precision (for now)

NVIDIA’s B200 has FP4; MI300X doesn’t, and neither does MI325X. On AMD’s side, FP4 arrives with the MI350 series. For inference workloads that can use FP4, B200 beats MI300X on throughput per dollar.

3. Niche ecosystem tools

Some specialized libraries (specific kernels, research codebases) only target CUDA. If your team uses one, porting is real work.

4. Spot supply and geographic breadth

NVIDIA GPUs are everywhere. MI300X availability is concentrated in fewer regions. Multi-region fleets still need NVIDIA for coverage.

5. Operational tooling

DCGM, the Grafana dashboards, and the Kubernetes device plugin are all more polished on the NVIDIA side. ROCm equivalents (rocm-smi, rocm-exporter) work but are less mature.

Software Stack Reality Check

Running MI300X in production as of early 2026:

What works out of the box

- vLLM — full MI300X support, FP8, AWQ, prefix caching. Performance is within 5–10% of H100 per-FLOP.

- PyTorch 2.4+ — ROCm support is stable. Most HuggingFace models run without code changes.

- TRL / Axolotl / Unsloth — LoRA and QLoRA fine-tuning work on MI300X.

- Kubernetes + AMD GPU Operator — device plugin and monitoring work.

- Observability — OTel and Langfuse are hardware-agnostic; work the same.
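On the PyTorch point: ROCm builds expose the GPU through the same `torch.cuda` API that CUDA builds use, which is why most model code runs unchanged. A minimal sketch for checking which backend an install targets follows; the helper name and return labels are ours.

```python
import importlib.util

def accelerator_backend() -> str:
    """Report which GPU backend the local PyTorch build targets, if any."""
    if importlib.util.find_spec("torch") is None:
        return "torch-not-installed"
    import torch

    if not torch.cuda.is_available():
        return "cpu"
    # ROCm builds set torch.version.hip to a version string; CUDA builds leave it None.
    if getattr(torch.version, "hip", None):
        return "rocm"
    return "cuda"

print(accelerator_backend())
```

A check like this is useful in startup logs for a mixed fleet, where the same container entrypoint may land on either vendor’s nodes.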

What needs extra effort

- FlashAttention variants — ROCm ports are available; sometimes a version behind NVIDIA’s latest.

- Structured output libraries (outlines, guided decoding) — most work; test specific configs.

- TensorRT-LLM alternative: AMD has MIGraphX and Dynamo tools, but nothing quite equivalent in terms of performance ceiling.

- Multi-node training — works, but fewer reference setups than NVIDIA’s equivalent.

What doesn’t work yet

- FP4 precision: not available on MI300X or MI325X; on AMD it arrives with the MI350 series

- Some NVIDIA-specific research code — needs porting

- Triton server as the front end — alternatives exist (vLLM has OpenAI-compatible API built in); not usually a blocker

Integrating MI300X Into A Mixed Fleet

Practical pattern we deploy for clients moving to mixed fleets:

Step 1: Carve off a workload

Pick the workload with the highest MI300X advantage — usually large-model inference. Set up a dedicated MI300X node pool in your K8s cluster.

Step 2: Run production traffic through a gateway

Your LLM gateway (LiteLLM, Portkey) routes to the MI300X fleet alongside existing H100 backends. Start at 5–10% traffic. Monitor quality and latency parity.
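The traffic split itself is just weighted selection. The sketch below shows the idea in plain Python; it is not LiteLLM or Portkey configuration, and the pool names and the 5% weight are hypothetical values for illustration.

```python
import random

def pick_backend(weights: dict[str, float], rng: random.Random) -> str:
    """Choose a backend pool with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]

# Hypothetical pools: start the MI300X fleet at 5% of traffic.
weights = {"h100-pool": 0.95, "mi300x-pool": 0.05}

rng = random.Random(0)  # seeded so the simulation is repeatable
counts = {name: 0 for name in weights}
for _ in range(10_000):
    counts[pick_backend(weights, rng)] += 1

for name, n in counts.items():
    print(f"{name}: {n / 10_000:.1%} of requests")
```

In a real gateway the weights would live in its routing config, and you would usually pin a given conversation to one pool so that prefix caches stay warm.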

Step 3: Operate the divergence

Accept that MI300X and H100 replicas have slightly different performance characteristics. Your autoscaling, monitoring, and cost attribution need labels for each.
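In practice that means every request record carries an accelerator label, and dashboards group by it. A minimal sketch of that grouping, with hypothetical field names and made-up sample records:

```python
from collections import defaultdict
from statistics import median

def summarize_by_accelerator(records):
    """Group request records by accelerator label and compute per-pool stats."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec["accelerator"]].append(rec)

    summary = {}
    for label, rows in groups.items():
        tokens = sum(r["tokens"] for r in rows)
        # Cost attributed to a request: GPU-seconds used times the pool's hourly rate.
        cost_usd = sum(r["gpu_seconds"] / 3600 * r["gpu_hourly_usd"] for r in rows)
        summary[label] = {
            "requests": len(rows),
            "ttft_p50_ms": median(r["ttft_ms"] for r in rows),
            "usd_per_m_tokens": cost_usd / tokens * 1_000_000,
        }
    return summary

# Hypothetical records, for illustration only.
records = [
    {"accelerator": "mi300x", "ttft_ms": 130, "tokens": 1000, "gpu_seconds": 1.0, "gpu_hourly_usd": 2.30},
    {"accelerator": "mi300x", "ttft_ms": 150, "tokens": 1000, "gpu_seconds": 1.0, "gpu_hourly_usd": 2.30},
    {"accelerator": "h100", "ttft_ms": 180, "tokens": 1000, "gpu_seconds": 2.0, "gpu_hourly_usd": 1.80},
]
summary = summarize_by_accelerator(records)
for label, stats in summary.items():
    print(label, stats)
```

The same label should drive autoscaling targets, since a queue depth that is healthy for one pool can mean saturation on the other.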

Step 4: Scale the workload

Shift more traffic to MI300X as your confidence grows. Typical steady state: large-model inference on MI300X, small-model and training on NVIDIA.

Most clients end up with ~30–50% of their inference on MI300X after a year of evaluation.
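One way to make the ramp in Step 4 mechanical is to gate each increase on latency parity. The policy below is a hypothetical sketch, not a rule we are prescribing: the 10% tolerance and 5-point step are arbitrary defaults, and the 50% cap simply mirrors the upper end of the range above.

```python
def next_mi300x_share(current: float, mi300x_ttft_ms: float, h100_ttft_ms: float,
                      *, tolerance: float = 0.10, step: float = 0.05,
                      cap: float = 0.50) -> float:
    """Raise the MI300X traffic share by one step if P50 TTFT is within
    tolerance of the H100 pool; otherwise hold at the current share."""
    if mi300x_ttft_ms <= h100_ttft_ms * (1 + tolerance):
        return min(cap, round(current + step, 4))
    return current

share = 0.05
for week, (mi300x_ms, h100_ms) in enumerate([(130, 180), (140, 180), (250, 180)], start=1):
    share = next_mi300x_share(share, mi300x_ms, h100_ms)
    print(f"week {week}: MI300X share = {share:.0%}")
```

A production version would also compare output quality and error rates, and would likely step back down rather than merely hold when parity breaks.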

Cost Dynamics (Early 2026)

- H100 80GB reserved: $1.40–$2.20/hr

- MI300X 192GB reserved: $1.80–$2.80/hr

- MI300X 192GB on-demand: $3.50–$5.50/hr

Per-card, MI300X is slightly more expensive. Per-token of 70B inference, MI300X is 25–35% cheaper. For 405B, the gap is larger.
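The two statements are compatible because per-token cost depends on the ratio of price to per-card throughput. A rough check, assuming the midpoints of the reserved price ranges above and the 70B benchmark rows for 2x H100 FP8 and 1x MI300X BF16 (both assumptions are ours; your contract prices and configs will differ):

```python
def per_token_savings(price_ratio: float, per_card_throughput_ratio: float) -> float:
    """Fractional cost-per-token saving of card B over card A, given B/A ratios
    of hourly price and of per-card throughput."""
    return 1 - price_ratio / per_card_throughput_ratio

# Midpoints of the reserved ranges listed above (assumed representative).
h100_hourly = (1.40 + 2.20) / 2
mi300x_hourly = (1.80 + 2.80) / 2
price_ratio = mi300x_hourly / h100_hourly

# Per-card throughput from the 70B table: 2x H100 FP8 vs 1x MI300X BF16.
throughput_ratio = (5890 / 1) / (6420 / 2)

savings = per_token_savings(price_ratio, throughput_ratio)
print(f"price ratio (MI300X/H100):      {price_ratio:.2f}x")
print(f"per-card throughput ratio:      {throughput_ratio:.2f}x")
print(f"cost-per-token saving (MI300X): {savings:.0%}")
```

The throughput ratio doubles as a break-even point: MI300X stays cheaper per token for as long as its hourly price is below that multiple of the H100 price. Using the FP8 MI300X row instead would widen the gap.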

Neoclouds offering MI300X: TensorWave, Hot Aisle, RunPod, Lambda (early 2026), CoreWeave (announced). Availability improves every quarter.

What’s Coming Next

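MI325X (shipping late 2025 / early 2026): 256 GB HBM3e, 6 TB/s bandwidth. It is the same CDNA 3 generation as MI300X, so it does not add FP4. The extra memory should further differentiate against H100 and keeps it competitive with B200 for inference that doesn’t depend on FP4.

MI350 series (2026–2027): New architecture, and the generation where AMD adds FP4 support. AMD’s most aggressive performance positioning.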

ROCm 7 maturity: Closing remaining software gaps. Expected 2026.

AMD’s roadmap is credible. NVIDIA still leads, but the conversation has moved from “AMD is interesting” to “AMD is a real option for specific workloads.” For a mixed-fleet strategy, AMD is now a must-evaluate.

The Decision Framework

Go MI300X if:

- Large-model inference (70B+) is your primary workload

- Cost per token matters more than peak performance

- You run Kubernetes and have a team capable of managing a heterogeneous fleet

- Long-context workloads are in your future

- You want pricing leverage against NVIDIA procurement

Stay NVIDIA if:

- Frontier training is your primary workload

- You need FP4 precision today (on AMD, that means waiting for the MI350 series)

- Your team has no ROCm experience and no time to learn

- You need the broadest geographic availability

- Small-model inference dominates (neither MI300X’s nor H100’s full capacity needed)

Further Reading

- NVIDIA B200 vs H100: Should You Upgrade?

- NVIDIA H100 vs A100: Which GPU Should You Deploy?

- Multi-Cloud GPU Strategy: Avoiding Lock-in

Evaluating MI300X for your inference fleet? Let’s talk — we’ve run benchmarks and integrations for both large inference shops and training teams.

Related Posts

NVIDIA B200 vs H100: Should You Upgrade?

Blackwell's B200 is shipping at scale. Benchmarks, cost deltas, FP4 economics, and when it's worth the capex vs sticking with your H100 fleet for another year.

NVIDIA H100 vs A100: Which GPU Should You Deploy?

A practical comparison of NVIDIA's H100 and A100 for LLM training and inference — memory, FLOPS, interconnect, price per token, and the cases where the older A100 still wins.

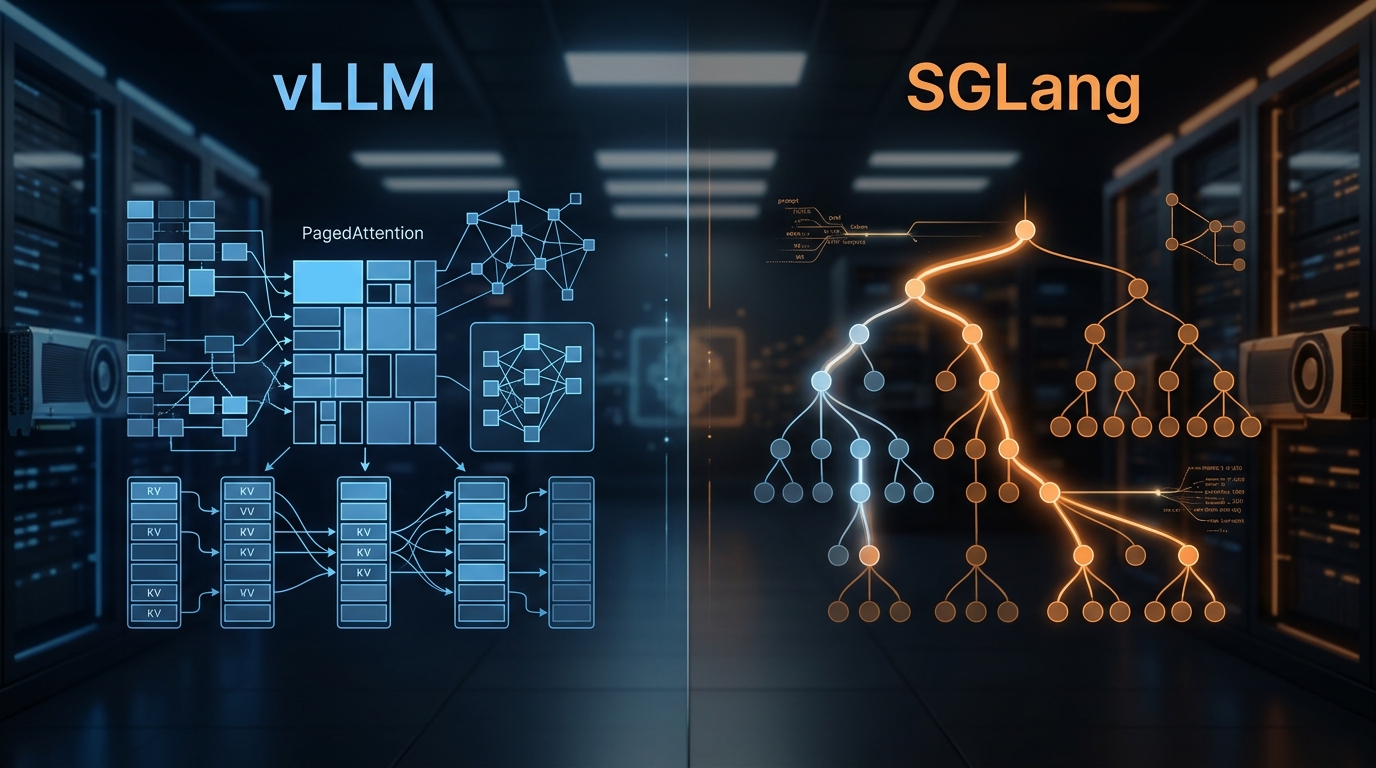

vLLM vs SGLang: Inference Engine Comparison 2026

We've deployed both at scale. Here's what the benchmarks actually show, where RadixAttention beats PagedAttention, and which engine to pick for your workload.