Semantic Kernel vs LangChain: Enterprise Framework Comparison

Semantic Kernel vs LangChain for enterprise AI agents — architecture, integration patterns, .NET vs Python tradeoffs, and when to pick each.

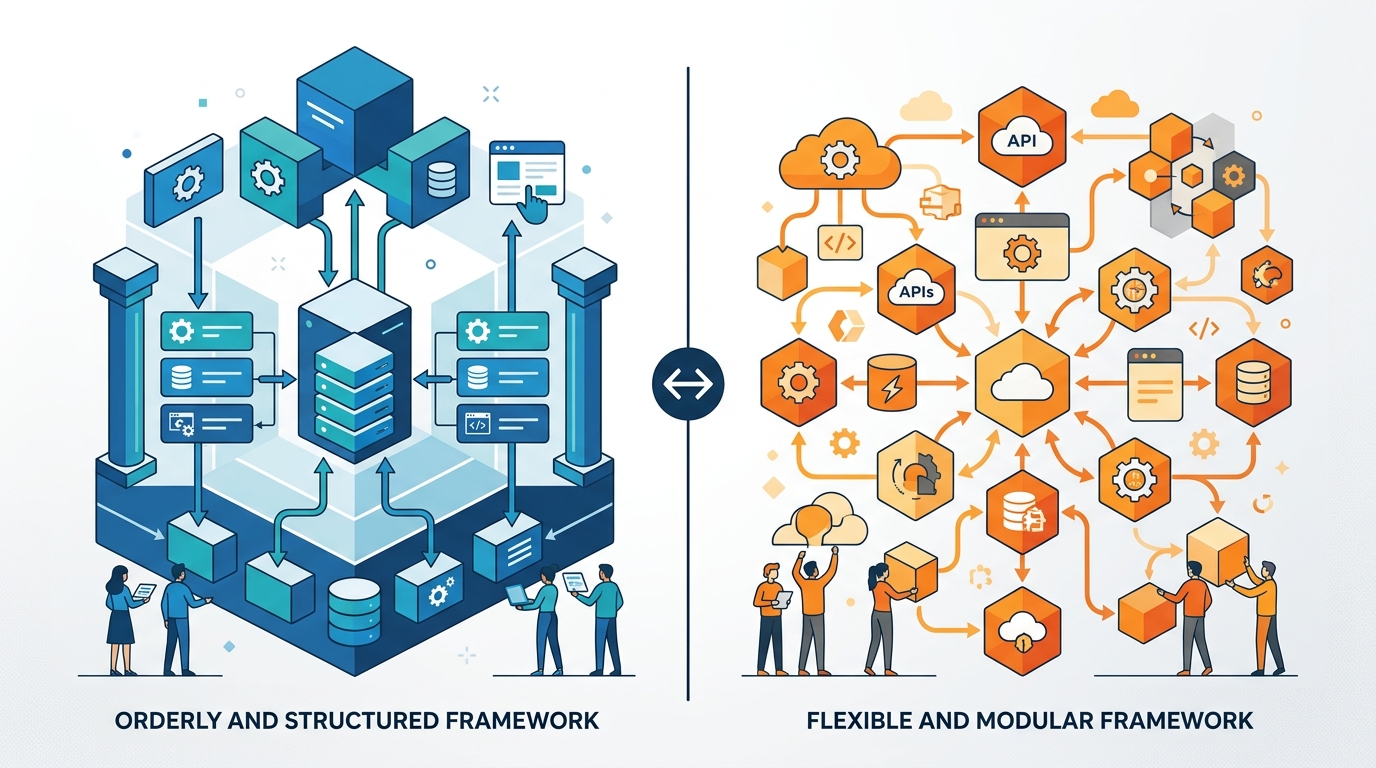

Two frameworks lead the conversation when enterprises build AI agents: LangChain, the Python-first framework that pioneered LLM orchestration, and Microsoft’s Semantic Kernel, designed from the ground up for enterprise integration. Both enable sophisticated agent development, but they target different developer ecosystems and organizational needs. This comparison breaks down their architectures, strengths, and ideal use cases.

Origins and Philosophy

LangChain

LangChain emerged in late 2022 as the first major framework for building LLM applications. It introduced the concept of “chains” connecting prompts, models, tools, and memory. The framework grew organically from community needs, resulting in broad capability coverage and extensive third-party integrations.

Core philosophy: LangChain treats LLM applications as compositions of modular components. Flexibility comes first—the framework supports almost any architecture through its extensive abstraction layer.

Semantic Kernel

Microsoft released Semantic Kernel in 2023 as an open-source SDK for integrating AI into applications. Born from Microsoft’s internal AI development experience, it reflects enterprise software patterns: strong typing, dependency injection, and native integration with Azure services.

Core philosophy: Semantic Kernel treats AI as a capability that integrates into existing software architecture. It prioritizes enterprise patterns, type safety, and seamless Azure integration.

Architecture Comparison

LangChain’s Component Model

LangChain organizes functionality around these core concepts:

- Chains: Sequential or parallel compositions of operations

- Agents: Autonomous decision-makers that select tools

- Tools: Functions the agent can invoke

- Memory: Conversation and context persistence

    from langchain_openai import ChatOpenAI
    from langchain.agents import create_tool_calling_agent, AgentExecutor
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.tools import tool

    @tool
    def search_database(query: str) -> str:
        """Search the internal database for information."""
        return f"Results for: {query}"

    llm = ChatOpenAI(model="gpt-4o")

    prompt = ChatPromptTemplate.from_messages([
        ("system", "You are a helpful assistant."),
        ("human", "{input}"),
        ("placeholder", "{agent_scratchpad}")
    ])

    agent = create_tool_calling_agent(llm, [search_database], prompt)
    executor = AgentExecutor(agent=agent, tools=[search_database])

    result = executor.invoke({"input": "Find customer records"})

LangChain’s strength is its uniformity across diverse use cases. Whether building chatbots, RAG systems, or multi-agent orchestrations, the abstractions remain consistent.
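To make the agent abstraction concrete, here is a minimal sketch of the loop an executor runs conceptually: ask the model, run the tool it picks, feed the observation back, and repeat until the model answers. It uses only the standard library. `fake_model` and `run_agent` are illustrative names, not LangChain APIs, and the real `AgentExecutor` handles parsing, errors, and callbacks that this omits.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


def search_database(query: str) -> str:
    """Search the internal database for information."""
    return f"Results for: {query}"


def fake_model(user_input: str, scratchpad: List[Tuple[str, str]]) -> Dict[str, str]:
    """Stand-in for an LLM: request one tool call, then answer from its result."""
    if not scratchpad:
        return {"type": "tool_call", "tool": "search_database", "input": user_input}
    _, observation = scratchpad[-1]
    return {"type": "final", "output": f"Answer based on: {observation}"}


def run_agent(user_input: str, tools: Dict[str, Tool], max_steps: int = 5) -> str:
    """Loop until the model returns a final answer or the step budget runs out."""
    scratchpad: List[Tuple[str, str]] = []  # (tool name, observation) pairs
    for _ in range(max_steps):
        decision = fake_model(user_input, scratchpad)
        if decision["type"] == "final":
            return decision["output"]
        tool = tools[decision["tool"]]
        scratchpad.append((decision["tool"], tool(decision["input"])))
    raise RuntimeError("agent did not finish within max_steps")


answer = run_agent("Find customer records", {"search_database": search_database})
```

The scratchpad plays the role of the `{agent_scratchpad}` placeholder in the prompt above: it is how earlier tool results reach the model on the next turn.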

Semantic Kernel’s Plugin Model

Semantic Kernel organizes around enterprise software patterns:

- Kernel: The central orchestrator (similar to dependency injection containers)

- Plugins: Collections of related functions

- Functions: Semantic (prompt-based) or native (code-based) operations

- Planners: AI-powered orchestration of function sequences

    using Microsoft.SemanticKernel;
    using Microsoft.SemanticKernel.Connectors.OpenAI;

    var builder = Kernel.CreateBuilder();
    builder.AddAzureOpenAIChatCompletion(
        deploymentName: "gpt-4o",
        endpoint: Environment.GetEnvironmentVariable("AZURE_OPENAI_ENDPOINT")!,
        apiKey: Environment.GetEnvironmentVariable("AZURE_OPENAI_API_KEY")!
    );
    var kernel = builder.Build();

    // Register plugin with native functions
    // (DatabasePlugin is assumed to be a class with [KernelFunction] methods defined elsewhere)
    kernel.ImportPluginFromType<DatabasePlugin>();

    // Execute with automatic function calling
    var settings = new OpenAIPromptExecutionSettings
    {
        ToolCallBehavior = ToolCallBehavior.AutoInvokeKernelFunctions
    };

    var result = await kernel.InvokePromptAsync(
        "Find customer records for Contoso",
        new(settings)
    );

Semantic Kernel’s Python SDK mirrors these patterns:

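    import os

    from semantic_kernel import Kernel
    from semantic_kernel.connectors.ai.open_ai import AzureChatCompletion
    from semantic_kernel.functions import kernel_function

    kernel = Kernel()
    kernel.add_service(AzureChatCompletion(
        deployment_name="gpt-4o",
        endpoint=os.environ["AZURE_OPENAI_ENDPOINT"],
        api_key=os.environ["AZURE_OPENAI_API_KEY"],
    ))

    class DatabasePlugin:
        # Native functions are methods marked with the kernel_function decorator
        @kernel_function(name="search_database",
                         description="Search the internal database for information.")
        def search_database(self, query: str) -> str:
            return f"Results for: {query}"

    # Register the plugin so the model can call its functions
    kernel.add_plugin(DatabasePlugin(), plugin_name="database")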
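The kernel-and-plugin idea can be illustrated without the SDK. The sketch below is a toy registry, assuming a simplified model in which a plugin is any object and its public methods become callable functions under a qualified name. `MiniKernel` and its methods are hypothetical and are not Semantic Kernel's API; the real kernel also carries services, filters, and function metadata.

```python
from typing import Callable, Dict, List


class MiniKernel:
    """Toy registry loosely modelled on the kernel/plugin idea."""

    def __init__(self) -> None:
        self._functions: Dict[str, Callable[..., str]] = {}

    def add_plugin(self, plugin: object, plugin_name: str) -> None:
        # Register every public method as "<plugin_name>.<method_name>".
        for attr in dir(plugin):
            member = getattr(plugin, attr)
            if callable(member) and not attr.startswith("_"):
                self._functions[f"{plugin_name}.{attr}"] = member

    def function_names(self) -> List[str]:
        return sorted(self._functions)

    def invoke(self, qualified_name: str, **kwargs: str) -> str:
        return self._functions[qualified_name](**kwargs)


class DatabasePlugin:
    """A plugin groups related functions behind one name."""

    def search_database(self, query: str) -> str:
        return f"Results for: {query}"

    def count_records(self, table: str) -> str:
        return f"Counted rows in {table}"


toy_kernel = MiniKernel()
toy_kernel.add_plugin(DatabasePlugin(), plugin_name="database")
names = toy_kernel.function_names()
result = toy_kernel.invoke("database.search_database", query="Contoso")
```

Grouping functions by plugin is what lets a kernel advertise a coherent set of tools to the model while the host application keeps ordinary classes.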

Feature Comparison

| Feature | LangChain | Semantic Kernel |
|---|---|---|
| Primary languages | Python, JavaScript | C#, Python, Java |
| Enterprise focus | Moderate | High |
| Azure integration | Via connectors | Native, first-class |
| Type safety | Optional | Strong (especially C#) |
| Dependency injection | Not built-in | Native support |
| Learning curve | Moderate | Moderate to steep |
| Community size | Very large | Growing |
| Integration count | 500+ | 100+ |
| Agent frameworks | AgentExecutor, LangGraph | Planners, Agents (preview) |
| RAG support | Extensive | Good |

Enterprise Integration Patterns

Semantic Kernel shines in enterprise environments:

    // Native dependency injection in ASP.NET Core
    var kernelBuilder = services.AddKernel();
    kernelBuilder.AddAzureOpenAIChatCompletion(
        config["DeploymentName"]!, config["Endpoint"]!, config["ApiKey"]!);
    // Plugins are registered through the builder's Plugins collection
    kernelBuilder.Plugins.AddFromType<CustomerPlugin>();
    kernelBuilder.Plugins.AddFromType<OrderPlugin>();

    // Use in controllers like any other service
    public class AgentController : ControllerBase
    {
        private readonly Kernel _kernel;

        public AgentController(Kernel kernel) => _kernel = kernel;

        [HttpPost]
        public async Task<IActionResult> Query(string prompt)
        {
            var result = await _kernel.InvokePromptAsync(prompt);
            // Return the completion text rather than the FunctionResult wrapper
            return Ok(result.GetValue<string>());
        }
    }

LangChain requires more manual integration work but offers greater flexibility:

    from fastapi import Depends, FastAPI

    app = FastAPI()

    def get_agent():
        # Build agent with configuration.
        # create_configured_agent() is a placeholder for your own factory,
        # for example one that returns the AgentExecutor built earlier.
        return create_configured_agent()

    @app.post("/query")
    async def query(prompt: str, agent=Depends(get_agent)):
        # ainvoke is the async counterpart of invoke on LangChain runnables
        result = await agent.ainvoke({"input": prompt})
        return {"result": result}
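Part of that manual work is deciding the agent's lifetime, which a .NET container handles through service registration. FastAPI calls a `Depends` provider on every request, so an uncached provider would rebuild the agent each time. A minimal sketch of one way to get singleton behavior with the standard library follows; `ConfiguredAgent` is a hypothetical stand-in for an expensive-to-build agent, not a LangChain class.

```python
from functools import lru_cache


class ConfiguredAgent:
    """Hypothetical stand-in for an agent that is costly to construct."""

    instances_built = 0

    def __init__(self) -> None:
        ConfiguredAgent.instances_built += 1

    def invoke(self, payload: dict) -> dict:
        return {"output": f"Handled: {payload['input']}"}


@lru_cache(maxsize=1)
def get_agent() -> ConfiguredAgent:
    # The first call builds the agent; later calls return the cached instance.
    return ConfiguredAgent()


first = get_agent()
second = get_agent()
reply = first.invoke({"input": "Find customer records"})
```

A cached provider only suits agents that hold no per-user state; anything carrying conversation memory should be built per session instead.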

Agent Capabilities

LangChain offers more mature agent options:

- AgentExecutor: Classic ReAct-style agent loop

- LangGraph: State machine-based complex workflows

- Multi-agent systems: Hierarchical and peer-to-peer patterns

Semantic Kernel provides:

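- Planners: Handlebars, Stepwise, and Function Calling planners (Microsoft has since deprecated the Handlebars and Stepwise planners in favor of automatic function calling)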

- Agent Framework (preview): New abstraction for multi-turn agents

- Process Framework (preview): Workflow orchestration with durable execution

LangGraph currently leads for complex agent architectures, but Semantic Kernel’s agent framework is maturing rapidly.
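The state-machine style behind graph-based agent workflows can be sketched in plain Python: nodes transform a shared state, and edges decide which node runs next, including loops. This is an illustration of the pattern only. `run_graph`, `NODES`, and `EDGES` are hypothetical names and do not reflect LangGraph's actual API.

```python
from typing import Any, Callable, Dict, Optional

State = Dict[str, Any]


def plan(state: State) -> State:
    return {**state, "plan": f"look up {state['input']}"}


def act(state: State) -> State:
    # Pretend the action needs two attempts before it succeeds.
    attempts = state.get("attempts", 0) + 1
    return {**state, "attempts": attempts, "done": attempts >= 2}


def summarize(state: State) -> State:
    text = f"{state['plan']} finished after {state['attempts']} attempts"
    return {**state, "output": text}


NODES: Dict[str, Callable[[State], State]] = {
    "plan": plan,
    "act": act,
    "summarize": summarize,
}

# Each edge inspects the state and names the next node (None means stop).
EDGES: Dict[str, Callable[[State], Optional[str]]] = {
    "plan": lambda s: "act",
    "act": lambda s: "summarize" if s["done"] else "act",  # conditional loop
    "summarize": lambda s: None,
}


def run_graph(start: str, state: State, max_steps: int = 10) -> State:
    current = start
    for _ in range(max_steps):
        state = NODES[current](state)
        next_node = EDGES[current](state)
        if next_node is None:
            return state
        current = next_node
    raise RuntimeError("graph did not terminate within max_steps")


final_state = run_graph("plan", {"input": "Contoso orders"})
```

The conditional edge out of `act` is the part a linear chain cannot express: the workflow retries until the state says it is done.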

Observability and Debugging

Semantic Kernel integrates with Azure Application Insights and OpenTelemetry:

    builder.Services.AddOpenTelemetry()
        .WithTracing(tracing => tracing
            .AddSource("Microsoft.SemanticKernel*")
            .AddAzureMonitorTraceExporter());

LangChain offers LangSmith for tracing and evaluation:

    import os

    os.environ["LANGCHAIN_TRACING_V2"] = "true"
    os.environ["LANGCHAIN_API_KEY"] = "your-api-key"

    # Automatic tracing of all chain executions

Both approaches work well; the choice often depends on your existing observability stack.
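Whichever backend you use, the data collected has the same shape: named spans with a parent and a duration. The sketch below records that shape with the standard library alone, to show what a trace of one agent call contains. `span` and `SPANS` are illustrative names, not part of OpenTelemetry or LangSmith.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator, List, Optional

SPANS: List[Dict[str, object]] = []  # finished spans, in completion order
_stack: List[str] = []               # names of spans currently open


@contextmanager
def span(name: str) -> Iterator[None]:
    """Record a named span along with its parent and elapsed time."""
    parent: Optional[str] = _stack[-1] if _stack else None
    _stack.append(name)
    start = time.perf_counter()
    try:
        yield
    finally:
        _stack.pop()
        SPANS.append({
            "name": name,
            "parent": parent,
            "seconds": time.perf_counter() - start,
        })


with span("agent.invoke"):
    with span("llm.call"):
        pass  # a model request would happen here
    with span("tool.search_database"):
        pass  # a tool call would happen here

recorded = [(s["name"], s["parent"]) for s in SPANS]
```

Child spans finish before their parent, so the parent appears last in `SPANS`; real tracers reassemble the tree from the parent links.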

When to Choose Semantic Kernel

Semantic Kernel is the better choice when:

- You’re a .NET shop: C# is your primary language and team expertise

- Azure is your cloud: Deep Azure OpenAI and Azure AI Search integration

- Enterprise patterns matter: Dependency injection, strong typing, and familiar software architecture

- Compliance is critical: Microsoft’s enterprise support and security certifications

- Java support is needed: Semantic Kernel offers an official Java SDK

Ideal for: Enterprise .NET applications, Azure-native deployments, regulated industries, organizations with Microsoft Enterprise Agreements.

When to Choose LangChain

LangChain is the better choice when:

- Python is primary: Your team lives in the Python ecosystem

- Integration variety: You need connectors to many different services

- Complex agent patterns: Multi-agent systems and sophisticated orchestration

- Community resources: Extensive tutorials, examples, and third-party tools

- Rapid prototyping: Get to a working prototype quickly

Ideal for: Data science teams, startups, Python-first organizations, research and experimentation, multi-cloud deployments.

Making Your Decision

Consider these questions:

- What’s your primary programming language?
  - C# or Java → Semantic Kernel
  - Python → Either works; LangChain has a larger ecosystem

- What’s your cloud strategy?
  - Azure-first → Semantic Kernel
  - Multi-cloud or AWS/GCP → LangChain

- How important is enterprise architecture?
  - DI, strong typing, familiar patterns → Semantic Kernel
  - Flexibility over structure → LangChain

- What’s your agent complexity?
  - Standard patterns → Either works well
  - Multi-agent orchestration → LangChain (LangGraph)
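The questions above can be turned into a rough tally. The sketch below gives each answer one vote, which is an assumption made for illustration; in practice a single factor such as an existing .NET codebase may outweigh the rest. The function name and parameters are hypothetical.

```python
from collections import Counter


def recommend(language: str, cloud: str,
              prefers_structure: bool, multi_agent: bool) -> str:
    """Tally one vote per question and return the leading framework."""
    votes: Counter = Counter()

    # Language: C# or Java points to Semantic Kernel; Python works with either.
    if language.lower() in {"c#", "java"}:
        votes["Semantic Kernel"] += 1

    # Cloud: Azure-first favors Semantic Kernel, anything else LangChain.
    if cloud.lower() == "azure":
        votes["Semantic Kernel"] += 1
    else:
        votes["LangChain"] += 1

    # Architecture: DI and strong typing versus flexibility.
    votes["Semantic Kernel" if prefers_structure else "LangChain"] += 1

    # Agent complexity: only multi-agent orchestration tips the balance.
    if multi_agent:
        votes["LangChain"] += 1

    sk, lc = votes["Semantic Kernel"], votes["LangChain"]
    if sk == lc:
        return "Either"
    return "Semantic Kernel" if sk > lc else "LangChain"


dotnet_team = recommend("C#", "azure", prefers_structure=True, multi_agent=False)
python_team = recommend("Python", "aws", prefers_structure=False, multi_agent=True)
mixed_team = recommend("Python", "azure", prefers_structure=False, multi_agent=False)
```

A tie is a useful result in itself: it suggests the decision should rest on team skills rather than on framework features.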

The Convergence Path

Both frameworks are converging on similar capabilities. Semantic Kernel’s Python SDK increasingly mirrors LangChain patterns. LangChain is adding more enterprise features. A reasonable strategy is to:

- Match your language: Use Semantic Kernel for .NET, LangChain for Python

- Consider migration paths: Both support similar abstractions, making future migration feasible

- Evaluate enterprise needs: Compliance requirements may favor Semantic Kernel’s Microsoft backing

Looking Ahead

Microsoft continues investing heavily in Semantic Kernel, with the Agent and Process frameworks adding sophisticated orchestration. LangChain’s ecosystem remains the largest, and LangGraph is becoming the standard for complex agent workflows.

For enterprises deep in the Microsoft ecosystem, Semantic Kernel offers the path of least resistance. For Python-first teams valuing flexibility and community resources, LangChain remains the natural choice. Both are production-ready foundations for AI agent development—your decision should align with your team’s existing skills and infrastructure investments.

For hands-on tutorials, see our guide on building your first AI agent with LangGraph and our custom tools tutorial for LangChain agents.
