Agent Eval Tutorial 2026: DeepEval + LangSmith Guide

Build an evaluation pipeline for AI agents with DeepEval and LangSmith, from setup to CI/CD.

If your agent works on Tuesday but breaks on Thursday after a prompt tweak, you don’t have an agent problem — you have an evaluation problem. Non-deterministic systems demand deterministic test harnesses. Yet most teams still treat eval as an afterthought, manually checking outputs before a release instead of running automated guardrails on every commit.

LangChain’s latest State of Agent Engineering report found that only 27% of teams run evals before every deployment. The gap between prototyping and production-quality agents isn’t framework choice — it’s evaluation discipline.

In this tutorial we’ll build a complete evaluation pipeline using DeepEval for pytest-native scoring and LangSmith for dataset management and tracing. By the end you’ll have evals running as CI checks, not post-mortems.

Why Two Tools?

DeepEval and LangSmith solve overlapping but distinct problems:

- DeepEval is an open-source LLM evaluation framework with pytest-style assertions and 50+ pre-built metrics.

- LangSmith provides dataset management, tracing, and observability. The dataset layer is especially valuable — synthetic test case generation, versioned golden sets, and collaborative annotation.

Together they cover the full eval lifecycle: LangSmith for dataset creation and observability, DeepEval for automated scoring and CI gating.

There’s also LangSmith’s native evaluation layer, but in practice we’ve found DeepEval’s metric library broader for agent-specific work (tool correctness, plan adherence, step efficiency). Use what covers your failure modes.

Step 1: Set Up the Project

Start with a clean virtual environment and install dependencies:

mkdir agent-evals-2026 && cd agent-evals-2026

python -m venv .venv && source .venv/bin/activate

pip install deepeval langsmith langchain langchain-openai langgraph

Set your API keys as environment variables:

export OPENAI_API_KEY="sk-..."

export LANGCHAIN_API_KEY="lsv2_..."

export LANGCHAIN_TRACING_V2="true"

export LANGCHAIN_PROJECT="agent-evals-demo"

export CONFIDENT_API_KEY="confident_api_..."

The LANGCHAIN_TRACING_V2 flag ensures every agent invocation produces a trace you can inspect in the LangSmith UI.
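A missing key usually shows up as a confusing failure deep inside a test run, so it can help to fail fast. The sketch below checks the variables exported above; the helper name missing_env_vars is hypothetical, and CONFIDENT_API_KEY is left out on the assumption that it is only needed when you push results to Confident AI.

```python
import os

# Variable names are the ones exported above; the helper itself is illustrative.
REQUIRED_VARS = (
    "OPENAI_API_KEY",
    "LANGCHAIN_API_KEY",
    "LANGCHAIN_TRACING_V2",
    "LANGCHAIN_PROJECT",
)


def missing_env_vars(env=None, required=REQUIRED_VARS):
    """Return the required variables that are unset or empty."""
    env = os.environ if env is None else env
    return [name for name in required if not env.get(name)]


# Example with an explicit mapping instead of the real environment.
print(missing_env_vars({"OPENAI_API_KEY": "sk-..."}))
```

Calling missing_env_vars() with no arguments inspects the real environment, which makes it easy to run as the first step of a CI job.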

Step 2: Build a Simple Agent to Evaluate

We need an agent to test. This one uses create_react_agent with a weather tool and a calculator tool:

# agent.py

from langchain_core.tools import tool
from langchain_openai import ChatOpenAI
from langgraph.prebuilt import create_react_agent


@tool
def get_weather(city: str) -> str:
    """Get the current weather for a given city."""
    # Static lookup table so the demo needs no external weather API.
    weather_data = {
        "san francisco": "Sunny, 72°F",
        "london": "Cloudy, 55°F",
        "tokyo": "Clear, 63°F",
        "new york": "Rainy, 48°F",
    }
    return weather_data.get(city.lower(), "Weather data unavailable")


@tool
def calculator(expression: str) -> str:
    """Evaluate a mathematical expression safely."""
    # Character whitelist before eval(); acceptable for a demo, not for production.
    allowed = set("0123456789+-*/.() ")
    if not all(c in allowed for c in expression):
        return "Error: invalid characters in expression"
    try:
        return str(eval(expression))
    except Exception as e:
        return f"Error: {e}"


llm = ChatOpenAI(model="gpt-4o", temperature=0)

# Pass the chat model itself; create_react_agent binds the tools to it.
agent = create_react_agent(
    model=llm,
    tools=[get_weather, calculator],
    prompt="You are a helpful assistant. Use tools when needed to answer questions accurately.",
)

This agent is trivial on purpose. The techniques scale to any LangGraph, CrewAI, or OpenAI Agents SDK agent — the eval machinery is the same.
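One caveat about the calculator tool: its character whitelist still admits the ** operator, because * is allowed, so an input like 9**9**9 can stall the process. If you reuse the tool outside a demo, one option is to walk the parsed expression instead of calling eval(). The following is a minimal sketch of that idea, not part of the original agent; safe_eval is a hypothetical name and it supports only +, -, *, / and unary signs.

```python
import ast
import operator

# Only these operators are evaluated; everything else is rejected.
_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def safe_eval(expression: str):
    """Evaluate basic arithmetic by walking the AST instead of calling eval()."""

    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    return _eval(ast.parse(expression, mode="eval"))


print(safe_eval("847 * 382"))
```

Exponentiation, names, and function calls all raise ValueError, which the tool can turn into the same "Error: ..." string it already returns.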

Step 3: Instrument Tracing with DeepEval

Before writing test cases, instrument the agent so DeepEval can capture traces. The @observe decorator wraps any function and records its inputs, outputs, and internal steps as spans:

# agent.py (updated)

from deepeval.tracing import observe


class TracedAgent:
    def __init__(self, agent):
        self.agent = agent

    @observe()
    def invoke(self, input_text: str) -> str:
        result = self.agent.invoke(
            {"messages": [{"role": "user", "content": input_text}]}
        )
        # The last message in the returned state is the agent's final answer.
        return result["messages"][-1].content


traced_agent = TracedAgent(agent)

Every call to traced_agent.invoke() now creates a trace visible in both LangSmith and the DeepEval trace viewer. The trace includes tool invocation arguments, intermediate LLM calls, and final output.
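To make the idea of a span concrete, here is a rough stdlib-only sketch of what a tracing decorator records. It is not how DeepEval implements @observe; record_span and the in-memory SPANS list are hypothetical stand-ins for a real trace backend.

```python
import functools
import time

SPANS = []  # in-memory stand-in for a trace backend


def record_span(func):
    """Record name, inputs, output or error, and duration for each call."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        span = {"name": func.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            span["output"] = func(*args, **kwargs)
            return span["output"]
        except Exception as exc:
            span["error"] = repr(exc)
            raise
        finally:
            # Runs on success and on failure, so every call leaves a span.
            span["duration_s"] = time.perf_counter() - start
            SPANS.append(span)

    return wrapper


@record_span
def get_weather(city):
    return {"tokyo": "Clear, 63°F"}.get(city.lower(), "Weather data unavailable")


get_weather("Tokyo")
print(SPANS[-1]["name"], SPANS[-1]["output"])
```

A real tracer also links child spans to their parent and ships them to a backend, but the captured fields are the same ones you later score against.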

Step 4: Write DeepEval Test Cases

DeepEval test cases wrap a single agent interaction. The LLMTestCase class holds the input, actual output, and any expected outputs or context:

# test_agent.py

import pytest
from deepeval import evaluate
from deepeval.metrics import (
    AnswerRelevancyMetric,
    TaskCompletionMetric,
    ToolCorrectnessMetric,
)
from deepeval.test_case import LLMTestCase, ToolCall

from agent import traced_agent

# Use gpt-4o as the LLM judge (a cheaper model also works for most evals)
judge_model = "gpt-4o"


def run_agent(input_text: str) -> str:
    """Invoke the agent and return its final response."""
    return traced_agent.invoke(input_text)

Now define individual test cases and their metrics:

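# test_agent.py (continued)

from agent import agent  # raw LangGraph agent, so the test can read tool calls


def test_weather_query_tool_correctness():
    """Agent should call get_weather and report Tokyo's weather."""
    input_text = "What's the weather in Tokyo?"
    state = agent.invoke({"messages": [{"role": "user", "content": input_text}]})

    # Collect every tool call the agent made from the returned messages.
    tools_called = [
        ToolCall(name=call["name"], input_parameters=call["args"])
        for message in state["messages"]
        for call in (getattr(message, "tool_calls", None) or [])
    ]

    # Expected and actual tool calls live on the test case, not on the metric.
    test_case = LLMTestCase(
        input=input_text,
        actual_output=state["messages"][-1].content,
        expected_output="Clear, 63°F",
        tools_called=tools_called,
        expected_tools=[ToolCall(name="get_weather")],
    )

    result = evaluate(
        test_cases=[test_case],
        metrics=[
            TaskCompletionMetric(model=judge_model, threshold=0.7),
            ToolCorrectnessMetric(threshold=0.8),
        ],
    )

    # Field names follow recent DeepEval releases; check your installed version.
    assert all(r.success for r in result.test_results), (
        f"Weather test failed: {result.test_results}"
    )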

The ToolCorrectnessMetric compares the tools the agent actually called with the tools you expected. By default it matches on tool name, and it can be configured to compare arguments as well. TaskCompletionMetric evaluates whether the final answer satisfies the user’s intent.
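The comparison behind a tool-correctness check can be sketched without any framework. The helpers below are illustrative only: called_tool_names and tool_overlap_score are hypothetical names, the messages are plain stand-ins for chat messages carrying a tool_calls list, and DeepEval's actual scoring may differ.

```python
from types import SimpleNamespace


def called_tool_names(messages):
    """Collect tool names from messages that carry a tool_calls list."""
    names = []
    for message in messages:
        if isinstance(message, dict):
            calls = message.get("tool_calls") or []
        else:
            calls = getattr(message, "tool_calls", None) or []
        names.extend(call["name"] for call in calls)
    return names


def tool_overlap_score(called, expected):
    """Fraction of expected tools that were actually called."""
    if not expected:
        return 1.0
    return len(set(called) & set(expected)) / len(set(expected))


# Hypothetical transcript: user turn, assistant tool call, tool result, answer.
messages = [
    {"role": "user", "content": "What's the weather in Tokyo?"},
    SimpleNamespace(
        content="",
        tool_calls=[{"name": "get_weather", "args": {"city": "Tokyo"}}],
    ),
    {"role": "tool", "content": "Clear, 63°F"},
    SimpleNamespace(content="It is clear and 63°F in Tokyo.", tool_calls=[]),
]

called = called_tool_names(messages)
print(called, tool_overlap_score(called, ["get_weather"]))
```

A deterministic check like this is cheap enough to run on every case, leaving the LLM judge for the questions that need one.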

Add a calculator test that checks the final answer for relevance and task completion:

# test_agent.py (continued)

def test_calculator_workflow():
    """Agent should use calculator tool for math, not guess."""
    input_text = "What is 847 * 382?"
    actual_output = run_agent(input_text)
    expected_answer = "323554"

    test_case = LLMTestCase(
        input=input_text,
        actual_output=actual_output,
        expected_output=expected_answer,
    )

    result = evaluate(
        test_cases=[test_case],
        metrics=[
            AnswerRelevancyMetric(model=judge_model, threshold=0.7),
            TaskCompletionMetric(model=judge_model, threshold=0.7),
        ],
    )

    # evaluate() returns one TestResult per test case in recent DeepEval releases.
    assert all(r.success for r in result.test_results), (
        f"Calculator test failed: {result.test_results}"
    )

Step 5: Load Golden Datasets from LangSmith

Hand-curating test cases is manageable when you have three of them. Real agents need fifty or more. LangSmith datasets let you create, version, and share evaluation datasets instead of hard-coding examples in test files.

# test_agent_datasets.py

import pytest
from deepeval import evaluate
from deepeval.metrics import AnswerRelevancyMetric, TaskCompletionMetric
from deepeval.test_case import LLMTestCase
from langsmith import Client

from agent import traced_agent

client = Client()


def load_dataset(dataset_name: str) -> list[dict]:
    """Load examples from a LangSmith dataset."""
    examples = list(client.list_examples(dataset_name=dataset_name))
    return [
        {
            "input": ex.inputs.get("input"),
            # outputs can be None for examples that have not been labeled yet
            "expected_output": (ex.outputs or {}).get("expected_output", ""),
            "example_id": ex.id,
        }
        for ex in examples
    ]

Now parameterize a single test function over an entire dataset:

# test_agent_datasets.py (continued)

judge_model = "gpt-4o"

golden_data = load_dataset("agent-evals-demo-dataset")


# Use the LangSmith example ID as the pytest ID so failures are easy to trace.
@pytest.mark.parametrize("golden", golden_data, ids=lambda g: str(g["example_id"]))
def test_against_golden(golden: dict):
    """Run every golden example from LangSmith through the agent."""
    actual_output = traced_agent.invoke(golden["input"])

    test_case = LLMTestCase(
        input=golden["input"],
        actual_output=actual_output,
        expected_output=golden["expected_output"],
    )

    result = evaluate(
        test_cases=[test_case],
        metrics=[
            TaskCompletionMetric(model=judge_model, threshold=0.7),
            AnswerRelevancyMetric(model=judge_model, threshold=0.7),
        ],
    )

    # evaluate() returns one TestResult per test case in recent DeepEval releases.
    assert all(r.success for r in result.test_results), (
        f"Golden {golden['example_id']} failed: {result.test_results}"
    )

To populate that dataset from the LangSmith UI:

- Go to smith.langchain.com and select your project.

- Create a dataset via Datasets → + New Dataset.

- Manually add examples or import a CSV with input and expected_output columns.

- Alternatively, use LangSmith’s auto-dataset feature to collect successful runs, then annotate them.

A well-curated golden dataset is the single highest-leverage investment for agent quality. Every regression caught by a golden set before it reaches production is a win.
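If you would rather seed the dataset from code than from the UI, the same CSV can be uploaded with the LangSmith client. This is a sketch under assumptions: csv_to_examples and upload_examples are hypothetical helper names, the sample rows mirror the two tests above, and the upload function needs LANGCHAIN_API_KEY plus the langsmith package, so it is defined but not called here.

```python
import csv
import io


def csv_to_examples(csv_text):
    """Split CSV rows into parallel lists of inputs and outputs."""
    inputs, outputs = [], []
    for row in csv.DictReader(io.StringIO(csv_text)):
        inputs.append({"input": row["input"]})
        outputs.append({"expected_output": row["expected_output"]})
    return inputs, outputs


def upload_examples(dataset_name, inputs, outputs):
    """Create a LangSmith dataset and add the examples to it."""
    from langsmith import Client  # imported lazily so parsing works offline

    client = Client()
    dataset = client.create_dataset(dataset_name=dataset_name)
    client.create_examples(inputs=inputs, outputs=outputs, dataset_id=dataset.id)
    return dataset.id


SAMPLE_CSV = """input,expected_output
What's the weather in Tokyo?,"Clear, 63°F"
What is 847 * 382?,323554
"""

inputs, outputs = csv_to_examples(SAMPLE_CSV)
print(len(inputs), "examples parsed")
```

The keys input and expected_output match what load_dataset reads back, so the round trip needs no extra mapping.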

Step 6: Component-Level Evaluation

DeepEval distinguishes between end-to-end evals (black-box: input → output) and component-level evals (score individual spans inside the trace). For agents, component-level is often more actionable — it tells you which step failed, not just that it failed.

# test_component_level.py

from deepeval import evaluate
from deepeval.metrics import AnswerRelevancyMetric
from deepeval.test_case import LLMTestCase
from deepeval.tracing import observe


@observe()
def evaluate_tool_output(tool_result: str, user_query: str) -> dict:
    """Evaluate the quality of a single tool's output."""
    test_case = LLMTestCase(
        input=user_query,
        actual_output=tool_result,
    )

    result = evaluate(
        test_cases=[test_case],
        metrics=[AnswerRelevancyMetric(model="gpt-4o", threshold=0.7)],
    )

    # One test case in, one TestResult out (recent DeepEval releases).
    return {"success": result.test_results[0].success}

In practice, you’d wrap this around the tool invocation itself — for example, checking whether get_weather returned useful data before passing it back to the LLM. If a tool consistently returns low relevancy scores, the fix is in the tool implementation, not the agent prompt.
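Spotting a tool that is consistently weak means grouping scores by tool rather than by test. The sketch below shows one way to do that grouping; low_scoring_tools is a hypothetical helper and the scores are made-up illustrations, not measurements.

```python
from collections import defaultdict
from statistics import mean


def low_scoring_tools(records, threshold=0.7):
    """Return {tool: mean score} for tools whose mean falls below threshold."""
    by_tool = defaultdict(list)
    for tool_name, score in records:
        by_tool[tool_name].append(score)
    return {
        name: mean(scores)
        for name, scores in by_tool.items()
        if mean(scores) < threshold
    }


# Illustrative (tool, relevancy score) pairs collected across several runs.
records = [
    ("get_weather", 0.5),
    ("get_weather", 0.25),
    ("calculator", 1.0),
    ("calculator", 0.75),
]

flagged = low_scoring_tools(records)
print(flagged)
```

A tool that lands in this report across many different prompts points at the tool implementation; a tool that fails only on one prompt family points back at the agent.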

Step 7: Run in CI/CD

DeepEval integrates with pytest natively. For production pipelines, you want cost controls, caching, and failure reporting:

# .github/workflows/agent-evals.yml

name: Agent Evaluation Pipeline

on:
  pull_request:
    branches: [main]
  schedule:
    - cron: "0 6 * * 1-5" # 6 am UTC on weekdays

jobs:
  eval:
    runs-on: ubuntu-latest
    timeout-minutes: 15
    env:
      OPENAI_API_KEY: ${{ secrets.OPENAI_API_KEY }}
      # Needed because the golden-dataset tests read from LangSmith.
      LANGCHAIN_API_KEY: ${{ secrets.LANGCHAIN_API_KEY }}
      CONFIDENT_API_KEY: ${{ secrets.CONFIDENT_API_KEY }}
      DEEPEVAL_EVAL_MODEL: "gpt-4o-mini"
    steps:
      - uses: actions/checkout@v4
      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: "3.12"
          cache: "pip"
      - name: Install dependencies
        run: |
          python -m pip install --upgrade pip
          pip install -r requirements.txt
      - name: Run DeepEval tests
        run: |
          deepeval test run tests/ \
            -r 3 \
            -n 4 \
            --exit-on-first-failure
      - name: Report results to Slack
        if: failure()
        uses: slackapi/slack-github-action@v1.27.0
        with:
          channel-id: ${{ secrets.SLACK_CHANNEL_ID }}
          slack-message: "⚠️ Agent evals failed on PR #${{ github.event.pull_request.number }}. See Confident AI for trace details."
        env:
          SLACK_BOT_TOKEN: ${{ secrets.SLACK_BOT_TOKEN }}

The -r 3 flag reruns each test case three times, which exposes judge-model variance that a single run would hide. -n 4 spreads test execution across four processes. The weekday schedule runs the full golden set every morning so you catch overnight regressions from upstream model provider changes.
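When you collect several judge scores for the same test case, you still have to decide how to combine them. The sketch below shows one possible policy: pass on the mean, but also report spread and whether every run passed. aggregate_runs is a hypothetical helper and the scores are illustrative.

```python
from statistics import mean, pstdev


def aggregate_runs(scores, threshold=0.7):
    """Summarize repeated judge scores for one test case."""
    avg = mean(scores)
    return {
        "mean": avg,
        "spread": pstdev(scores),  # population std dev across the repeats
        "passed": avg >= threshold,
        "unanimous": all(score >= threshold for score in scores),
    }


# Three illustrative judge scores for the same input.
summary = aggregate_runs([1.0, 0.5, 0.75])
print(summary)
```

A case that passes on the mean but is not unanimous is a good candidate for manual review, since the judge itself disagrees about it.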

Step 8: Measure Eval Cost

LLM-as-a-judge isn’t free. Running GPT-4o as an evaluator over a 50-case dataset costs roughly $1.50 per eval run at current pricing. There are two practical ways to control costs:

First, use a cheaper judge model for high-volume metrics. TaskCompletionMetric and AnswerRelevancyMetric work well with gpt-4o-mini or gemini-2.5-flash — you’ll rarely need the strongest model for a scoring task.

Second, run the full suite only on a schedule. A daily cron job runs the full golden set, while PR checks run only the tests affected by the change. pytest markers support this if you tag tests by area and select the relevant subset in the PR job.
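A back-of-the-envelope estimate makes these trade-offs easier to compare. The function below is a simplified sketch: it assumes one judge call per metric per case, and every number in the example (token counts and per-million-token prices) is a placeholder you should replace with your provider's current rates.

```python
def estimate_run_cost(
    cases,
    metrics_per_case,
    input_tokens,
    output_tokens,
    price_in_per_million,
    price_out_per_million,
    repeats=1,
):
    """Estimate judge cost in dollars for one eval run."""
    calls = cases * metrics_per_case * repeats
    per_call = (
        input_tokens * price_in_per_million
        + output_tokens * price_out_per_million
    ) / 1_000_000
    return calls * per_call


# Placeholder figures for illustration only, not real pricing.
cost = estimate_run_cost(
    cases=50,
    metrics_per_case=2,
    input_tokens=2000,
    output_tokens=500,
    price_in_per_million=2.0,
    price_out_per_million=8.0,
)
print(f"${cost:.2f} per run")
```

Because cost scales linearly with cases, metrics, and repeats, switching the judge model or trimming the PR subset shows up directly in the estimate. Some metrics make more than one judge call per case, so treat the result as a lower bound.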

The Evaluation Discipline

An eval pipeline only works if you treat it like production infrastructure:

- Version your golden datasets alongside your agent code. Dataset changes should be reviewed in PRs.

- Separate evaluation from development data. Never evaluate on prompts from your training or fine-tuning set.

- Track baseline drift. Keep a running record of metric scores per commit to catch gradual degradation.

- Calibrate thresholds per metric. A 0.7 threshold on TaskCompletionMetric means something different than 0.7 on ToolCorrectnessMetric. Tune each one.

- Don’t skip human review. Automated scores are directional. Spot-check 10% of failures manually each sprint.
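Tracking baseline drift can start as a few lines of code. The sketch below compares the latest score with the mean of the preceding commits and flags a drop beyond a tolerance; detect_drift, the window size, the tolerance, and the commit labels are all hypothetical choices to tune for your own pipeline.

```python
from statistics import mean


def detect_drift(history, window=5, tolerance=0.05):
    """Flag the latest score if it falls below the recent baseline.

    history is a chronological list of (commit, score) pairs.
    """
    if len(history) < 2:
        return None  # nothing to compare against yet
    previous, (commit, latest) = history[:-1], history[-1]
    baseline = mean(score for _, score in previous[-window:])
    if latest < baseline - tolerance:
        return {"commit": commit, "score": latest, "baseline": baseline}
    return None


# Illustrative per-commit mean scores for one metric.
history = [("a1", 0.75), ("b2", 0.75), ("c3", 0.5)]
print(detect_drift(history))
```

Persisting the history as a JSON file in the repository, or as a CI artifact, keeps the record next to the code that produced it.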

What This Replaces

Before this pipeline, we reviewed agent outputs manually and deployed with confidence based on “looks right on three test prompts.” That workflow produces agents that work in demo and fail under load. After implementing DeepEval with LangSmith datasets, the difference is measurable: regressions surface within minutes of a code change, not hours after users hit a broken agent in production.

If you’ve been building agents without systematic evaluation, start here. The setup takes an afternoon. The peace of mind lasts.

For a broader testing strategy overview, see our original testing and evaluation guide. To understand how evaluation fits into a complete deployment pipeline, read our production deployment guide. For observability infrastructure, check out our LangSmith vs Langfuse vs Arize Phoenix comparison.

Related Posts

Testing and Evaluating AI Agents: Metrics, Benchmarks, and Quality Assurance

A comprehensive guide to testing and evaluating AI agents covering essential metrics, benchmark frameworks, quality assurance approaches, and practical strategies for building reliable agent systems

Build a Retail AI Agent with LangGraph: Inventory & Orders

Step-by-step LangGraph tutorial building a retail AI agent with StateGraph, tool-calling nodes for inventory lookup, order processing, and returns.

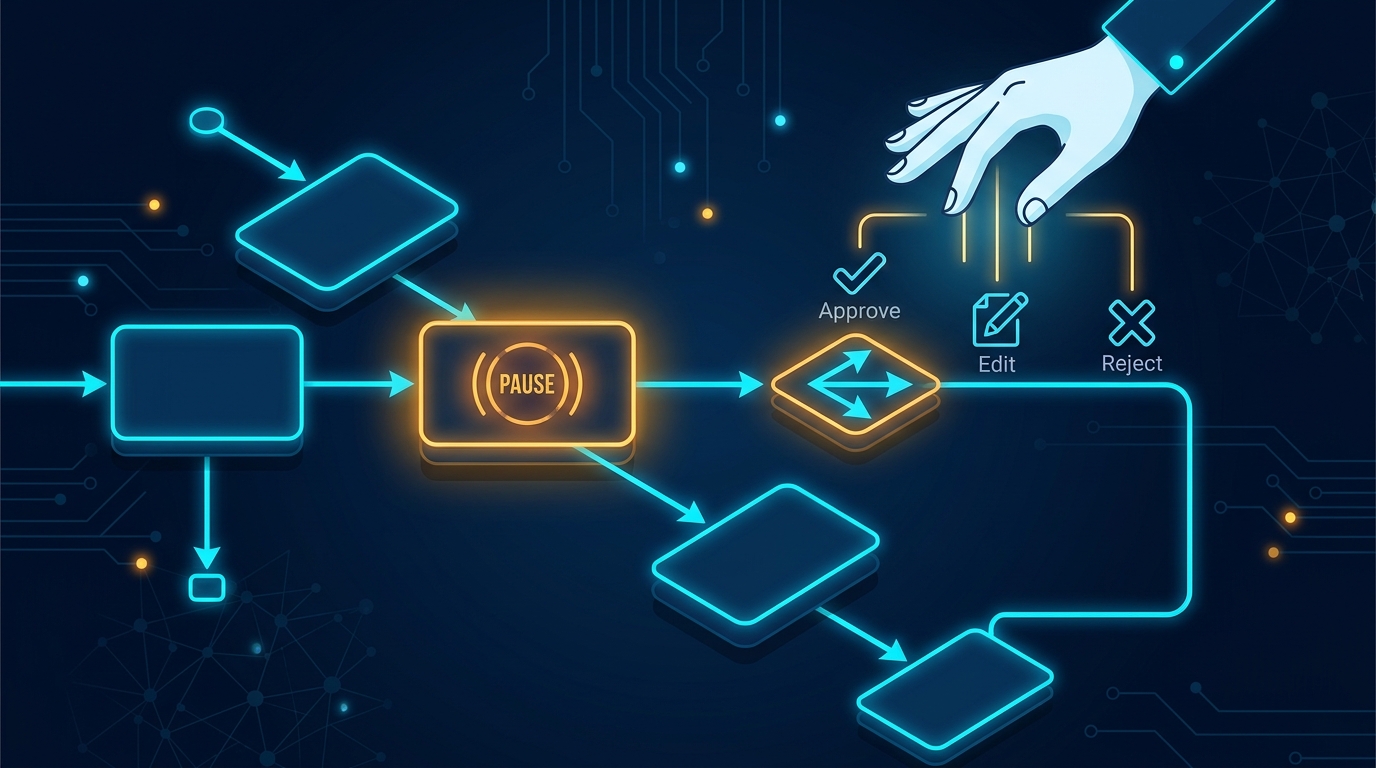

LangGraph Human-in-the-Loop: Interrupt Patterns in Python

Build approval workflows with LangGraph interrupt() and Command(). Step-by-step tutorial with approve, reject, and edit patterns.